How REWE Runs Multi-Country E-Commerce on 14-Day Sprint Cycles

Table of Contents

- Why Does Enterprise E-Commerce Delivery Move So Slowly?

- What Is the Real Cost of Quarterly Release Cycles?

- What Does a 14-Day Sprint Cycle Look Like at Enterprise Scale?

- How Does REWE-Scale E-Commerce Work With This Model?

- How Do You Maintain Quality at This Speed?

- What Team Structure Makes This Work?

- Why Can't Most Agencies Deliver on a 14-Day Cadence?

- How Do You Get Started?

- References

TL;DR

The largest retailers in DACH do not relaunch their e-commerce platforms every 18 months. They ship working improvements every 14 days. REWE Group - EUR 90B+ in revenue, operations spanning multiple countries - runs its digital commerce on continuous sprint delivery.

Key Takeaways

- Enterprise e-commerce teams that ship on quarterly release cycles lose 12-16 weeks of market feedback per cycle - while competitors running 14-day sprints compound improvements 26 times per year.

- The 14-day sprint model works at enterprise scale when every sprint includes a QA gate, defined scope agreed with business stakeholders, and production deployment - not just a demo.

- REWE Group operates e-commerce across multiple countries with continuous delivery. The model relies on parallel teams, feature flags for gradual rollout, and automated regression testing before every release.

- Most agencies cannot deliver on a 14-day cadence because they sell hours, not outcomes. Sprint delivery requires a dedicated Project Owner, Customer Advocate, and development team with end-to-end ownership.

- Scaling from 1 FTE to 4-6 FTE on a sprint cadence requires disciplined architecture, shared CI/CD pipelines, and clear domain boundaries between teams - not just adding more developers.

The largest retailers in DACH do not relaunch their e-commerce platforms every 18 months. They ship working improvements every 14 days. REWE Group - EUR 90B+ in revenue, operations spanning multiple countries - runs its digital commerce on continuous sprint delivery. This enterprise e-commerce delivery model replaced quarterly release trains, and it works at a scale most teams assume requires slower processes.

Why Does Enterprise E-Commerce Delivery Move So Slowly?

This article draws on easy.bi's delivery methodology refined across 100+ enterprise e-commerce projects, including work with REWE Group's digital commerce operations.

Enterprise e-commerce delivery is broken. Not because the engineers are slow, but because the delivery model was designed for a different era. Change advisory boards. Quarterly release trains. 6-month feature cycles where the original business requirement is outdated before the code reaches production.

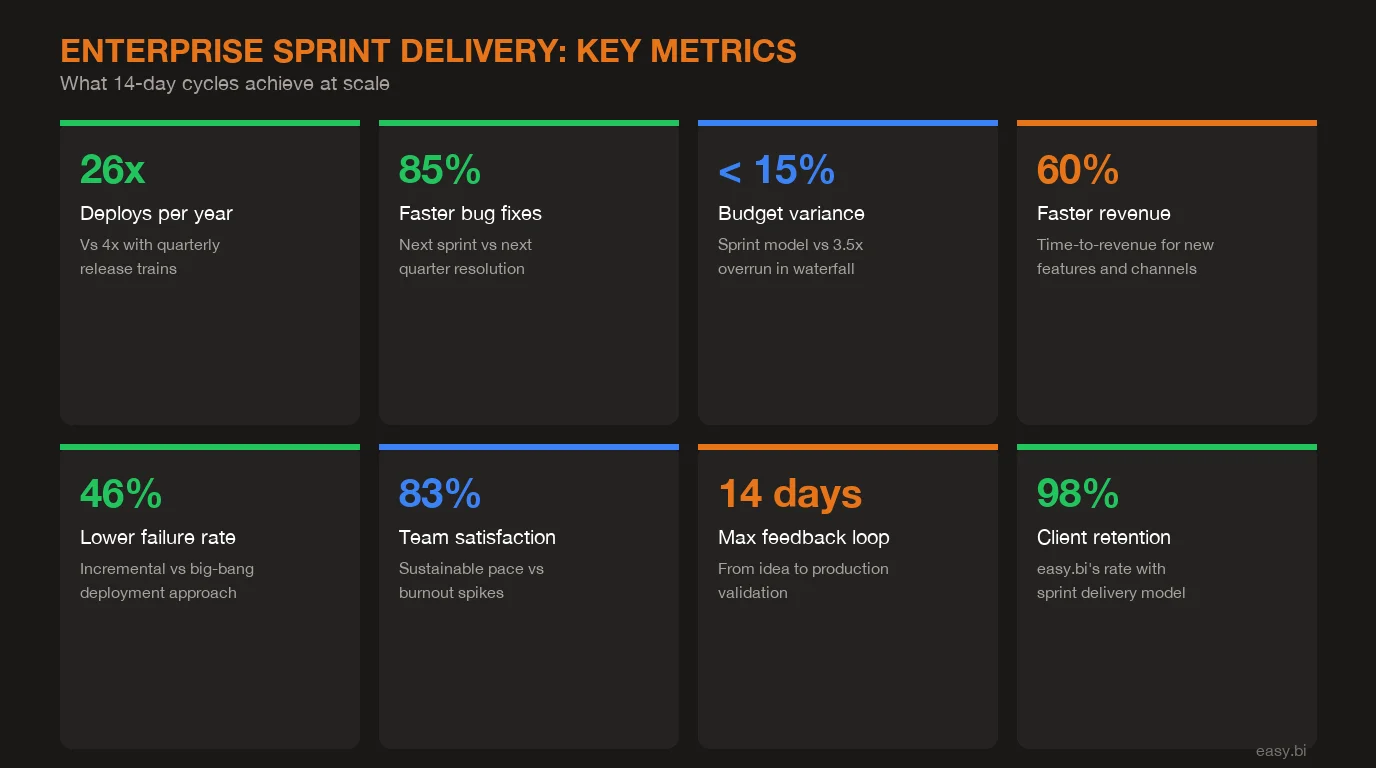

The numbers confirm the problem. 70% of digital projects fail to deliver their intended business outcomes[1]. The average enterprise software project runs 3.5x over budget when managed through multi-vendor coordination[2]. And the average time from feature request to production deployment in enterprise retail is 4-6 months[3].

That means when your e-commerce director identifies a conversion opportunity in January, the fix goes live in June. By June, the competitive landscape has shifted, user behavior has changed, and the original insight may no longer apply.

The delivery bottleneck is not technical. It is organizational. Enterprise e-commerce teams operate inside structures built for risk avoidance: approval committees, staging environment queues, release windows that happen 4 times a year. Each layer adds safety on paper and removes speed in practice.

Meanwhile, the competitors your enterprise is watching - the direct-to-consumer brands, the vertical SaaS players, the marketplace disruptors - ship weekly. They test pricing changes on Tuesday, measure results on Thursday, and iterate the following week. McKinsey research shows that companies in the top quartile of delivery speed grow revenue 60% faster than their peers[4].

The gap is not closing. It is widening every quarter your team spends in planning meetings instead of shipping to production.

What Is the Real Cost of Quarterly Release Cycles?

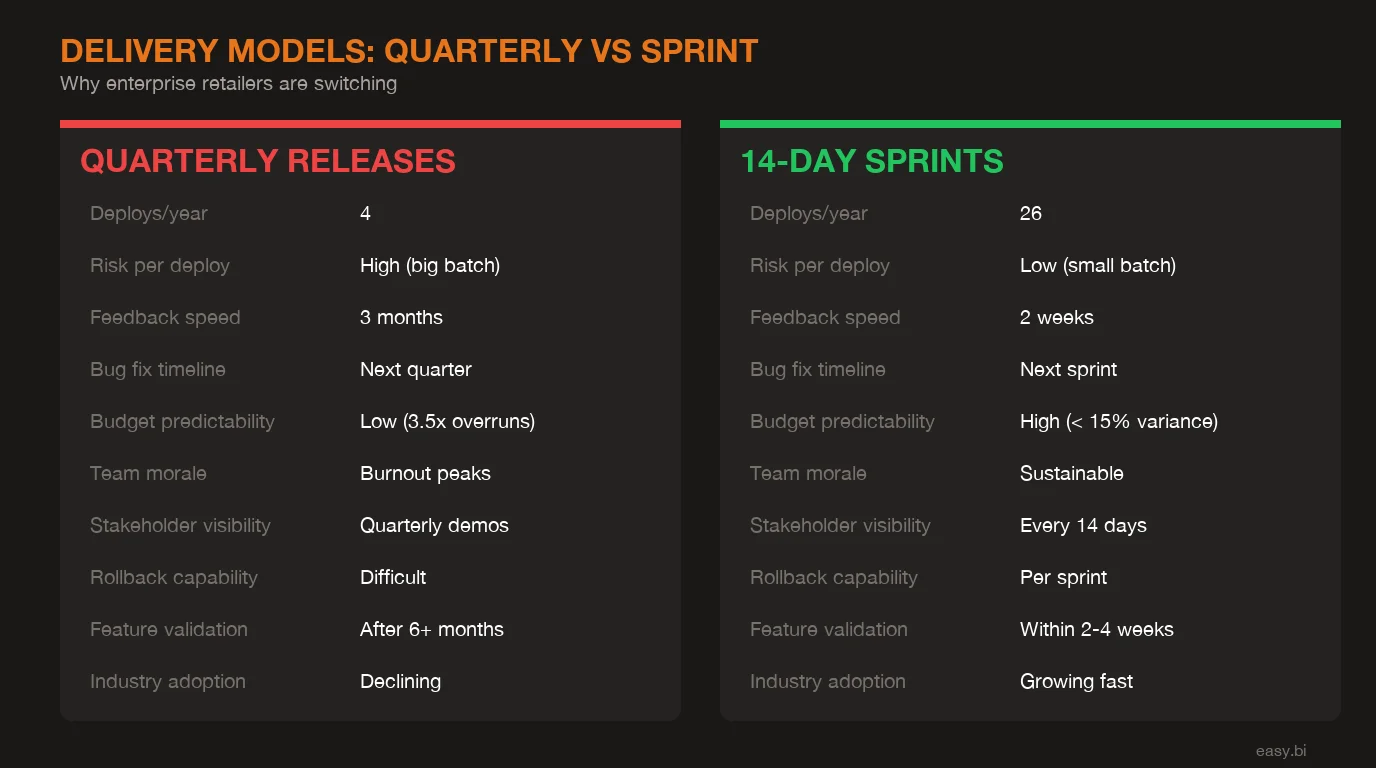

Quarterly releases are not just slow. They are expensive in ways that do not appear on any project budget.

Lost conversion opportunities. Every week a checkout improvement sits in a staging queue is a week of lost revenue. For a mid-market retailer processing EUR 500K in monthly online revenue, a 3% conversion improvement delayed by 12 weeks represents EUR 45K in unrealized revenue. Multiply that across 4-5 improvements per quarter, and the cost of slow delivery dwarfs the cost of the development itself.
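The EUR 45K figure above can be reproduced with a short calculation. This is a minimal sketch, assuming the 3% is a relative uplift that translates one-to-one into revenue and that 12 weeks is treated as 3 months; the function name `cost_of_delay` is illustrative and not part of any tool mentioned in this article.

```python
def cost_of_delay(monthly_revenue_eur: float,
                  relative_uplift: float,
                  delay_weeks: float) -> float:
    """Revenue not realized while a finished improvement waits for release."""
    weeks_per_month = 4  # simplification: 12 weeks is treated as 3 months
    delayed_months = delay_weeks / weeks_per_month
    return monthly_revenue_eur * relative_uplift * delayed_months


# EUR 500K monthly revenue, 3% uplift, released 12 weeks late
print(round(cost_of_delay(500_000, 0.03, 12)))
```

Running the same function once per delayed improvement and summing the results gives a rough per-quarter cost of delay.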

Batch risk. Quarterly releases bundle 3 months of changes into a single deployment. When something breaks - and with 50+ changes going live simultaneously, something always breaks - isolating the root cause takes days instead of hours. DORA research shows that elite-performing teams deploy on demand and have a change failure rate under 5%, while low performers who batch releases see failure rates above 46%[5].

Team burnout. The quarterly release cycle creates a predictable pattern: slow planning phase, frantic development crunch, stressful deployment weekend, post-release firefighting. 83% of developers experience burnout, and batch release cycles are a primary contributor[6]. Burned-out teams ship worse code. Worse code creates more incidents. More incidents create more burnout. The cycle compounds.

Competitor agility gap. While your team plans Q3 releases, Shopify merchants are A/B testing checkout flows daily. Amazon runs 23 experiments per second on its platform. You do not need to match Amazon's scale, but you need to close the feedback loop faster than once per quarter.

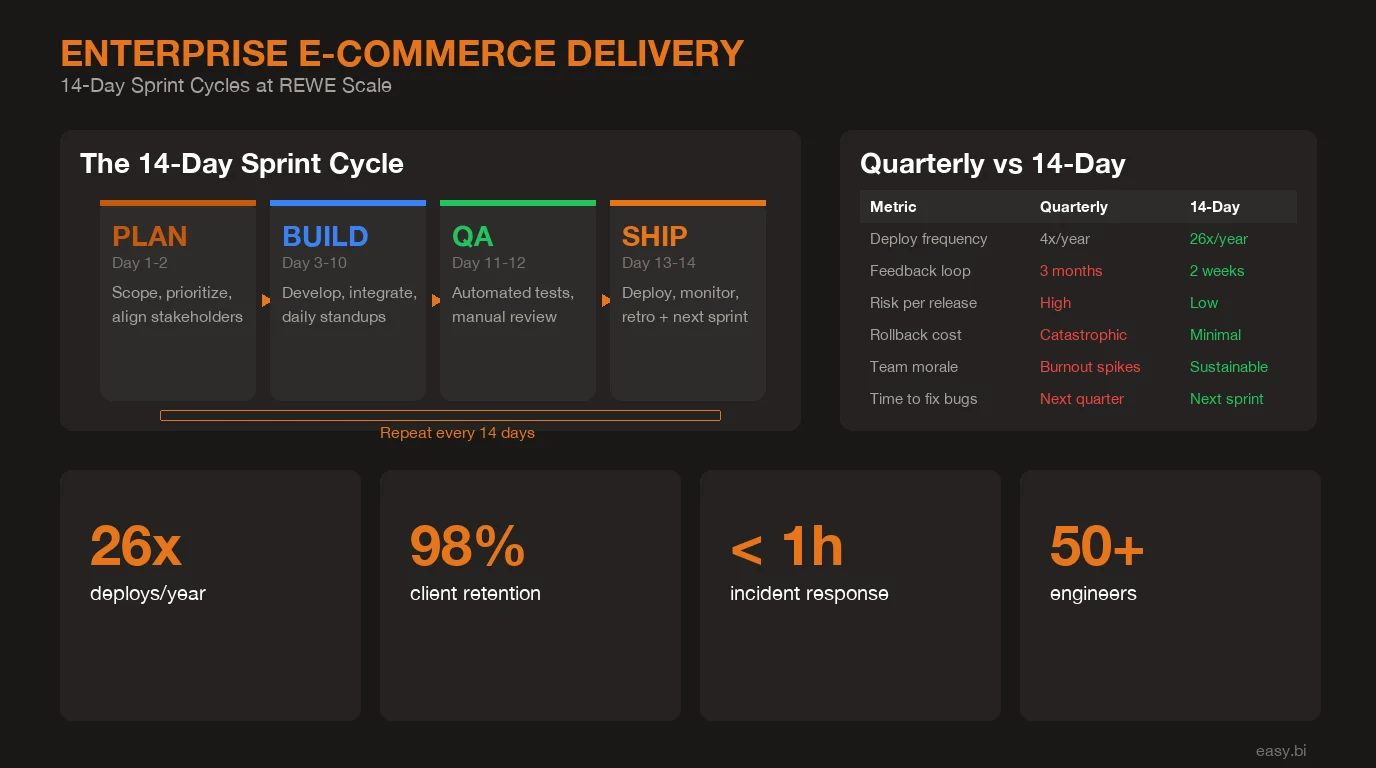

| Dimension | Quarterly Release Model | 14-Day Sprint Delivery |
|---|---|---|
| Deployment frequency | 4x per year | 26x per year |
| Feature request to production | 4-6 months | 2-6 weeks |
| Change failure rate | 31-45% | Under 15% |
| Batch risk per release | High (50+ changes) | Low (5-10 changes) |
| Team satisfaction | Crunch cycles, weekend deploys | Sustainable, predictable pace |
| Cost of delay per feature | 12-16 weeks of lost feedback | 0-2 weeks |
| Rollback complexity | Hours to days | Minutes to hours |

What Does a 14-Day Sprint Cycle Look Like at Enterprise Scale?

A 14-day sprint at enterprise scale is not a startup moving fast and breaking things. It is a structured delivery model with defined accountability at every stage. Here is how it works in practice.

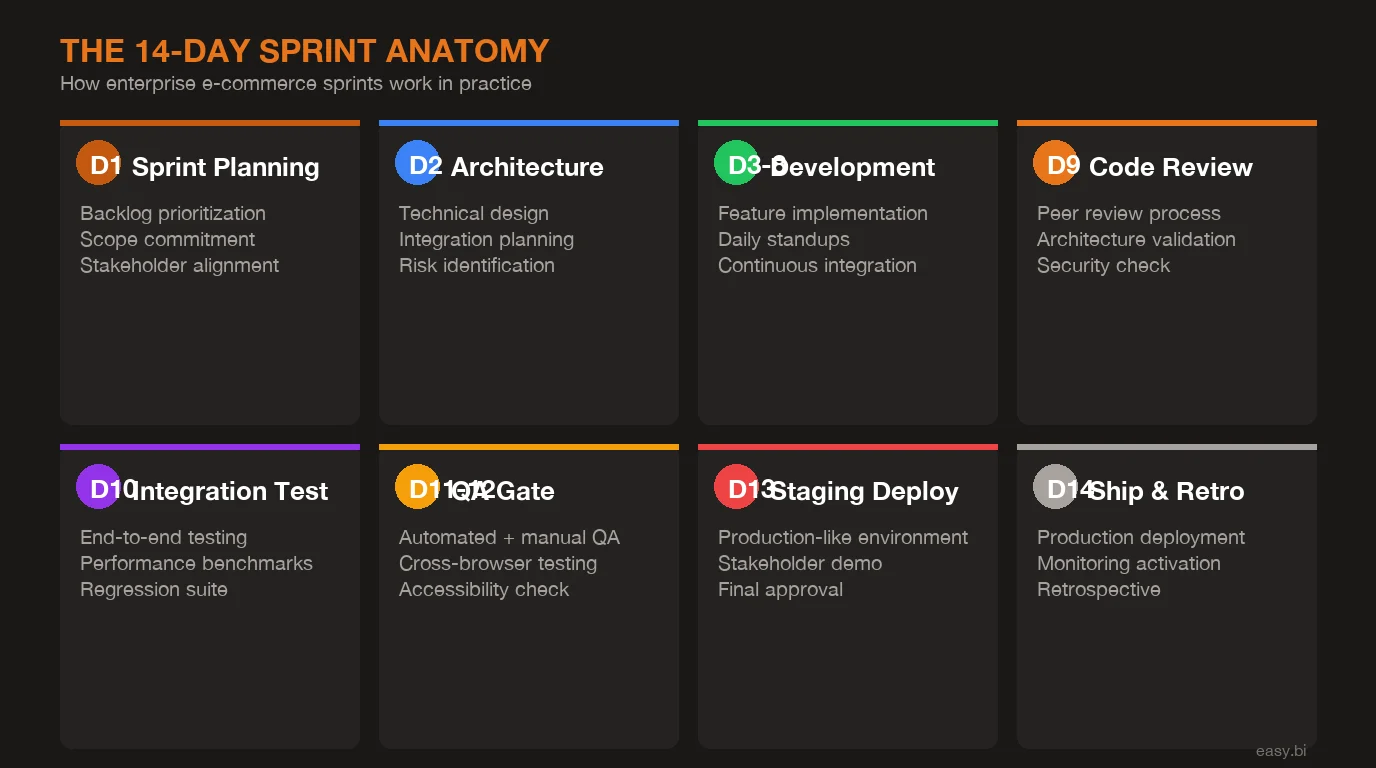

Days 1-2: Sprint planning with business stakeholders. The sprint does not start with a developer picking tickets from a backlog. It starts with a planning session where the Project Owner and business stakeholders agree on the scope for the next 14 days. This is not a feature wishlist. It is a commitment: these specific items will be in production in 12 days. The scope is defined in work packages with clear acceptance criteria.

Days 2-10: Development with daily standups. The development team executes against the defined scope. Daily standups surface blockers early - not at the end of the sprint when it is too late. Code reviews happen continuously, not in a batch at the end. Every commit triggers the CI/CD pipeline: automated tests, linting, security scanning. As detailed in our deep dive on the 14-day delivery methodology, the discipline of daily integration prevents the merge conflicts and integration failures that plague teams shipping less frequently.

Days 10-12: QA gate. This is non-negotiable. Automated regression tests cover existing functionality. Manual QA reviews every user-facing change. Performance testing validates that new code does not degrade page load times - critical for e-commerce where a 1-second delay reduces conversions by 7%[7]. If the QA gate fails, the deployment does not happen. Period. For teams scaling automated testing, our guide on automated QA with AI-assisted testing covers the frameworks that make this sustainable.
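The gate described above can be expressed as a single pass/fail decision. The following is a hedged sketch rather than the actual gate used on any project: the `SprintCandidate` fields, the `qa_gate` function, and the 100 ms slowdown budget are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SprintCandidate:
    regression_failures: int      # failed automated regression tests
    manual_qa_approved: bool      # sign-off on every user-facing change
    p95_load_ms: float            # page load of the release candidate
    baseline_p95_load_ms: float   # page load of the current production build


def qa_gate(candidate: SprintCandidate, max_slowdown_ms: float = 100.0) -> list[str]:
    """Return the reasons to block deployment; an empty list means the gate passes."""
    blockers = []
    if candidate.regression_failures > 0:
        blockers.append(f"{candidate.regression_failures} regression test(s) failed")
    if not candidate.manual_qa_approved:
        blockers.append("manual QA sign-off missing")
    slowdown = candidate.p95_load_ms - candidate.baseline_p95_load_ms
    if slowdown > max_slowdown_ms:  # assumed performance budget
        blockers.append(f"page load slower by {slowdown:.0f} ms")
    return blockers


release = SprintCandidate(0, True, p95_load_ms=1850.0, baseline_p95_load_ms=1800.0)
print("deploy" if not qa_gate(release) else "blocked")
```

Returning the list of blockers instead of a bare boolean keeps the reason for a failed gate visible to the whole team.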

Day 13: Production deployment. Deployment happens during business hours, not on a Friday night. Feature flags control the rollout - new functionality can go live for 5% of users, then 25%, then 100%. Monitoring dashboards track error rates, conversion metrics, and performance in real time. If something breaks, rollback is automated and takes minutes, not hours.
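The 5% / 25% / 100% progression can be modeled as a small state machine. This sketch assumes a single boolean health signal coming from monitoring; `ROLLOUT_STAGES` and `next_rollout_percent` are hypothetical names, not the API of a specific feature-flag product.

```python
ROLLOUT_STAGES = (5, 25, 100)  # percentages named in the text


def next_rollout_percent(current: int, healthy: bool) -> int:
    """Advance one stage while metrics are healthy; drop to 0 (rollback) otherwise."""
    if not healthy:
        return 0
    for stage in ROLLOUT_STAGES:
        if stage > current:
            return stage
    return ROLLOUT_STAGES[-1]  # already fully rolled out


percent = 0
for healthy in (True, True, True):
    percent = next_rollout_percent(percent, healthy)
    print(percent)
```

Keeping rollback as the only possible response to an unhealthy signal is a deliberate simplification; a real setup might also pause at the current stage.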

Day 14: Retrospective and next sprint preparation. What went well. What needs improvement. What blocked progress. These are not feel-good meetings - they are the feedback loop that makes the next sprint better than the last one. By the afternoon, the next sprint's scope is already taking shape.

How Does REWE-Scale E-Commerce Work With This Model?

REWE Group is not a startup that can pivot its entire platform in a single sprint. It is one of Europe's largest retail groups with e-commerce operations spanning multiple countries, millions of customers, and complex integrations with supply chain, logistics, and payment systems.

Running continuous delivery at this scale requires specific architectural and operational patterns.

Parallel teams with clear domain boundaries. Multiple teams work on different areas of the commerce platform simultaneously - checkout, product catalog, promotions, app experience - without stepping on each other. Each team owns a defined domain, has its own sprint cadence, and deploys independently. This is possible because the architecture supports it: API boundaries between domains, shared design systems for UI consistency, and a common CI/CD pipeline. When you need to scale an engineering team quickly, these boundaries determine whether adding people adds velocity or adds chaos.

Feature flags for gradual rollout. No feature goes from zero to 100% of users in a single deployment. Feature flags allow new functionality to be tested with a small percentage of users in production, validated against real behavior data, and expanded or rolled back based on results. This is especially critical in multi-country operations where a checkout change that works in Germany may behave differently in Austria due to payment method preferences or regulatory requirements.
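A common way to implement percentage rollouts per country is deterministic hashing, so that a given user always lands in the same bucket. The sketch below assumes this approach; the flag name, country codes, and percentages are made up for illustration and do not describe REWE's actual configuration.

```python
import hashlib


def bucket(user_id: str, flag: str) -> int:
    """Map a user to a stable bucket in 0..99 for a given flag."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def is_enabled(user_id: str, flag: str, country: str,
               rollout_by_country: dict[str, int]) -> bool:
    """A flag is on when the user's bucket falls below the country's rollout percent."""
    percent = rollout_by_country.get(country, 0)  # unknown countries stay off
    return bucket(user_id, flag) < percent


# Hypothetical state: further along in Germany than in Austria
rollout = {"DE": 25, "AT": 5}
print(is_enabled("user-123", "new-checkout", "DE", rollout))
```

Because the bucket depends only on the flag and the user, raising a country from 5% to 25% keeps every user who already had the feature and only adds new ones.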

A/B testing integrated into sprint delivery. Sprint delivery and experimentation are not separate workstreams. When a sprint delivers a new product recommendation algorithm, it ships behind a feature flag with the current algorithm as the control. The A/B test runs for the statistically required duration, results are analyzed, and the winning variant becomes the default in a subsequent sprint. This means every sprint is not just a delivery event - it is a learning event.
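One standard way to decide whether a variant has won is a two-proportion z-test on conversion counts. The sketch below is an assumption about how such an analysis could look, not a description of the tooling actually used; the visitor and conversion numbers are invented.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates B - A."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variance: both groups converted at 0% or 100%")
    z = (p_b - p_a) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Invented example: control converts 500 of 10,000, variant 600 of 10,000
z, p = two_proportion_z_test(500, 10_000, 600, 10_000)
print(f"z={z:.2f}, p={p:.4f}")
```

With these invented numbers z is roughly 3.1 and the p-value is well below 0.01. The sample size and significance threshold should be fixed before the test starts, so the result is not read off early.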

Monitoring and rollback capabilities. Real-time monitoring tracks error rates, conversion funnels, page performance, and API response times after every deployment. Automated alerts trigger when metrics deviate from baseline thresholds. Rollback is a one-click operation that reverts to the previous deployment within minutes. In practice, this keeps the risk of any individual deployment small and quickly reversible - which is exactly why you can afford to deploy 26 times per year instead of 4.
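A baseline-deviation alert can be sketched as a comparison of each observed metric against a tolerated band. The metric names, baselines, and tolerances below are illustrative assumptions, and `Threshold` and `metrics_to_alert` are hypothetical helpers rather than part of a monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    baseline: float
    tolerance: float        # allowed relative deviation, e.g. 0.10 = 10%
    higher_is_worse: bool   # True for error rate or latency, False for conversion


def breached(threshold: Threshold, observed: float) -> bool:
    """Check whether a metric moved past its tolerated band in the bad direction."""
    if threshold.higher_is_worse:
        return observed > threshold.baseline * (1 + threshold.tolerance)
    return observed < threshold.baseline * (1 - threshold.tolerance)


def metrics_to_alert(thresholds: dict[str, Threshold],
                     observed: dict[str, float]) -> list[str]:
    """Names of all metrics that breached; any entry is a candidate rollback trigger."""
    return sorted(name for name, t in thresholds.items()
                  if name in observed and breached(t, observed[name]))


thresholds = {
    "error_rate": Threshold(baseline=0.010, tolerance=0.50, higher_is_worse=True),
    "checkout_conversion": Threshold(baseline=0.030, tolerance=0.10, higher_is_worse=False),
}
print(metrics_to_alert(thresholds, {"error_rate": 0.020, "checkout_conversion": 0.029}))
```

In this example only the error rate is flagged: it doubled, while conversion is still inside its 10% band.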

How Do You Maintain Quality at This Speed?

Speed without quality is not speed. It is technical debt with a deployment date. The reason 14-day sprints produce higher quality than quarterly releases is counterintuitive but well-documented.

DORA's State of DevOps research, based on data from over 36,000 professionals, shows a consistent pattern: teams that deploy more frequently have lower change failure rates[5]. This is not a contradiction. Smaller, more frequent deployments are easier to test, easier to review, and easier to debug when something goes wrong.

Automated regression testing. Every sprint builds on a growing automated test suite. Before any deployment, the full regression suite runs against the staging environment. For a mature e-commerce platform, this means 500-2,000 automated test cases executing in under 30 minutes. Tests cover critical user paths: search, product detail pages, add to cart, checkout, payment processing, order confirmation.
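Whether 500-2,000 tests fit into 30 minutes depends mostly on how far the suite is parallelized. The back-of-the-envelope sketch below assumes an average of 9 seconds per test, which is an invented figure, and ignores setup time and uneven test distribution.

```python
import math


def workers_needed(test_count: int, avg_seconds_per_test: float,
                   budget_minutes: float) -> int:
    """Minimum number of parallel workers to finish the suite within the time budget."""
    total_seconds = test_count * avg_seconds_per_test
    return max(1, math.ceil(total_seconds / (budget_minutes * 60)))


# Assumed average of 9 s per end-to-end test, 30-minute budget from the text
for tests in (500, 2_000):
    print(tests, workers_needed(tests, 9.0, 30))
```

Under these assumptions the suite needs 3 workers at 500 tests and 10 workers at 2,000 tests, so the pipeline's parallel capacity has to grow with the suite.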

CI/CD pipeline enforcement. The pipeline is not optional. Every pull request must pass automated tests, code review, and security scanning before it can be merged. No developer, no matter how senior, can bypass the pipeline. This eliminates the class of errors that come from "just this once" shortcuts during crunch time - shortcuts that happen every quarter in a batch release model.

Manual QA for user experience. Automated tests catch functional regressions. They do not catch a confusing button placement, a misleading product description, or a checkout flow that technically works but feels broken. Manual QA by dedicated Assurance specialists reviews every user-facing change before deployment. This is why the QA gate exists as a distinct phase in the sprint, not an afterthought squeezed into the final hours.

I have seen teams cut QA to save sprint time. Every single one regretted it within 2 sprints. The QA gate is what makes the speed sustainable.

What Team Structure Makes This Work?

The 14-day sprint model requires a specific team structure. It does not work with a pool of interchangeable developers assigned to your project when they are available. It requires dedicated people with defined roles.

Project Owner. Not a project manager tracking hours in a spreadsheet. The Project Owner owns the delivery outcome. They run sprint planning, manage stakeholder expectations, prioritize scope when trade-offs are needed, and ensure that every sprint delivers business value - not just completed tickets. They are accountable for the sprint result every 14 days.

Customer Advocate. The bridge between business priorities and technical execution. The Customer Advocate ensures that the development team understands why a feature matters to the business, not just what needs to be built. They translate business KPIs into acceptance criteria and validate that sprint deliverables actually move the metrics that matter.

Development team (1-6 FTE). Senior engineers with domain expertise in e-commerce platforms - Shopware, commercetools, headless commerce architectures. Not junior developers learning on your project. The team scales based on velocity demands: start with 1 FTE to establish the sprint cadence and delivery rhythm, then scale to 4-6 FTE as the product roadmap requires it.

This is where most agencies fall apart. They sell you a team of 6 on day one, staff it with whoever is available, and bill hours regardless of output. The sprint model forces accountability: every 14 days, there is a visible, measurable delivery. Either the sprint produced working software in production, or it did not. There is no hiding behind timesheets.

Why Can't Most Agencies Deliver on a 14-Day Cadence?

The agency model is built on selling hours. The sprint model is built on delivering outcomes. These are fundamentally different business models, and most agencies cannot switch because their entire operation - sales, staffing, billing, incentives - is optimized for the former.

Agencies that sell hours have no incentive to ship faster. A feature that takes 6 weeks generates more revenue than a feature that takes 2 weeks. Project managers are measured on utilization rates, not delivery speed. Senior developers are spread across 3-4 projects because billing them at 100% utilization is more profitable than dedicating them to one project where they deliver faster.

Gartner reports that organizations using dedicated development partners with outcome-based delivery models see 40% higher satisfaction scores and 25% better project success rates compared to traditional staff augmentation[8].

The 14-day sprint model works when the delivery partner has skin in the game. At easy.bi, we measure results every 14 days. Not just output - results. Did the sprint deliver working software to production? Did it move the business metric it was designed to move? Did the QA gate hold? These questions get answered 26 times per year, not in a quarterly business review where problems are already months old.

With 100+ projects delivered and a 98% client retention rate, the sprint cadence is not a methodology slide in a pitch deck. It is the reason clients stay.

How Do You Get Started?

You do not need to transform your entire e-commerce operation overnight. The path from quarterly releases to continuous 14-day delivery follows a proven sequence.

Start with one team, one domain. Pick the area of your e-commerce platform with the highest business impact and the clearest ownership boundaries. Checkout conversion. Product search. Promotions engine. Start with 1 FTE, a dedicated Project Owner, and a 14-day sprint cadence. Prove the model works on a bounded scope.

Establish the CI/CD pipeline and QA gate. Before scaling, make sure the delivery infrastructure is solid. Automated tests for the target domain. A deployment pipeline that enforces quality checks. Monitoring that catches issues in real time. This investment pays for itself within the first 3 sprints because it eliminates the manual, error-prone deployment processes that make quarterly releases so risky.

Scale based on velocity demands. Once the sprint cadence is proven, expand the team to 4-6 FTE and add parallel tracks for additional domains. The architecture and processes established in the first phase make scaling predictable - adding a second team adds throughput without a matching increase in coordination overhead because the domain boundaries and CI/CD infrastructure are already in place.

The enterprises that ship the fastest are not the ones with the largest teams. They are the ones with the tightest feedback loops. A 14-day sprint cycle gives you 26 feedback loops per year instead of 4. Each loop compounds: better data, faster decisions, higher-quality software, happier teams.

That is how REWE-scale e-commerce delivery works. Not with bigger teams or longer planning cycles, but with a disciplined cadence that turns delivery into a competitive advantage.

References

- [1] McKinsey & Company, "Delivering Large-Scale IT Projects On Time, On Budget, and ..."

- [2] Standish Group, CHAOS Report - Multi-vendor software projects average 3...

- [3] Forrester, "The State of Enterprise Commerce" - Average enterprise feature deliv...

- [4] McKinsey & Company, "Developer Velocity" - Companies in the top quartile of deve...

- [5] DORA, "Accelerate: State of DevOps" - Elite performers deploy on demand with a c...

- [6] Haystack Analytics, "Developer Burnout Index" - 83% of software developers exper...

- [7] Akamai / Google, "Web Performance Research" - A 1-second delay in page load time...

- [8] Gartner, "IT Outsourcing Trends" - Organizations using outcome-based delivery mo...
