Scaling From 2 to 20 Engineers in 6 Weeks (Without Losing Quality)

Table of Contents+

- When Does Rapid Scaling Become Necessary?

- Why Does Scaling from 2 to 20 Usually Fail?

- How Do You Onboard Engineers at Speed Without Losing Weeks?

- What Happens to Code Review Standards During Growth?

- How Do You Maintain Architecture Quality While Scaling?

- What Does the Team Topology Look Like at 20 Engineers?

- How Does easy.bi Scale Teams Across 4 Countries?

- A Practical Scaling Checklist for Your Next Project

- References

TL;DR

You signed the contract on Monday. The client needs a production MVP in 4 months. Your current team is 2 senior engineers and a Project Owner. By week 6, you need 20.

Key Takeaways

- •Teams of 5-9 engineers deliver the highest productivity per person. Teams larger than 15 see 50% lower per-capita output. Scaling to 20 means structuring multiple small squads with clear domain ownership, not growing one large group.

- •Onboarding at speed requires a documented architecture, automated environment setup, and a 'first PR on day 2' culture. Every day a new engineer spends reading code without contributing is a day of lost velocity for the entire team.

- •Code review standards must be established before scaling begins, not after quality problems surface. Reviews catch 60-65% of defects before production - but only if the process is non-negotiable regardless of delivery pressure.

- •The 149,000 IT specialist shortage in Germany makes internal scaling through hiring impractical for most mid-market timelines. A nearshore partner with an existing engineering culture can assemble 20 engineers in weeks, not months.

- •easy.bi scales teams from 2 to 20 across 4 countries by adding pre-integrated sub-teams - engineers who already share coding standards, CI/CD pipelines, and communication patterns. Scaling within a culture beats assembling strangers.

How to scale an engineering team from 2 to 20 in 6 weeks without losing code quality or delivery velocity. Onboarding frameworks, code review standards during growth, team topology at scale, and easy.bi's 4-country scaling model based on 100+ DACH projects.

You signed the contract on Monday. The client needs a production MVP in 4 months. Your current team is 2 senior engineers and a Project Owner. By week 6, you need 20.

This is not a theoretical scenario - it is a reality we face multiple times per year at easy.bi, and it is a reality that breaks most engineering organizations.

The challenge is not finding 18 additional bodies. The challenge is scaling from 2 to 20 without the quality collapse that typically follows rapid team growth.

More engineers means more communication channels, more merge conflicts, more architectural divergence, and more opportunities for the codebase to become a patchwork of conflicting patterns. Get the scaling wrong, and you ship slower at 20 than you did at 2.

This article covers how we approach rapid scaling across 100+ projects for DACH mid-market clients - the frameworks, the mistakes we have learned from, and the specific practices that keep code quality stable while team size multiplies by 10x.

When Does Rapid Scaling Become Necessary?

Rapid scaling is triggered by four scenarios we see repeatedly in the DACH mid-market.

New product launches with fixed deadlines. A client secures a market opportunity that closes in 6 months. The product needs to be live by then, and the scope requires 15-20 engineers working in parallel.

Hiring internally is not an option - the average time to fill an IT position in Germany is 7.1 months [1]. By the time your first hire starts, the market window has closed.

New product launches with fixed deadlines

Legacy modernization under business pressure. The old system can no longer support the business, and every week of delay costs revenue. Modernizing while keeping the legacy system running requires a larger team than maintenance alone - you need engineers building the new system while others maintain the old one.

Post-acquisition integration. Two companies merge, and their technology stacks need to integrate. The integration timeline is driven by business synergy targets, not engineering capacity. Suddenly you need 3x the engineering capacity you had yesterday.

Legacy modernization under business pressure

Regulatory deadlines. GDPR, PSD2, or industry-specific compliance requirements create hard deadlines that do not care about your hiring pipeline. The work must be done by a specific date, and your current team cannot absorb the additional scope.

In all four scenarios, the constraint is the same: you need engineering capacity faster than internal hiring can deliver. Germany faces a shortage of 149,000 IT specialists [2]. Even companies offering EUR 85,000+ salaries and modern tech stacks cannot fill senior positions in under 4-7 months.

Rapid scaling through internal hiring is a contradiction in terms in the current DACH talent market.

See how enterprises modernize with one team.

Why Does Scaling from 2 to 20 Usually Fail?

The failure pattern is predictable. A small founding team of 2-3 senior engineers builds a clean, well-architected system. Under deadline pressure, the team scales to 15-20 by adding engineers in bulk. Within 8 weeks, velocity drops instead of increasing. Code quality deteriorates.

The founding engineers spend all their time reviewing code instead of building features. The new engineers produce work that does not match the established patterns. Integration conflicts multiply. The team is larger but shipping slower.

Three forces drive this collapse.

Brooks's Law is real

Brooks's Law is real. Adding people to a software project increases communication overhead quadratically. A team of 5 has 10 communication channels. A team of 15 has 105. A team of 20 has 190. Without explicit structures to manage this complexity, every engineer spends more time coordinating than coding.

Implicit knowledge does not scale. The founding team of 2-3 engineers carries architecture decisions, coding conventions, and domain context in their heads. When the team was small, a quick conversation transferred that knowledge.

At 20 engineers, those conversations become a full-time job - and the founding team becomes a bottleneck for every decision.

Implicit knowledge does not scale

Architecture was not built for parallel work. A codebase designed for 2 engineers working on one feature at a time breaks when 10 engineers need to work on 5 features simultaneously.

Tight coupling, shared state, and monolithic deployments create merge conflicts and integration failures that eat the velocity gains from additional headcount.

Research confirms this pattern. Teams of 5-9 people deliver the highest productivity per person. Teams larger than 15 see 50% lower per-capita output [3]. The solution is not to avoid scaling - it is to scale deliberately with structures that manage the complexity.

How Do You Onboard Engineers at Speed Without Losing Weeks?

Standard enterprise onboarding takes 4-8 weeks before a new engineer reaches full productivity. When you are scaling from 2 to 20 in 6 weeks, you cannot afford to lose half that timeline to onboarding. The goal is: first meaningful pull request within 48 hours of joining the project.

This requires investment before scaling begins.

Automated environment setup

Automated environment setup. A new engineer should go from zero to a running local development environment in under 2 hours. That means a single script or container setup that installs dependencies, seeds the database, and runs the test suite.

If your environment setup requires a 10-page wiki and 3 hours of manual configuration, fix it before you scale. Every manual step is a delay multiplied by every new team member.

Architecture decision records (ADRs). The founding team must document the key architectural decisions - why the system is structured this way, what alternatives were considered, and what trade-offs were accepted. New engineers do not need to agree with every decision.

They need to understand the reasoning so they do not accidentally undermine it.

| Onboarding Component | Time Investment (before scaling) | Time Saved (per new engineer) |

|---|---|---|

| Automated environment setup script | 8-16 hours | 4-6 hours per person |

| Architecture decision records | 4-8 hours per major decision | 2-3 days of context-seeking per person |

| Coding standards document | 4-6 hours | 1-2 weeks of pattern inconsistency per person |

| Starter tasks with clear scope | 2-4 hours per task | 3-5 days of unproductive exploration per person |

| CI/CD pipeline documentation | 2-4 hours | 1-2 days of deployment confusion per person |

Starter tasks. Pre-define a set of small, well-scoped tasks that new engineers can pick up on day one. These tasks should be real work - not busywork - that introduces the engineer to the codebase, the testing patterns, and the PR review process.

A task like "add input validation to this existing endpoint" teaches more about the codebase in 4 hours than reading documentation for 2 days.

Architecture decision records (ADRs)

Pair programming during week one. Every new engineer pairs with a founding team member for their first 2-3 tasks. This transfers implicit knowledge at 10x the speed of documentation.

It also calibrates quality expectations from day one - the new engineer sees what "good" looks like in this specific codebase, not in theory.

The difference between a 2-day onboarding and a 4-week onboarding, multiplied by 18 new engineers, is the difference between shipping your MVP on time and missing the deadline by 2 months. Invest in onboarding infrastructure before you scale, not after.

What Happens to Code Review Standards During Growth?

Code review is the first casualty of rapid scaling. The pattern is always the same: the founding team, overwhelmed by the volume of incoming PRs, starts approving code faster and with less scrutiny. Review comments become superficial. Architectural violations slip through.

Technical debt accumulates at the exact moment you can least afford it.

This is a mistake with compounding costs. Code review catches 60-65% of defects before they reach production [4]. Relaxing review standards during scaling means more defects in production, more hotfixes disrupting sprint work, and more time spent debugging issues that should have been caught in review.

Distribute review responsibility

The solution is structural, not motivational. You cannot tell reviewers to "be more thorough" when they have 15 PRs waiting and a sprint deadline approaching. You need systems that maintain quality without depending on individual discipline.

Distribute review responsibility. Do not funnel all reviews through the founding 2-3 engineers. As you scale, identify senior engineers in each sub-team who become domain-level reviewers. The founding team reviews architecture-level decisions and cross-team interfaces. Sub-team leads review implementation details within their domain. This distributes the load while maintaining standards.

Automated quality gates

Automated quality gates. Linters, formatters, static analysis, and test coverage thresholds should run in CI before a human reviewer ever sees the PR. If the code does not pass automated checks, it does not reach the review queue.

This eliminates 30-40% of review comments (formatting, naming conventions, obvious bugs) and lets human reviewers focus on logic, architecture, and business correctness.

PR size limits. Enforce a maximum PR size - 400 lines of changed code is a practical upper bound. Large PRs get rubber-stamped because reviewers cannot maintain attention across 1,000+ lines of changes. Small PRs get thorough reviews because the cognitive load is manageable.

This one practice has more impact on review quality than any other during rapid scaling.

How Do You Maintain Architecture Quality While Scaling?

Architecture quality degrades during scaling because new engineers make reasonable local decisions that create global inconsistency. Engineer A implements a service using pattern X. Engineer B, working on a different feature, implements a similar service using pattern Y. Both approaches work. Neither engineer is wrong.

But the codebase now has two patterns for the same problem, and every future engineer must guess which one to follow.

Preventing this requires architectural guardrails that are enforced structurally, not by convention.

Modular architecture from day one

Modular architecture from day one. The codebase must support parallel work before parallel work begins. This means clear module boundaries, well-defined interfaces between modules, and independent deployability where possible. A monolith with 10 engineers all committing to the same set of files creates merge conflicts that kill velocity.

A modular monolith or microservices architecture with clear ownership boundaries enables 4-5 sub-teams to work independently.

API contracts between teams. When sub-teams need to integrate, they agree on interfaces before building implementations.

OpenAPI specifications, shared type definitions, or contract testing (using tools like Pact) prevent the integration failures that surface in the last sprint - when it is too late to fix them without slipping the deadline.

API contracts between teams

Architecture review for cross-cutting concerns. Not every PR needs an architecture review. But any change that introduces a new pattern, adds a new external dependency, or modifies a shared interface must go through the founding team or a designated architecture owner. This prevents pattern proliferation while keeping day-to-day development fast.

Continuous integration is the safety net that catches architectural drift early. CI reduces integration problems by 90%, allowing teams to develop cohesive software more rapidly [5]. Every sub-team merges to the main branch at least daily. Integration tests run on every merge. Architectural violations surface within hours, not weeks.

Siemens, Lekkerland, WeberHaus chose us

One integrated partner. Three core competencies. From insight to production, with no handover gaps.

Start with a Strategy CallWhat Does the Team Topology Look Like at 20 Engineers?

At 20 engineers, you are not managing one team. You are managing an engineering organization. The topology that works - validated across our largest client engagements - is a structure of 4-5 autonomous squads, each with clear domain ownership.

| Squad | Size | Ownership | Key Interfaces |

|---|---|---|---|

| Core Platform | 4-5 engineers | Shared services, authentication, data models | API contracts to all feature squads |

| Feature Squad A | 3-4 engineers | Customer-facing features, domain A | Core Platform APIs, Squad B events |

| Feature Squad B | 3-4 engineers | Customer-facing features, domain B | Core Platform APIs, Squad A events |

| Integration Squad | 3-4 engineers | Third-party integrations, data pipelines | Core Platform APIs, external systems |

| Quality + DevOps | 2-3 engineers | CI/CD, automated testing, infrastructure | All squads (platform-level support) |

Each squad operates as a mini-team with its own sprint backlog, its own deployment pipeline, and its own domain expertise. Squads synchronize through weekly cross-team standups and shared sprint reviews - not through constant cross-team Slack messages.

The Project Owner coordinates across squads, managing dependencies and priorities at the program level. This is not optional overhead - it is the coordination mechanism that prevents the 190 communication channels at 20 engineers from becoming chaos.

At easy.bi, every project at this scale gets a dedicated Project Owner whose sole job is keeping squads aligned while protecting their autonomy.

How Does easy.bi Scale Teams Across 4 Countries?

When a client needs to scale from 2 to 20 engineers, we do not post job ads and wait. We assemble the team from our existing 50+ engineers across Hamburg, Frankfurt, Ljubljana, and Skopje - engineers who already share coding standards, CI/CD practices, and communication patterns.

This is the critical difference between scaling through hiring and scaling within an existing engineering culture. When we add a sub-team of 4 engineers from Ljubljana to your project, those 4 engineers have already worked together. They have established code review norms. They know our Performance Scrum methodology.

They have delivered in 14-day sprint cycles on enterprise projects before. The onboarding period is about your domain - not about how to work together.

Talent pool depth

The 4-country structure serves scaling in three ways.

Talent pool depth. Drawing from Germany, Austria, Switzerland, and Slovenia gives us access to a combined talent pool that is impossible to match through single-country hiring. Slovenia alone employs over 30,000 IT professionals, 92% university-educated, with the highest English proficiency in CEE [6].

When a project needs to scale fast, we have the depth to staff it without diluting quality.

Timezone alignment

Timezone alignment. All 4 countries sit in the CET/CEST timezone or 1 hour adjacent. A 20-person team split across Hamburg and Ljubljana has full overlap during core working hours. No 18-hour feedback loops. No blocking issues that wait overnight for resolution.

This is why 73% of German IT decision-makers prefer CEE nearshore partners over offshore alternatives [7] - timezone alignment is not a convenience, it is a project success factor.

Dedicated Project Owner per project. Every scaled engagement gets a Project Owner who coordinates across squads, manages the client relationship, and ensures architectural coherence.

The Project Owner is not a part-time role added to an engineer's responsibilities - it is a full-time position staffed by someone whose only job is making your project successful. This role is the glue that holds a 20-person distributed team together.

Our scaling model has been tested on projects for Siemens (unified UI library across multiple teams), Lekkerland (SAP integration requiring parallel workstreams), and WeberHaus (complete process digitization with offline capability).

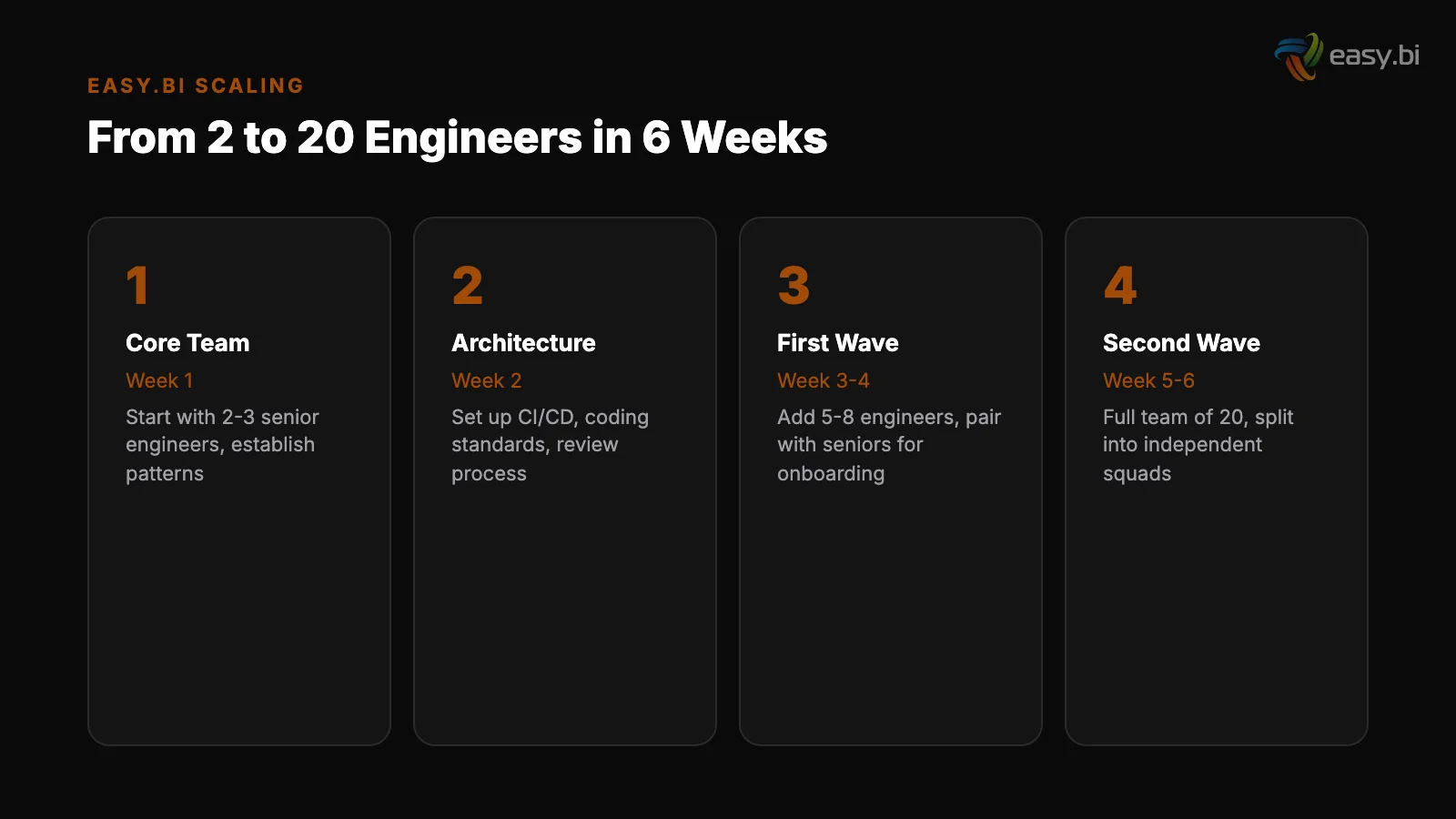

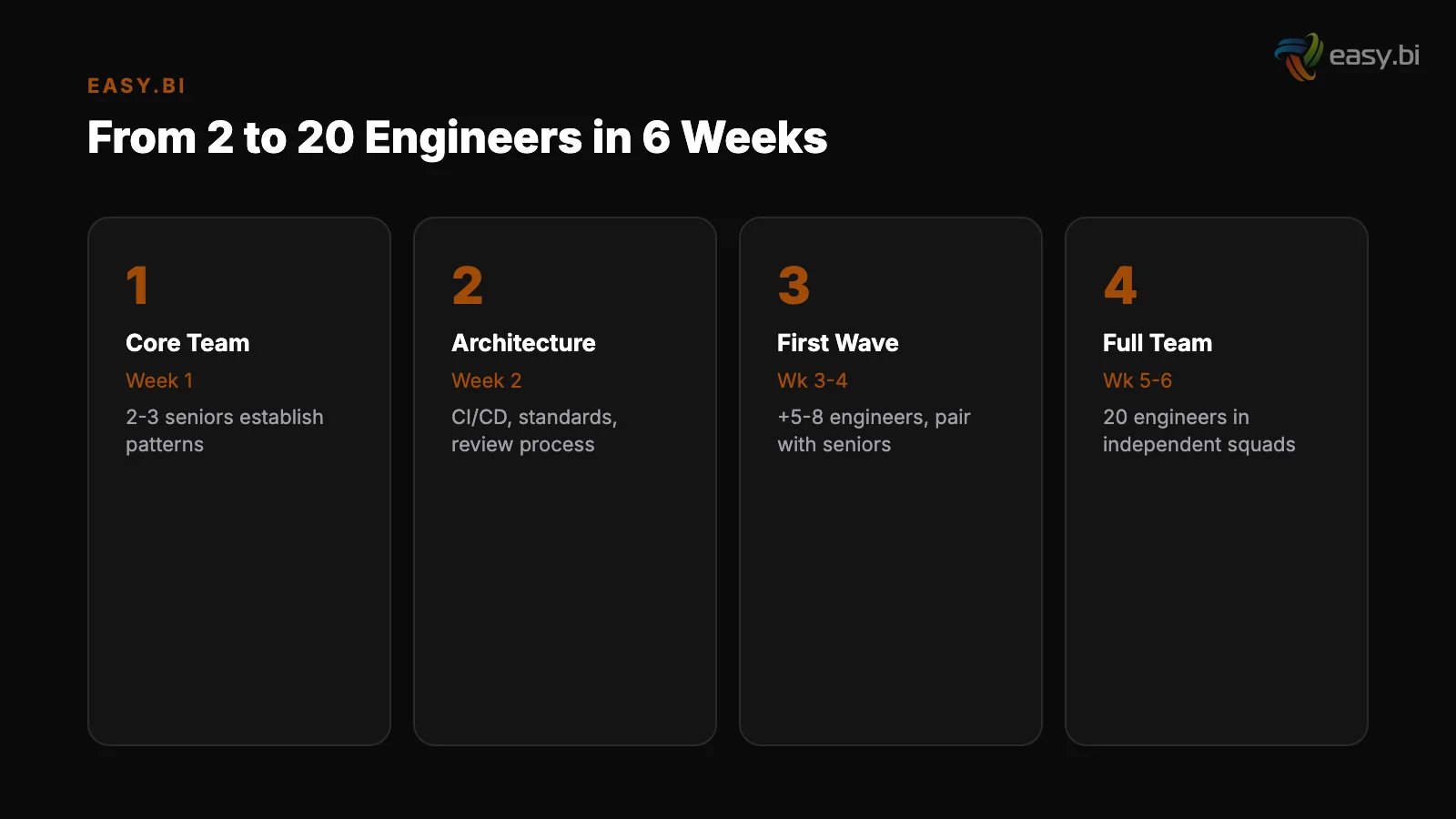

In each case, the pattern was the same: start with a core team of 3-5, establish architecture and standards, then scale by adding entire sub-teams with existing cohesion.

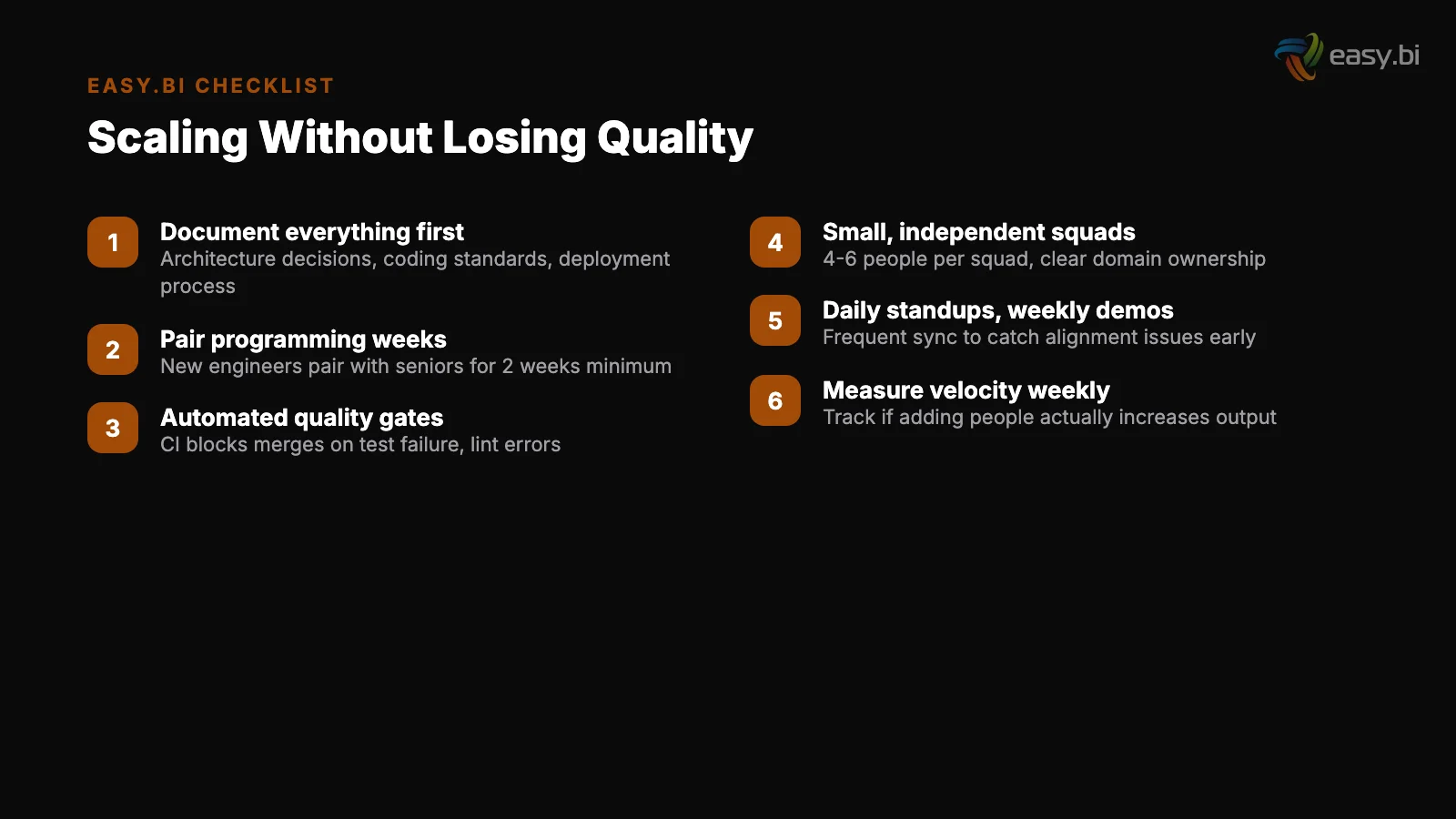

A Practical Scaling Checklist for Your Next Project

Before scaling from any team size to a larger one, validate these prerequisites. Missing even one creates the quality collapse that rapid scaling is known for.

Before scaling (week 0):

Before scaling (week 0)

- Automated environment setup runs in under 2 hours for a new engineer

- Architecture decision records exist for all major structural choices

- Coding standards are documented and enforced by CI (linters, formatters)

- Test coverage exceeds 70% on critical paths

- CI/CD pipeline deploys to staging automatically on every merge

- Module boundaries are defined with clear interfaces

- Starter tasks exist for new engineers to contribute within 48 hours

During scaling (weeks 1-4):

- New engineers pair with existing team members for first 2-3 tasks

- Sub-teams of 3-5 form around bounded domains, not random task assignments

- Code review responsibility distributes to sub-team leads

- PR size limits enforce reviewable changes (under 400 lines)

- Daily cross-team standup keeps squads synchronized (15 minutes maximum)

- Architecture owner reviews all cross-cutting changes

After scaling stabilizes (weeks 5-6):

During scaling (weeks 1-4)

- Each sub-team has independent sprint backlog and deployment pipeline

- Cross-team dependencies are managed through API contracts, not ad hoc coordination

- Sprint velocity per squad is tracked independently

- Retrospectives happen at both squad and program level

The companies that scale successfully treat team topology as an engineering discipline - not as an org chart exercise. Cross-functional teams are 35% more productive than siloed teams [8]. Structure your squads as cross-functional units with full-stack ownership, not as frontend/backend silos that hand work back and forth.

For the broader strategic framework on structuring your development capacity, read our pillar guide on the build vs. buy decision for enterprise software projects. For an honest comparison of where to source your scaled team, see in-house vs. nearshore vs. offshore.

And to understand why the team you build needs to stay together to deliver compound returns, read what drives 98% retention in software teams.

If you are facing a scaling challenge right now - a product launch, a legacy modernization, or a post-acquisition integration that needs more engineers than your current team can provide - explore our custom solutions approach or book an expert call to discuss your specific situation.

We will tell you honestly whether your project needs 20 engineers or whether 8 well-structured ones would ship faster.

References

- [1] Bundesagentur fur Arbeit (2024). arbeitsagentur.de

- [2] Bitkom (2024). "Germany faces a shortage of 149,000 IT specialists." bitkom.org

- [3] QSM / Putnam Research (2023). qsm.com

- [4] SmartBear / Cisco (2023). smartbear.com

- [5] ThoughtWorks / Martin Fowler (2023). thoughtworks.com

- [6] SPIRIT Slovenia (2024). spiritslovenia.si

- [7] Hays (2024). "73% of German IT decision-makers prefer CEE nearshore partners ove hays.de

- [8] Harvard Business Review / Bain (2023). hbr.org

Explore Other Topics

Ready to transform your business?

30-minute call with an engineering lead. No sales pitch - just honest answers about your project.

98% engineer retention · 14-day delivery sprints · No lock-in contracts