Performance Scrum: How We Deliver Working Software Every 14 Days

Table of Contents

- Why Does Generic Agile Fail at Enterprise Scale?

- What Makes Performance Scrum Different?

- How Do Work Packages Create Predictability?

- What Does a 14-Day Sprint Cycle Look Like?

- How Does Performance Scrum Handle Scope Changes?

- Why Does 14-Day Delivery Beat 4-Week Cycles?

- What Results Does Performance Scrum Produce?

- How to Evaluate Whether Your Current Methodology Works

- References

TL;DR

Performance Scrum is the delivery methodology easy.bi uses across every engagement. It combines the governance structure of Prince2 - work packages, stage gates, defined roles - with the delivery mechanics of Scrum: 2-week sprints, daily standups, retrospectives, and working software at the end of every cycle.

Key Takeaways

- Performance Scrum combines Prince2 governance with Scrum delivery mechanics. The result: structured work packages that make every sprint predictable and every outcome measurable - not the vague 'we do agile' that 71% of organizations claim.

- 2-week sprint cycles deliver 40% more features per quarter than 4-week cycles. The shorter feedback loop catches misalignment in days instead of months, which matters because 57% of project failures trace to communication breakdowns.

- Work packages are the core unit of delivery. Each one defines scope, acceptance criteria, estimated effort, dependencies, and business outcome before a single line of code is written. This is the Prince2 discipline that generic Scrum lacks.

- Sprint retrospectives that produce at least one process improvement per sprint increase team velocity by 18% over 6 months. Performance Scrum enforces this - every retro ends with a concrete change, not just a discussion.

- The methodology has been validated across 100+ projects since 2015 with a 98% client retention rate. The industry average for IT outsourcing retention is 72-78%. The 20+ percentage point gap is the compounding result of delivering working software every 14 days.

Performance Scrum is the delivery methodology easy.bi uses across every engagement. It combines the governance structure of Prince2 - work packages, stage gates, defined roles - with the delivery mechanics of Scrum: 2-week sprints, daily standups, retrospectives, and working software at the end of every cycle.

The result is a methodology that ships production-ready code every 14 days while giving stakeholders the predictability and transparency they need to make business decisions.

This article explains how Performance Scrum works, why it outperforms both traditional waterfall and generic "we do agile" approaches, and what it looks like in practice across enterprise and mid-market projects in the DACH region.

Why Does Generic Agile Fail at Enterprise Scale?

71% of organizations report using agile approaches for their projects [1]. Yet 66% of software projects still fail or are challenged - over budget, over time, or with fewer features than planned [2]. If agile were a silver bullet, those numbers would not coexist. The problem is not agile itself.

The problem is how most teams implement it.

Generic agile - the kind described in a 2-day certification course - gives teams rituals (standups, sprints, retros) without the structural discipline needed for complex enterprise projects. A 3-person startup building an MVP can get away with loose sprint goals and informal backlog management.

A 10-person team building an order management system for a German manufacturer cannot.

The failure patterns are predictable. Sprint goals are vague ("make progress on the API"). Acceptance criteria are undefined or change mid-sprint. Scope creep affects 52% of all projects, increasing delivery time by an average of 27% [3]. Retrospectives happen but produce no actionable changes.

After 6 months, the team has been "agile" the entire time and has nothing to show for it except a backlog that grew faster than they could clear it.

The root cause: generic Scrum provides delivery cadence but not delivery governance.

It tells you to ship every 2 weeks but does not tell you how to define what "shipped" means, how to size work predictably, or how to ensure that every sprint moves the project toward a measurable business outcome. That governance gap is where Performance Scrum begins.

What Makes Performance Scrum Different?

Performance Scrum bridges the gap between Prince2's governance discipline and Scrum's delivery speed. Prince2 provides the structure. Scrum provides the cadence. Together, they eliminate the two most common failure modes: projects that drift without accountability (generic agile) and projects that plan endlessly without shipping (traditional waterfall).

Three elements distinguish Performance Scrum from standard Scrum implementations:

Work packages instead of user stories alone. A work package is a defined unit of delivery with explicit scope, acceptance criteria, estimated effort, dependencies, and the business outcome it serves. User stories describe what the user wants.

Work packages describe what the team will deliver, how it will be tested, and what business value it produces. This is the Prince2 discipline layered onto the Scrum delivery model.

Fixed 14-day delivery cadence with no exceptions. Every sprint ends with a production-ready increment. Not a demo of work in progress. Not a staging deployment. Code that could ship to production today.

This constraint forces the team to decompose work into 2-week deliverable chunks - which is the single most effective way to prevent scope creep and detect misalignment early.

Mandatory process improvement per retrospective. Sprint retrospectives that produce at least one process improvement per sprint increase team velocity by 18% over 6 months [4]. In Performance Scrum, every retrospective ends with one concrete, trackable change to the team's process. Not a discussion. Not a feeling.

A specific change that will be measured in the next sprint.

Agile projects are 28% more successful than waterfall projects. But 66% of all software projects still fail. The gap between "doing agile" and delivering outcomes is where methodology discipline matters. [2]

How Do Work Packages Create Predictability?

Work packages are the core unit of delivery in Performance Scrum. They solve the predictability problem that undermines most agile projects: stakeholders cannot plan around deliverables they cannot predict.

A work package contains five elements:

| Element | Purpose | Example |
|---|---|---|
| Scope definition | What is included and explicitly excluded | "API endpoints for order CRUD. Excludes batch import." |
| Acceptance criteria | How the team and stakeholder agree it is done | "All endpoints pass contract tests. Response time under 200ms." |
| Effort estimate | Hours or story points with confidence level | "40 hours, high confidence (similar to previous API work)" |
| Dependencies | What must be complete before this can start | "Database schema migration from WP-003 must be deployed" |
| Business outcome | What business capability this enables | "Sales team can create orders without calling operations" |

The business outcome field is what separates work packages from standard user stories.

Every work package must answer the question: "What can the business do after this is delivered that it could not do before?" If the answer is "nothing visible" - for example, a purely technical refactoring task - the work package must still articulate the enabling value: "Reduces API response time from 800ms to 200ms, enabling the mobile app to load order lists in under 1 second."
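The five elements can be captured as a small record type that refuses to call a work package complete until every field is filled in. This is a minimal sketch, not easy.bi's actual tooling: the class name `WorkPackage`, the identifier `WP-004`, the three confidence labels, and the `missing_elements` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class WorkPackage:
    """One unit of delivery carrying the five work package elements."""
    wp_id: str                      # hypothetical identifier, e.g. "WP-004"
    scope: str                      # what is included and explicitly excluded
    acceptance_criteria: list[str]  # testable statements agreed with the stakeholder
    effort_hours: float             # effort estimate in hours
    confidence: str                 # assumed labels: "low", "medium", "high"
    business_outcome: str           # what the business can do afterwards
    dependencies: list[str] = field(default_factory=list)  # may be empty

    def missing_elements(self) -> list[str]:
        """Return the names of elements that are empty or invalid."""
        problems = []
        if not self.scope.strip():
            problems.append("scope")
        if not self.acceptance_criteria:
            problems.append("acceptance_criteria")
        if self.effort_hours <= 0:
            problems.append("effort_hours")
        if self.confidence not in {"low", "medium", "high"}:
            problems.append("confidence")
        if not self.business_outcome.strip():
            problems.append("business_outcome")
        return problems


# Example values taken from the table; only the ID "WP-004" is invented.
order_api = WorkPackage(
    wp_id="WP-004",
    scope="API endpoints for order CRUD. Excludes batch import.",
    acceptance_criteria=[
        "All endpoints pass contract tests.",
        "Response time under 200ms.",
    ],
    effort_hours=40,
    confidence="high",
    business_outcome="Sales team can create orders without calling operations",
    dependencies=["WP-003"],
)
```

A package whose `missing_elements()` list is non-empty would go back to refinement rather than into a sprint.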

This discipline creates a direct line from engineering effort to business value. CTOs and project sponsors can read work package descriptions and understand what they are getting for their investment - without needing to decode technical jargon or trust that "making progress on the API" means something concrete.

The predictability compounds over time. After 3-4 sprints, the team's velocity stabilizes. The average sprint velocity for a Scrum team typically stabilizes at 20-40 story points per 2-week sprint [5].

With calibrated work packages, stakeholders can forecast delivery timelines with confidence - not the false confidence of a Gantt chart, but the evidence-based confidence of a team that has consistently delivered what it committed to.
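Once velocity has stabilized, a delivery forecast is simple arithmetic. The sketch below assumes the backlog is sized in story points and that the plain average of recent sprints is a fair predictor; the function names and the sample numbers are illustrative, not data from real projects.

```python
import math
from statistics import mean


def forecast_sprints(remaining_points: float, recent_velocities: list[float]) -> int:
    """Estimate how many sprints the remaining backlog needs.

    Uses the mean of recent sprint velocities and rounds up, because a
    partially filled sprint still occupies a full sprint slot.
    """
    if not recent_velocities:
        raise ValueError("need at least one completed sprint")
    velocity = mean(recent_velocities)
    if velocity <= 0:
        raise ValueError("average velocity must be positive")
    return math.ceil(remaining_points / velocity)


def forecast_days(remaining_points: float, recent_velocities: list[float],
                  sprint_length_days: int = 14) -> int:
    """Convert the sprint forecast into calendar days."""
    return forecast_sprints(remaining_points, recent_velocities) * sprint_length_days


# 120 points remaining at an average of 30 points per sprint: 4 sprints, 56 days.
print(forecast_sprints(120, [28, 30, 32]), forecast_days(120, [28, 30, 32]))
```

Rounding up is a deliberate choice: a stakeholder planning around the forecast cares about the sprint in which the last package lands, not about fractional sprints.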

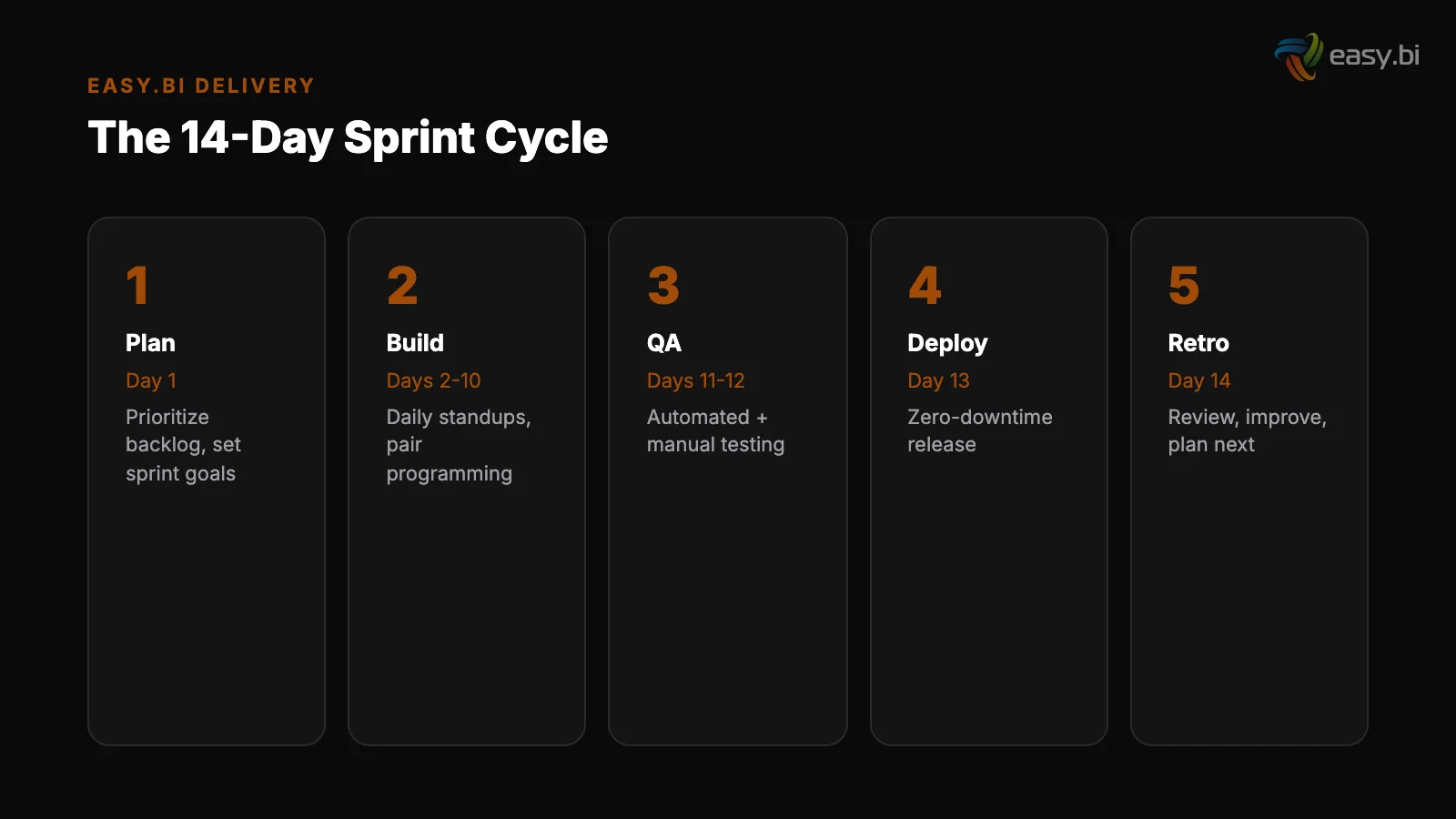

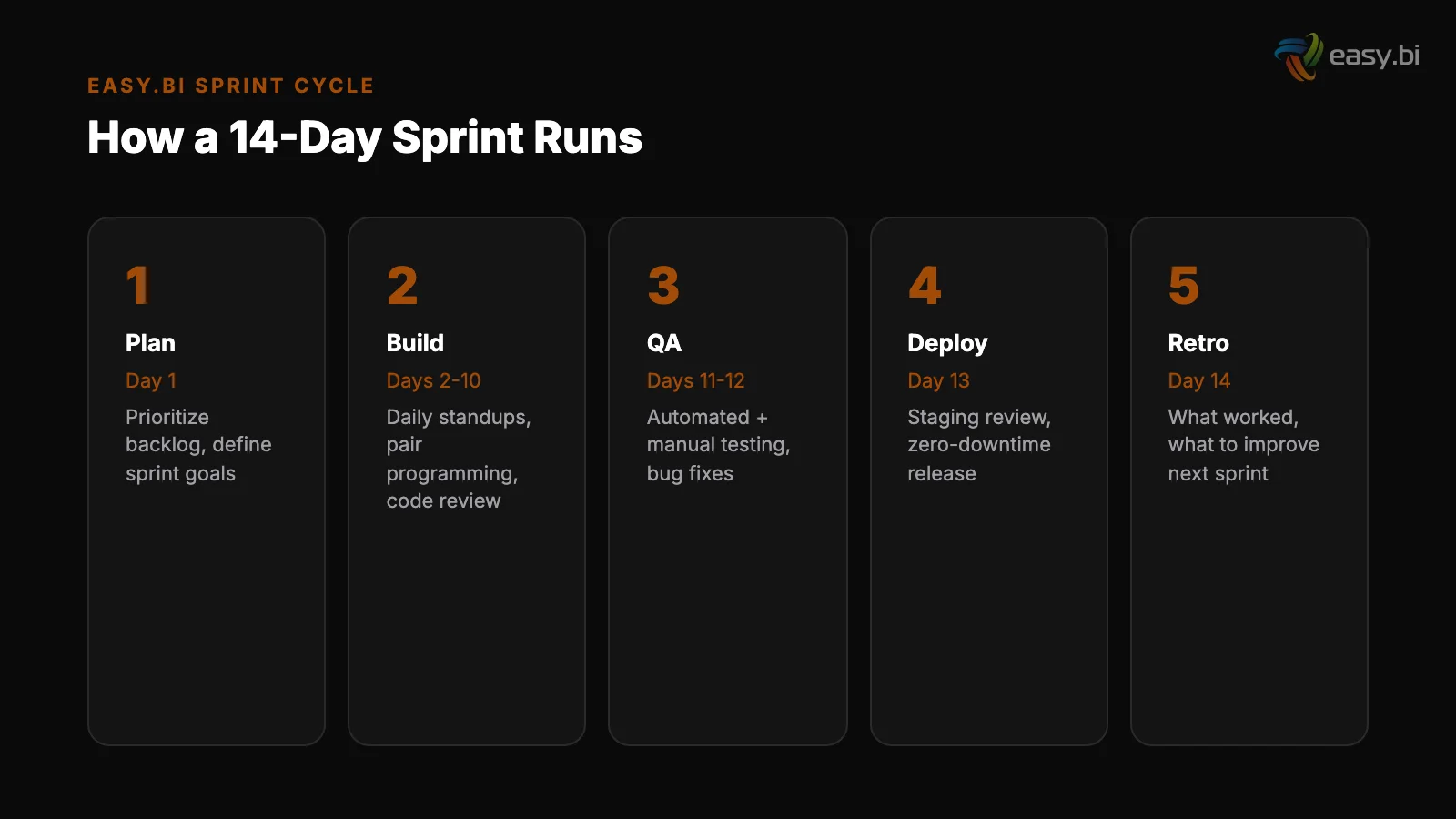

What Does a 14-Day Sprint Cycle Look Like?

Each Performance Scrum sprint follows a fixed structure. The rituals are familiar to anyone who has done Scrum. The discipline within each ritual is where Performance Scrum adds value.

Day 1: Sprint planning (2-3 hours). The team selects work packages from the prioritized backlog. Each work package is reviewed for clarity: is the scope unambiguous? Are acceptance criteria testable? Are dependencies resolved? If any work package fails these checks, it goes back to refinement.

The team commits to a sprint goal that is one sentence: "At the end of this sprint, [specific business capability] will be live in production."

Days 1-9: Development with daily standups (15 minutes). Standups answer three questions: What did you complete yesterday? What will you complete today? What is blocking you? The emphasis is on "complete" - not "worked on." In Performance Scrum, progress means finished, tested, and deployable work.

Half-finished features carry to the next day's standup as incomplete work, creating visible pressure to decompose tasks small enough to finish in a single day.

Day 10: Code freeze, QA, and integration testing. All new code is merged to the main branch by end of day 10. Days 10-13 are reserved for quality assurance, integration testing, and bug fixes. This buffer is not optional - it is built into every sprint.

Automated testing saves 20-40% of total QA costs and catches 80% of defects before production [6], but automated tests do not replace the human QA review that catches usability issues and edge cases.

Day 13: Sprint review (1-2 hours). The team demonstrates working software to the stakeholder. Not slides. Not mockups. The actual production-ready system. The stakeholder verifies that acceptance criteria are met, provides feedback for future sprints, and signs off on the increment.

This is the Prince2 stage gate adapted for 2-week cycles.

Day 14: Retrospective (1 hour). What went well? What went poorly? What is the one concrete process change the team will implement in the next sprint? The change is documented, assigned an owner, and tracked. If it does not happen, it appears in the next retrospective as an unresolved item.

This cadence repeats without variation. Every 14 days, stakeholders see working software. Every 14 days, the team improves its process. The compounding effect is significant: teams that follow this pattern for 6+ months are measurably faster and more reliable than they were in sprint 1.
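The fixed structure can be written down as a lookup table, which makes the overlaps explicit: planning shares Day 1 with development, and the review shares Day 13 with the end of QA. This is a sketch of the schedule as described here; `SPRINT_SCHEDULE` and `activities_on` are hypothetical names, and days are counted 1 to 14 exactly as the article numbers them.

```python
# (first_day, last_day, activity) for one 14-day sprint
SPRINT_SCHEDULE = [
    (1, 1, "sprint planning"),
    (1, 9, "development with daily standup"),
    (10, 13, "code freeze, QA, and integration testing"),
    (13, 13, "sprint review"),
    (14, 14, "retrospective"),
]


def activities_on(day: int) -> list[str]:
    """Return every activity scheduled for the given sprint day."""
    if not 1 <= day <= 14:
        raise ValueError("day must be between 1 and 14")
    return [activity for first, last, activity in SPRINT_SCHEDULE
            if first <= day <= last]


for day in (1, 10, 13, 14):
    print(day, activities_on(day))
```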

How Does Performance Scrum Handle Scope Changes?

Scope changes are not the enemy. Uncontrolled scope changes are. Performance Scrum handles change requests through a structured process that respects both business agility and delivery predictability.

Any stakeholder can request a change at any time. The change is documented as a new work package with the same five elements: scope, acceptance criteria, effort estimate, dependencies, and business outcome.

The product owner evaluates the new work package against the existing backlog using a single criterion: does this deliver more business value per effort-hour than the work package it would replace?

If yes, the new work package enters the backlog at the appropriate priority. Something of equal or lesser value is deprioritized. The total scope of the sprint does not increase - it substitutes. This is the critical distinction.

In generic agile, scope creep happens because new work is added without removing existing work. In Performance Scrum, every addition requires a subtraction.

Mid-sprint changes follow a stricter rule: they are accepted only if the current sprint goal becomes invalid due to an external event (a regulatory change, a production incident, a market-critical pivot). Otherwise, the change enters the next sprint.

This protects the team's delivery cadence and prevents the context-switching that kills productivity. 58% of developers experience burnout, with inefficient processes being the second leading cause [7]. Protecting sprint focus is not just about delivery - it is about sustainability.
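The substitution rule and the mid-sprint rule can be combined into one triage function. The sketch below makes several assumptions: business value is a numeric score assigned by the product owner, the comparison is against a single existing work package, and a mid-sprint change that invalidates the sprint goal is accepted without a further value check. `Candidate` and `triage_change` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    business_value: float  # assumed: a score assigned by the product owner
    effort_hours: float

    def __post_init__(self) -> None:
        if self.effort_hours <= 0:
            raise ValueError("effort_hours must be positive")

    @property
    def value_per_hour(self) -> float:
        return self.business_value / self.effort_hours


def triage_change(new: Candidate, existing: Candidate,
                  mid_sprint: bool = False,
                  sprint_goal_invalid: bool = False) -> str:
    """Decide what happens to a change request."""
    if mid_sprint:
        # Mid-sprint changes are accepted only when an external event
        # has invalidated the current sprint goal.
        if sprint_goal_invalid:
            return "accept into current sprint"
        return "defer to next sprint"
    # Every addition requires a subtraction: the new package only
    # displaces work that delivers less value per effort-hour.
    if new.value_per_hour > existing.value_per_hour:
        return f"accept, deprioritize {existing.name}"
    return "keep in backlog below existing work"


bulk_export = Candidate("bulk export", business_value=80, effort_hours=20)
report_styling = Candidate("report styling", business_value=60, effort_hours=30)
print(triage_change(bulk_export, report_styling))
```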

Why Does 14-Day Delivery Beat 4-Week Cycles?

The data is clear: projects using 2-week sprint cycles deliver 40% more features per quarter than those using 4-week cycles [8]. The advantage comes from three compounding effects.

Faster feedback. A 2-week cycle means the maximum time between a wrong assumption and its correction is 14 days. In a 4-week cycle, that gap doubles. 57% of project failures trace to communication breakdowns between business and technology stakeholders [9].

Shorter cycles reduce the damage from every miscommunication by halving the time before it is discovered and fixed.

Smaller batch sizes. A 2-week sprint forces the team to decompose work into smaller, independently deliverable pieces. Smaller batches are easier to estimate, easier to test, and easier to integrate. Continuous integration reduces integration problems by 90% [10] - but only when the integration happens frequently.

A 4-week sprint tempts teams to accumulate large batches that create merge conflicts and integration failures.

More decision points. With 2-week sprints, stakeholders make priority decisions 26 times per year instead of 13. Each decision point is an opportunity to pivot based on market feedback, user behavior data, or competitive intelligence.

In a market where 70% of digital transformations fail to reach their stated goals [11], the ability to course-correct 26 times per year instead of 13 is not a minor advantage - it is the difference between a project that adapts and a project that drifts.
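The 26-versus-13 comparison follows directly from the sprint length. A short sketch of the arithmetic, assuming a 52-week year and back-to-back sprints with no gaps; the function names are illustrative.

```python
WEEKS_PER_YEAR = 52  # assumption: sprints run back to back all year


def decision_points_per_year(sprint_weeks: int) -> int:
    """Number of sprint boundaries, and so priority decisions, per year."""
    return WEEKS_PER_YEAR // sprint_weeks


def max_days_to_correction(sprint_weeks: int) -> int:
    """Longest a wrong assumption can survive before the next sprint review."""
    return sprint_weeks * 7


for weeks in (2, 4):
    print(weeks, decision_points_per_year(weeks), max_days_to_correction(weeks))
```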

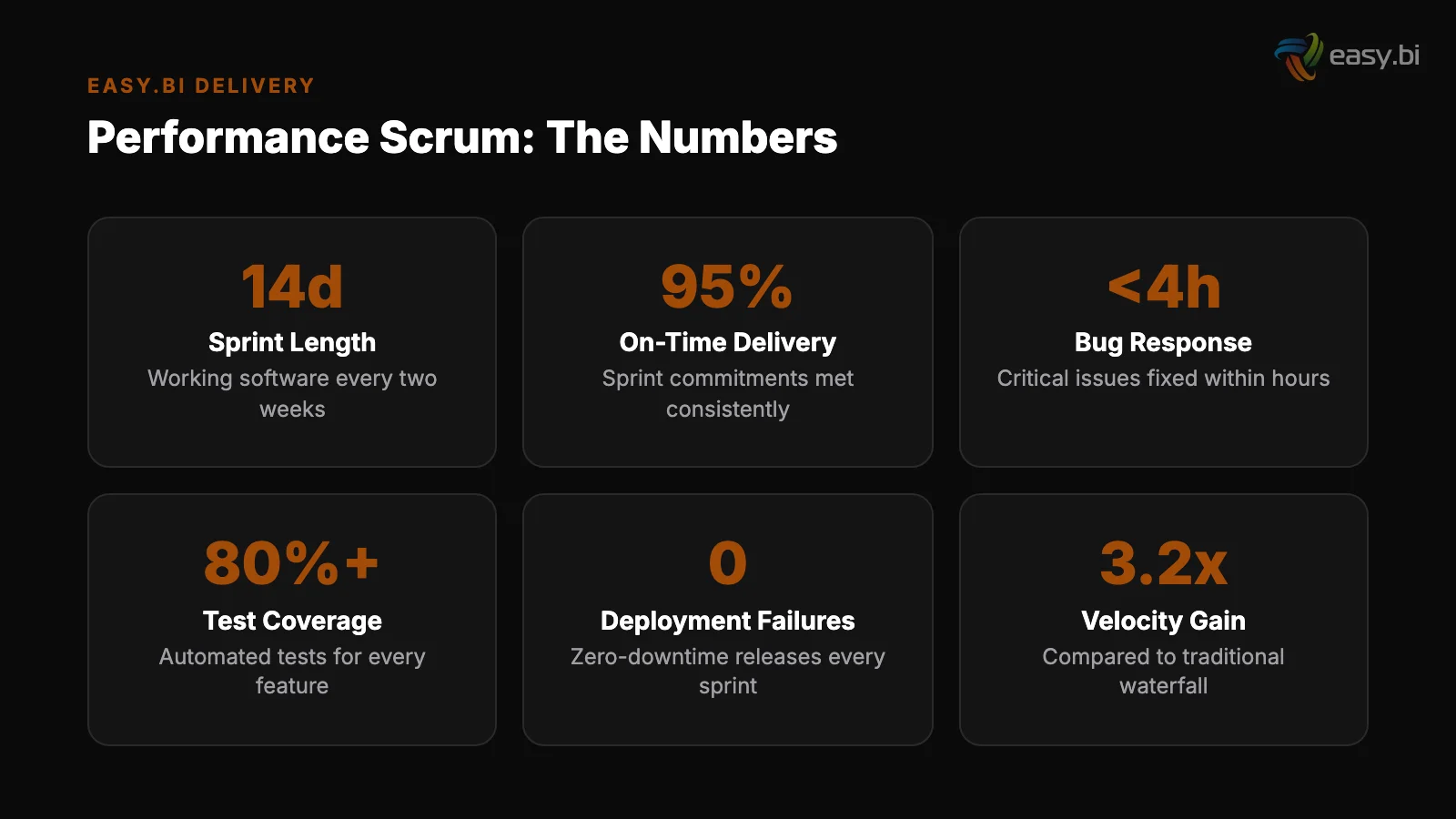

What Results Does Performance Scrum Produce?

Performance Scrum has been the delivery methodology for every easy.bi engagement since the company's founding in 2015. The results are measurable across 100+ projects delivered for clients including Siemens, REWE Group, WeberHaus, Lekkerland, and Fressnapf.

98% client retention rate. The industry average for IT outsourcing client retention is 72-78% [12]. The 20+ percentage point gap represents the accumulated trust that comes from delivering working software every 14 days - sprint after sprint, year after year.

Clients stay because the methodology produces results, not because of contractual lock-in.

Average partnership duration of 3.5+ years. The industry average for IT outsourcing relationships is 1.8 years. Partnerships lasting 3+ years deliver 25-30% more value than short-term engagements [13] because the team accumulates domain knowledge that compounds over every sprint.

By year 2, a Performance Scrum team understands the client's business as well as an internal team - and ships faster because they have the methodology discipline to maintain velocity.

Complex digital projects live in under 4 months. The WeberHaus digitization (100% process digitization with offline capability), the Lekkerland SAP integration (S/4HANA migration platform), and the Siemens unified UI library (Angular, Storybook, Figma) all reached production within 16 weeks.

The average time-to-market for a new software product is 6-9 months [14]. Performance Scrum consistently cuts that timeline by 40-50%.

These results are not exceptional individual outcomes. They are the predictable output of a methodology that enforces delivery discipline, measures everything, and improves every sprint.

The team of 50+ engineers across 4 countries shares the same methodology, the same tooling, and the same quality standards - so the results are replicable regardless of which specific engineers are assigned to a project.

How to Evaluate Whether Your Current Methodology Works

If your team claims to be agile but cannot answer these questions with specific numbers, the methodology is not working:

What was your average sprint velocity over the last 6 sprints? If the number is not stable within +/- 15%, the team is not estimating or delivering predictably.

How many production deployments happened in the last 30 days? If the answer is fewer than 2, the team is not delivering in 2-week cycles - they are accumulating work.

What was the last process change from a retrospective, and did it improve a measurable metric? If retrospectives produce discussion but not trackable improvements, they are a ritual without value.

Can a non-technical stakeholder read your last 5 sprint deliverables and understand the business value? If work packages are described in technical jargon that stakeholders cannot evaluate, the alignment between engineering and business is broken.
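The first two questions are numeric and easy to check automatically. The sketch below reads "stable within +/- 15%" as every sprint falling within 15% of the average velocity, which is one reasonable interpretation rather than a definition from the article; the function names and sample velocities are illustrative.

```python
from statistics import mean


def velocity_is_stable(velocities: list[float], tolerance: float = 0.15) -> bool:
    """True if every sprint is within +/- tolerance of the average velocity."""
    if not velocities:
        raise ValueError("need at least one sprint velocity")
    average = mean(velocities)
    return all(abs(v - average) <= tolerance * average for v in velocities)


def delivers_every_two_weeks(deployments_last_30_days: int) -> bool:
    """True if at least 2 production deployments happened in the last 30 days."""
    return deployments_last_30_days >= 2


last_six_sprints = [30, 32, 28, 31, 29, 30]
print(velocity_is_stable(last_six_sprints), delivers_every_two_weeks(2))
```

The remaining two questions, about retrospective outcomes and stakeholder readability, need human judgment and are not modeled here.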

Performance Scrum was designed to answer all four questions affirmatively, every sprint, for every project. That is what "guaranteed delivery every 14 days" means in practice - not a marketing claim, but a methodology commitment backed by 100+ projects and a 98% retention rate that proves it works.

For a deeper look at how custom software projects succeed or fail, read our pillar guide on building custom software that does not fail.

If you want to see Performance Scrum applied to your project, explore our custom solutions approach or book an expert call to discuss your delivery challenges with an engineer who runs sprints - not a consultant who sells frameworks.

References

- [1] PMI Pulse of the Profession (2024). pmi.org

- [2] Standish Group CHAOS Report (2023). standishgroup.com

- [3] PMI Pulse of the Profession (2024). pmi.org

- [4] Scrum Alliance (2023). scrumalliance.org

- [5] Scrum Alliance / Mountain Goat Software (2023). mountaingoatsoftware.com

- [6] Capgemini World Quality Report (2024). capgemini.com

- [7] Haystack Analytics (2023). usehaystack.io

- [8] Scrum.org (2023). "Projects using 2-week sprint cycles deliver 40% more features..." scrum.org

- [9] PMI Pulse of the Profession (2024). pmi.org

- [10] ThoughtWorks / Martin Fowler (2023). thoughtworks.com

- [11] McKinsey (2023). "70% of digital transformations fail to reach their stated goal..." mckinsey.com

- [12] Deloitte Global Outsourcing Survey (2024). deloitte.com

- [13] Deloitte Global Outsourcing Survey (2024). deloitte.com

- [14] Accelerate / DORA (2024). dora.dev
