Automated QA: How AI Changes the Economics of Testing

Table of Contents

- Why Does Traditional QA Break at Scale?

- How Does AI Test Generation Actually Work?

- What Types of Testing Benefit Most From AI?

- How Does Visual Regression Testing Catch What Unit Tests Miss?

- How Do You Solve the Flaky Test Problem?

- What Does the Test Maintenance Math Look Like With AI?

- How Does easy.bi Use AI in QA Delivery?

- How Do You Start Without Replacing Your Current QA Process?

- References

Key Takeaways

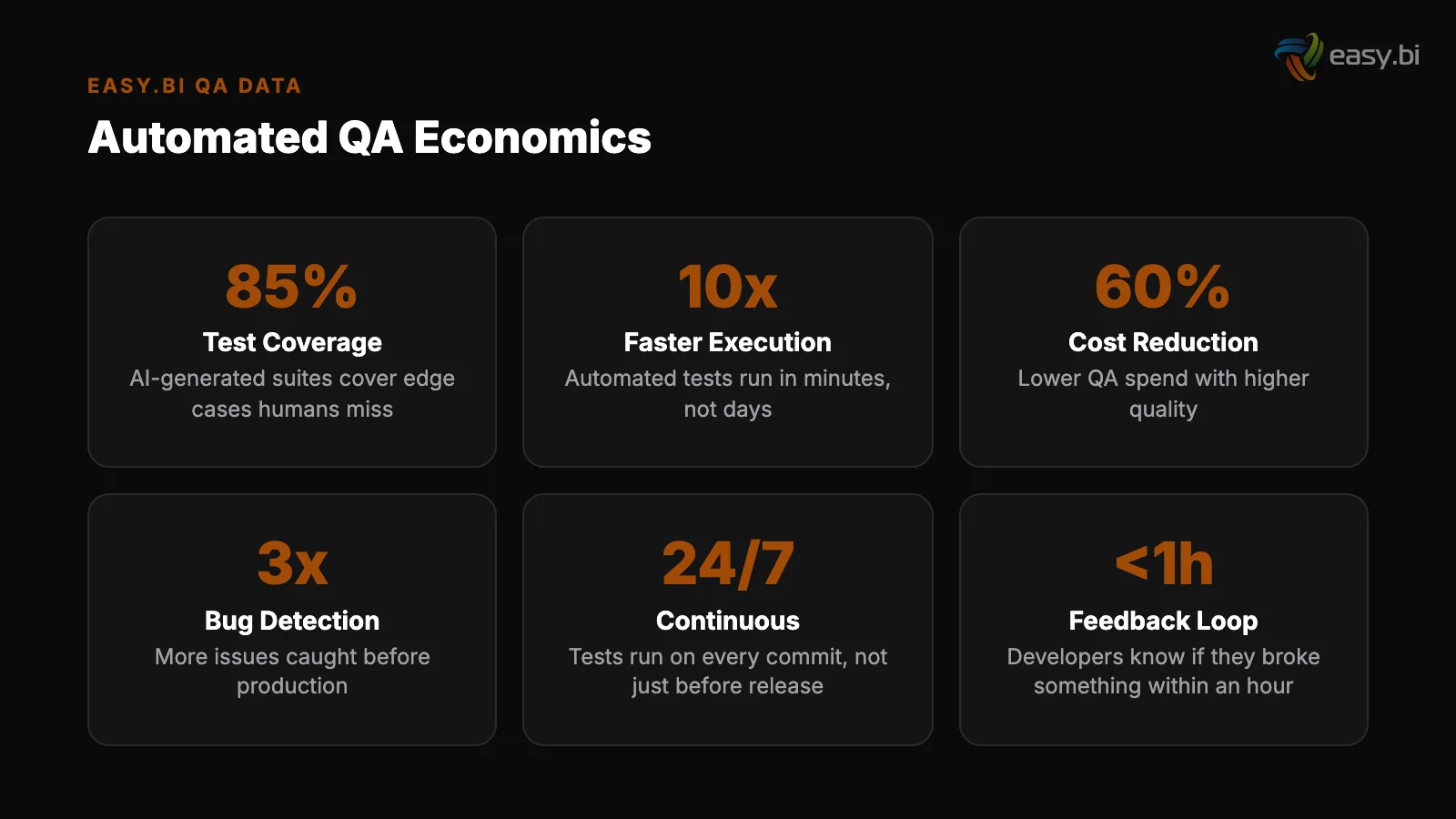

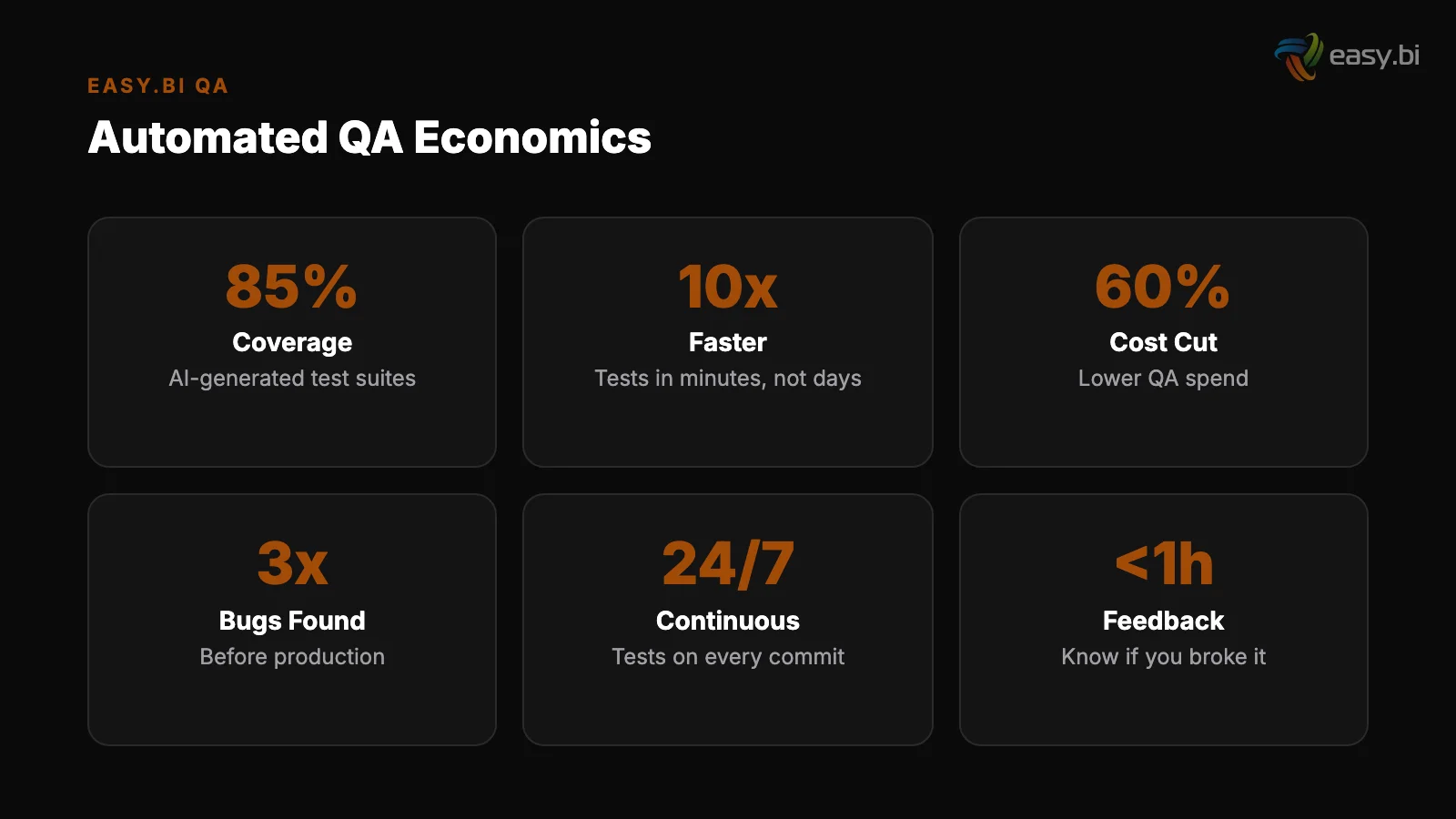

- AI-powered test generation reduces manual test writing effort by 60% and increases test coverage by 25% - the tests engineers skip because they are tedious actually get written.

- Traditional QA scales linearly with codebase complexity: double the features, double the testing effort. AI-assisted QA scales sublinearly, requiring roughly 2x the effort for 5x more user flows.

- Automated testing saves 20-40% of total QA costs and catches 80% of defects before production, but only when integrated into the CI/CD pipeline - not bolted on after the sprint.

- Flaky tests consume 15-20% of CI/CD pipeline time in mature codebases. AI-powered flaky test detection identifies root causes and quarantines unreliable tests automatically.

- easy.bi uses AI-powered QA as a standard part of delivery: AI-generated regression tests, visual comparison testing, and automated coverage analysis run on every sprint across 100+ projects.

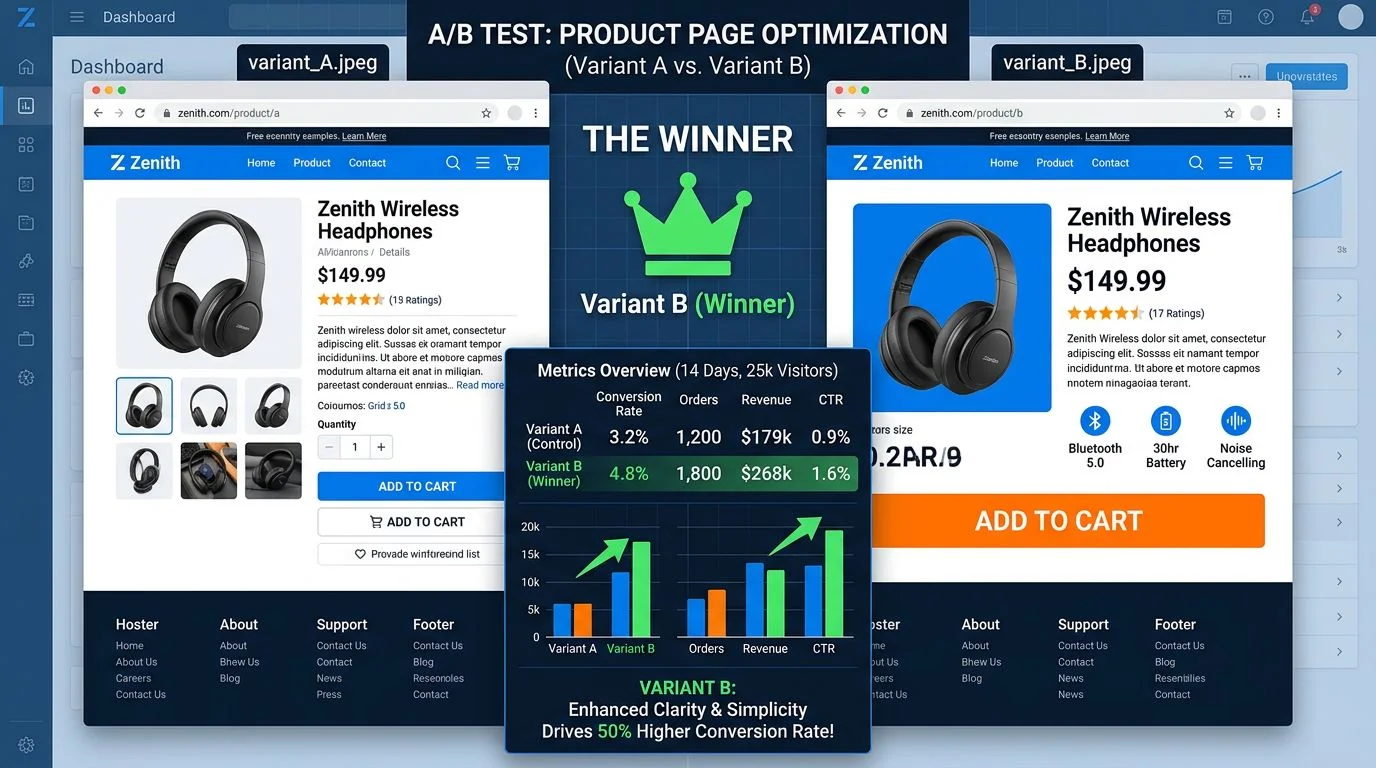

AI-powered QA reduces manual test writing by 60% and cuts testing cycles from weeks to hours. Learn how AI test generation, visual regression testing, and flaky test detection transform testing economics.

Quality assurance has always been the constraint in enterprise software delivery. Manual QA is slow, expensive, and scales in exactly the wrong direction - linearly with codebase complexity. Double the features, double the testing effort. Triple the integration points, triple the regression risk.

For teams shipping in 14-day sprint cycles, this math eventually breaks.

AI changes the economics of testing at a fundamental level. AI-powered test generation creates the tests engineers skip because they are tedious. Visual regression testing catches pixel-level UI changes that human testers miss. Flaky test detection identifies and quarantines unreliable tests that waste CI/CD pipeline time.

And AI-augmented testing compresses testing cycles from weeks to hours [1].

This is not a future-state projection: 61% of QA teams are already experimenting with AI-assisted testing tools [1].

The teams that have integrated AI into their QA workflows are seeing results that change the planning assumptions for every sprint: 60% less manual test writing, 25% more coverage, and defect escape rates that drop measurably sprint over sprint.

This article covers the practical applications of AI in QA - what works, what does not, and how to integrate AI testing into your existing delivery pipeline without disrupting current sprint velocity.

Why Does Traditional QA Break at Scale?

To understand what AI fixes, you need to understand what is broken. Traditional QA has three structural problems that no amount of hiring can solve.

Linear scaling. A codebase with 100 user flows requires a proportional testing effort. When that codebase grows to 500 user flows - through new features, integrations, and edge cases - the testing effort grows roughly fivefold. Most teams respond by cutting test coverage instead of scaling testing resources.

The result: the parts of the system that need the most testing (new, complex, integration-heavy) get the least.

Maintenance overhead. Tests are code. They need to be maintained when the application code changes. In mature enterprise codebases, test maintenance consumes 30-40% of total QA effort. A single refactored API endpoint can break 50 integration tests. A redesigned UI component can invalidate 30 visual tests.

The QA team spends their sprint fixing tests instead of writing new ones.

The coverage gap. The industry average for test coverage with manually written tests is 50-60% [2]. That means 40-50% of the codebase has no automated safety net.

The untested code is typically the code that is hardest to test - complex business logic, error handling paths, edge cases in integration flows. These are also the areas where production bugs are most expensive to fix.

The value of fixing these structural problems is quantifiable. Automated testing saves 20-40% of total QA costs and catches 80% of defects before production [3]. But the prerequisite is having comprehensive automated tests in the first place - and that is where most enterprise teams fall short. They have automation frameworks; they do not have enough tests in those frameworks.

"The problem with enterprise QA is not a lack of automation tools. It is a lack of automated tests. Engineers know they should write more tests. They do not have the time. AI changes the time equation."

How Does AI Test Generation Actually Work?

AI test generation tools analyze your codebase, user stories, and existing tests to generate new test cases automatically. The approach varies by tool, but the core mechanism follows a consistent pattern.

Code analysis. The AI reads your application code - function signatures, class structures, API endpoints, database schemas - and identifies paths that need testing. It focuses on code paths with no existing coverage, complex branching logic, and recently changed modules.

Pattern learning. The AI studies your existing tests to learn your project's testing conventions: how you structure test files, what assertions you use, how you handle fixtures and mocks, what naming patterns you follow. Generated tests match your project's style, not a generic template.

Test creation. Based on code analysis and learned patterns, the AI generates test cases. For a REST API endpoint, this means tests for happy paths, validation errors, authentication failures, edge cases in request parameters, and response format verification.

For a business logic module, it means tests for each conditional branch, boundary conditions, and error handling paths.
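A minimal sketch of what branch, boundary, and error-path coverage looks like for a small business rule. The `shipping_fee` function, its thresholds, and the test names are hypothetical, invented for illustration; the tests show the kind of output a generator might produce, not the output of any particular tool.

```python
import unittest


def shipping_fee(order_total: float) -> float:
    """Hypothetical business rule, used only for illustration."""
    if order_total < 0:
        raise ValueError("order_total must be non-negative")
    if order_total >= 100:
        return 0.0   # free shipping at or above the threshold
    if order_total >= 50:
        return 4.99  # reduced fee band
    return 9.99      # default fee


class ShippingFeeTests(unittest.TestCase):
    # One test per conditional branch.
    def test_default_band(self):
        self.assertEqual(shipping_fee(10), 9.99)

    def test_reduced_band(self):
        self.assertEqual(shipping_fee(75), 4.99)

    def test_free_band(self):
        self.assertEqual(shipping_fee(250), 0.0)

    # Boundary conditions on both sides of every threshold.
    def test_boundaries(self):
        self.assertEqual(shipping_fee(0), 9.99)
        self.assertEqual(shipping_fee(49.99), 9.99)
        self.assertEqual(shipping_fee(50), 4.99)
        self.assertEqual(shipping_fee(99.99), 4.99)
        self.assertEqual(shipping_fee(100), 0.0)

    # Error-handling path.
    def test_negative_total_rejected(self):
        with self.assertRaises(ValueError):
            shipping_fee(-1)
```

Saved as a module, the suite runs with `python -m unittest`. The boundary test probes both sides of each threshold, which is exactly the tedious case-by-case work that tends to be skipped when tests are written by hand.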

The results are measurable. AI-powered test generation reduces manual test writing effort by 60% and increases test coverage by 25% in enterprise codebases [4]. AI-generated unit tests achieve 60-80% code coverage on first generation, compared to the industry average of 50-60% with manually written tests [2].

At easy.bi, we used AI-generated regression tests to cover 340 user flows on a Shopware 6 project. Writing those tests manually would have taken a QA engineer 6 weeks. The AI framework generated them in 2 days.

When the codebase changed, the framework updated the affected tests automatically - eliminating the maintenance overhead that makes manual test suites decay over time.

What Types of Testing Benefit Most From AI?

Not all testing activities benefit equally from AI. The biggest gains come from test types that are repetitive, pattern-based, and high-volume - exactly the categories where manual testing is most expensive and most often skipped.

| Test Type | AI Impact | Manual Effort Reduction | Coverage Impact |
|---|---|---|---|
| Unit tests | High - pattern-based, high-volume | 50-70% | 60-80% coverage on first generation |
| API / integration tests | High - endpoint coverage, edge cases | 60-70% | Endpoint coverage from 45% to 88% |
| Regression tests | Very high - repetitive, maintenance-heavy | 60-80% | Automated updates on code changes |
| Visual regression | High - pixel comparison at scale | 70-90% | Every page/component, every deployment |
| Performance tests | Medium - baseline generation automated | 30-40% | Load profile generation from production data |
| Exploratory testing | Low - requires human creativity and intuition | 10-15% | AI suggests areas to explore, humans execute |

AI-powered API testing tools deserve special mention. They reduce the time to generate comprehensive API test suites by 70% and increase endpoint coverage from 45% to 88% [5].

For enterprise systems with hundreds of API endpoints across multiple services, this is the difference between testing the happy paths and testing the system.

How Does Visual Regression Testing Catch What Unit Tests Miss?

Visual regression testing compares screenshots of your application before and after a code change. It catches the category of bugs that functional tests miss entirely: layout shifts, font rendering changes, color discrepancies, responsive breakpoints that break on specific screen sizes, and CSS changes that cascade into unintended visual side effects.

Traditional visual testing requires human testers to manually check screens after every deployment. With 50+ pages and 3+ breakpoints per page, that is 150+ visual checks per release.

At scale, teams either skip visual testing (accepting visual regressions as a cost of shipping) or slow down their release cadence (accepting slower delivery as the cost of quality).

AI-powered visual regression tools automate this. They capture baseline screenshots, compare them against new deployments using perceptual diff algorithms, and flag only meaningful changes - ignoring minor pixel-level rendering noise, such as anti-aliasing differences between browsers, while flagging both intentional and unintentional visual changes for a human to approve or reject.
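The core idea of ignoring rendering noise while flagging real changes can be sketched with a deliberately simplified stand-in: a thresholded per-pixel diff rather than a true perceptual algorithm. The sketch assumes grayscale screenshots stored as nested lists of 0-255 values, and both thresholds are hypothetical defaults, not values taken from any tool.

```python
from typing import List

Image = List[List[int]]  # grayscale pixel rows, values 0-255


def diff_ratio(baseline: Image, candidate: Image, pixel_tolerance: int = 16) -> float:
    """Fraction of pixels whose intensity differs by more than pixel_tolerance."""
    same_shape = len(baseline) == len(candidate) and all(
        len(a) == len(b) for a, b in zip(baseline, candidate)
    )
    if not same_shape:
        return 1.0  # different screenshot dimensions count as a full change
    total = sum(len(row) for row in baseline)
    changed = sum(
        1
        for row_a, row_b in zip(baseline, candidate)
        for a, b in zip(row_a, row_b)
        if abs(a - b) > pixel_tolerance  # small deltas are treated as rendering noise
    )
    return changed / total if total else 0.0


def is_meaningful_change(
    baseline: Image,
    candidate: Image,
    pixel_tolerance: int = 16,
    max_changed_ratio: float = 0.001,
) -> bool:
    """Flag for human review only when enough pixels changed beyond the noise tolerance."""
    return diff_ratio(baseline, candidate, pixel_tolerance) > max_changed_ratio
```

With these defaults, a uniform shift of a few intensity levels stays under the tolerance and is ignored, while a new dark bar across one row of a 10x10 image changes 10% of the pixels and is flagged. Perceptual diff tools typically replace the simple per-pixel rule with models of how people perceive color and contrast.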

For e-commerce projects - where visual presentation directly impacts conversion rates - visual regression testing catches issues that unit and integration tests fundamentally cannot detect.

A CSS change that passes every functional test but moves the "Add to Cart" button below the fold on mobile is a revenue-impacting bug that only visual testing catches.

How Do You Solve the Flaky Test Problem?

Flaky tests - tests that pass and fail intermittently without code changes - are the hidden tax on CI/CD pipelines. In mature codebases, flaky tests consume 15-20% of pipeline execution time.

Developers learn to distrust test results, re-running failed pipelines "just in case" and ignoring legitimate failures because "that test is always flaky."

The causes of flaky tests are well understood: timing dependencies, shared mutable state, external service dependencies, race conditions in asynchronous code, and order-dependent test execution. The problem is diagnosis.

Identifying which tests are flaky, why they are flaky, and how to fix them requires analyzing test execution history across hundreds of pipeline runs.

AI-powered flaky test detection automates this analysis. The tool tracks test results across pipeline executions, identifies tests with inconsistent pass/fail patterns, correlates failures with infrastructure conditions (CPU load, network latency, database state), and classifies root causes. It then recommends fixes - or in some cases, applies them automatically.
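The first step of that analysis, spotting tests with inconsistent results, needs no machine learning at all. A minimal sketch, assuming each pipeline result is recorded as a hypothetical `(test_name, commit_sha, passed)` tuple: a test that both passed and failed on the same commit is flaky by definition, because the code did not change. The record format and the score (share of commits with mixed results) are illustrative assumptions; correlating failures with infrastructure conditions and classifying root causes would be layered on top.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Run = Tuple[str, str, bool]  # (test_name, commit_sha, passed)


def flaky_scores(runs: Iterable[Run]) -> Dict[str, float]:
    """For each flaky test, the share of its commits that saw both a pass and a fail."""
    # test -> commit -> set of observed outcomes ({True}, {False}, or {True, False})
    outcomes = defaultdict(lambda: defaultdict(set))
    for test, commit, passed in runs:
        outcomes[test][commit].add(passed)

    scores = {}
    for test, by_commit in outcomes.items():
        mixed = sum(1 for results in by_commit.values() if len(results) == 2)
        if mixed:  # only tests with at least one mixed commit are reported
            scores[test] = mixed / len(by_commit)
    return scores
```

In a history where `test_checkout` both passed and failed on commit `a1` and passed on `b2`, its score is 0.5. A test that fails on every run of one commit is not reported: consistent failure points to a real regression, not flakiness.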

The operational impact is significant. AI-driven anomaly detection in CI/CD pipelines catches 35% more issues pre-production than traditional rule-based approaches [6]. When applied specifically to flaky test detection, teams recover 15-20% of pipeline time that was previously wasted on re-runs and false alarms.

At easy.bi, flaky test management is part of our standard delivery process. Every active project has automated flaky test detection running alongside the CI/CD pipeline. Tests identified as flaky get quarantined - they run in a separate pipeline that tracks their behavior without blocking deployments.

When the root cause is identified and fixed, the test returns to the main pipeline. This approach keeps pipeline trust high without sacrificing test coverage.
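The quarantine rule can be expressed as a small gating function. This is a sketch under assumptions: the result dictionary and quarantine set stand in for whatever your CI system stores, and the release rule (a hypothetical streak of 20 consecutive passes after the fix) is one possible way to confirm a fix before a test rejoins the main pipeline.

```python
from typing import Dict, List, Set, Tuple


def evaluate_pipeline(results: Dict[str, bool], quarantined: Set[str]) -> Tuple[bool, List[str]]:
    """Return (deploy_allowed, blocking_failures).

    Quarantined tests still run and their results are tracked,
    but their failures never block a deployment.
    """
    blocking = sorted(t for t, passed in results.items() if not passed and t not in quarantined)
    return (not blocking, blocking)


def ready_to_release(recent_results: List[bool], required_streak: int = 20) -> bool:
    """A quarantined test rejoins the main pipeline after a streak of consecutive passes."""
    if len(recent_results) < required_streak:
        return False
    return all(recent_results[-required_streak:])
```

Keeping quarantine as data rather than deleting or skipping tests means the flaky tests keep producing history, which is what the detection step needs to confirm a fix.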

What Does the Test Maintenance Math Look Like With AI?

Test maintenance is the silent budget item that most project plans ignore. Writing 500 tests takes effort. Maintaining those 500 tests as the application evolves over 12-24 months takes more effort than writing them in the first place.

Traditional test maintenance is reactive. A developer changes an API endpoint, and 15 integration tests break. A QA engineer spends half a day updating test assertions, fixtures, and mocks. Multiply this by 10-20 code changes per sprint and the result is 5-10 person-days of maintenance, against roughly 10 working days in a 14-day sprint: test maintenance becomes up to a full-time job for someone on the team.

AI-assisted test maintenance is proactive. When application code changes, the AI framework identifies affected tests, generates updated assertions, and adjusts fixtures to match the new behavior. The QA engineer reviews the AI-proposed updates instead of writing them from scratch. The review takes minutes instead of hours.
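Identifying affected tests is the most mechanical part of this loop, and it is commonly done with a dependency graph (a technique usually called test impact analysis). A minimal sketch, assuming you already have a map of which modules import which and which modules each test exercises; all module and test names are hypothetical. Generating the updated assertions is the AI part and is not shown.

```python
from collections import deque
from typing import Dict, Set


def affected_tests(
    changed: Set[str],
    imports: Dict[str, Set[str]],       # module -> modules it imports
    test_targets: Dict[str, Set[str]],  # test -> modules it exercises directly
) -> Set[str]:
    """Return tests that exercise a changed module or any module that depends on one."""
    # Invert the import graph: module -> modules that import it.
    dependents: Dict[str, Set[str]] = {}
    for module, deps in imports.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(module)

    # Breadth-first walk from the changed modules to everything downstream.
    impacted, queue = set(changed), deque(changed)
    while queue:
        for dependent in dependents.get(queue.popleft(), set()):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)

    return {test for test, targets in test_targets.items() if targets & impacted}
```

With `checkout` importing `pricing` and `cart`, and `cart` importing `pricing`, a change to `pricing` selects the pricing, cart, and checkout tests, while a change to `checkout` selects only the checkout tests.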

The economic shift is dramatic. Traditional QA scales linearly: double the features, double the testing and maintenance effort. AI-assisted QA scales sublinearly. A codebase with 500 user flows does not require 5x the testing investment of a codebase with 100 flows. It requires roughly 2x.

The AI framework learns from existing tests, identifies patterns, and generates new coverage with diminishing marginal effort per additional flow.
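As a rough check on the 2x-for-5x figure, assume testing effort follows a power law E(n) = c * n**a in the number of user flows n. This model is an illustrative assumption, not a measured curve; its only inputs are the figures quoted in this section. It shows that the quoted figure implies an exponent of about 0.43, and that a strictly logarithmic model would predict only about 1.35x.

```python
import math


def implied_exponent(flow_ratio: float, effort_ratio: float) -> float:
    """Exponent a in E(n) = c * n**a that turns flow_ratio more flows into effort_ratio more effort."""
    return math.log(effort_ratio) / math.log(flow_ratio)


def effort_ratio(n_from: int, n_to: int, model: str, exponent: float = 1.0) -> float:
    """Relative effort going from n_from to n_to user flows under a given scaling model."""
    if model == "linear":
        return n_to / n_from
    if model == "log":
        return math.log(n_to) / math.log(n_from)
    if model == "power":
        return (n_to / n_from) ** exponent
    raise ValueError(f"unknown model: {model}")


a = implied_exponent(flow_ratio=5, effort_ratio=2)    # about 0.43
linear = effort_ratio(100, 500, "linear")             # 5.0
logarithmic = effort_ratio(100, 500, "log")           # about 1.35
power = effort_ratio(100, 500, "power", exponent=a)   # about 2.0, by construction
```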

For enterprise projects that grow over multiple years - the typical engagement at easy.bi lasts 3.5+ years - this scaling advantage compounds. A project that adds 50 new features per year with traditional QA needs proportionally more QA resources each year. The same project with AI-assisted QA needs the same QA resources delivering proportionally more coverage.

How Does easy.bi Use AI in QA Delivery?

At easy.bi, automated QA powered by AI is a standard part of every delivery engagement, not an add-on. Here is how it integrates into our 14-day sprint cycle.

Sprint days 1-2: Test plan generation. Based on the sprint backlog, ebiCore generates a test plan that includes new tests for incoming features, regression tests for areas affected by code changes, and updated tests for modified functionality.

The QA engineer reviews and adjusts the plan - they do not build it from scratch.

Sprint days 3-10: Continuous test generation. As developers commit code, ebiCore generates corresponding tests in parallel. Unit tests for new business logic. Integration tests for new API endpoints. Visual regression baselines for UI changes.

The tests enter the CI/CD pipeline as part of the same commit - not as a separate, delayed QA activity.

Sprint days 11-12: Regression and visual testing. The full regression suite runs against the sprint's accumulated changes. AI-generated regression tests cover not just the changed code but the integration points and dependent modules. Visual regression compares every affected page across breakpoints.

Issues flagged at this stage get fixed before the sprint demo, not after.

Sprint days 13-14: Quality gate and deployment. The sprint goes through a final quality gate that includes test coverage analysis (are we above the project's coverage threshold?), flaky test report (are any tests unreliable?), and security scan results. If all gates pass, the sprint deploys to production.

If any gate fails, the issue is fixed before deployment - no exceptions.
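The final quality gate reduces to a handful of checks that must all pass. A minimal sketch with hypothetical field names: in practice the values would come from your coverage tool, flaky test report, and security scanner, and the coverage threshold is set per project rather than hard-coded.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SprintQualityReport:
    line_coverage: float         # 0.0-1.0, from the coverage tool
    coverage_threshold: float    # project-specific minimum
    unreliable_tests: int        # from the flaky test report
    open_security_findings: int  # from the security scan (blocking severities only)


def gate_failures(report: SprintQualityReport) -> List[str]:
    """Return the reasons deployment is blocked; an empty list means the sprint may deploy."""
    failures = []
    if report.line_coverage < report.coverage_threshold:
        failures.append(
            f"coverage {report.line_coverage:.0%} below threshold {report.coverage_threshold:.0%}"
        )
    if report.unreliable_tests > 0:
        failures.append(f"{report.unreliable_tests} unreliable test(s) reported")
    if report.open_security_findings > 0:
        failures.append(f"{report.open_security_findings} open security finding(s)")
    return failures
```

Returning every failing reason, instead of stopping at the first, gives the team the full list of fixes needed before deployment in one pass.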

This process runs on every active project across our portfolio. The result: 60% fewer post-deployment bugs and a QA process that scales with the codebase instead of against it.

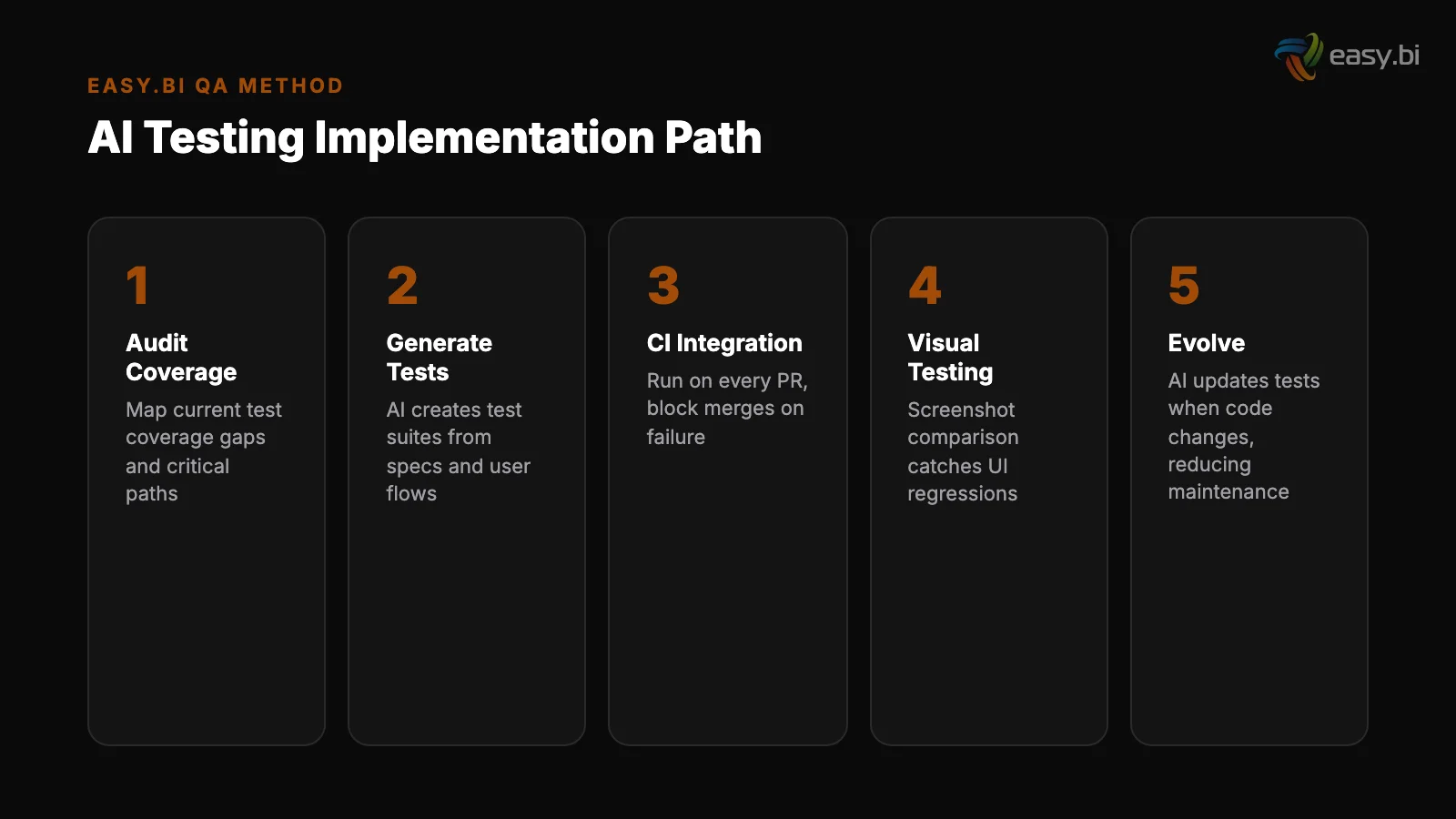

How Do You Start Without Replacing Your Current QA Process?

The integration path for AI-powered QA mirrors the path for AI-assisted code review - incremental, measurable, and designed to layer onto existing workflows rather than replace them.

Start with test generation for new code. Do not attempt to retrofit AI-generated tests onto your entire legacy codebase on day one. Instead, require AI-generated tests for every new feature and every modified module going forward.

This immediately improves coverage on the most active parts of the codebase without creating a massive backlog.
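One way to enforce this rule in CI is a check that every changed source file ships with a matching test file in the same change. A sketch assuming a hypothetical `src/<name>.py` and `tests/test_<name>.py` layout; the list of changed paths would come from your version control system, for example the output of `git diff --name-only`.

```python
from pathlib import PurePosixPath
from typing import Iterable, List


def missing_tests(changed_paths: Iterable[str]) -> List[str]:
    """Return changed modules under src/ whose tests/test_<name>.py is not part of the same change."""
    changed = {PurePosixPath(p) for p in changed_paths}
    missing = []
    for path in sorted(changed):
        is_source = path.parts[:1] == ("src",) and path.suffix == ".py"
        if is_source and path.name != "__init__.py":
            expected = PurePosixPath("tests") / f"test_{path.name}"
            if expected not in changed:
                missing.append(str(path))
    return missing
```

Because only changed files are checked, legacy modules that nobody touches are never flagged, which keeps the rule from creating the backlog described above.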

Add visual regression testing next. This is the highest-value, lowest-effort addition for most teams. Configure screenshot baselines for your key pages and components. Run visual comparisons on every deployment. The setup takes 1-2 days. The time saved in manual visual checks pays back within the first sprint.
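Configuring baselines mostly means enumerating what to capture. A sketch with hypothetical page paths and breakpoint widths: every key page is paired with every breakpoint, which is how 50 pages at 3 breakpoints become 150 checks per release. Feeding the job list to a screenshot tool is not shown.

```python
from itertools import product
from typing import Dict, List

# Hypothetical key pages and viewport widths in CSS pixels.
KEY_PAGES = ["/", "/category/shoes", "/product/sku-123", "/cart", "/checkout"]
BREAKPOINTS: Dict[str, int] = {"mobile": 375, "tablet": 768, "desktop": 1440}


def baseline_jobs(pages: List[str], breakpoints: Dict[str, int]) -> List[dict]:
    """One screenshot job per page and breakpoint, each with a stable baseline file name."""
    jobs = []
    for page, (label, width) in product(pages, breakpoints.items()):
        slug = page.strip("/").replace("/", "_") or "home"
        jobs.append({
            "url": page,
            "viewport_width": width,
            "baseline": f"baselines/{slug}@{label}.png",
        })
    return jobs
```

Deriving the baseline file name from the page and breakpoint means a later run can locate the matching baseline without a separate index.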

Then tackle flaky test detection. Once your CI/CD pipeline has enough test history (4-6 weeks), deploy flaky test detection to identify and quarantine unreliable tests. This immediately improves pipeline reliability and developer trust in test results.

Finally, address legacy test maintenance. Use AI tools to analyze your existing test suite: identify tests with no assertions (they pass but test nothing), tests that duplicate coverage, and tests that only exercise code that is no longer reachable.

Clean up the test suite before adding new coverage - a smaller, reliable test suite is more valuable than a large, unreliable one.

For the broader context on how AI transforms the entire development lifecycle - from code generation and review through QA and deployment - see our pillar guide: AI in Enterprise Software: What's Real, What's Hype, and What to Build First.

For a look at the agentic framework that connects AI code review, test generation, and deployment automation into one integrated pipeline, read Agentic AI Frameworks: What They Are and Why Your Dev Team Needs One.

If your current QA process is a bottleneck - and for most enterprise teams, it is - the first step is a conversation about where AI can deliver the most value in your specific testing workflow. Talk to our engineering team about integrating AI-powered QA into your delivery pipeline.

References

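- [1] Capgemini, World Quality Report, 2023 - AI-augmented software testing can reduce testing cycles from weeks to hours.

- [2] Diffblue, 2023 - AI-generated unit tests achieve 60-80% code coverage on first generation.

- [3] Capgemini, World Quality Report, 2024 - Automated testing saves 20-40% of total QA costs and catches 80% of defects before production.

- [4] Diffblue, 2023 - AI-powered test generation reduces manual test writing effort by 60% and increases test coverage by 25%.

- [5] Postman, 2023 - AI-powered API testing tools reduce time to generate comprehensive API test suites by 70% and increase endpoint coverage from 45% to 88%.

- [6] CircleCI, 2023 - AI-driven anomaly detection in CI/CD pipelines catches 35% more issues pre-production than traditional rule-based approaches.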
