AI in Enterprise Software: What's Real, What's Hype, and What to Build First

Table of Contents

- The AI Adoption Reality Check: Numbers vs. Noise

- Why Do Most Enterprise AI Projects Never Leave the Pilot Stage?

- LLM Integration Patterns That Work in Production

- Agentic AI: From Chatbot to Autonomous Workflow

- How Does AI Change the Way Teams Write Software?

- AI-Assisted Code Review, Testing, and Quality Assurance

- AI Governance: The Risk You Cannot Afford to Ignore

- Should You Build or Buy Your AI Capabilities?

- Where to Start: The First 90 Days of Enterprise AI

- How Do You Measure AI ROI Without Guessing?

- From Pilot to Production: What Separates the 22% That Succeed

- References

TL;DR

Enterprise AI adoption has crossed the tipping point: 55% of organizations now use AI in at least one business function. But the gap between adoption and production value remains wide - only 22% of AI projects survive from pilot to production.

Key Takeaways

- 55% of organizations have adopted AI in at least one function, but only 22% of AI projects make it from pilot to production - execution, not technology, is the bottleneck.

- Retrieval-Augmented Generation (RAG) is the most production-ready LLM pattern: 51% of enterprises deploying LLMs use it, and it reduces hallucinations by 40-60%.

- AI-assisted development tools cut code review time by 30-50% and boost junior developer productivity by 35%, but AI-generated code requires 40% more review time for security.

- 56% of enterprises lack formal AI governance - the EU AI Act makes this a compliance risk, not just a best practice.

- Start with high-value, low-risk use cases in your first 90 days. Companies that do report a median ROI of 3-5x within 14 months of deployment.

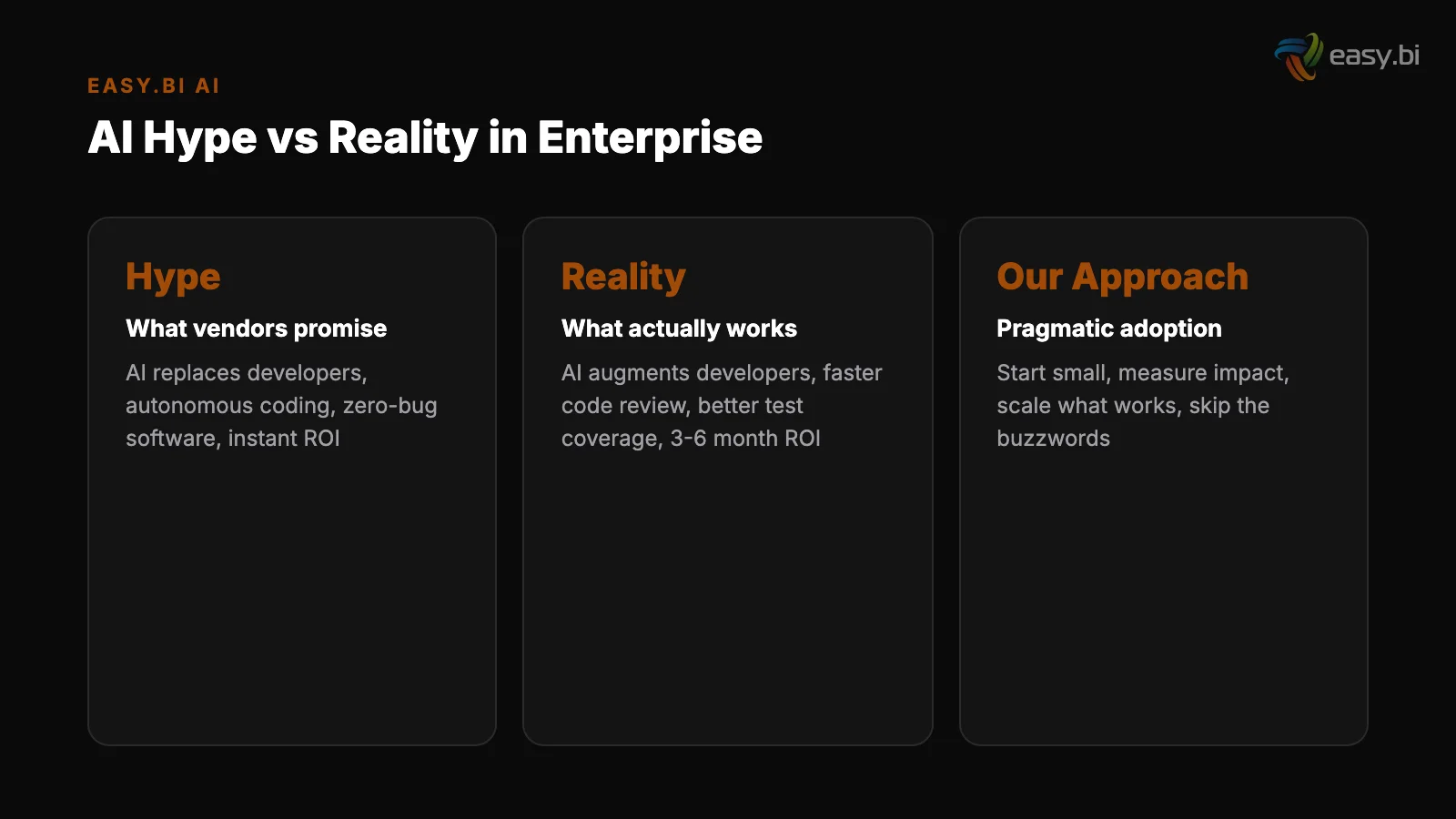

The companies winning with AI are not chasing the latest model. They are solving specific business problems with proven integration patterns and disciplined governance.

This article covers what actually works in enterprise AI today: the integration patterns delivering ROI, the governance frameworks protecting your business, and the practical first steps that separate the 22% who succeed from the 78% who stall.

If you are evaluating AI for your organization, this is the reality check before the budget request.

The AI Adoption Reality Check: Numbers vs. Noise

The headline numbers paint a picture of unstoppable momentum. In practice, the story is more nuanced - and more useful for decision-makers who need to separate signal from noise before committing budget.

McKinsey reports that 55% of organizations have adopted AI in at least one business function, up from 20% in 2017 [1]. One-third of organizations use generative AI regularly in at least one function [2]. Global corporate AI investment reached $189.6 billion in 2023, nearly 8x the 2017 level [3].

Gartner predicts that by 2026, 80% of enterprises will have used generative AI APIs or deployed generative AI-enabled applications [4].

These numbers are real. But they obscure a critical detail: most of this adoption is shallow. A team experimenting with ChatGPT for email drafting counts as "AI adoption." A production-grade system that processes customer orders using LLM-powered intent recognition is a different category entirely.

The more revealing metric is the pilot-to-production rate. Only 22% of AI and data science projects make it from pilot to production, up from 13% in 2020 [5]. That means 78% of AI initiatives generate cost without generating value.

For the DACH market specifically, the picture is even starker. Germany ranks 3rd in Europe for AI adoption, with 24% of companies using AI in production [6]. Among the Mittelstand - the mid-sized enterprises that form the backbone of the German economy - only 15% use AI in production [7].

The opportunity gap is enormous, but so is the execution risk.

| Metric | Current State | Source |
|---|---|---|
| Organizations using AI in at least one function | 55% | McKinsey, 2023 |
| Organizations using generative AI regularly | 33% | McKinsey, 2024 |
| AI projects reaching production | 22% | Gartner, 2023 |
| Enterprises predicted to have used GenAI APIs or applications by 2026 | 80% | Gartner, 2023 |
| German Mittelstand using AI in production | 15% | Bitkom, 2023 |
| Large companies reporting positive AI ROI | 92% | NewVantage, 2024 |

The takeaway: AI adoption is accelerating, but production deployment remains the exception, not the rule. The question is not whether to invest in AI. It is how to be in the 22% that reach production.

Why Do Most Enterprise AI Projects Never Leave the Pilot Stage?

The 78% failure rate from pilot to production is not caused by bad models or insufficient compute power. It is caused by organizational and architectural failures that repeat across industries, company sizes, and use cases.

Data quality is the first wall. 67% of enterprises cite data quality as the number-one barrier to AI adoption. Organizations spend 45% of their AI project time on data preparation alone [8].

Your LLM can be state-of-the-art, but if it is trained on or retrieves from inconsistent, duplicated, or incomplete data, the outputs will be unreliable. Most enterprises underestimate the data engineering effort by 3-5x.

Change management outranks technology. BCG found that enterprises deploying AI at scale report change management - not technology - as the number-one success factor, cited by 62% of AI leaders [9].

The best model in the world delivers zero value if your operations team does not trust it, your compliance team blocks it, or your end users work around it.

The readiness gap is real. 86% of IT leaders expect AI to play a central role in their organizations within 2 years, but only 34% feel their organization is prepared [10].

This gap between ambition and readiness is where most projects die - somewhere between the executive presentation and the production deployment.

"Only 22% of AI projects make it from pilot to production. The 78% that fail almost never fail because of the model. They fail because of data quality, change management, and the gap between ambition and execution readiness."

The pattern is consistent: enterprises that succeed with AI treat it as an engineering and organizational challenge, not a technology procurement decision. They invest as much in data infrastructure and team enablement as they do in model selection.

LLM Integration Patterns That Work in Production

Three LLM integration patterns have emerged as production-ready for enterprise use. For a deep technical treatment of RAG, fine-tuning, and agentic workflows with architecture decisions and implementation timelines, see LLM Integration Patterns for Enterprise: RAG, Fine-Tuning, and Agents.

Each serves a different need, and choosing the wrong one is a common source of project failure. Understanding the trade-offs before you write a line of code saves months of rework.

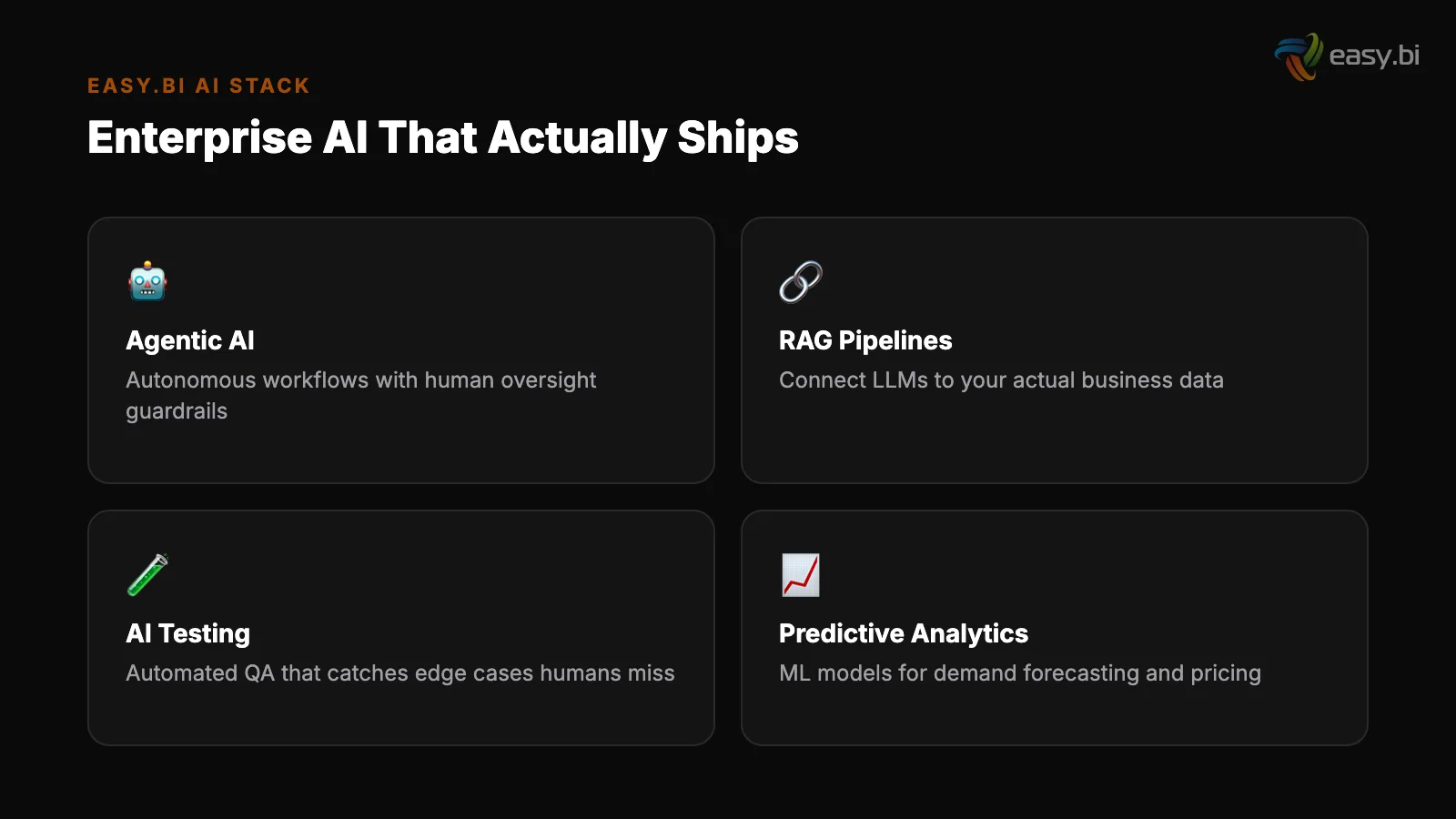

Retrieval-Augmented Generation (RAG) is the most widely deployed pattern. 51% of enterprises using LLMs have adopted RAG [11]. RAG connects a general-purpose LLM to your enterprise data through a retrieval layer - typically a vector database.

The model generates responses grounded in your actual documents, knowledge bases, and databases rather than relying solely on its training data.

RAG reduces hallucinations by 40-60% compared to using a base LLM alone [12]. It requires no model training - you keep your data in-house and query it at inference time.

This makes it the lowest-risk, fastest-to-deploy pattern for most enterprise use cases: internal knowledge assistants, customer support automation, document summarization, and code documentation.
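To make the retrieval step concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a production design: word-count vectors stand in for learned embeddings and a vector database, the three sample documents are invented, and `build_grounded_prompt` is a hypothetical helper name. The call to an actual LLM is left out on purpose.

```python
import math
import re
from collections import Counter

# Hypothetical sample corpus; a real system would index enterprise documents.
DOCUMENTS = {
    "vacation-policy": "Employees receive 30 vacation days per year.",
    "expense-policy": "Travel expenses must be submitted within 14 days.",
    "security-policy": "Laptops must use full disk encryption.",
}


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (stand-in for a learned embedding)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list[str]:
    """Return the ids of the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(
        DOCUMENTS,
        key=lambda doc_id: cosine(query, embed(DOCUMENTS[doc_id])),
        reverse=True,
    )
    return ranked[:k]


def build_grounded_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that tells the model to answer only from retrieved text."""
    context = "\n".join(
        f"[{doc_id}] {DOCUMENTS[doc_id]}" for doc_id in retrieve(question, k)
    )
    return (
        "Answer using only the context below. If the answer is not there, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

Calling `build_grounded_prompt("How many vacation days do employees get?")` produces a prompt whose context contains only the vacation policy entry. The grounding comes from that instruction plus the retrieved text, which is why retrieval quality matters at least as much as model choice.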

Fine-tuning takes a pre-trained LLM and further trains it on your domain-specific data. This improves task accuracy by 20-40% compared to prompt engineering alone [13]. Fine-tuning makes sense when you need consistent output formatting, domain-specific terminology, or behavior that prompt engineering cannot reliably achieve.

The trade-off: it requires curated training data, compute budget, and ongoing retraining as your domain evolves.

Prompt engineering with function calling is the lightest-weight approach. You design structured prompts that guide the LLM to call external APIs, query databases, or trigger workflows. No model training, no vector database.

This works well for straightforward classification, extraction, and routing tasks where the LLM acts as a natural-language interface to existing systems.
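A sketch of the receiving side of function calling might look like the following. It assumes the model has been prompted to reply with a JSON object of the form `{"name": ..., "arguments": {...}}`; that format, the two back-end functions, and the `dispatch` helper are all hypothetical and not tied to any specific provider's API.

```python
import json


# Hypothetical back-end functions the model is allowed to trigger.
def get_order_status(order_id: str) -> str:
    return f"Order {order_id} is in transit"


def route_ticket(category: str) -> str:
    queues = {"billing": "finance-queue", "outage": "ops-queue"}
    return queues.get(category, "general-queue")


# Allow-list: only functions registered here can be reached by model output.
TOOLS = {"get_order_status": get_order_status, "route_ticket": route_ticket}


def dispatch(model_output: str) -> str:
    """Validate a JSON tool call and execute it against the allow-list."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return "error: model output was not valid JSON"
    if not isinstance(call, dict):
        return "error: tool call must be a JSON object"
    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    try:
        return TOOLS[name](**args)
    except TypeError:
        # Raised for missing, unexpected, or non-mapping arguments.
        return f"error: bad arguments for {name}"
```

The design choice worth copying is the allow-list: the model proposes a call, but your code decides whether that call exists and whether its arguments are acceptable.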

| Pattern | Best For | Data Requirement | Time to Production | Hallucination Control |
|---|---|---|---|---|
| RAG | Knowledge retrieval, Q&A, search | Document corpus + vector DB | 4-8 weeks | High (40-60% reduction) |
| Fine-tuning | Domain-specific tasks, formatting | Curated training dataset | 8-16 weeks | Medium (model-dependent) |
| Prompt engineering + function calling | Classification, routing, simple extraction | API access only | 1-4 weeks | Low (no grounding) |

The average enterprise now uses 3.8 different LLM providers [14]. This multi-model approach is intentional - different models excel at different tasks, and vendor diversification reduces lock-in risk. Your architecture should support model routing from day one.
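Model routing can start as a small lookup table with a fallback. The sketch below shows one possible shape; the provider names, task types, and the `choose_model` function are placeholders invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Route:
    primary: str
    fallback: str


# Hypothetical provider names and task types, for illustration only.
ROUTES = {
    "summarization": Route(primary="provider-a-small", fallback="provider-b-small"),
    "code": Route(primary="provider-b-large", fallback="provider-a-large"),
}
DEFAULT_ROUTE = Route(primary="provider-a-large", fallback="provider-b-large")


def choose_model(task_type: str, unavailable: frozenset[str] = frozenset()) -> str:
    """Pick the primary model for a task, falling back if it is unavailable."""
    route = ROUTES.get(task_type, DEFAULT_ROUTE)
    for candidate in (route.primary, route.fallback):
        if candidate not in unavailable:
            return candidate
    raise RuntimeError(f"no available model for task type {task_type!r}")
```

Because callers ask for a task type rather than a vendor, swapping or adding a provider becomes a configuration change instead of a code change across every integration.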

Agentic AI: From Chatbot to Autonomous Workflow

Agentic AI represents the next evolution beyond chat-based interactions. For a technical breakdown of the frameworks and real use cases, see Agentic AI Frameworks: What They Are and Why Your Dev Team Needs One. Instead of answering questions, agentic systems autonomously plan, execute, and iterate on multi-step tasks.

They chain tool calls, make decisions, and adapt their approach based on intermediate results.

The adoption curve is early but accelerating. Agentic AI systems are in production at 8% of enterprises, with 42% actively experimenting [15]. Agentic AI frameworks like AutoGen, LangGraph, and CrewAI saw 10x growth in GitHub stars from Q1 2023 to Q1 2024 [16].

This signals strong developer interest that will translate into production deployments over the next 12-18 months.

At easy.bi, we built ebiCore - our proprietary agentic AI-based SaaS development framework running on enterprise high-availability architecture. ebiCore is not a chatbot wrapper. It is a production-grade framework that orchestrates AI agents across the software development lifecycle: from requirements analysis through code generation, testing, and deployment.

No competitor in the DACH nearshore space has an equivalent AI-powered development framework.

ebiCore accelerates innovation while reducing cost because it handles the repetitive, pattern-based work that consumes engineering hours - boilerplate code generation, test scaffolding, documentation, and code review triage. Engineers focus on architecture decisions, business logic, and the creative problem-solving that AI cannot do well.

The practical applications of agentic AI in enterprise software extend beyond development:

- Automated incident response: Agent detects anomaly, queries logs, identifies root cause, applies known fix, and escalates only if the fix fails.

- Intelligent document processing: Agent receives a document, classifies it, extracts structured data, validates against business rules, and routes it to the correct workflow.

- Multi-system orchestration: Agent coordinates actions across ERP, CRM, and warehouse systems to fulfill a complex business process without human intervention.

The key constraint with agentic AI is trust boundaries. Autonomous systems need guardrails: human-in-the-loop checkpoints for high-stakes decisions, audit trails for compliance, and fallback mechanisms when the agent encounters situations outside its training. Building these guardrails is more work than building the agent itself.
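The three guardrails named above can be sketched as a thin wrapper around each agent action. This is a simplified illustration, assuming a hypothetical set of high-stakes action names and caller-supplied `execute` and `approve` callbacks; a real agent framework would supply its own hooks for these.

```python
from typing import Callable

# Hypothetical risk classification: actions listed here need human sign-off.
HIGH_STAKES = {"restart_database", "rollback_release"}


def run_step(
    action: str,
    execute: Callable[[str], bool],
    approve: Callable[[str], bool],
    audit_log: list[dict],
) -> str:
    """Run one agent action behind a checkpoint and record the outcome."""
    if action in HIGH_STAKES and not approve(action):
        outcome = "blocked"    # human-in-the-loop checkpoint declined the action
    elif execute(action):
        outcome = "done"
    else:
        outcome = "escalated"  # fallback: hand over to a human when the fix fails
    audit_log.append({"action": action, "outcome": outcome})  # audit trail entry
    return outcome
```

Every path through the function writes to the audit log, including blocked and escalated actions. The log is only useful for compliance if it also records what the agent was not allowed to do.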

How Does AI Change the Way Teams Write Software?

AI-assisted development has moved from experiment to standard practice.

If you are looking to add AI capabilities to an existing product without a full rewrite, see Building AI Features Into Existing Products (Without Starting Over). 72% of developers report using AI tools for coding in 2024, up from 44% in 2023 [17].

GitHub Copilot alone surpassed 1.8 million paid subscribers by February 2024 [18]. This is no longer a question of "should we adopt AI coding tools" - it is a question of how to adopt them effectively and safely.

The productivity numbers are compelling but come with caveats. Copilot-assisted developers complete tasks 55% faster and complete pull requests 55% faster [19]. AI pair programming improves junior developer productivity by 35%, compared to 21% for senior developers [20].

This narrowing of the experience gap means AI tools have the biggest impact on team members who need the most support.

Copilot usage in enterprises increases developer satisfaction by 75%, with developers reporting they can focus on more fulfilling work [21]. This is not just a productivity metric - in an industry with 13.2% average turnover, developer satisfaction directly impacts retention and hiring costs.

But speed without quality is technical debt accumulation. AI-generated code requires 40% more review time than human-written code due to subtle logic errors and security vulnerabilities [22]. Code generation tools produce secure code only 67% of the time [23]. This means AI-generated code needs stricter review processes, not looser ones.

The teams that benefit most from AI-assisted development are those that already have strong engineering practices: code review culture, automated testing, CI/CD pipelines, and clear coding standards. AI amplifies existing quality - it does not create it from nothing.

AI-Assisted Code Review, Testing, and Quality Assurance

Beyond code generation, AI is transforming the quality assurance side of software development. For a focused guide on AI code review, see AI-Assisted Code Review: The 60% Time Savings Nobody Expected. For AI-powered testing economics, see Automated QA: How AI Changes the Economics of Testing.

These applications are often higher-value than code generation because they catch problems before they reach production - where fixing a bug costs 6x more than during implementation.

AI-assisted code review reduces review time by 30-50% and catches 15-20% more bugs than human-only reviews [24]. This does not replace human reviewers.

It augments them by handling the pattern-matching work - identifying common vulnerabilities, style inconsistencies, and performance anti-patterns - so human reviewers can focus on logic, architecture, and business correctness.

AI-powered test generation reduces manual test writing effort by 60% and increases test coverage by 25% in enterprise codebases [25]. AI-generated unit tests achieve 60-80% code coverage on first generation, compared to the industry average of 50-60% with manually written tests.

For teams struggling with test coverage on legacy codebases, this is a high-value starting point.

AI-augmented testing cycles compress testing from weeks to hours. 61% of QA teams are experimenting with AI-assisted testing tools [26]. AI-powered API testing reduces time to generate comprehensive test suites by 70% and increases endpoint coverage from 45% to 88%.

AI-driven anomaly detection in CI/CD pipelines catches 35% more issues pre-production than traditional rule-based approaches [27]. Combined with AI-powered log analysis that reduces troubleshooting time by 60%, the entire feedback loop from code commit to production issue resolution gets faster.

"AI does not replace your quality process. It accelerates it. The teams seeing the biggest gains already had strong engineering foundations - code review culture, automated testing, CI/CD. AI amplifies what is already working."

At easy.bi, ebiCore integrates AI-assisted quality assurance into every sprint. Automated code review triage, AI-generated test scaffolding, and intelligent anomaly detection are part of the development workflow - not separate tools bolted on after the fact. This is how we maintain quality while delivering results every 14 days.

AI Governance: The Risk You Cannot Afford to Ignore

56% of enterprises lack a formal AI governance framework [28]. For a practical mid-market governance roadmap, see AI Governance for Mid-Market: You Need Less Than You Think.

With the EU AI Act now requiring high-risk AI systems to meet strict transparency and safety requirements by 2025-2026 [29], this gap is shifting from a best-practice issue to a compliance liability.

The risks are not theoretical. Shadow AI - employees using AI tools without IT approval - is present in 60% of enterprises [30]. Every time someone pastes proprietary data into an unvetted LLM, they create a data leak.

Every time an unreviewed AI output reaches a customer, they create a liability exposure. AI model bias incidents increased 26% in 2023, and only 35% of companies have bias testing in their deployment pipeline [31].

The upside of governance is measurable. AI governance frameworks reduce compliance incidents by 45% and increase stakeholder trust by 33% in regulated industries [32]. Responsible AI practices are not overhead - they are risk management with quantifiable returns.

A practical AI governance framework covers 5 areas:

- Data governance: What data can be used to train or query AI systems? What data must never leave your infrastructure? Define this before deploying any model.

- Model evaluation: How do you measure accuracy, bias, and hallucination rates for each use case? Establish baselines and monitoring before production deployment.

- Access control: Who can deploy AI models? Who can approve AI-generated outputs for customer-facing use? Define roles and approval workflows.

- Audit trail: Every AI decision that impacts customers, finances, or operations needs a log. The EU AI Act requires this for high-risk systems - build it into your architecture from day one.

- Incident response: What happens when an AI system produces a harmful output? Define escalation paths, rollback procedures, and communication templates.
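For the audit trail item, a log entry can be as simple as one JSON line per AI decision. The sketch below is one possible shape, not a compliance template: the field names are assumptions, and storing SHA-256 digests instead of raw prompts is a design choice that may or may not satisfy your own retention requirements.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional


def _digest(text: str) -> str:
    """SHA-256 hex digest, so the log does not duplicate sensitive text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(
    use_case: str,
    model: str,
    prompt: str,
    output: str,
    approved_by: Optional[str] = None,
) -> str:
    """Build one JSON line describing an AI decision (hypothetical schema)."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "use_case": use_case,
        "model": model,
        "prompt_sha256": _digest(prompt),
        "output_sha256": _digest(output),
        "approved_by": approved_by,  # None means no human reviewed the output
    }
    return json.dumps(record, sort_keys=True)
```

Appending these lines to write-once storage gives you a record of which model produced which output for which use case, and whether a person signed off on it.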

For DACH enterprises, governance is not optional. The EU AI Act applies directly, and German data protection standards (GDPR enforcement is among the strictest in Europe) add another layer. Building governance into your AI architecture from the start costs a fraction of retrofitting it after a compliance incident.

Should You Build or Buy Your AI Capabilities?

Every enterprise faces this question as AI moves from experiment to production. For a detailed breakdown of the cost comparisons and vendor lock-in strategies, see The AI Build vs. Buy Decision: Custom Models vs. API Wrappers.

The answer depends on your competitive position, your data, and your team - not on what your competitors are doing or what a vendor demo showed you last week.

Build when AI is your competitive advantage. If AI directly differentiates your product or service - personalization engines, pricing algorithms, domain-specific automation - you need to own the capability. This means in-house data science teams, proprietary training data, and custom model development.

The investment is significant: the average AI budget is $3.5M for mid-market companies [33], and the talent gap means AI/ML roles take an average of 68 days to fill [34].

Buy when AI is a utility. Code completion, email drafting, meeting summarization, generic customer support - these are commodity AI applications. Buy them. LLM inference costs dropped 10x between March 2023 and March 2024 [35].

The economics of running your own model for generic tasks rarely justify the infrastructure and talent investment.

Partner when you need production-grade AI but lack the team to build it. This is where most mid-market enterprises land. You have domain expertise and data, but you do not have 10 ML engineers and a dedicated MLOps team.

A development partner with AI framework expertise - like easy.bi with ebiCore - bridges that gap. You get production-grade AI capabilities delivered in sprint cycles, without the 12-18 month ramp-up of building an internal AI team.

The critical mistake is treating this as a binary choice. The most effective AI strategy combines all three: build what differentiates you, buy commodities, and find a partner for the production engineering that connects them.

For custom AI solutions built on enterprise architecture, the partner model delivers faster time-to-value than building in-house. You ship production AI in weeks, not quarters. And because the partner has done it before, you avoid the 78% of pilot-to-production failures that come from learning on the job.

Where to Start: The First 90 Days of Enterprise AI

The biggest risk in enterprise AI is not starting with the wrong technology. It is starting with the wrong scope. Companies that try to boil the ocean with AI - enterprise-wide transformation, multi-system integration, novel research-grade applications - end up in the 78% that never reach production.

The companies that succeed start small, prove value, and expand systematically. Here is a practical 90-day framework:

Days 1-30: Identify and scope. Find 3-5 candidate use cases where AI could reduce manual effort by 50% or more.

Prioritize based on data readiness (is clean, structured data available?), business impact (will leadership notice the results?), and risk tolerance (what happens if the AI is wrong 10% of the time?). Pick one. Not three. One.
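One way to force the "pick one" decision is a simple weighted score across the three criteria. The sketch below assumes 1-5 scores and a 40/40/20 weighting; the weights, the candidate use cases, and their scores are all invented for illustration and should be replaced with your own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    data_readiness: int   # 1 (no usable data) to 5 (clean, structured data)
    business_impact: int  # 1 (nobody notices) to 5 (leadership notices)
    risk_tolerance: int   # 1 (errors are costly) to 5 (errors are cheap)


# Assumed weights; adjust to your organization's priorities.
WEIGHTS = {"data_readiness": 0.4, "business_impact": 0.4, "risk_tolerance": 0.2}


def score(use_case: UseCase) -> float:
    """Weighted sum of the three prioritization criteria."""
    return sum(weight * getattr(use_case, field) for field, weight in WEIGHTS.items())


def pick_one(candidates: list[UseCase]) -> UseCase:
    """Return the single highest-scoring candidate."""
    return max(candidates, key=score)


# Hypothetical candidates with made-up scores.
CANDIDATES = [
    UseCase("Internal knowledge search", data_readiness=4, business_impact=3, risk_tolerance=5),
    UseCase("Invoice automation", data_readiness=3, business_impact=5, risk_tolerance=2),
    UseCase("Pricing engine", data_readiness=2, business_impact=5, risk_tolerance=1),
]
```

With these example numbers, `pick_one(CANDIDATES)` selects the internal knowledge search: it has less headline impact than the pricing engine, but the data is ready and mistakes are cheap.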

Days 31-60: Build and validate. Deploy a minimum viable AI solution for your chosen use case. If you are starting with internal knowledge retrieval, stand up a RAG pipeline against your documentation. If you are starting with development productivity, roll out AI coding tools to a pilot team.

Measure everything: accuracy, time saved, user adoption, error rates.

Days 61-90: Measure and decide. Compare results against your baseline. If the pilot shows measurable value - and the numbers say 92% of large companies report positive AI ROI [36] - build the business case for production deployment.

Define the governance framework, the scaling plan, and the budget for phase two.

The first use case matters less than you think. What matters is that you build the organizational muscle: the data pipeline, the evaluation framework, the governance structure, and the team confidence to ship AI into production. That muscle transfers to every subsequent use case.

For a deeper look at how AI is reshaping the enterprise software landscape and the strategic implications for your technology stack, see our analysis: How AI Is Reshaping Enterprise Software.

How Do You Measure AI ROI Without Guessing?

AI ROI measurement is where most organizations get sloppy. They measure "time saved" without defining the baseline. They count "AI-generated outputs" without measuring whether those outputs were actually used. Vague metrics lead to vague results, which lead to budget cuts.

92% of large companies report positive AI ROI, with a median return of 3-5x within 14 months of deployment [36]. But this number comes from companies that measured properly. Here is how they do it:

Efficiency metrics: Measure the actual reduction in time or cost for a specific task. AI-assisted code review reduces review time by 30-50% [24]. AI test generation reduces manual effort by 60% [25]. These numbers are measurable at the team level within 2-4 weeks of deployment.

Track hours saved per sprint, not percentage improvements in a slide deck.

Quality metrics: Measure defect rates before and after AI deployment. AI-assisted code review catches 15-20% more bugs [24]. AI anomaly detection catches 35% more pre-production issues [27].

If your production incident count drops, that is real money - the cost of fixing a bug in production is 6x higher than during implementation.

Revenue metrics: 79% of AI high performers report that AI has increased revenue in areas where it is deployed [37]. For customer-facing AI (personalization, recommendation engines, intelligent search), measure conversion rates and revenue per session before and after deployment.

Adoption metrics: If nobody uses the AI tool, it has zero ROI regardless of its technical capabilities. Track daily active users, feature utilization rates, and user satisfaction scores. Copilot usage increases developer satisfaction by 75% [21] - that is a leading indicator of sustained adoption and retention.

The formula is straightforward: define the baseline before you deploy, measure the same metrics after deployment, and attribute the delta. Avoid measuring what the AI could do in theory. Measure what it actually did in production.
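As a worked example of baseline, measurement, and delta, the sketch below turns hours saved per sprint into a return multiple (value delivered divided by total cost). The function names and all input numbers are hypothetical; plug in your own measured values.

```python
def hours_saved_per_sprint(baseline_hours: float, measured_hours: float) -> float:
    """Delta between the pre-deployment baseline and the measured value."""
    return baseline_hours - measured_hours


def roi_multiple(
    baseline_hours: float,
    measured_hours: float,
    hourly_cost: float,
    sprints: int,
    total_ai_cost: float,
) -> float:
    """Value of the time saved over the period, divided by what the AI cost."""
    if total_ai_cost <= 0:
        raise ValueError("total_ai_cost must be positive")
    saved = hours_saved_per_sprint(baseline_hours, measured_hours)
    return saved * hourly_cost * sprints / total_ai_cost


# Hypothetical inputs: review effort drops from 120 to 80 hours per sprint,
# at a loaded cost of 90 per hour, over 26 sprints, for 30,000 in total AI cost.
example = roi_multiple(
    baseline_hours=120,
    measured_hours=80,
    hourly_cost=90,
    sprints=26,
    total_ai_cost=30_000,
)
```

With these inputs the saved time is worth 40 × 90 × 26 = 93,600, so the multiple is 93,600 / 30,000 = 3.12. The calculation only holds if the 120-hour baseline was measured before deployment rather than estimated afterwards.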

From Pilot to Production: What Separates the 22% That Succeed

The 22% of AI projects that reach production share patterns that are not about technology selection. They are about execution discipline, organizational alignment, and architectural decisions made in the first 30 days.

They start with data, not models. Successful teams spend the first 2-4 weeks on data quality assessment, not model evaluation. They know that a mediocre model on clean data outperforms a state-of-the-art model on dirty data every time.

Organizations with mature MLOps practices deploy models 5x faster and with 30% fewer production incidents [38].

They build governance first. Enterprises with AI Centers of Excellence are 3x more likely to scale AI from pilot to production [39]. The governance framework - data policies, model evaluation criteria, access controls, audit trails - comes before the first model deployment, not after the first incident.

They use proven integration patterns. RAG for knowledge retrieval. Fine-tuning for domain-specific tasks. Function calling for system integration. They do not invent new architectures when established patterns work.

They measure relentlessly. Every AI deployment has a baseline metric, a target metric, and a measurement cadence. If the numbers do not improve, they pivot or stop - they do not throw more compute at a failing approach.

They invest in people. Change management is the number-one success factor [9]. Training, documentation, feedback loops, and executive sponsorship are as much a part of the deployment plan as the technical architecture.

At easy.bi, we have delivered 100+ projects with a 98% client retention rate across enterprise clients including Siemens, REWE Group, and Fressnapf. Our ebiCore framework embeds AI capabilities directly into the development process - from code generation and testing through deployment and monitoring.

This is not AI as a bolt-on feature. It is AI as an integrated part of how production software gets built and delivered in 14-day sprint cycles.

The gap between AI hype and AI value is not closing on its own. It closes when organizations combine proven technology patterns with disciplined execution. Start with one use case, build the muscle, and scale from there.

References

- [1] McKinsey, "The State of AI," 2023 - 55% of organizations have adopted AI in at l

- [2] McKinsey, "The State of AI," 2024 - One-third of organizations use generative AI

- [3] Stanford HAI, "AI Index Report," 2024 - Global corporate AI investment reached $

- [4] Gartner, 2023 - By 2026, 80% of enterprises will have used generative AI APIs or

- [5] Gartner, 2023 - Only 22% of data science and AI projects make it from pilot to p

- [6] Bitkom, 2023 - Germany ranks 3rd in Europe for AI adoption, with 24% of companie

- [7] Bitkom, 2023 - German Mittelstand: only 15% use AI in production.

- [8] Deloitte, "State of AI," 2023 - Data quality is the #1 barrier, cited by 67% of

- [9] BCG, 2023 - Change management (not technology) is the #1 success factor for AI a

- [10] Cisco, "AI Readiness Index," 2023 - 86% of IT leaders expect AI to play a centra

- [11] Databricks, 2024 - RAG is used by 51% of enterprises deploying LLMs.

- [12] Vectara, "Hallucination Leaderboard," 2024 - RAG reduces hallucinations by 40-60

- [13] Databricks, 2023 - Fine-tuning improves task accuracy by 20-40% vs.

- [14] Menlo Ventures, 2024 - The average enterprise uses 3.8 different LLM providers.

- [15] Sequoia Capital, 2024 - Agentic AI in production at 8% of enterprises; 42% exper

- [16] GitHub, 2024 - Agentic AI frameworks saw 10x growth in GitHub stars from Q1 2023

- [17] Stack Overflow, "Developer Survey," 2024 - 72% of developers report using AI too

- [18] GitHub, 2024 - GitHub Copilot surpassed 1.

- [19] GitHub / Microsoft Research, 2023 - Copilot users complete tasks 55% faster and

- [20] Microsoft Research, 2023 - AI pair programming boosts junior dev productivity by

- [21] GitHub, 2023 - Copilot usage in enterprises increases developer satisfaction by

- [22] Stanford University, 2023 - AI-generated code requires 40% more review time due

- [23] Stanford University, 2023 - Code generation tools produce secure code only 67% o

- [24] Google Research, 2023 - AI-assisted code review reduces review time by 30-50% an

- [25] Diffblue, 2023 - AI test generation reduces manual effort by 60% and increases c

- [26] World Quality Report / Capgemini, 2023 - 61% of QA teams experimenting with AI-a

- [27] CircleCI, 2023 - AI-driven anomaly detection catches 35% more issues pre-product

- [28] Deloitte, 2023 - 56% of enterprises lack a formal AI governance framework.

- [29] European Commission, 2024 - EU AI Act requires high-risk AI systems to meet tran

- [30] Salesforce, 2023 - Shadow AI usage present in 60% of enterprises.

- [31] Stanford HAI, 2024 - AI model bias incidents increased 26% in 2023; only 35% hav

- [32] MIT Sloan, 2023 - AI governance frameworks reduce compliance incidents by 45% an

- [33] Deloitte, 2023 - Average AI budget is $3.

- [34] LinkedIn, 2023 - 2x more AI job postings than qualified candidates; average 68 d

- [35] a16z, 2024 - LLM inference costs dropped 10x between March 2023 and March 2024.

- [36] NewVantage Partners, 2024 - 92% of large companies report positive AI ROI; media

- [37] McKinsey, 2023 - 79% of AI high performers report revenue increases in areas whe

- [38] Algorithmia / DataRobot, 2023 - Mature MLOps practices: 5x faster model deployme

- [39] Deloitte, 2023 - Enterprises with AI Centers of Excellence are 3x more likely to
