Why Your MVP Takes 9 Months (And How to Ship in 8 Weeks)

Table of Contents

- Why Do MVPs Take 9 Months Instead of 8 Weeks?

- What Does the 8-Week Sprint Model Look Like?

- How Do You Decide What to Cut?

- Why Does Team Size Matter More Than Team Speed?

- What Does "Ship Then Iterate" Actually Mean?

- What Does the 8-Week Model Cost?

- How Do You Prevent the Post-MVP Feature Spiral?

- From 9 Months to 8 Weeks

- References

TL;DR

Most MVPs take 9 months because of scope creep, perfectionism, oversized teams, and waterfall planning disguised as agile. The 8-week alternative uses 2 weeks of focused discovery to define the single hypothesis to test, followed by 3 sprints of 14 days each to build and ship. The key discipline is cutting everything that does not directly validate whether users will pay for the core value proposition.

Key Takeaways

- The number one reason startups fail is building something nobody wants - 42% cite no market need. An MVP that takes 9 months to ship is not an MVP; it is a full product built on unvalidated assumptions.

- The four reasons MVPs take too long are scope creep during weeks 3 to 4, perfectionism disguised as quality, wrong team size, and waterfall planning disguised as agile sprints.

- The 8-week sprint model works in three phases: 2 weeks of discovery to define the core problem, then 3 sprints of 14 days each to build, test, and ship the minimum product that validates the hypothesis.

- Cut features ruthlessly using the "would a user pay for only this" test. If the answer is no, the feature is not part of the MVP - it belongs in version 2.

- Shipping is not the end. It is the beginning of learning. The 8-week model budgets 4 additional weeks post-launch for iteration based on real user behavior, not pre-launch assumptions.

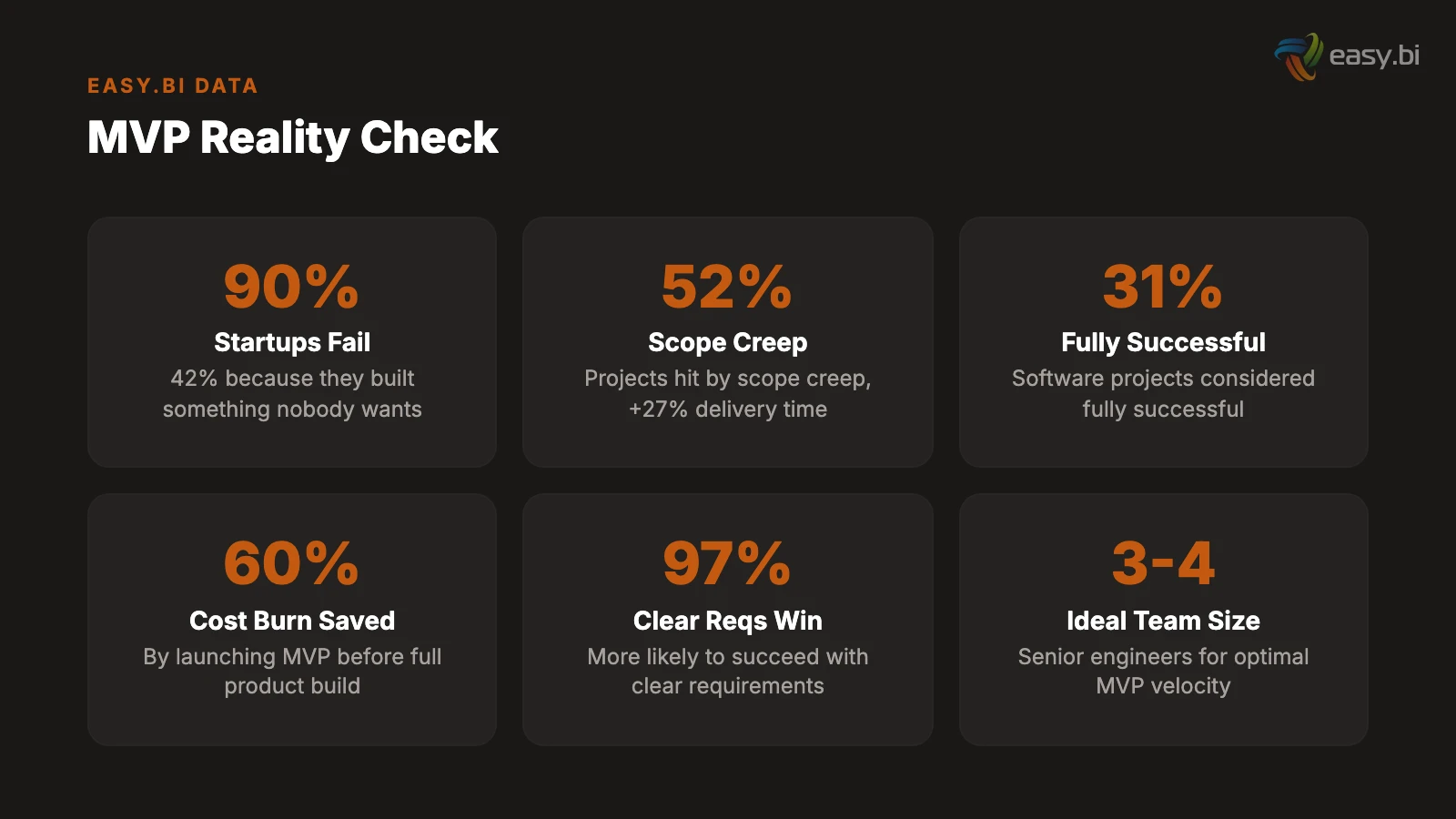

90% of startups fail. The number one reason, cited by 42% of failed startups, is building a product that nobody wants.[1] The antidote is the minimum viable product - a stripped-down version that tests whether your core assumption about the market is correct before you invest years and millions building the full vision.

The theory is sound. The execution is where it breaks down.

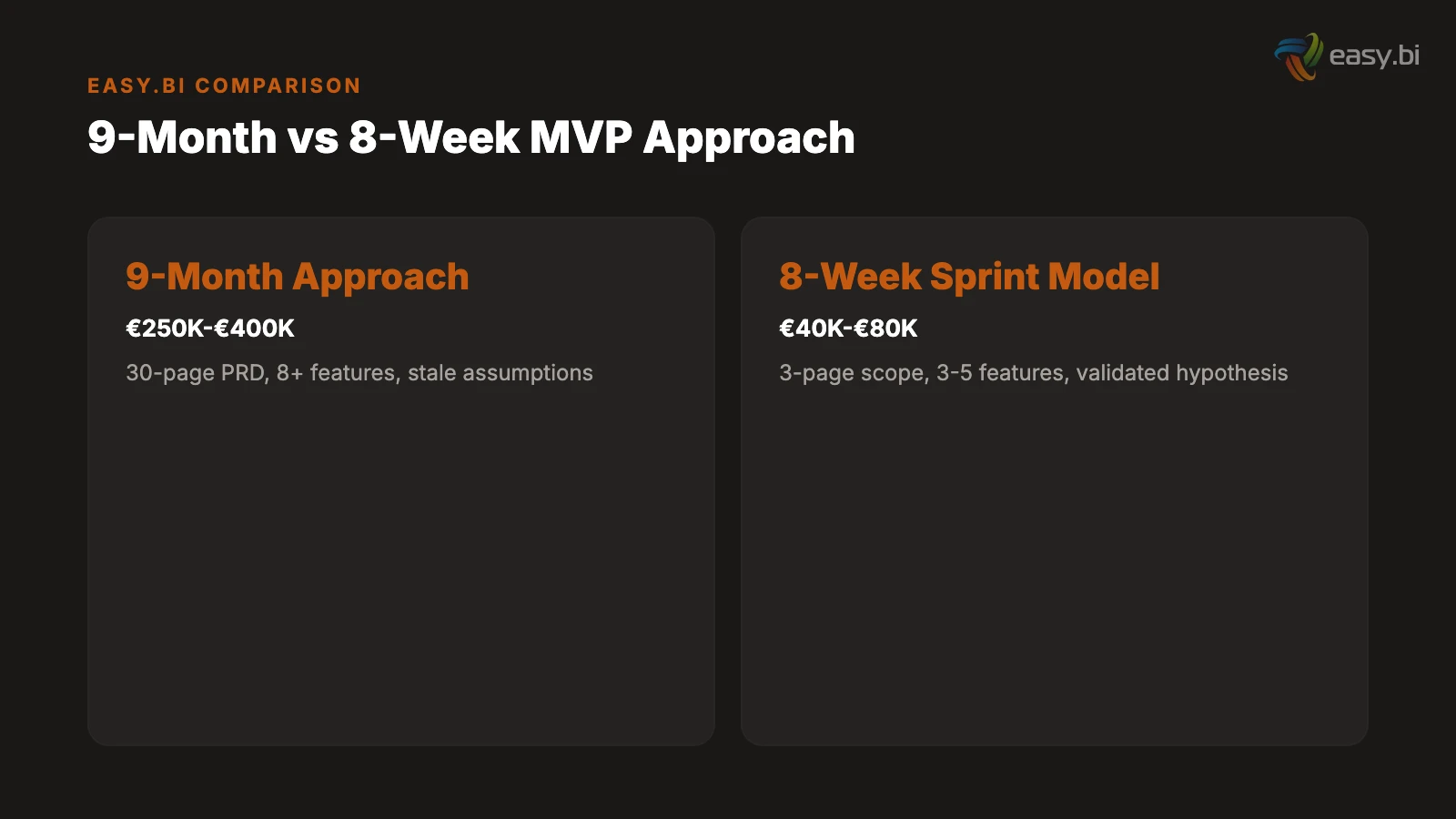

Most MVPs do not ship in 8 weeks. They ship in 9 months. By the time they reach users, the team has burned through EUR 250,000 to EUR 400,000, the original hypothesis is stale, and the "minimum" product has accumulated enough features to qualify as a version 2.0 that nobody asked for.

The problem is not that teams do not understand the concept of "minimum." The problem is that four specific failure patterns - scope creep, perfectionism, wrong team size, and waterfall disguised as agile - systematically expand every MVP timeline beyond the point where it delivers the learning it was designed to produce.

This article breaks down why MVPs take too long, presents the 8-week sprint model that prevents each failure pattern, and provides the framework for deciding what to build, what to cut, and when to ship.

Why Do MVPs Take 9 Months Instead of 8 Weeks?

Four failure patterns account for nearly every MVP timeline overrun. They are organizational problems, not technical ones. Faster servers and better frameworks do not fix them. Discipline does.

Failure pattern 1: Scope creep in weeks 3 to 4. The discovery phase defines 5 core features. By week 3, the founder has talked to 3 more potential customers and added 4 more features. By week 4, the product manager has identified 6 "must-have" edge cases. The backlog has doubled. The timeline has not. Scope creep affects 52% of all projects and increases delivery time by an average of 27%.[2] In MVP context, that 27% increase turns 8 weeks into 10 - and the creep compounds because each added feature creates new edge cases that spawn additional scope.

Failure pattern 2: Perfectionism disguised as quality. The sign-up flow must have social login, email verification, two-factor authentication, and a beautifully animated onboarding sequence. The dashboard needs real-time updates, drag-and-drop customization, and export to 4 file formats. None of these are necessary to test the core hypothesis. They are quality-of-life improvements for a product that has not yet proven it deserves to exist. Teams that launch MVPs before full builds reduce early-stage cost burn by up to 60%.[3] Every week spent polishing a feature that might be cut after user testing is a week of capital wasted.

Failure pattern 3: Wrong team size. A 9-month MVP timeline usually reveals a team that is either too large or too small. Too large: 8 engineers generating coordination overhead, merge conflicts, and communication latency. Teams larger than 15 see 50% lower per-capita output.[4] Too small: a solo developer handling frontend, backend, infrastructure, and testing with no second pair of eyes for code review or architectural decisions. The optimal MVP team is 3 to 4 senior engineers who can each cover multiple disciplines.

Failure pattern 4: Waterfall disguised as agile. The team runs sprint ceremonies - standups, retrospectives, sprint reviews - but the entire product is specified upfront in a 30-page PRD. No feature ships to production until all features are "ready." The sprints are not delivering working software every 2 weeks. They are delivering progress reports on a waterfall plan with agile vocabulary. Only 31% of software projects are considered fully successful,[5] and the success rate for waterfall-disguised-as-agile MVPs is lower than that because they combine the rigidity of waterfall with the false confidence of believing they are agile.

In my two decades of building and advising on software products, the single biggest predictor of MVP failure is the number of features in the initial scope. Every feature above 5 adds a week. Every integration adds 2 weeks. Every custom design element adds 3 days. The math is relentless - and founders consistently underestimate it because they confuse the product vision with the MVP scope.
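
The rule of thumb above can be written down as a rough estimator. This is a minimal sketch, not a validated model: the function name `estimate_mvp_weeks`, the 8-week baseline for a scope of 5 features or fewer, and the 5-day working week are all assumptions made for illustration.

```python
def estimate_mvp_weeks(features: int, integrations: int = 0,
                       custom_design_elements: int = 0,
                       baseline_weeks: float = 8.0) -> float:
    """Rough MVP timeline in weeks, using the heuristic from the text.

    Assumptions (illustrative only): the baseline covers up to 5 features,
    and a working week has 5 days.
    """
    extra_feature_weeks = max(0, features - 5) * 1.0  # +1 week per feature above 5
    integration_weeks = integrations * 2.0            # +2 weeks per integration
    design_weeks = custom_design_elements * 3 / 5     # +3 working days per element
    return baseline_weeks + extra_feature_weeks + integration_weeks + design_weeks


print(estimate_mvp_weeks(features=5))  # 8.0
print(estimate_mvp_weeks(features=9, integrations=2,
                         custom_design_elements=5))  # 19.0
```

Four extra features, two integrations, and five custom design elements more than double the timeline under this heuristic, which is how an 8-week plan drifts toward 5 months without any single decision looking unreasonable.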

What Does the 8-Week Sprint Model Look Like?

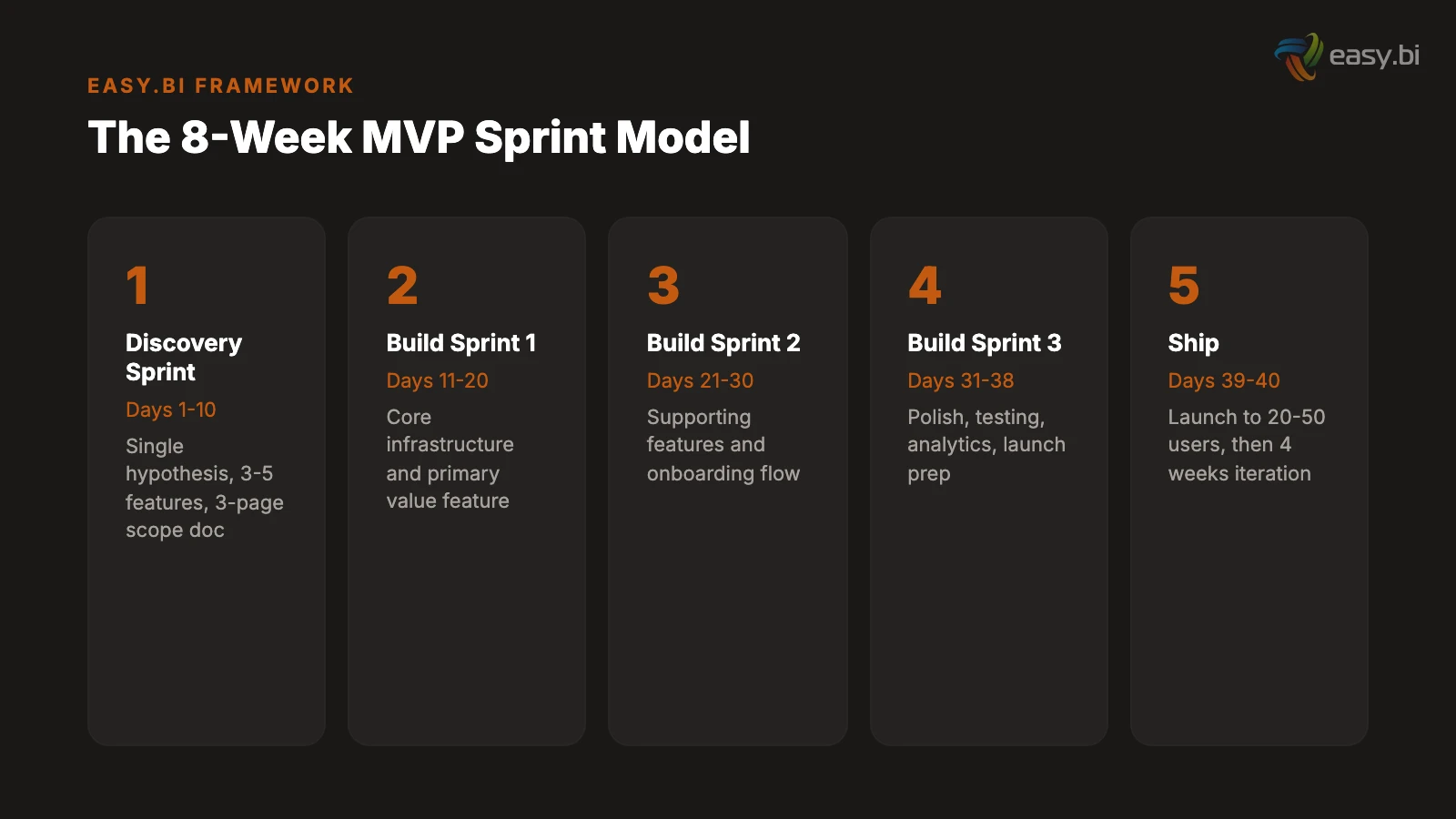

The 8-week model compresses the MVP lifecycle into three phases with a total budget of 6 working weeks of engineering time (the remaining 2 weeks are discovery and buffer). It assumes a team of 3 to 4 senior engineers, a signed scope document by day 5, and a willingness to ship a credibly functional product rather than a polished one.[6]

Phase 1: Discovery sprint (days 1-10). Two weeks devoted entirely to defining what the MVP must do and, critically, what it must not do. The output is a scope document containing: the single hypothesis the MVP will test, the 3 to 5 features required to test it, the acceptance criteria for each feature, the explicit list of features that are out of scope, and the success metric that determines whether the hypothesis is validated.

The discovery sprint replaces the 30-page PRD with a 3-page scope document. If it takes more than 3 pages to describe your MVP, you are building too much. Projects with clear requirements upfront are 97% more likely to succeed.[7] The discovery sprint produces those clear requirements in 10 business days.

Phase 2: Build sprints (days 11-38). Three sprints of 8 to 10 working days each. Each sprint delivers a working, deployable increment.

Sprint 1 (days 11-20): Core infrastructure and the primary value feature. User authentication, database schema, CI/CD pipeline, and the single feature that delivers the core value proposition. At the end of sprint 1, the product does one thing - but it does that one thing in production, with real data, accessible to test users.

Sprint 2 (days 21-30): Supporting features and the onboarding flow. The 2 to 3 additional features that support the core value feature. A basic onboarding flow that gets new users to the value moment in under 3 minutes. Error handling for primary workflows. At the end of sprint 2, the product is usable by early adopters who are willing to tolerate rough edges.

Sprint 3 (days 31-38): Polish, testing, and launch preparation. Bug fixes from sprint 1 and 2 testing. Performance optimization for the critical path. Basic analytics to track the success metric. Launch checklist: security review, data backup procedures, monitoring alerts, user feedback mechanism. At the end of sprint 3, the product ships.

Phase 3: Ship (days 39-40). Two days for final deployment, smoke testing, and launch to the initial user cohort. Not a press release launch. Not a Product Hunt launch. A quiet launch to 20 to 50 users who match the target profile and have agreed to provide feedback. The goal is learning, not fanfare.

| Phase | Duration | Deliverable | What Gets Cut If Behind |
|---|---|---|---|
| Discovery sprint | 2 weeks | 3-page scope doc, signed off | Nothing - this phase is non-negotiable |
| Build sprint 1 | ~10 days | Core feature in production | Secondary authentication methods |
| Build sprint 2 | ~10 days | Supporting features, onboarding | Nice-to-have features moved to v2 |
| Build sprint 3 | ~8 days | Polish, testing, launch prep | Visual polish - ship functional, not pretty |
| Ship | 2 days | Live product with initial users | Nothing - the product ships on day 40 |
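
The schedule is easy to sanity-check in code. The sketch below encodes the day ranges given for each phase and confirms that they are contiguous and add up to 40 working days; the names `PHASES` and `phase_lengths` are illustrative, and the 5-day working week is an assumption.

```python
# (phase name, first working day, last working day), as given in the text
PHASES = [
    ("Discovery sprint", 1, 10),
    ("Build sprint 1", 11, 20),
    ("Build sprint 2", 21, 30),
    ("Build sprint 3", 31, 38),
    ("Ship", 39, 40),
]


def phase_lengths(phases):
    """Return {phase name: working days}, rejecting gaps or overlaps."""
    lengths = {}
    expected_start = 1
    for name, start, end in phases:
        if start != expected_start:
            raise ValueError(
                f"{name} starts on day {start}, expected day {expected_start}"
            )
        lengths[name] = end - start + 1
        expected_start = end + 1
    return lengths


lengths = phase_lengths(PHASES)
total_days = sum(lengths.values())
print(lengths)
print(total_days, "working days =", total_days / 5, "weeks")  # 40 working days = 8.0 weeks
```

Keeping the plan in a structure like this makes slippage visible: if a phase end date moves, the check fails until something else is shortened or cut.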

How Do You Decide What to Cut?

The defining skill of MVP development is not building. It is cutting. Every feature, every integration, every design element must pass a single test: does this directly validate the core hypothesis? If the answer is not an immediate yes, cut it.

Three frameworks for making cut decisions.

The "would a user pay for only this" test. Take each feature in isolation. If the product had only this feature, would a user pay for it? If yes, it belongs in the MVP. If no, it is a supporting feature that might belong in version 2 but does not belong in the first release. This test is ruthless, and it is meant to be. McKinsey research shows that companies using rapid testing and iteration shorten development cycles by up to 50%.[3] Rapid iteration requires a small starting surface.

The integration deferral rule. Every third-party integration adds 1 to 2 weeks of development time (authentication, error handling, rate limiting, testing against a live API). For the MVP, ask: can we use a manual process, a CSV upload, or a webhook instead of a full integration? The answer is almost always yes. Build the integration in version 2 when you have confirmed that users want the underlying functionality.

The 5-feature ceiling. If your MVP has more than 5 features, it is not an MVP. It is a version 1.0 with ambitions of being an MVP. Count the features. If the count exceeds 5, rank them by hypothesis-validation importance and cut from the bottom until you reach 5 or fewer. Realistic MVP timelines for products with 3 to 5 features are 6 to 8 weeks with a senior team.[6] Products with 10 features take 12 to 16 weeks. Products with 15 features take 6 months. The math does not lie.
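
The ranking-and-cutting step can be sketched as follows. The `Feature` class, the 1-to-10 `validation_score`, and the sample backlog are hypothetical; in practice the score would come out of the discovery sprint, not from code.

```python
from dataclasses import dataclass


@dataclass
class Feature:
    name: str
    validation_score: int  # hypothetical 1-10 rating: how directly it tests the hypothesis


def apply_feature_ceiling(features, ceiling=5):
    """Split features into (mvp, deferred), keeping the top `ceiling` by score."""
    ranked = sorted(features, key=lambda f: f.validation_score, reverse=True)
    return ranked[:ceiling], ranked[ceiling:]


# Hypothetical backlog for a mobile-first inventory product
backlog = [
    Feature("Scan item barcode", 10),
    Feature("Desktop dashboard", 3),
    Feature("Adjust stock count", 9),
    Feature("Export to PDF", 2),
    Feature("Search items", 8),
    Feature("Low-stock alert", 7),
    Feature("Stock history view", 6),
]

mvp, deferred = apply_feature_ceiling(backlog)
print([f.name for f in mvp])
print([f.name for f in deferred])  # ['Desktop dashboard', 'Export to PDF']
```

The deferred list is as important as the MVP list: it becomes the explicit out-of-scope section of the scope document.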

What to keep regardless. Some elements are non-negotiable even in the most minimal MVP: user authentication (even if it is email/password only), data backup procedures, basic error handling on critical paths, HTTPS, and GDPR-compliant data handling for DACH market products. These are not features. They are infrastructure. Skipping them creates legal liability and user trust issues that no amount of iteration can fix.

"The hardest conversation in MVP development is telling a founder that 80% of their product vision does not belong in the first release. But that conversation in week 1 prevents the conversation in month 8 where we explain why the budget is gone and the product has not shipped."

Why Does Team Size Matter More Than Team Speed?

The intuition is that adding engineers makes the MVP ship faster. The reality is the opposite. Beyond 4 engineers on an MVP, every additional person slows the project down.

The reasons are structural, not motivational.

Communication overhead scales quadratically. A team of 3 has 3 communication channels. A team of 6 has 15. A team of 10 has 45. Each channel requires synchronization: code reviews, design discussions, API contracts, database schema decisions. On an 8-week timeline, the overhead of a 6-person team consumes 2 to 3 weeks of the schedule - weeks that a 3-person team would have spent building. Teams of 5 to 9 deliver the highest productivity per person, with teams larger than 15 seeing 50% lower per-capita output.[4]
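
The channel counts follow from the number of distinct pairs in a team of n people, n(n - 1)/2. A short sketch (the function name is illustrative) reproduces the figures quoted above:

```python
def communication_channels(team_size: int) -> int:
    """Number of distinct person-to-person pairs: n * (n - 1) / 2."""
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


for n in (3, 6, 10):
    print(n, communication_channels(n))
# 3 3
# 6 15
# 10 45
```

Doubling the team from 3 to 6 multiplies the number of channels by five, which is why headcount and coordination cost do not grow at the same rate.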

Senior engineers are 3x more productive than juniors on MVP work. MVP development requires constant architectural decisions: which shortcuts are acceptable for now, which will create unmanageable debt later, how to structure the codebase for rapid iteration post-launch. Senior engineers make these decisions in minutes. Junior engineers make them in hours - or make the wrong decision and spend days refactoring. The optimal MVP team is 3 to 4 senior engineers who have built MVPs before, not 6 to 8 mid-level engineers who are learning on your timeline.

The single-threaded decision model. MVP development requires rapid decision-making: API design, database schema, UI component library, deployment strategy. When decisions require consensus from 6 people, they take 3x longer than when one tech lead decides and 2 engineers execute. Assign one person as the technical decision-maker for the MVP. Their word is final on implementation choices. Debate strategy during discovery. Execute without committee during build sprints.

Germany faces a shortage of 149,000 IT specialists.[8] For startups and mid-market companies, assembling a 3-person senior team internally is often impossible within the timeline an MVP demands. This is where a development partner with an established team - already collaborating, already aligned on tooling and processes - provides a structural advantage over hiring.

What Does "Ship Then Iterate" Actually Mean?

"Ship then iterate" is the most quoted and least practiced principle in product development. Teams say it. They put it on their wall. Then they spend 4 more weeks polishing the dashboard before launch because it "does not feel ready."

Here is what "ship then iterate" means in concrete, operational terms.

Ship means production, not staging. The product is accessible to real users with real data generating real feedback. It is not a demo environment that the team shows to investors. It is not a beta that requires an invitation code and a personal walkthrough from the founder. Real users, using the product without hand-holding, encountering real friction.

Ship means incomplete. The product does 3 things well and does not do 20 things at all. Users will ask for features that are not there. That is the point. Their requests tell you which features matter enough to build next - which is information you cannot get from a PRD written in a conference room. Lean validation practices increase the likelihood of achieving product-market fit by 2.5x.[3] That validation requires real usage, which requires shipping.

Iterate means scheduled, not reactive. After the 8-week build, budget 4 weeks for iteration. Week 1: collect user feedback and usage data. Week 2: prioritize findings into 3 categories - fix (broken), improve (works but frustrating), build (missing functionality users actively request). Weeks 3 to 4: execute the top-priority improvements in 2 sprints. This structured iteration cycle prevents the common trap of reactive development - chasing every user complaint instead of systematically addressing the patterns that indicate product-market fit or the absence of it.
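
The week-2 prioritization step can be sketched as a simple grouping. The tuple layout, the sample findings, and the choice to order each category by number of user reports are assumptions for illustration; the text only specifies the three categories.

```python
from collections import defaultdict

CATEGORIES = ("fix", "improve", "build")


def triage(findings):
    """Group findings by category, most-reported first within each category.

    `findings` is a list of (description, category, report_count) tuples.
    """
    grouped = defaultdict(list)
    for description, category, report_count in findings:
        if category not in CATEGORIES:
            raise ValueError(f"unknown category: {category}")
        grouped[category].append((report_count, description))
    return {
        category: [
            description
            for _, description in sorted(
                grouped[category], key=lambda item: item[0], reverse=True
            )
        ]
        for category in CATEGORIES
    }


# Hypothetical findings from the first week after launch
findings = [
    ("Export button crashes on empty list", "fix", 4),
    ("Sign-up form rejects valid emails", "fix", 9),
    ("Search takes too many taps", "improve", 6),
    ("Offline mode", "build", 3),
]

plan = triage(findings)
print(plan["fix"])  # ['Sign-up form rejects valid emails', 'Export button crashes on empty list']
```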

Iterate means data-driven, not opinion-driven. The success metric defined in the discovery sprint determines whether the hypothesis is validated. If the metric is "50 weekly active users within 30 days of launch" and you have 12, the product has a problem that iteration must address. If you have 80, the hypothesis is validated and you move to scaling. The metric removes opinion from the equation. It does not matter if the founder thinks the product is great. It matters if users behave as though the product is great.
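
Expressed as code, the decision is a single comparison against the target fixed during discovery. This is a minimal sketch using the example metric from the paragraph above; the function name and the choice to count exactly hitting the target as validated are assumptions.

```python
def evaluate_hypothesis(observed: int, target: int) -> str:
    """Compare the observed success metric with the target set in discovery."""
    return "validated" if observed >= target else "not validated"


TARGET_WAU = 50  # "50 weekly active users within 30 days of launch"

print(evaluate_hypothesis(12, TARGET_WAU))  # not validated
print(evaluate_hypothesis(80, TARGET_WAU))  # validated
```

The value of writing the target down before launch is that nobody can move it afterwards to fit the result.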

What Does the 8-Week Model Cost?

Transparency on cost prevents the two most common budgeting mistakes: underestimating the MVP budget (leading to a half-finished product) and overallocating (leading to scope inflation because "we have the budget").

In the DACH market, with a mid-size specialized development partner, the 8-week model costs EUR 40,000 to EUR 80,000. This assumes a team of 3 to 4 senior engineers at EUR 100 to EUR 140 per hour, 40 engineering days of build time, and 10 days of discovery and project management.

The global IT services market continues to grow rapidly, with the German IT services market projected to reach USD 130.5 billion by 2031.[9] Within that market, MVP development pricing varies significantly by partner type.

| Partner Type | Typical MVP Cost (EUR) | Timeline | Team Composition |
|---|---|---|---|
| Freelancer / solo developer | 15,000 - 30,000 | 10-16 weeks | 1 generalist |
| Offshore agency | 20,000 - 50,000 | 8-14 weeks | 4-6 mixed seniority |
| Mid-size specialized partner | 40,000 - 80,000 | 6-10 weeks | 3-4 seniors |
| Large consultancy | 120,000 - 300,000 | 12-24 weeks | 6-10 mixed, heavy PM overhead |
| Internal team (hiring cost) | 80,000 - 150,000 | 14-20 weeks (includes hiring) | Depends on market availability |

The cheapest option is rarely the fastest, and the fastest option is rarely the cheapest. The mid-size specialized partner hits the optimal balance: senior engineers who have built MVPs before, an established team that does not need ramp-up time, and a structured methodology that prevents the scope creep and timeline inflation that plague both freelancer and large-consultancy engagements.

Compare these costs against the alternative: a 9-month MVP that costs EUR 250,000 to EUR 400,000 and ships a product based on assumptions that are 9 months stale. The cost difference is not just the EUR 170,000 to EUR 360,000 gap in direct spend. It is that gap plus the opportunity cost of 7 months of market learning that the 8-week model would have delivered.

How Do You Prevent the Post-MVP Feature Spiral?

The 8-week model gets the product to market. The next risk is the post-MVP feature spiral: the product ships, users provide feedback, and the team adds every requested feature without a framework for prioritization. Within 3 months, the lean MVP is a bloated application with 30 features that only 3 users love, and nobody remembers which hypothesis it was built to test.

Maintain the hypothesis discipline. After launch, every proposed feature must answer: does this help validate or invalidate our current hypothesis? If the hypothesis is "warehouse managers will adopt mobile-first inventory management," then adding desktop support is a distraction, not an improvement. It belongs in the backlog for after the hypothesis is resolved.

Batch iteration into 2-week sprints. Do not ship features as they are built. Batch them into 2-week sprints with clear themes. Sprint 5 (post-launch): critical bug fixes and the single highest-impact user request. Sprint 6: onboarding optimization based on drop-off data. Sprint 7: the second-highest user request plus performance improvements. This cadence prevents reactive development while maintaining continuous delivery. Agile teams deliver software 60% faster and with 25% fewer defects.[10] The 2-week sprint cadence is what keeps post-MVP iteration agile in practice, not just in name.

Set a v2 decision point. At week 12 (4 weeks post-launch), review the success metrics. If the hypothesis is validated, plan version 2 with a full discovery phase, expanded team, and proper product roadmap. If the hypothesis is invalidated, pivot: change the target user, change the core feature, or change the market. Do not invest another EUR 100,000 iterating on a product that has not proven demand. The 42% of startups that fail due to no market need[1] are the ones that kept iterating without questioning the fundamental assumption.

For a deeper look at how discovery phases prevent scope creep in both MVP and full-product contexts, see our guide on the discovery phase that saves you 6 months. For the sprint methodology that keeps post-MVP iteration disciplined, our article on 14-day delivery cycles covers the operational detail. And for teams evaluating whether to build internally or with a partner, our build vs. buy analysis for software teams provides the framework for that decision.

From 9 Months to 8 Weeks

The difference between a 9-month MVP and an 8-week MVP is not talent, budget, or technology. It is discipline. The discipline to run a focused discovery sprint that produces a 3-page scope document instead of a 30-page PRD. The discipline to cut every feature that does not directly validate the core hypothesis. The discipline to ship a product that is functional but imperfect, and iterate based on real user behavior instead of internal opinions.

The 8-week model works because it treats the MVP as what it is: an experiment, not a product. The goal is learning, not launch. The metric is validated hypothesis, not feature count. The exit criteria are clear: at week 12, the data tells you whether to scale, pivot, or stop.

That clarity - knowing in 12 weeks whether your product has a market - is worth more than any feature you could build in the 7 months the 8-week model saves.

References

- [1] CB Insights (2024). "42% of startups fail because there is no market need - the cbinsights.com

- [2] PMI (2024). "Scope creep affects 52% of all projects, increasing delivery time b pmi.org

- [3] McKinsey / June.so (2025). june.so

- [4] QSM / Putnam Research (2023). qsm.com

- [5] Standish Group (2023). standishgroup.com

- [6] Netguru / Mansoori Technologies (2026). netguru.com

- [7] Standish Group (2023). standishgroup.com

- [8] Bitkom (2024). "Germany faces a shortage of 149,000 IT specialists." bitkom.org

- [9] Mordor Intelligence (2025). mordorintelligence.com

- [10] Standish Group (2023). standishgroup.com
