AI Governance for Mid-Market: You Need Less Than You Think

Table of Contents

- Why Is AI Governance Different for Mid-Market Companies?

- What Does the EU AI Act Actually Require?

- How Does Risk Classification Work in Practice?

- What Does a Practical Mid-Market Governance Framework Look Like?

- How Do You Handle Data Privacy With LLMs?

- What Model Monitoring Do You Actually Need?

- Getting Started: The 90-Day AI Governance Roadmap

- References


Key Takeaways

- 56% of enterprises lack a formal AI governance framework - but the enterprise frameworks that do exist are designed for organizations with dedicated AI ethics teams, legal departments, and compliance budgets that mid-market companies do not have. The answer is not 'adopt a lighter version of what Google does.' It is building governance right-sized for your scale and risk profile.

- The EU AI Act classifies AI systems into 4 risk levels: unacceptable, high, limited, and minimal. Most mid-market AI applications - recommendation engines, internal automation, customer service chatbots - fall into the limited or minimal risk categories, which require transparency measures but not the extensive compliance apparatus that high-risk systems demand.

- Data privacy with LLMs is the governance area where mid-market companies face the most immediate risk. When employees paste customer data into ChatGPT or a support chatbot sends conversation history to a third-party API, the GDPR implications are real and enforceable. A clear data classification policy takes 2 days to create and eliminates 80% of this risk.

- Model monitoring for mid-market does not require MLOps infrastructure. For most applications, a weekly review of model outputs against expected behavior, a quarterly bias audit on a sample of decisions, and automated alerts when output distributions shift significantly are sufficient. Start with spreadsheets, not platforms.

- 72% of DACH enterprises plan to increase AI investment in 2025. The companies that establish basic governance now - before they scale - will move faster than those who build AI capabilities first and retrofit governance later. Governance is not a brake on AI adoption. It is the steering wheel.


Every week, a mid-market CTO tells me the same story: they want to deploy AI capabilities - a recommendation engine, an internal automation tool, an intelligent search function - but they are paralyzed by governance concerns.

They have read about the EU AI Act, they have seen enterprise governance frameworks with 50-page policy documents and dedicated ethics review boards, and they have concluded that AI governance is too complex for a company their size.

So they do nothing, or worse, they deploy AI tools informally with no governance at all.

Both responses are wrong. The enterprise governance playbook is overkill for a 200-person company. But no governance is a compliance and reputational time bomb.

The right answer is a governance framework that matches your scale, your risk profile, and your regulatory obligations - and it is far simpler than most people think.

Why Is AI Governance Different for Mid-Market Companies?

Enterprise AI governance frameworks - the ones published by McKinsey and Big 4 firms such as Deloitte - are designed for organizations that deploy AI at massive scale, across hundreds of use cases, with billions of data points and direct impact on millions of consumers.

These frameworks assume you have a Chief AI Officer, a dedicated AI ethics committee, a legal team with AI specialization, and a compliance budget in the millions.

Mid-market companies have none of these things.

What they have is a CTO who also manages infrastructure, a legal counsel who handles everything from contracts to GDPR, and a product team that wants to ship AI features without creating liability. 56% of enterprises lack a formal AI governance framework [1] - and among mid-market companies, that number is likely above 80%.


The disconnect creates two failure modes:

Governance paralysis: The CTO reads an enterprise governance guide, realizes they cannot implement 80% of it, and delays all AI initiatives until "we have the resources to do it properly." Meanwhile, competitors ship AI features and capture market share.


Shadow AI: Teams deploy AI tools - ChatGPT, Copilot, various SaaS products with embedded AI - without any governance structure. Customer data flows to third-party APIs without data processing agreements. Employees use AI-generated content without quality review. Model outputs affect business decisions without documentation or auditability.

The mid-market needs a third path: governance that covers the essential risks, meets regulatory requirements, and can be implemented by a small team in weeks rather than months.


What Does the EU AI Act Actually Require?

The EU AI Act [2] is the world's first comprehensive AI regulation. It entered into force in August 2024, with different provisions applying on a staggered timeline through 2027. For DACH companies, understanding what applies to your specific AI use cases is the first governance step.

The Act uses a risk-based classification system with 4 levels:

| Risk Level | Examples | Requirements | Mid-Market Relevance |
|---|---|---|---|
| Unacceptable | Social scoring, real-time biometric surveillance, manipulative AI | Banned entirely | Low - most mid-market companies do not build these |
| High | AI in hiring, credit scoring, medical devices, critical infrastructure | Conformity assessment, risk management system, human oversight, data governance | Medium - applies if your AI makes consequential decisions about people |
| Limited | Chatbots, emotion recognition, deepfake generators | Transparency obligations - users must know they are interacting with AI | High - most mid-market AI applications fall here |
| Minimal | Spam filters, recommendation engines, search optimization | No specific obligations, voluntary codes of conduct encouraged | High - many internal AI tools fall here |

For most mid-market companies, the practical implication is straightforward: if your AI application falls into the "limited" category (which most customer-facing chatbots and content generation tools do), your primary obligation is transparency - making users aware they are interacting with AI and providing information about the system's capabilities and limitations.

If your AI application falls into the "high" category - for example, an AI system that screens job applications or assesses creditworthiness - the requirements are substantially more demanding.

You need a risk management system, data governance protocols, technical documentation, human oversight mechanisms, and in some cases a conformity assessment before deployment.

The critical action for mid-market companies: classify every AI system you operate or plan to deploy according to the EU AI Act risk framework. Most will be limited or minimal risk, requiring manageable governance.

The few that are high risk need dedicated attention - but knowing which is which prevents both over-governance of low-risk systems and under-governance of high-risk ones.

How Does Risk Classification Work in Practice?

Risk classification sounds abstract until you apply it to real products. Here is how it works for typical mid-market AI use cases:

Internal document search with LLM (Minimal risk): Employees use a search tool powered by an LLM to find information in company documents. No decisions about people. No customer-facing outputs. Governance needs: data access controls, basic output quality monitoring, and an internal user guide.


Customer support chatbot (Limited risk): A chatbot handles initial customer inquiries using an LLM. It interacts directly with customers and influences their experience.

Governance needs: clear disclosure that the user is talking to AI, escalation path to human agents, conversation logging for quality review, and a data processing agreement with the LLM provider.

Product recommendation engine (Minimal risk): An algorithm suggests products based on browsing and purchase history. Governance needs: basic bias monitoring to ensure recommendations are not systematically excluding product categories, and privacy compliance for the data used as input.


AI-assisted hiring screening (High risk): An AI system scores or filters job applications. This directly affects people's employment opportunities. Governance needs: full risk management system, bias testing across protected categories, human oversight for every consequential decision, data governance documentation, and conformity assessment.

Notice the pattern: the governance effort scales with the impact on people. A search tool that helps employees find documents faster needs minimal governance. A system that determines which job applicants move forward needs extensive governance.

The EU AI Act formalizes this proportionality, and mid-market companies should embrace it - not gold-plate every AI system with enterprise-grade oversight.
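The pattern across these four examples can be written down as a first-pass triage. This is a minimal sketch and not a legal determination: `UseCase`, its three flags, and `triage` are hypothetical names, and the rules only encode the examples in this section. Anything that lands in the high tier still needs a proper review against the Act itself.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class UseCase:
    """Hypothetical description of one AI use case (illustrative fields only)."""
    name: str
    prohibited_practice: bool = False       # e.g. social scoring
    consequential_decisions: bool = False   # e.g. hiring or credit decisions about people
    conversational_or_generative: bool = False  # e.g. a chatbot talking to customers


def triage(use_case: UseCase) -> Risk:
    """Return the most severe tier whose condition matches, checked top down."""
    if use_case.prohibited_practice:
        return Risk.UNACCEPTABLE
    if use_case.consequential_decisions:
        return Risk.HIGH
    if use_case.conversational_or_generative:
        return Risk.LIMITED
    return Risk.MINIMAL


examples = [
    UseCase("Internal document search"),
    UseCase("Customer support chatbot", conversational_or_generative=True),
    UseCase("Product recommendation engine"),
    UseCase("AI-assisted hiring screening", consequential_decisions=True),
]
for uc in examples:
    print(f"{uc.name}: {triage(uc).value}")
```

Checking the most severe condition first means a chatbot that also screens applicants is treated as high risk, not limited.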

What Does a Practical Mid-Market Governance Framework Look Like?

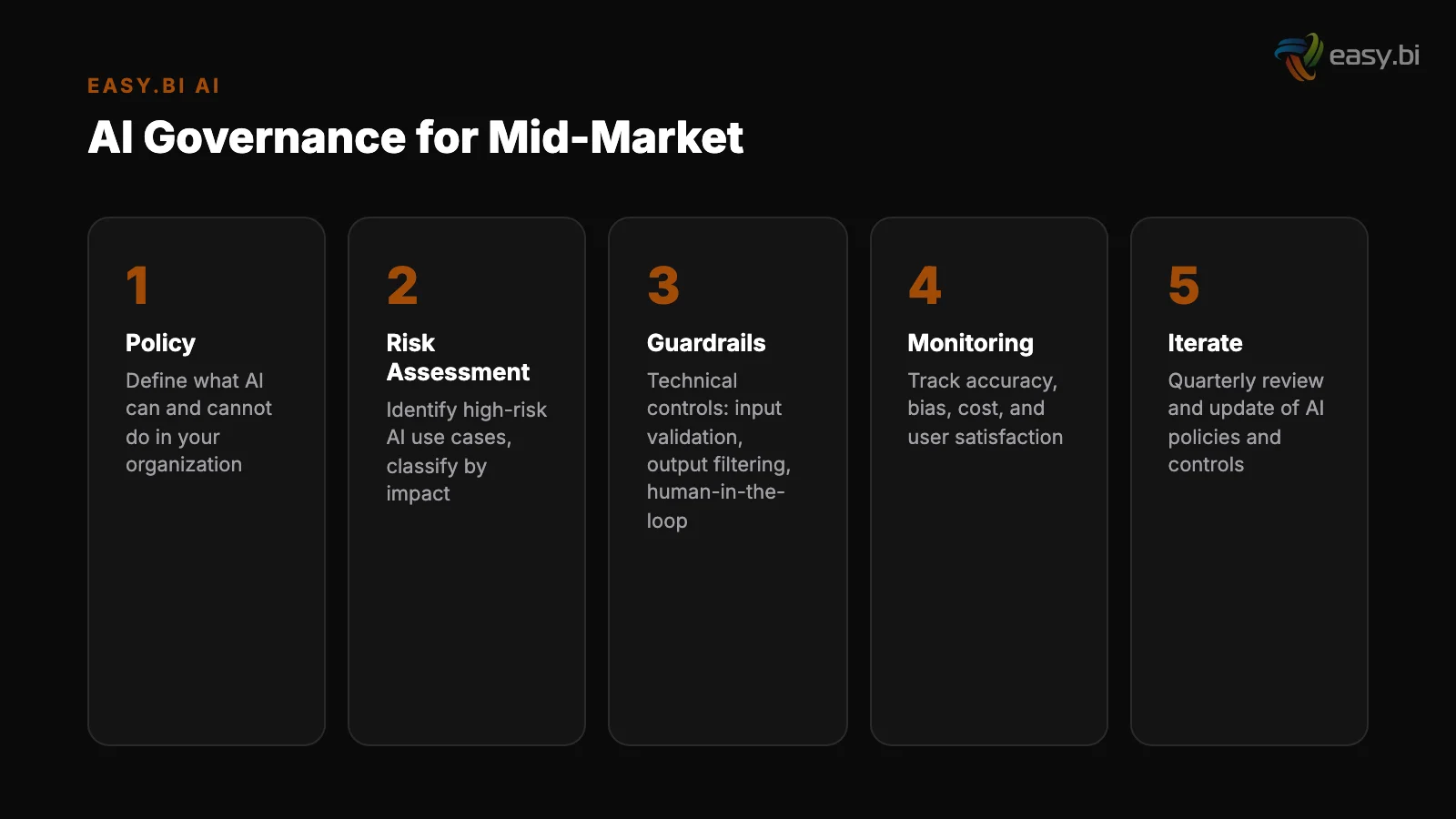

Based on working with mid-market DACH companies deploying AI, here is a governance framework that meets regulatory requirements without creating bureaucratic overhead:

1. AI inventory (create once, update quarterly): A simple spreadsheet listing every AI system in use - both built and bought. For each: name, purpose, risk classification, data inputs, data outputs, vendor (if external), and the person responsible.

Most mid-market companies are surprised to discover they have 10-20 AI systems in use when they thought they had 2-3.

2. Data classification policy (create once, review annually): Categorize your data into 3 tiers: public (can be sent to any AI system), internal (can be processed by AI systems with data processing agreements), and restricted (cannot be sent to external AI systems under any circumstances - customer PII, financial data, health data).

This single policy eliminates 80% of data privacy risk with LLMs.

3. Acceptable use guidelines (create once, update as needed): A 2-page document that tells employees what AI tools they can use, what data they can input, and what approval is needed for new AI tools. Not a 50-page policy - a clear, actionable guide that people will actually read.

4. Vendor assessment checklist (use for each new AI vendor): 10 questions covering data processing location, data retention, GDPR compliance, security certifications, and model training practices. Does the vendor train on your data? Where is the data processed? What happens to the data after the contract ends?

These questions take 30 minutes to answer and prevent the most common vendor risk issues.
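If you prefer to keep the inventory and the data tier rule next to your code instead of in a spreadsheet, both fit in a few lines. This is a sketch under assumptions: `AISystem`, `inventory_to_csv`, and `may_send` are hypothetical names, the columns mirror the inventory fields listed in step 1, and treating in-house tools as acceptable for internal data without a data processing agreement is my reading of the three tiers, not something the policy text spells out.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class AISystem:
    """One row of the AI inventory (columns taken from step 1 above)."""
    name: str
    purpose: str
    risk_classification: str
    data_inputs: str
    data_outputs: str
    vendor: str
    owner: str


def inventory_to_csv(systems: list[AISystem]) -> str:
    """Serialize the inventory so it can be opened as a spreadsheet."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(AISystem)])
    writer.writeheader()
    for system in systems:
        writer.writerow(asdict(system))
    return buffer.getvalue()


def may_send(data_tier: str, tool_is_external: bool, tool_has_dpa: bool) -> bool:
    """Apply the three-tier rule from step 2 to one data/tool combination."""
    if data_tier == "public":
        return True
    if data_tier == "internal":
        # Assumption: in-house tools are fine; external tools need a DPA.
        return (not tool_is_external) or tool_has_dpa
    if data_tier == "restricted":
        return not tool_is_external
    raise ValueError(f"unknown data tier: {data_tier}")


inventory = [
    AISystem("Support chatbot", "First-line customer inquiries", "limited",
             "Customer messages", "Chat replies", "Example LLM vendor", "Head of Support"),
]
print(inventory_to_csv(inventory))
print(may_send("restricted", tool_is_external=True, tool_has_dpa=True))
```

The vendor and owner values above are placeholders. The point is that the whole inventory is one flat table and the tier rule is a single function your team can read in a minute.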

AI governance for mid-market is not about building a compliance department. It is about creating enough structure that your team can move fast without creating liability. A 4-document governance framework that people follow is infinitely more valuable than a 200-page governance manual that sits in a SharePoint folder.

How Do You Handle Data Privacy With LLMs?

Data privacy is the area where mid-market companies face the most immediate and enforceable risk. GDPR applies regardless of whether data is processed by a human or an AI system, and the penalties for non-compliance are up to EUR 20 million or 4% of global annual turnover, whichever is higher.

The primary risk scenarios with LLMs:


Employee use of public AI tools: When a sales manager pastes a customer email into ChatGPT to draft a response, customer data has been sent to OpenAI's servers. Depending on the subscription tier and terms of service, that data may be used for model training.

This is a GDPR data transfer that most companies have not authorized through a data processing agreement.

Customer data in AI pipelines: When your customer support chatbot sends conversation history to an LLM API, every piece of customer information in that conversation is processed by a third party. If the API provider is outside the EU, this adds data transfer compliance requirements under GDPR.


Training on sensitive data: If you fine-tune or train models on datasets containing personal data, GDPR requires a lawful basis for processing, data minimization, and the ability to respond to data subject access requests - including the right to erasure.

The practical solutions:

- Deploy enterprise-tier AI tools that include data processing agreements, EU data residency options, and contractual commitments not to train on your data. ChatGPT Enterprise, Azure OpenAI Service, and Amazon Bedrock all offer these protections.

- Implement data scrubbing in your AI pipelines. Before sending any data to an external LLM, strip personal identifiers - names, email addresses, phone numbers, customer IDs. This reduces GDPR exposure even if the underlying data processing agreement is incomplete.

- Create a clear policy about which AI tools are approved for which data tiers. Public data can go to any approved tool. Internal data requires tools with data processing agreements. Restricted data stays on-premises or uses private model deployments.
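The scrubbing step can start as a handful of regular expressions run before any text leaves your systems. This is a minimal sketch under stated assumptions: the `scrub` function is a hypothetical name, the `CUST-` customer ID format is invented for illustration, and the phone pattern is deliberately loose. Regexes will not catch personal names reliably, so treat this as a first filter and not as complete anonymization.

```python
import re

# Illustrative patterns only; adjust them to the formats in your own data.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CUSTOMER_ID = re.compile(r"\bCUST-\d+\b")  # hypothetical ID format
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def scrub(text: str) -> str:
    """Replace obvious personal identifiers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = CUSTOMER_ID.sub("[CUSTOMER_ID]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


message = "Contact anna@example.com or +49 30 1234567 about CUST-00123"
print(scrub(message))
```

Placeholders are used instead of deleting the match so the LLM still sees that an email address or phone number was present, which usually keeps the drafted response coherent.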

55% of organizations have adopted AI in at least one business function [3]. Among those, data privacy practices range from rigorous to non-existent.

Mid-market companies that establish clear data handling practices now - before they scale AI usage - avoid the costly remediation that companies face when they discover their data practices do not meet GDPR requirements after a complaint or audit.


What Model Monitoring Do You Actually Need?

Enterprise model monitoring involves real-time dashboards tracking prediction accuracy, data drift detection, model performance degradation alerts, A/B testing infrastructure, and automated retraining pipelines. For a mid-market company running 3-5 AI features, this infrastructure costs more than the AI features themselves.

What mid-market companies actually need:


Weekly output review: A 30-minute weekly review of a sample of AI outputs. For a chatbot: read 20 conversations and flag any that were inaccurate, inappropriate, or failed to escalate when they should have. For a recommendation engine: check that recommendations are diverse, relevant, and not systematically biased.

This manual review catches issues that automated monitoring would also catch - at a fraction of the cost.

Quarterly bias audit: For any AI system that affects customers or employees, run a quarterly check on whether outputs differ systematically across demographic groups. For a hiring tool: do screening scores differ by gender or ethnicity? For a recommendation engine: are certain product categories systematically underrepresented?

This does not require a data science team - it requires exporting data to a spreadsheet and running basic statistical comparisons.
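The same comparison you would run in a spreadsheet looks like this in code. It is a sketch under assumptions: `selection_rates` and `rate_ratio` are hypothetical helper names, the sample data is made up, and the 0.8 review threshold is a rule of thumb borrowed from US employment practice (the "four-fifths" heuristic), not an EU AI Act requirement. With small samples the ratio is noisy, so use it to decide what to look at more closely, not as a verdict.

```python
from collections import defaultdict


def selection_rates(records):
    """records: iterable of (group, was_selected) pairs -> rate per group."""
    totals = defaultdict(int)
    selected = defaultdict(int)
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest (1.0 means identical rates)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so no disparity to report
    return min(rates.values()) / highest


# Made-up audit sample: (group label, passed screening?)
sample = ([("A", True)] * 40 + [("A", False)] * 60
          + [("B", True)] * 20 + [("B", False)] * 80)

rates = selection_rates(sample)
ratio = rate_ratio(rates)
REVIEW_THRESHOLD = 0.8  # assumption: rule-of-thumb cutoff, tune for your context
print(rates, round(ratio, 2), "review needed" if ratio < REVIEW_THRESHOLD else "ok")
```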


Automated distribution alerts: Set up simple alerts that fire when AI output distributions shift significantly. If your chatbot's average response length suddenly doubles, or your recommendation engine starts suggesting the same 5 products to everyone, something has changed.

These alerts can be implemented with basic scripting and do not require an MLOps platform.
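As an illustration of how little scripting this takes, here are the two checks from the examples above. This is a rough sketch: `length_shift_alert` and `concentration_alert` are hypothetical names, and the thresholds (3 baseline standard deviations, 50% of recommendations going to the top 5 items) are assumptions you would tune against your own traffic. The first check is a simple heuristic, not a formal statistical test.

```python
from collections import Counter
from statistics import mean, stdev


def length_shift_alert(baseline_lengths, recent_lengths, z_threshold=3.0):
    """Alert when the recent mean length moves far from the baseline mean,
    measured in baseline standard deviations."""
    baseline_mean = mean(baseline_lengths)
    baseline_sd = stdev(baseline_lengths)  # needs at least 2 baseline values
    recent_mean = mean(recent_lengths)
    if baseline_sd == 0:
        return recent_mean != baseline_mean
    return abs(recent_mean - baseline_mean) / baseline_sd > z_threshold


def concentration_alert(recommended_items, top_k=5, max_share=0.5):
    """Alert when the top_k most recommended items take more than max_share
    of all recommendations. Not meaningful for catalogs of top_k items or fewer."""
    if not recommended_items:
        return False
    counts = Counter(recommended_items)
    top_total = sum(count for _, count in counts.most_common(top_k))
    return top_total / len(recommended_items) > max_share


baseline = [100, 110, 90, 105, 95]   # response lengths from a normal week
this_week = [200, 210, 190]          # average length has doubled
print(length_shift_alert(baseline, this_week))

recs = ["sku-1"] * 90 + [f"sku-{n}" for n in range(2, 12)]
print(concentration_alert(recs))
```

Run on a schedule against last week's logs, a script like this covers both failure cases described above without any dedicated platform.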

Incident log: Document every AI-related incident - a wrong recommendation that a customer complained about, a chatbot conversation that required human correction, a model output that was factually incorrect. Over time, this log reveals patterns that inform both governance improvements and model improvements.
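A spreadsheet is enough for the log itself, but the pattern-finding step is easy to sketch. Everything here is an assumption for illustration: the `Incident` fields, the category labels, and the `recurring` helper are hypothetical, and the sample entries are invented.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Incident:
    """One row of the incident log (illustrative fields)."""
    day: date
    system: str
    category: str  # e.g. "wrong_output", "missed_escalation"
    description: str


def recurring(incidents, min_count=2):
    """Return (system, category) pairs that occurred at least min_count times."""
    counts = Counter((i.system, i.category) for i in incidents)
    return {key: n for key, n in counts.items() if n >= min_count}


log = [
    Incident(date(2025, 1, 7), "Support chatbot", "missed_escalation", "Refund request not handed to an agent"),
    Incident(date(2025, 1, 21), "Support chatbot", "missed_escalation", "Complaint answered with a generic reply"),
    Incident(date(2025, 2, 3), "Recommendation engine", "wrong_output", "Discontinued product suggested"),
]
print(recurring(log))
```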

Only 22% of AI projects make it from pilot to production [4]. Part of the reason is that companies over-engineer the monitoring and governance infrastructure before they have validated the AI capability itself. Start with manual monitoring.

Scale to automated monitoring when your AI usage justifies the investment - not before.

Getting Started: The 90-Day AI Governance Roadmap

Here is the implementation sequence that works for mid-market companies deploying or scaling AI capabilities:

Days 1-14: Inventory and classify. List every AI system in use (including SaaS tools with embedded AI that employees may have adopted independently). Classify each according to the EU AI Act risk framework. Identify the 2-3 highest-risk systems that need immediate attention.


Days 15-30: Create core policies. Write the 4 governance documents: AI inventory, data classification policy, acceptable use guidelines, and vendor assessment checklist. These should total 8-12 pages, not 80-120. Get legal review, then distribute to all teams that use AI tools.

Days 31-60: Address high-risk systems. For any systems classified as high risk, begin the compliance work: risk management documentation, bias testing, human oversight protocols, and technical documentation. 72% of DACH enterprises plan to increase AI investment in 2025 [5] - getting high-risk governance right now prevents it from becoming a bottleneck when you scale.

Days 61-90: Establish monitoring rhythms. Implement weekly output reviews for all customer-facing AI systems. Schedule quarterly bias audits. Set up distribution alerts for your highest-traffic AI features. Create the incident log and assign ownership for maintaining it.


After 90 days, you have a functioning governance framework that meets EU AI Act requirements, protects customer data, and enables your team to deploy new AI capabilities with confidence.

The total investment is approximately 2-3 weeks of focused effort from your CTO, legal counsel, and one senior engineer - not a 6-month program with external consultants.

For the complete perspective on integrating AI into enterprise software products - including the technical architecture that makes governance practical - read our pillar guide on AI in enterprise software. For specific patterns on integrating LLMs into existing applications, see LLM integration patterns.

And for the practical considerations of building vs. buying AI capabilities, read adding AI features to existing products.

If you are navigating AI adoption and want to move fast without creating compliance risk, explore our custom solutions approach. We help mid-market companies build AI capabilities with governance built in from the start - not bolted on after the first incident.

References

- [1] Deloitte (2023). "56% of enterprises lack a formal AI governance framework." deloitte.com

- [2] European Commission (2024). EU AI Act. ec.europa.eu

- [3] McKinsey (2023). "55% of organizations have adopted AI in at least one business function." mckinsey.com

- [4] Gartner (2023). "Only 22% of data science and AI projects make it from pilot to production." gartner.com

- [5] Bitkom / DFKI (2024). "72% of DACH enterprises plan to increase investment in AI." bitkom.org
