Building AI Features Into Existing Products (Without Starting Over)

Table of Contents

- Why the Rewrite Instinct Is Wrong

- Where to Start: The Three Highest-Value AI Insertion Points

- The API-First Integration Pattern

- When Does Semantic Search Beat Keyword Search?

- How Do You Handle Data Privacy With AI APIs?

- The Incremental Integration Roadmap

- What Does the Cost Structure Look Like?

- Avoiding the Three Most Common Integration Mistakes

- References

TL;DR

Your product works. Customers use it. Revenue flows. Now the board wants AI features, the product team has a list of ideas, and somewhere in the background, a competitor just shipped an AI-powered search bar. The pressure to respond is real. The temptation to start over is dangerous.

Key Takeaways

- You do not need to rewrite your product to add AI - API-based AI services from OpenAI, Anthropic, and Google let you integrate AI features into existing codebases in days, not months.

- Start where AI creates the most visible user value: search, recommendations, and content generation are the three highest-ROI entry points for existing products.

- 92% of large companies report positive AI ROI with a median 3-5x return within 14 months - but only when they start with a specific use case, not a platform rewrite.

- The incremental integration pattern - API layer first, then RAG for knowledge grounding, then fine-tuning for domain accuracy - lets you ship AI features every sprint without disrupting your existing architecture.

- The biggest risk is not starting too small. It is starting too big. Companies that attempt AI-first rewrites join the 78% of AI projects that never reach production.

Most products do not need an AI rewrite. They need an AI layer - a set of targeted capabilities added incrementally to an existing architecture. The companies that ship AI features fastest are not the ones rebuilding from scratch.

They are the ones who identify the highest-value insertion points and integrate AI through APIs, retrieval pipelines, and well-scoped feature sprints.

This article covers where to start, which integration patterns work for existing products, and how to avoid the rewrite trap that stalls 78% of AI projects before they reach production [1].

Why the Rewrite Instinct Is Wrong

When leadership says "add AI," the engineering team's first instinct is often to redesign the architecture. New data pipelines. New model serving infrastructure. New microservices. A 6-month roadmap that delivers AI features sometime next year - if it delivers at all.

This instinct comes from a misunderstanding of how modern AI integration works. In 2023, adding AI to a product meant building ML infrastructure. In 2026, it means making API calls. The infrastructure layer has been abstracted away by providers like OpenAI, Anthropic, Google, and AWS Bedrock.

Your product does not need a model training pipeline. It needs an HTTP client and a well-designed prompt.

The numbers support the incremental approach. 92% of large companies report positive AI ROI, with a median return of 3-5x within 14 months of deployment [2]. But that ROI comes from targeted deployments, not platform rewrites.

Gartner projects that by 2026, 80% of enterprises will have used generative AI APIs or deployed generative AI-enabled applications [3]. The emphasis is on APIs - not on custom models.

The rewrite trap is a specific failure mode. A team spends months building AI infrastructure, loses momentum, runs over budget, and delivers a capability that could have been shipped in 2 sprints using an API.

Meanwhile, the competitor who integrated a third-party AI search feature in 3 weeks captured the market attention.

"The fastest path to AI features in your product is not a rewrite. It is a well-scoped API integration delivered in a 2-week sprint. Ship the feature, measure the impact, then decide if you need deeper integration."

Where to Start: The Three Highest-Value AI Insertion Points

Not all AI features deliver equal value. Across 100+ projects, three categories consistently generate the fastest user-visible impact when added to existing products.

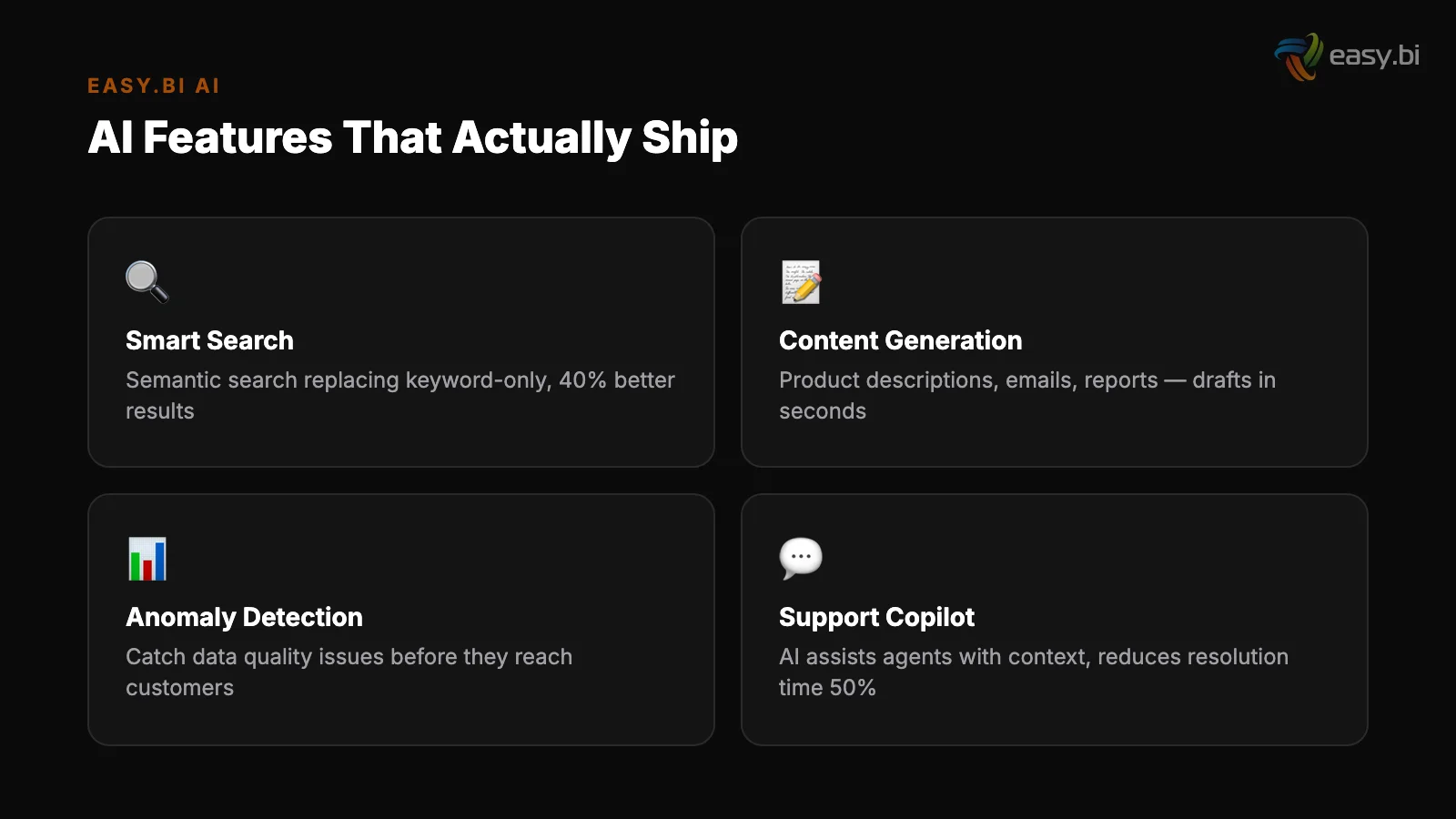

Intelligent search. Traditional keyword search returns documents that contain the query terms. AI-powered semantic search returns documents that match the query intent.

A user searching for "how to cancel my subscription" finds the cancellation flow even if the page never uses the word "cancel." Semantic search improves search relevance by 30-50% and reduces support tickets by 15-25% in most B2B products.

Implementation requires a vector database (Pinecone, Weaviate, or pgvector), an embedding model, and an API endpoint that sits alongside your existing search service.
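The retrieval step described above can be sketched in a few lines. This is a minimal illustration, not a production design: `InMemoryVectorIndex` is a hypothetical stand-in for a real vector database, and `toy_embed` is a hand-made placeholder for an embedding model (a real model would return dense vectors learned from data).

```python
import math
from typing import Callable, Sequence

Vector = Sequence[float]


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def toy_embed(text: str) -> list[float]:
    """Hypothetical placeholder: one dimension per hand-picked concept group."""
    concepts = [
        {"cancel", "end", "terminate", "close"},
        {"invoice", "billing", "payment"},
    ]
    words = set(text.lower().split())
    return [float(len(words & group)) for group in concepts]


class InMemoryVectorIndex:
    """Toy stand-in for a vector database; fine for a demo, not for production."""

    def __init__(self, embed: Callable[[str], Vector]) -> None:
        self._embed = embed
        self._items: list[tuple[str, Vector]] = []

    def add(self, doc_id: str, text: str) -> None:
        # Documents are embedded once, at indexing time.
        self._items.append((doc_id, self._embed(text)))

    def search(self, query: str, top_k: int = 3) -> list[tuple[str, float]]:
        # The query is embedded at request time and compared to every document.
        query_vec = self._embed(query)
        scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in self._items]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_k]


index = InMemoryVectorIndex(toy_embed)
index.add("close-account", "How to end or close your plan")
index.add("billing", "Update payment and billing details")
results = index.search("cancel my subscription")
```

Here the query never shares a word with the "close-account" page, yet it ranks first because both map to the same concept dimension. That is the behavior an embedding model provides at scale.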

Personalized recommendations. If your product has user behavior data - clicks, purchases, usage patterns - you have the foundation for AI-powered recommendations.

Collaborative filtering models from AWS Personalize or custom embeddings can surface relevant content, products, or features based on what similar users engaged with. 79% of AI high performers report that AI has increased revenue in areas where it is deployed [4].

Recommendations are the most direct path from AI investment to revenue impact.

Content generation and assistance. Email drafts, report summaries, product descriptions, documentation suggestions - any feature where users currently write text from scratch is a candidate for AI-assisted generation.

The integration pattern is straightforward: capture the user's context, send it to an LLM API with a well-designed prompt, and present the output as a suggestion the user can edit. One-third of organizations already use generative AI regularly in at least one business function [5]. Your users expect this capability.

| AI Feature | Time to Ship (Existing Product) | Primary Technology | Typical Impact |

|---|---|---|---|

| Semantic search | 2-4 weeks | Embeddings + vector DB | 30-50% search relevance improvement |

| Recommendations | 4-6 weeks | Collaborative filtering / embeddings | 10-25% engagement increase |

| Content generation | 1-3 weeks | LLM API + prompt engineering | 40-60% reduction in content creation time |

| Intelligent categorization | 1-2 weeks | LLM API + function calling | 80-90% automation of manual tagging |

| Anomaly detection | 3-5 weeks | ML model or LLM-based analysis | 35% more issues caught pre-production |

The API-First Integration Pattern

The architectural pattern for adding AI to an existing product follows a consistent structure. You add an AI service layer between your existing application logic and the AI provider APIs. This layer handles prompt construction, response parsing, caching, fallback behavior, and cost management.

Here is the pattern in practice:

Step 1: Create an AI service abstraction. Build a thin service layer that wraps AI provider APIs. This layer standardizes request/response formats, manages API keys, handles rate limiting, and provides a consistent interface to your application code.

When you switch from OpenAI to Anthropic (or use both), only this layer changes.
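One way to sketch such a layer, assuming a single text-in, text-out operation is enough for a first feature. All names here (`CompletionProvider`, `AIService`, `EchoProvider`) are hypothetical; a real adapter would wrap a vendor SDK or HTTP API behind the same interface.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Completion:
    """Provider-independent response shape used by application code."""
    text: str
    provider: str


class CompletionProvider(Protocol):
    """Interface each provider adapter must satisfy."""
    name: str

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Fake provider for local testing; makes no network calls."""
    name = "echo"

    def complete(self, prompt: str) -> str:
        return f"[echo] {prompt}"


class AIService:
    """Thin wrapper: application code depends on this class, never on a vendor SDK."""

    def __init__(self, provider: CompletionProvider) -> None:
        self._provider = provider

    def generate(self, prompt: str) -> Completion:
        raw = self._provider.complete(prompt)
        return Completion(text=raw.strip(), provider=self._provider.name)


service = AIService(EchoProvider())
completion = service.generate("Draft a welcome email")
```

Swapping providers then means writing one new adapter class and changing the constructor argument. Rate limiting, key management, and caching would also live inside `AIService`; they are omitted here to keep the sketch short.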

Step 2: Design prompts as configuration, not code. Store prompt templates externally - in your CMS, a configuration service, or a dedicated prompt management tool. This lets your product team iterate on prompt quality without engineering deployments. A prompt change should be a config update, not a code release.
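A minimal sketch of prompts as configuration, assuming templates are stored as JSON and use `$placeholder` syntax from Python's standard `string.Template`. The JSON string stands in for whatever your CMS or configuration service returns, and the template name `summarize_ticket` is made up for illustration.

```python
import json
from string import Template

# Stand-in for a document fetched from a config service or CMS.
PROMPT_CONFIG_JSON = """
{
  "summarize_ticket": "Summarize this support ticket in $max_sentences sentences:\\n$ticket_text"
}
"""


def load_prompts(raw_json: str) -> dict[str, Template]:
    """Parse the config document into named templates."""
    return {name: Template(body) for name, body in json.loads(raw_json).items()}


def render_prompt(prompts: dict[str, Template], template_name: str, **values: object) -> str:
    """Fill a template; raises KeyError if the template or a placeholder value is missing."""
    return prompts[template_name].substitute(**values)


prompts = load_prompts(PROMPT_CONFIG_JSON)
rendered = render_prompt(prompts, "summarize_ticket", max_sentences=2, ticket_text="Login fails")
```

Using `substitute` rather than `safe_substitute` is a deliberate choice in this sketch: a missing value fails loudly instead of sending a half-filled prompt to the model.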

Step 3: Implement graceful degradation. AI APIs fail. They time out. They return unexpected outputs. Your product must handle these cases without breaking. If the AI search enhancement fails, fall back to keyword search. If content generation times out, show the empty editor.

Users tolerate missing AI features far better than they tolerate broken core features.
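The fallback behavior can be sketched with a timeout wrapper. This assumes the AI call is a blocking function run in a thread pool; `with_fallback`, `slow_semantic_search`, and `keyword_search` are hypothetical names, and the sleep simulates a hung API.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, TypeVar

T = TypeVar("T")

# Shared pool so a slow AI call cannot block the request thread.
_ai_pool = ThreadPoolExecutor(max_workers=4)


def with_fallback(primary: Callable[[], T], fallback: Callable[[], T], timeout_s: float) -> T:
    """Run `primary`; on timeout or any error, return `fallback()` instead."""
    future = _ai_pool.submit(primary)
    try:
        return future.result(timeout=timeout_s)
    except Exception:
        # Covers timeouts and provider errors alike. A timed-out call keeps
        # running in the pool; cancel() only helps if it has not started yet.
        future.cancel()
        return fallback()


def slow_semantic_search() -> list[str]:
    time.sleep(0.2)  # simulates a hung AI API
    return ["semantic-result"]


def keyword_search() -> list[str]:
    return ["keyword-result"]


results = with_fallback(slow_semantic_search, keyword_search, timeout_s=0.05)
```

In a real service you would also count how often the fallback fires, since a rising fallback rate is usually the first sign of a provider problem.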

Step 4: Add observability from day one. Log every AI request - the input, the output, the latency, the cost, and the user action that followed. You cannot optimize what you do not measure.

Track which AI features users actually use, which outputs they accept vs. reject, and how AI interactions correlate with your core product metrics.
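A minimal logging sketch for the fields listed above, using only the standard library. The function names are hypothetical, and the cost estimate assumes a flat price per 1,000 characters purely for illustration; real providers bill per token, so you would substitute the token counts returned by the API.

```python
import json
import logging
import time
import uuid
from typing import Callable

logger = logging.getLogger("ai_requests")


def logged_ai_call(feature: str, prompt: str, call: Callable[[str], str],
                   usd_per_1k_chars: float) -> dict:
    """Invoke `call(prompt)` and log input, output, latency, and estimated cost."""
    request_id = str(uuid.uuid4())
    start = time.perf_counter()
    output = call(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    record = {
        "request_id": request_id,
        "feature": feature,
        "input": prompt,
        "output": output,
        "latency_ms": round(latency_ms, 2),
        # Assumption: character-based pricing as a rough proxy for token pricing.
        "estimated_cost_usd": round((len(prompt) + len(output)) / 1000 * usd_per_1k_chars, 6),
    }
    logger.info(json.dumps(record))
    return record


def log_user_action(request_id: str, action: str) -> dict:
    """Record what the user did with the output, e.g. 'accepted' or 'rejected'."""
    event = {"request_id": request_id, "user_action": action}
    logger.info(json.dumps(event))
    return event


record = logged_ai_call("summary", "hello", str.upper, usd_per_1k_chars=0.002)
action = log_user_action(record["request_id"], "accepted")
```

Joining the two log streams on `request_id` gives the accept/reject rate per feature. If prompts can contain personal data, redact or hash the `input` field before it reaches the log.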

This pattern works with any architecture - monolith, microservices, or anything in between. The AI service layer is a sidecar, not a core architectural dependency.

When Does Semantic Search Beat Keyword Search?

The most common first AI feature for existing products is upgrading search. It is also the feature with the most nuance in implementation. Not every search use case benefits from semantic search, and adding it incorrectly can degrade the experience.

Semantic search adds value when your users search with natural language rather than exact terms, when your content uses different vocabulary than your users, or when relevant results span multiple document types.

It underperforms keyword search when users know exactly what they are looking for (product SKUs, order numbers, specific filenames) or when the corpus is small and well-structured.

The hybrid approach works best for most products. Use semantic search for broad queries and keyword search for exact-match queries. Combine the scores to rank results.

This is how modern search platforms (Elasticsearch with vector extensions, Algolia, or Typesense) handle the transition - they add semantic capabilities alongside existing keyword infrastructure rather than replacing it.
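Score combination can be sketched as a weighted sum of normalized scores. This is one simple fusion method among several, not how any particular search platform implements it; `hybrid_rank` and the `semantic_weight` parameter are hypothetical, and the scores are illustrative values.

```python
def min_max_normalize(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so keyword and semantic scores are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc_id: 1.0 for doc_id in scores}
    return {doc_id: (score - lo) / (hi - lo) for doc_id, score in scores.items()}


def hybrid_rank(keyword_scores: dict[str, float], semantic_scores: dict[str, float],
                semantic_weight: float = 0.5) -> list[tuple[str, float]]:
    """Blend both result sets; a document missing from one side scores 0 there."""
    kw = min_max_normalize(keyword_scores)
    sem = min_max_normalize(semantic_scores)
    combined = {
        doc_id: (1 - semantic_weight) * kw.get(doc_id, 0.0) + semantic_weight * sem.get(doc_id, 0.0)
        for doc_id in set(kw) | set(sem)
    }
    # Sort by score descending, then by id so ties are deterministic.
    return sorted(combined.items(), key=lambda pair: (-pair[1], pair[0]))


ranked = hybrid_rank(
    keyword_scores={"a": 10.0, "b": 2.0},   # e.g. BM25 scores
    semantic_scores={"b": 0.9, "c": 0.1},   # e.g. cosine similarities
    semantic_weight=0.7,
)
ranked_ids = [doc_id for doc_id, _ in ranked]
```

A practical refinement is to set `semantic_weight` per query: close to 0 when the query looks like a SKU or order number, higher for natural-language questions.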

Semantic retrieval is also the foundation of Retrieval-Augmented Generation (RAG), the standard pattern for grounding LLM answers in your own content. 51% of enterprises deploying LLMs use RAG [6]. It reduces hallucinations by 40-60% compared to using a base LLM alone [7].

In the context of product search, RAG retrieves relevant documents from your knowledge base and uses them to generate contextual answers - turning a search bar into a question-answering system.
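The generation half of that pattern is mostly prompt assembly. The sketch below assumes retrieval has already returned `(source_id, text)` pairs; the function name, the character budget, and the instruction wording are all illustrative choices rather than a fixed recipe.

```python
def build_grounded_prompt(question: str, passages: list[tuple[str, str]],
                          max_context_chars: int = 2000) -> str:
    """Assemble a prompt that asks the model to answer only from retrieved passages."""
    context_parts: list[str] = []
    used = 0
    for source_id, text in passages:
        snippet = f"[{source_id}] {text}"
        if used + len(snippet) > max_context_chars:
            break  # stay within the context budget; passages are assumed ranked best-first
        context_parts.append(snippet)
        used += len(snippet)
    context = "\n".join(context_parts) if context_parts else "(no relevant passages found)"
    return (
        "Answer the question using only the passages below. "
        "Cite passage ids in brackets. If the passages do not contain the answer, say so.\n\n"
        f"Passages:\n{context}\n\nQuestion: {question}"
    )


prompt = build_grounded_prompt(
    "How do I cancel?",
    [("kb-1", "Go to Settings, then Billing, then End plan."), ("kb-2", "Refunds take 5 days.")],
)
```

Keeping source ids in the prompt lets the UI link each answer back to the page it came from, which makes wrong answers easier for users to spot.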

How Do You Handle Data Privacy With AI APIs?

Every enterprise team considering AI integration asks the same question: what happens to our data when we send it to an API? The answer depends on the provider, the contract, and the architecture.

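Major LLM providers (OpenAI, Anthropic, Google) offer enterprise tiers that commit contractually to not using your data for model training. Retention is a separate question: how long requests are stored, and whether a zero-retention option exists, varies by provider and contract, so confirm it in writing before sending production data.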

For DACH enterprises operating under GDPR, this distinction matters: data processing agreements, data residency options (EU-hosted inference), and compliance certifications are available from all major providers.

For use cases involving highly sensitive data - medical records, financial data, classified information - the architecture shifts to on-premise or private cloud deployment. Open-source models (Llama, Mistral) can run on your own infrastructure with no data leaving your network.

The trade-off is operational complexity: you manage the inference infrastructure, the model updates, and the scaling.

56% of enterprises lack a formal AI governance framework [8]. Before integrating any AI feature, define three things: what data can be sent to external APIs, what data must stay on-premise, and who approves the classification.

This governance decision takes a day to make and prevents months of compliance cleanup later.
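Once those three decisions are made, they can be encoded as data and enforced in the AI service layer. The sketch below is one possible shape; the category names, approver roles, and the `POLICY` table are invented for illustration and are not a recommended classification.

```python
from dataclasses import dataclass
from enum import Enum


class Destination(Enum):
    EXTERNAL_API = "external_api"
    ON_PREMISE = "on_premise"


@dataclass(frozen=True)
class ClassificationRule:
    destination: Destination  # where this category of data may be processed
    approver: str             # role that signs off on the classification


# Hypothetical policy table; in practice this comes out of the governance decision.
POLICY: dict[str, ClassificationRule] = {
    "public_docs": ClassificationRule(Destination.EXTERNAL_API, "product_owner"),
    "support_tickets": ClassificationRule(Destination.EXTERNAL_API, "data_protection_officer"),
    "medical_records": ClassificationRule(Destination.ON_PREMISE, "data_protection_officer"),
}


def destination_for(data_category: str) -> Destination:
    """Return where a data category may be sent; unclassified data stays on-premise."""
    rule = POLICY.get(data_category)
    if rule is None:
        return Destination.ON_PREMISE  # fail closed
    return rule.destination


ticket_destination = destination_for("support_tickets")
unknown_destination = destination_for("uploaded_contracts")
```

Failing closed for unclassified categories means a new data source cannot reach an external API until someone has explicitly classified it.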

The Incremental Integration Roadmap

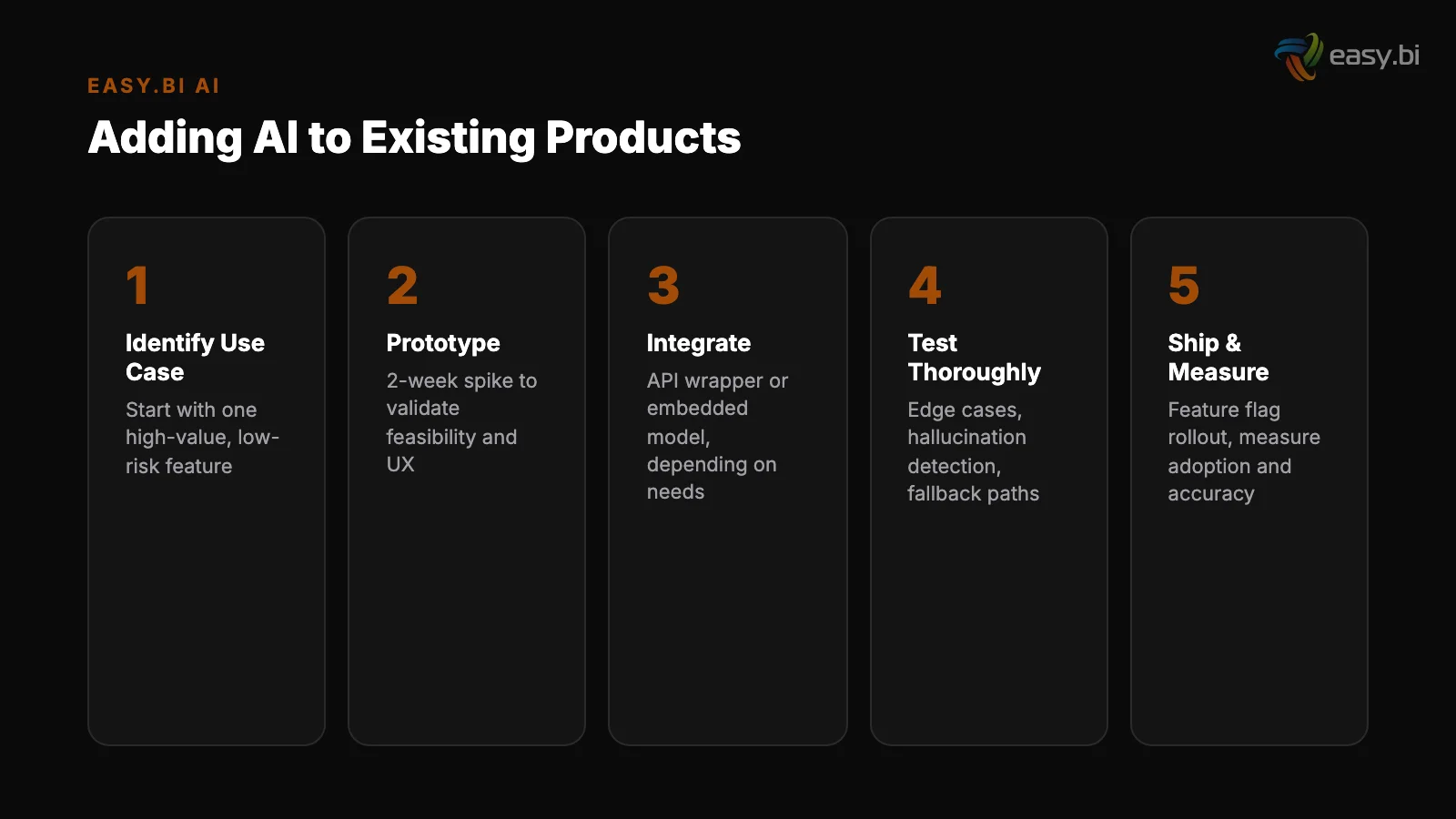

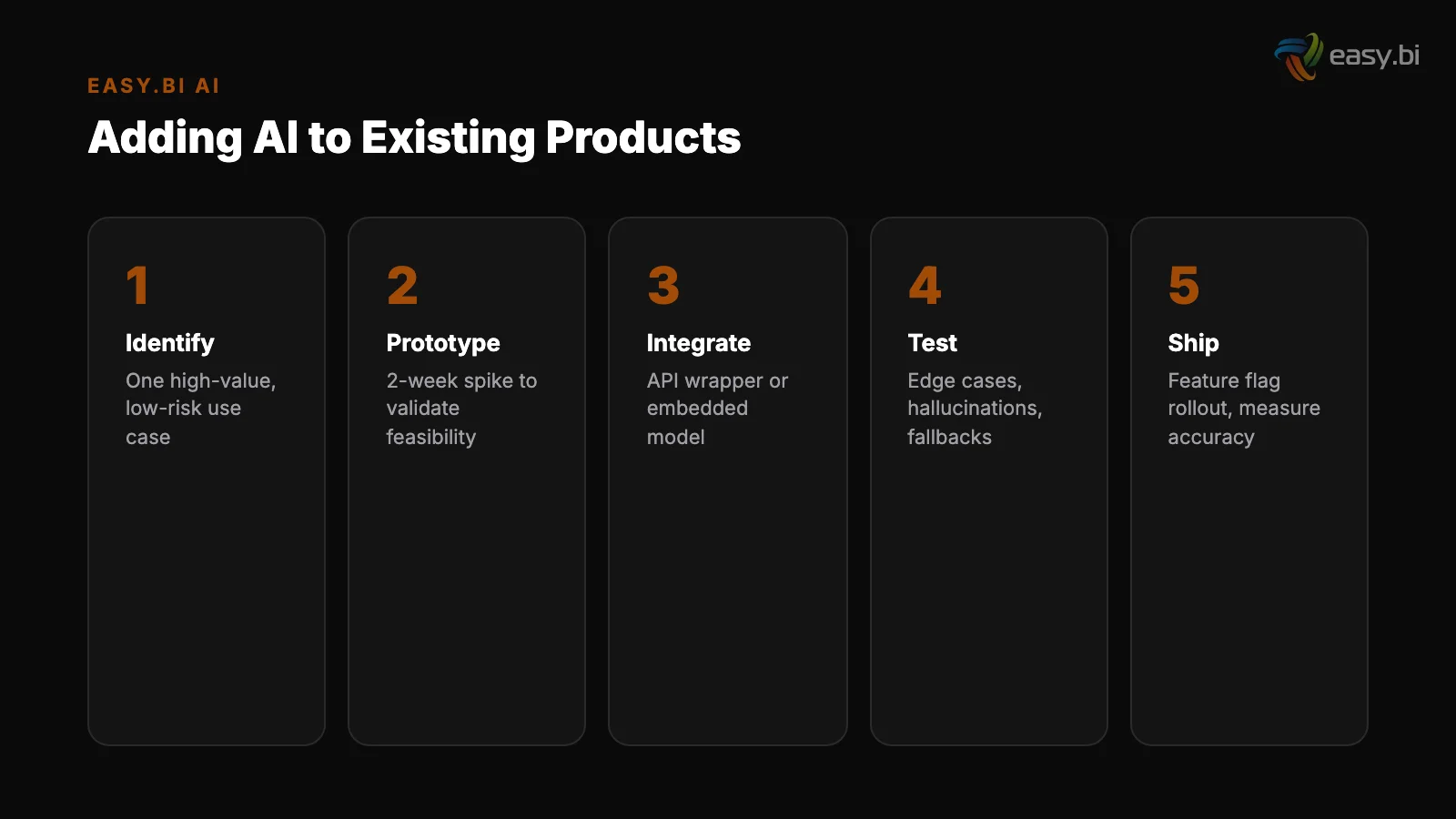

The path from no AI to production AI features follows a predictable progression. Each stage builds on the previous one, and each delivers measurable value on its own.

Stage 1: API-based features (Week 1-4). Integrate LLM APIs for content generation, classification, or summarization. No infrastructure changes required. Ship the feature, measure adoption and quality.

Stage 2: RAG for knowledge grounding (Week 5-10). Add a vector database and build a retrieval pipeline against your product's data. This powers semantic search, contextual help, and knowledge-base Q&A. RAG is the most production-ready LLM pattern for enterprise use.

Stage 3: Fine-tuning for domain accuracy (Week 11-18). If API-based features work but the output quality needs domain-specific improvement, fine-tune a model on your data. Fine-tuning improves task accuracy by 20-40% compared to prompt engineering alone.

Only pursue this stage if Stages 1 and 2 demonstrate that the use case has real user demand.

Stage 4: Agentic workflows (Week 19+). For complex multi-step processes - order processing, document workflows, automated reporting - agentic AI frameworks orchestrate multiple AI calls with tool use and decision logic. This is the most advanced integration pattern and belongs after you have proven value with simpler approaches.

For a deeper look at this stage, see our guide to agentic AI frameworks.

Each stage is independently valuable. You do not need Stage 4 to get ROI from Stage 1. Most products generate significant user value by the end of Stage 2.

What Does the Cost Structure Look Like?

AI API costs follow a per-token pricing model that scales with usage. For a typical B2B SaaS product, the cost breakdown depends on the feature type and volume.

Content generation features (email drafts, summaries, descriptions) cost $0.01-0.10 per generation using GPT-4-class models. For a product with 10,000 daily active users generating 2 content pieces per day, the monthly API cost is $6,000-60,000. Smaller models (GPT-4o-mini, Claude Haiku) reduce this by 5-10x with acceptable quality for most use cases.

Semantic search costs depend on the embedding volume and vector database hosting. Embedding your knowledge base is a one-time cost ($5-50 depending on size). Query-time costs are $0.0001-0.001 per search.

At 100,000 daily searches, the monthly cost is $300-3,000 for the AI layer - less than most teams spend on their existing search infrastructure.
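The arithmetic behind both estimates is the same: daily volume times days per month times unit cost. A small helper makes it easy to rerun with your own numbers; the function name and the 30-day month are assumptions.

```python
def monthly_cost_usd(daily_events: int, cost_per_event_usd: float, days_per_month: int = 30) -> float:
    """Monthly API spend for a feature billed per event (generation or search)."""
    return daily_events * days_per_month * cost_per_event_usd


# Content generation: 10,000 daily active users x 2 generations each.
daily_generations = 10_000 * 2
generation_low = monthly_cost_usd(daily_generations, 0.01)
generation_high = monthly_cost_usd(daily_generations, 0.10)

# Semantic search: 100,000 searches per day.
search_low = monthly_cost_usd(100_000, 0.0001)
search_high = monthly_cost_usd(100_000, 0.001)

print(f"Content generation: ${generation_low:,.0f} to ${generation_high:,.0f} per month")
print(f"Semantic search:    ${search_low:,.0f} to ${search_high:,.0f} per month")
```

That is 600,000 generations and 3,000,000 searches per month, which reproduces the $6,000-60,000 and $300-3,000 ranges quoted above.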

The cost optimization pattern is consistent: start with the most capable model, validate the feature works, then switch to the smallest model that maintains acceptable quality. Most features that launch on GPT-4 can run on GPT-4o-mini or Claude Haiku in production with no measurable quality loss for the end user.
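The "smallest model that maintains acceptable quality" rule can be written as a simple selection over evaluation results. The sketch assumes you already score each candidate model on your own test set; the model names, costs, and scores below are placeholders, not measurements.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_call_usd: float
    eval_score: float  # share of eval cases judged acceptable, 0.0 to 1.0


def cheapest_acceptable(options: list[ModelOption], min_score: float) -> Optional[ModelOption]:
    """Return the lowest-cost model whose eval score meets the quality bar, if any."""
    acceptable = [option for option in options if option.eval_score >= min_score]
    if not acceptable:
        return None
    return min(acceptable, key=lambda option: option.cost_per_call_usd)


# Placeholder candidates for illustration only.
candidates = [
    ModelOption("large-model", cost_per_call_usd=0.05, eval_score=0.95),
    ModelOption("small-model", cost_per_call_usd=0.005, eval_score=0.92),
]
choice = cheapest_acceptable(candidates, min_score=0.90)
```

The quality bar does the real work here: if `min_score` were 0.94, the same function would keep the larger model.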

Global corporate AI investment reached $189.6 billion in 2023, nearly 8x the 2017 level [9]. The companies driving this investment are not building custom models. They are integrating AI capabilities into existing products through APIs and managed services - and they are seeing 3-5x returns within 14 months.

Avoiding the Three Most Common Integration Mistakes

Across AI integrations in multiple enterprise products, the failure patterns are predictable.

Mistake 1: Building before validating. Teams spend 6 weeks building a sophisticated AI feature that users do not want. Instead: build a simple version in 1 sprint, measure usage, then invest in sophistication. The first version of your AI search should take 2 weeks, not 2 months.

Mistake 2: Ignoring the fallback path. When the AI API is slow or unavailable, the feature breaks entirely. Every AI feature needs a non-AI fallback that preserves core functionality. Your product was useful before AI - it should remain useful when the AI layer is temporarily unavailable.

Mistake 3: Treating AI output as trusted output. AI-generated code requires 40% more review time than human-written code due to subtle errors [10]. The same principle applies to AI-generated content, recommendations, and decisions in your product.

Build review mechanisms, confidence thresholds, and human-in-the-loop checkpoints for any AI output that affects user decisions or business data.
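A confidence threshold with a human-in-the-loop checkpoint can be as small as one routing function. This sketch assumes your pipeline produces a confidence value between 0 and 1 for each output; the threshold values and route names are hypothetical and would need tuning against your own accept/reject data.

```python
def review_route(confidence: float, affects_business_data: bool,
                 auto_threshold: float = 0.9, review_threshold: float = 0.6) -> str:
    """Decide what happens to an AI output before it reaches the user or the database."""
    if confidence < review_threshold:
        return "discard"        # too unreliable to show, even as a suggestion
    if affects_business_data or confidence < auto_threshold:
        return "human_review"   # a person confirms before anything is written
    return "auto_apply"


tagging_route = review_route(confidence=0.95, affects_business_data=False)
invoice_route = review_route(confidence=0.95, affects_business_data=True)
weak_route = review_route(confidence=0.40, affects_business_data=False)
```

Note that outputs touching business data always go to review in this sketch, regardless of confidence. That is a conservative default you can relax per feature once the logs show the output is reliable.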

The companies that ship AI features successfully treat them like any other product feature: scoped, measured, iterated, and held to the same quality bar as everything else. The "AI" prefix does not excuse lower standards or longer timelines.

For the broader strategic context on AI adoption in enterprise products - including governance frameworks and build-vs-buy decisions - see our comprehensive guide: AI in Enterprise Software: What's Real, What's Hype, and What to Build First.

For the specific decision framework on when to use third-party APIs versus building your own models, read The AI Build vs. Buy Decision: Custom Models vs. API Wrappers. And for teams ready to explore custom AI integration, starting with a scoped pilot is the fastest path to measurable results.

References

- [1] Gartner, 2023 - Only 22% of AI projects make it from pilot to production.

- [2] NewVantage Partners, 2024 - 92% of large companies report positive AI ROI; median return of 3-5x within 14 months.

- [3] Gartner, 2023 - By 2026, 80% of enterprises will have used generative AI APIs or deployed generative AI-enabled applications.

- [4] McKinsey, 2023 - 79% of AI high performers report revenue increases in areas where AI is deployed.

- [5] McKinsey, 2024 - One-third of organizations use generative AI regularly in at least one business function.

- [6] Databricks, 2024 - RAG is used by 51% of enterprises deploying LLMs.

- [7] Vectara, 2024 - RAG reduces hallucinations by 40-60% compared to base LLM alone.

- [8] Deloitte, 2023 - 56% of enterprises lack a formal AI governance framework.

- [9] Stanford HAI, 2024 - Global corporate AI investment reached $189.6 billion in 2023.

- [10] Stanford University, 2023 - AI-generated code requires 40% more review time due to subtle errors.
