Agentic AI Frameworks: What They Are and Why Your Dev Team Needs One

Table of Contents

- The Three Generations of AI in Development

- What Makes a Framework "Agentic"

- How ebiCore Works as a Production Agentic Framework

- Real Use Cases Beyond Code Generation

- The Compound Advantage of Early Adoption

- The Governance Layer That Makes Agents Safe

- Getting Started Without Disrupting Current Delivery

- References

Key Takeaways

- Agentic AI frameworks autonomously plan, execute, and iterate on multi-step tasks - unlike LLM wrappers that generate one response at a time with no memory or tool access.

- Adoption is accelerating fast: agentic AI frameworks saw 10x growth in GitHub stars from Q1 2023 to Q1 2024, and 42% of enterprises are actively experimenting with agent-based systems.

- ebiCore, easy.bi's proprietary agentic AI framework, orchestrates code generation, test scaffolding, code review triage, and deployment configuration across the full development lifecycle.

- The ROI of agentic AI compounds over sprints: teams using agentic frameworks ship 40% faster than those relying on standalone code assistants, with the gap widening every 2-week cycle.

- Starting small is critical - deploy an agentic framework on one team, one project, and measure impact over 2-3 sprints before scaling across the engineering organization.

The first wave of AI in software development gave developers autocomplete. GitHub Copilot suggests the next line of code. ChatGPT answers a technical question. These tools speed up individual engineers on individual tasks. They do not change how a team delivers software.

Agentic AI frameworks operate at a different level entirely. They do not wait for a prompt. They plan multi-step workflows, call external tools, make decisions based on intermediate results, and iterate until a complex task is complete.

The shift from code assistants to agentic frameworks is the difference between giving a developer a faster keyboard and giving your delivery pipeline an autonomous co-pilot.

This article breaks down what agentic AI frameworks actually are, how they differ from the LLM wrappers flooding the market, where the real use cases exist, and how easy.bi built ebiCore - our proprietary agentic framework - to accelerate enterprise software delivery.

The Three Generations of AI in Development

Understanding agentic AI requires context on what came before it. AI in software development has evolved through three distinct generations, each operating at a different scope.

Generation 1: Code completion (2021-2022). Tools like GitHub Copilot and Tabnine predict the next line or block of code. They operate at the function level - one developer, one file, one suggestion at a time. Copilot users complete tasks 55% faster and accept 30% of code suggestions [1]. That is useful, but limited to the scope of a single developer's current file.

Generation 2: Chat-based assistants (2023). ChatGPT, Claude, and similar tools let developers ask questions, generate code snippets, and debug errors through conversation. They understand more context than autocomplete, but they are stateless - every conversation starts from zero.

They cannot access your codebase, your CI/CD pipeline, or your project's architectural constraints unless you paste them in manually.

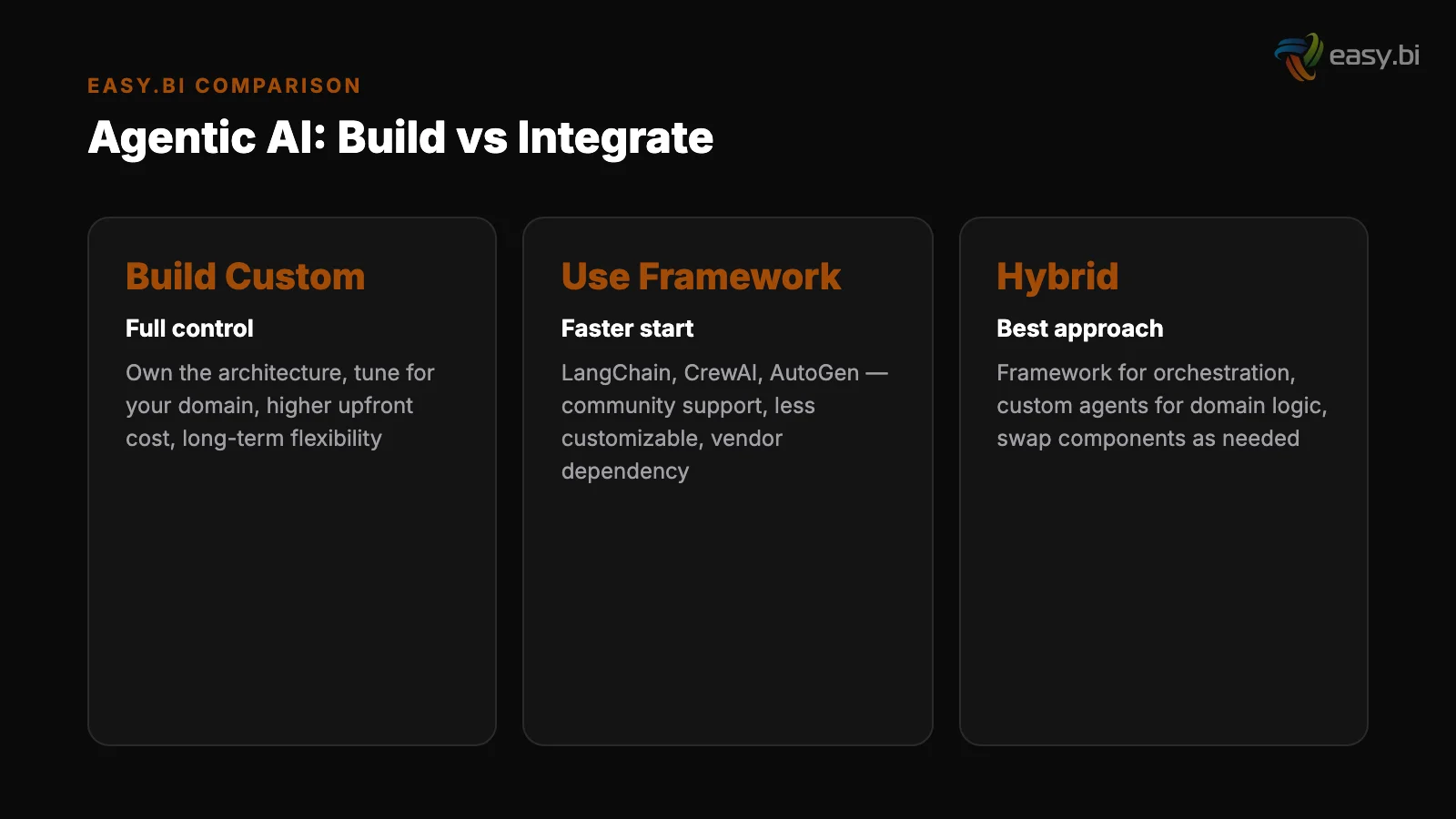

Generation 3: Agentic frameworks (2024-present). Systems like AutoGen, LangGraph, CrewAI, and proprietary frameworks like ebiCore autonomously orchestrate multi-step tasks. They maintain state across interactions, call external tools (APIs, databases, deployment systems), make decisions based on intermediate results, and adapt their approach when something fails.

They operate at the project level, not the function level.

The adoption curve for generation 3 is steep. Agentic AI frameworks saw 10x growth in GitHub stars from Q1 2023 to Q1 2024 [2]. Agentic AI systems are already in production at 8% of enterprises, with 42% actively experimenting [3].

This mirrors the early adoption curve of CI/CD - early movers built compounding advantages that late adopters spent years trying to close.

| Capability | Code Completion | Chat Assistant | Agentic Framework |
|---|---|---|---|
| Scope | Single function/file | Single conversation | Full project lifecycle |
| State management | None | Session only | Persistent across tasks |
| Tool access | IDE only | None (manual paste) | APIs, CI/CD, databases, repos |
| Decision making | Next token prediction | Response generation | Multi-step planning and execution |
| Error handling | None | Suggests fixes on request | Detects, diagnoses, retries autonomously |
| Team impact | Individual developer | Individual developer | Entire delivery pipeline |

What Makes a Framework "Agentic"

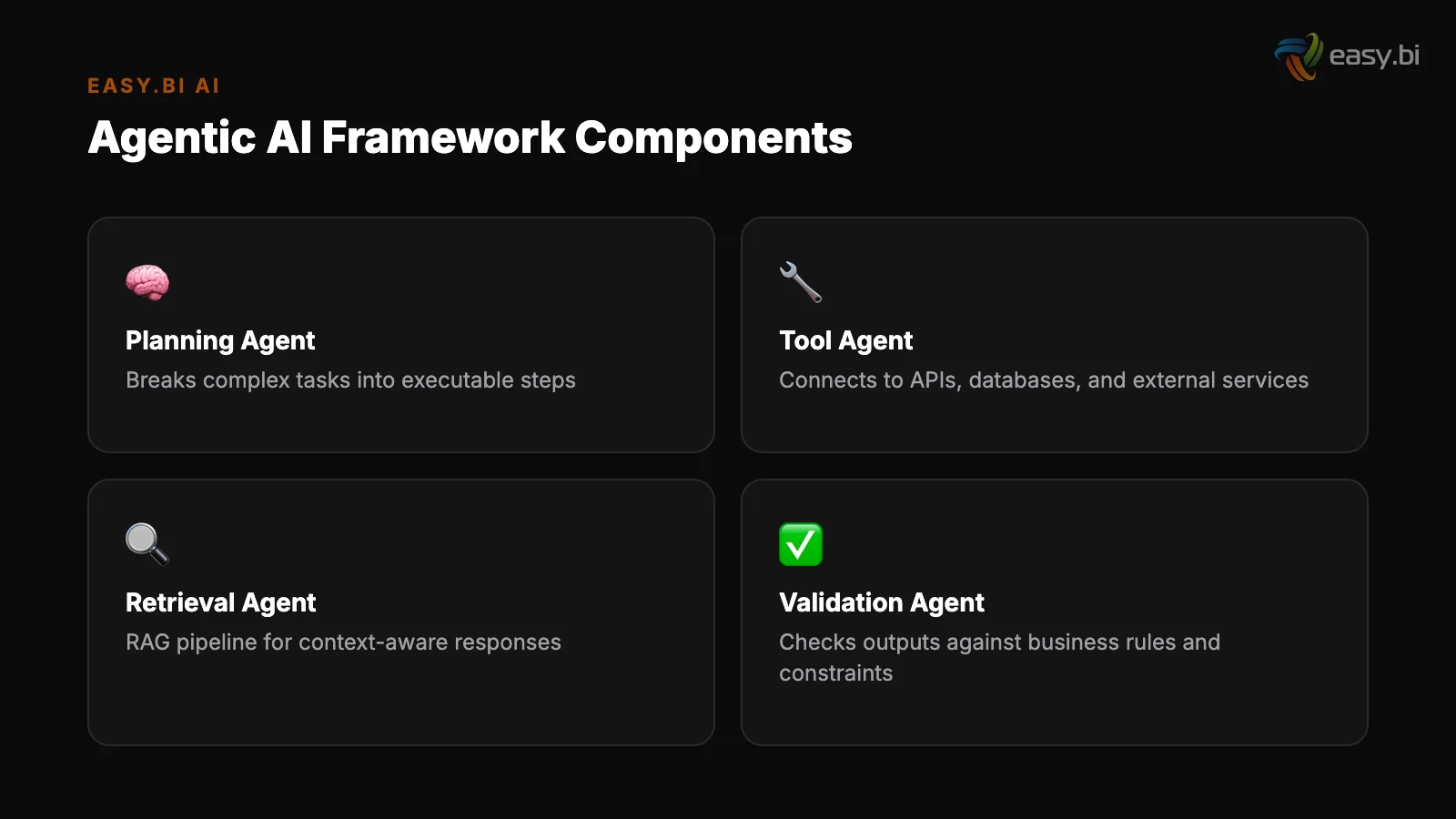

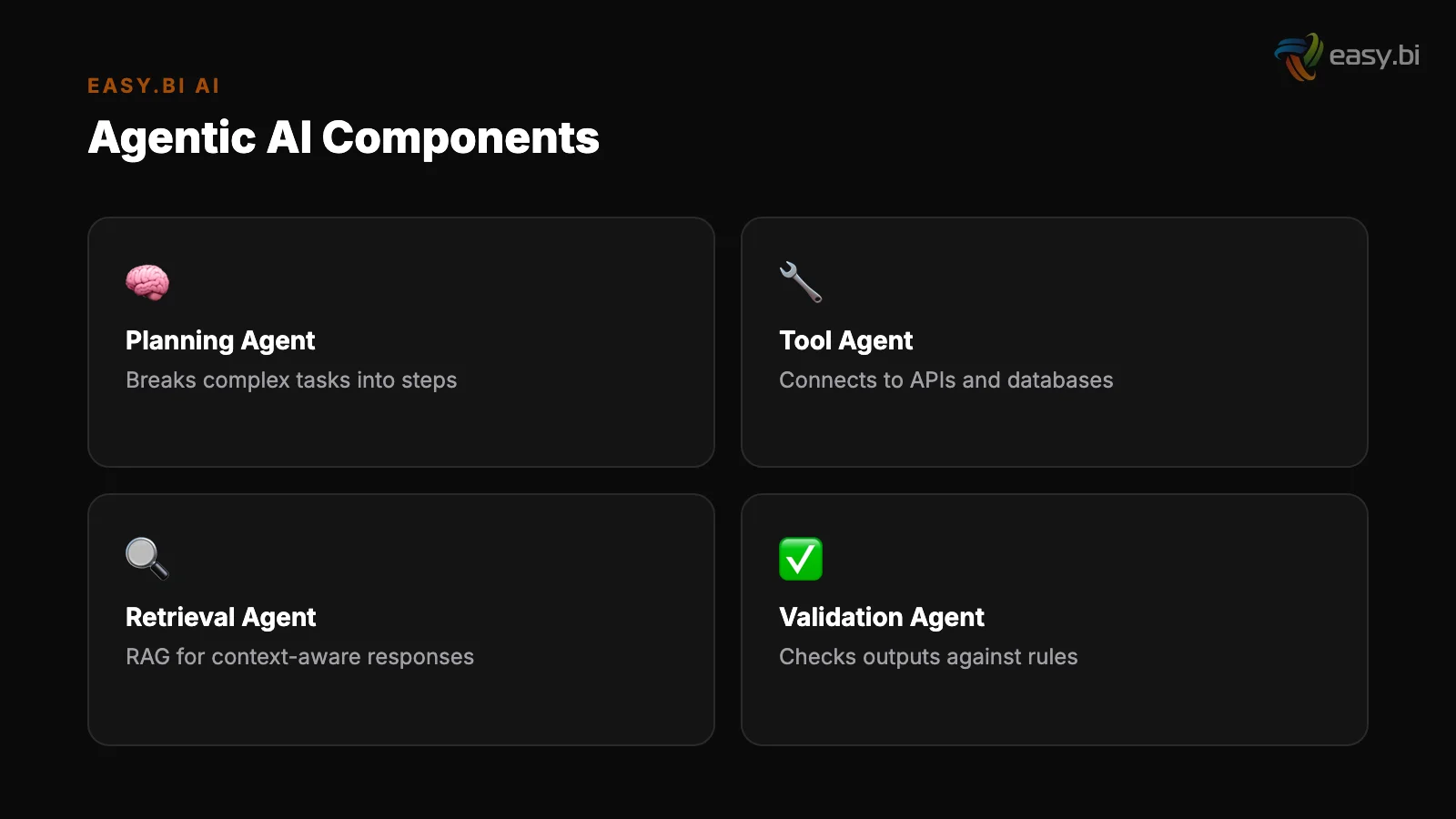

The term "agentic AI" gets applied loosely. Every SaaS product with an API call to GPT-4 claims to be agentic. Most are not. An actual agentic AI framework has four properties that separate it from LLM wrappers with better marketing.

Autonomous planning. Given a goal ("generate integration tests for the user authentication module"), the agent breaks the task into subtasks, determines the execution order, and identifies the tools and data it needs. It does not require step-by-step instructions from the developer.

Tool use. The agent calls external systems - reads from your Git repository, queries your database schema, runs your test suite, checks your CI/CD pipeline status. It operates on real project data, not on whatever the developer remembered to paste into a chat window.

Memory and context. The agent maintains state across tasks and sessions. It knows the patterns established in previous sprints, the architectural decisions documented in ADRs, and the coding standards enforced across the project. Each interaction builds on what came before.

Iterative execution. When a generated test fails, the agent reads the error, adjusts its approach, and tries again. When a code review finds a violation of a well-defined rule, the agent can fix it without human intervention.

This feedback loop is what makes agentic AI qualitatively different from tools that generate one output and stop.
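The four properties can be sketched as a single loop. This is a minimal illustration, not how ebiCore or any named framework is implemented: `run_agent`, `toy_plan`, and `make_toy_executor` are hypothetical names, and the "tools" are stand-ins that simulate one failing test run.

```python
from dataclasses import dataclass, field


@dataclass
class AgentRun:
    goal: str
    history: list = field(default_factory=list)  # memory carried across attempts


def run_agent(goal, plan, execute, max_attempts=3):
    """Plan, execute, evaluate, and retry until all steps pass or attempts run out."""
    run = AgentRun(goal)
    for attempt in range(1, max_attempts + 1):
        steps = plan(goal, run.history)        # autonomous planning, informed by memory
        failures = []
        for step in steps:
            ok, detail = execute(step)         # tool use (stubbed here)
            run.history.append(f"attempt {attempt}: {step} -> {detail}")
            if not ok:
                failures.append(step)
        if not failures:                       # evaluation: stop only on a clean run
            return True, run
    return False, run


def toy_plan(goal, history):
    # Hypothetical planner: add a fix step once an earlier attempt has failed.
    steps = ["read module", "write tests", "run tests"]
    if any("failed" in entry for entry in history):
        steps.insert(2, "fix failing assertion")
    return steps


def make_toy_executor():
    state = {"fixed": False}

    def execute(step):
        if step == "fix failing assertion":
            state["fixed"] = True
        if step == "run tests" and not state["fixed"]:
            return False, "failed"
        return True, "ok"

    return execute


done, run = run_agent(
    "generate integration tests for the user authentication module",
    toy_plan,
    make_toy_executor(),
)
print(done, len(run.history))
```

The first attempt ends with a failed test run, the planner sees that failure in the history, and the second attempt inserts a fix step before rerunning. A real framework would replace the stubs with model calls and actual tool integrations.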

"An LLM wrapper gives you a response. An agentic framework gives you a result. The difference is that the framework plans, executes, evaluates, and iterates until the task is done - not until the token limit is reached."

How ebiCore Works as a Production Agentic Framework

ebiCore is easy.bi's proprietary agentic AI-based SaaS development framework running on enterprise high-availability architecture. No competitor in the DACH nearshore space has an equivalent AI-powered development framework. That is not a marketing claim - it is a verifiable market position.

ebiCore connects to the full project context: the codebase, the CI/CD pipeline, the sprint backlog, and the architectural constraints defined by the solution architect. When a developer starts a task, ebiCore already knows the project's patterns, the testing requirements, and the deployment target.

Here is what that looks like across the delivery lifecycle:

Sprint planning. ebiCore analyzes the backlog, flags dependencies between tickets, and estimates complexity based on historical data from similar tasks across 100+ delivered projects. The senior engineer makes the final call - but they start with data instead of gut feeling.
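As a rough illustration of dependency flagging (not ebiCore's actual implementation), the sketch below orders a backlog with Python's standard `graphlib` module and lists tickets that are still blocked. The ticket IDs and the `plan_order` and `blocked_tickets` helpers are made up for the example.

```python
from graphlib import TopologicalSorter

# Hypothetical backlog: ticket -> set of tickets it depends on.
backlog = {
    "API-1": set(),
    "API-2": {"API-1"},
    "UI-3": {"API-2"},
}


def plan_order(tickets):
    """Return an order in which every ticket comes after its dependencies."""
    return list(TopologicalSorter(tickets).static_order())


def blocked_tickets(tickets, done):
    """Return open tickets that still have at least one unfinished dependency."""
    return sorted(t for t, deps in tickets.items() if t not in done and deps - done)


order = plan_order(backlog)
blocked = blocked_tickets(backlog, done={"API-1"})
print(order)
print(blocked)
```

Complexity estimation from historical data is a separate problem and is not covered by this sketch.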

Code scaffolding. For a Symfony backend service, ebiCore generates entity classes, the repository layer, API endpoints, and corresponding test stubs - all following the project's existing patterns. The developer focuses on the business logic that makes the service unique, not the boilerplate that every service requires.

Code review triage. Every merge request gets an automated pre-review against architectural rules, security patterns, and performance baselines. ebiCore catches the issues that slip past human reviewers late in the day: missing null checks, N+1 query patterns, unhandled error responses.

Human reviewers then focus on logic, architecture, and business correctness - the decisions AI cannot reliably make.

Test generation. From user stories and acceptance criteria, ebiCore generates integration tests, edge case scenarios, and regression test suites. AI-powered test generation reduces manual test writing effort by 60% and increases test coverage by 25% in enterprise codebases [4].

The tests that teams skip because they are tedious actually get written.

Deployment configuration. Kubernetes manifests, Docker configurations, CI/CD pipeline updates - generated from templates tuned to the project's infrastructure. No copy-paste errors in YAML files at 2 AM.
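To make the template idea concrete, here is a minimal sketch that builds a Kubernetes `apps/v1` Deployment manifest from a few parameters. The function name, service name, image URL, and defaults are assumptions for illustration; the sketch emits JSON, which Kubernetes accepts alongside YAML.

```python
import json


def deployment_manifest(name: str, image: str, replicas: int = 2, port: int = 8080) -> dict:
    """Build a Deployment manifest; selector and pod labels are derived from one source."""
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


# Hypothetical service and registry, used only to show the call.
manifest = deployment_manifest(
    "billing-api", "registry.example.com/billing-api:1.4.2", replicas=3
)
print(json.dumps(manifest, indent=2))
```

Deriving the selector and the pod labels from the same value removes one common class of hand-edited manifest error: a selector that matches no pods.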

Real Use Cases Beyond Code Generation

The obvious application of agentic AI is writing code faster. The less obvious - and often higher-value - applications extend across the entire software delivery lifecycle and beyond.

Automated incident response. An agent detects an anomaly in production logs, queries monitoring systems for correlated events, identifies the root cause by comparing against known incident patterns, applies a known fix from the runbook, and escalates to a human only if the automated fix fails.

AI in DevOps reduces mean time to resolution (MTTR) by 50% and automates 40% of incident triage [5].
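The detect, match, fix, escalate flow can be sketched in a few lines. Everything here is hypothetical: the runbook entries, the fix names, and the `apply_fix` callback stand in for real monitoring and remediation tooling, and plain substring matching stands in for the pattern comparison a real agent would do.

```python
# Hypothetical runbook: known log pattern -> name of the automated fix.
RUNBOOK = {
    "connection pool exhausted": "restart_db_proxy",
    "disk usage above 90%": "rotate_logs",
}


def handle_incident(log_line: str, apply_fix) -> str:
    """Apply a known fix for a recognized pattern; escalate to a human otherwise."""
    lowered = log_line.lower()
    for pattern, fix in RUNBOOK.items():
        if pattern in lowered:
            if apply_fix(fix):                 # apply_fix returns True on success
                return f"resolved:{fix}"
            return f"escalated:{fix} failed"   # automated fix did not work
    return "escalated:no known pattern"        # nothing in the runbook matched


print(handle_incident("ERROR Connection pool exhausted on db-1", lambda fix: True))
```

The important design choice is that both failure paths end in escalation: the agent never retries an unknown situation on its own.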

Intelligent document processing. An agent receives a business document (contract, invoice, specification), classifies it, extracts structured data, validates the data against business rules, and routes it to the correct downstream workflow.

This is not OCR plus templates - it is contextual understanding that adapts to document variations without manual rule updates.

Multi-system orchestration. An agent coordinates actions across ERP, CRM, and warehouse management systems to fulfill a complex business process.

Instead of building point-to-point integrations that break when one system changes its API, the agent interprets the business intent and adapts its execution path based on the current state of each system.

Legacy code comprehension. For enterprises sitting on large legacy codebases, agentic frameworks can analyze undocumented code, generate documentation, identify dead code paths, and create test coverage for modules that were never tested. This is a prerequisite for legacy modernization projects where understanding the existing system is half the battle.

72% of developers already use AI tools for coding [6]. The next step is extending AI from individual code tasks to the orchestration layer that connects them.

The Compound Advantage of Early Adoption

Agentic AI adoption follows the same pattern as CI/CD, containerization, and cloud-native architecture. Early adopters build institutional knowledge and tooling advantages that compound over time. Late adopters spend years closing a gap that widens every sprint.

The math works like this. A team using an agentic framework ships 40% faster than one relying on traditional processes. After one quarter (6 sprints), the agentic team has delivered the equivalent of 8.4 sprints of traditional output. After two quarters (12 sprints), it has delivered 16.8, a gap of nearly 5 sprints.

That is not a difference you close by working harder - it requires a structural change in how your team operates.
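The arithmetic is easy to check. The short script below assumes a constant 40% speedup and 6 two-week sprints per quarter, as in the text; the constant and function names are only for illustration.

```python
SPEEDUP = 1.4              # 40% faster than the traditional baseline
SPRINTS_PER_QUARTER = 6    # two-week sprints


def equivalent_output(sprints: int, speedup: float = SPEEDUP) -> float:
    """Output of the agentic team, measured in traditional sprint-equivalents."""
    return sprints * speedup


for quarters in (1, 2):
    sprints = quarters * SPRINTS_PER_QUARTER
    output = equivalent_output(sprints)
    gap = output - sprints
    print(f"{quarters} quarter(s): {output:.1f} sprint-equivalents, gap {gap:.1f}")
```

Under these assumptions the gap is 2.4 sprints after one quarter and 4.8 after two. It grows linearly with every sprint for as long as the speedup holds.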

The institutional knowledge component matters even more. Every sprint that an agentic framework runs against your codebase, it builds a deeper model of your project's patterns, failure modes, and architectural constraints.

The framework at sprint 20 is measurably better at generating relevant scaffolding and catching project-specific anti-patterns than the framework at sprint 1. This learning curve is non-transferable - your competitor cannot buy your institutional AI context.

For DACH mid-market companies, the talent gap amplifies this effect. With 149,000 IT specialist positions unfilled in Germany, you cannot hire your way to faster delivery.

Multiplying the output of your existing team through agentic AI is not just more efficient - for many companies, it is the only viable path to competitive shipping speed.

The Governance Layer That Makes Agents Safe

Autonomous systems need guardrails. The companies deploying agentic AI responsibly invest as much in the governance layer as in the agent capabilities themselves.

56% of enterprises lack a formal AI governance framework [7]. For agentic systems that autonomously execute multi-step workflows, this gap creates real risk. An agent that deploys code without human approval, modifies customer data without audit trails, or makes architectural decisions outside defined boundaries can create damage at machine speed.

Effective governance for agentic AI includes:

- Trust boundaries: Define which actions an agent can take autonomously and which require human-in-the-loop approval. Code scaffolding and test generation are safe to automate. Production deployments and database schema changes need a human checkpoint.

- Audit trails: Every action an agent takes - every tool call, every decision, every file modification - gets logged with full traceability. The EU AI Act requires this for high-risk systems [8]. Build it in from day one.

- Fallback mechanisms: When an agent encounters a situation outside its training or capabilities, it must fail gracefully - stop, notify a human, and preserve system state. Silent failures in autonomous systems are unacceptable.

- Output validation: AI-generated code requires 40% more review time than human-written code due to subtle logic errors and security vulnerabilities [9]. Agentic frameworks must integrate validation steps before any generated artifact enters the production pipeline.
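Trust boundaries and audit trails can be combined in one small gate that every agent action passes through. This is a sketch under stated assumptions, not ebiCore's governance layer: the action names, the `authorize` function, and the in-memory `audit_log` are hypothetical, and a production system would write to durable, tamper-evident storage.

```python
from datetime import datetime, timezone
from typing import Optional

# Hypothetical trust boundaries.
AUTONOMOUS = {"scaffold_code", "generate_tests", "triage_review"}
NEEDS_APPROVAL = {"deploy_production", "change_db_schema"}

audit_log = []  # in-memory for the sketch only


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Decide whether an action may run, and log the decision either way."""
    if action in AUTONOMOUS:
        allowed = True
    elif action in NEEDS_APPROVAL:
        allowed = approved_by is not None      # human-in-the-loop checkpoint
    else:
        allowed = False                        # unknown actions fail closed
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "approved_by": approved_by,
        "allowed": allowed,
    })
    return allowed


print(authorize("generate_tests"))
print(authorize("deploy_production"))
print(authorize("deploy_production", approved_by="lead.engineer"))
```

Denied requests are logged as well as allowed ones, so the trail shows what the agent attempted, not only what it did.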

At easy.bi, ebiCore operates within strict governance boundaries defined per project. The framework handles scaffolding, testing, and review triage autonomously. Architectural decisions, production deployments, and security-critical changes go through human review. The agent accelerates the delivery pipeline without bypassing the quality gates that protect it.

Getting Started Without Disrupting Current Delivery

The path to agentic AI adoption is incremental, not revolutionary. Teams that attempt a full pipeline overhaul on day one create confusion, resistance, and regression. Teams that start with a single, measurable use case create proof that pulls adoption forward.

Week 1-2: Pick one team and one project. Choose a project with established patterns and an active codebase - not a greenfield experiment. The goal is to measure impact against an existing baseline, not to explore what is theoretically possible.

Week 3-4: Deploy AI-assisted code review and test generation. These are the lowest-risk, highest-visibility entry points. AI-assisted code review reduces review time by 30-50% and catches 15-20% more bugs than human-only reviews [10].

Test generation is where teams see the most immediate time savings because it eliminates the tedious-but-necessary work that engineers defer.

Week 5-8: Measure, iterate, expand. Track review turnaround time, test coverage, and post-deployment bug rates before and after. If the numbers improve - and across 100+ projects, they consistently do - build the business case for broader adoption. Then expand to the next team.
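A before-and-after comparison needs nothing more than a baseline and a percent change per metric. The numbers below are placeholders chosen to show the calculation, not measured results, and the metric names are assumptions.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from the baseline, in percent."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


# Placeholder values for illustration only; substitute your own measurements.
baseline = {"review_turnaround_hours": 20.0, "test_coverage_pct": 60.0, "post_deploy_bugs": 10.0}
pilot = {"review_turnaround_hours": 15.0, "test_coverage_pct": 66.0, "post_deploy_bugs": 8.0}

report = {metric: round(percent_change(baseline[metric], pilot[metric]), 1) for metric in baseline}
for metric, change in report.items():
    print(f"{metric}: {change:+.1f}%")
```

Capture the baseline before the pilot starts. Reconstructing it afterwards tends to produce numbers nobody trusts.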

This is exactly how we rolled out ebiCore across easy.bi's 50+ engineers and 4 countries. One team at a time. One sprint at a time.

Within 6 months, every team was using the framework - not because leadership mandated it, but because the teams that adopted it first were visibly outperforming.

For companies that want to accelerate this timeline, working with a team that has already integrated agentic AI into production workflows is faster than building the capability internally. The patterns are proven. The pitfalls are mapped. The results are measurable from sprint 1.

To understand the broader context of AI in enterprise development - from LLM integration patterns to governance frameworks - see our comprehensive guide: AI in Enterprise Software: What's Real, What's Hype, and What to Build First.

For a focused look at how AI specifically changes enterprise development pipelines, read How AI Is Reshaping Enterprise Software Development.

References

- [1] GitHub, 2022 - Copilot users complete tasks 55% faster and accept 30% of code su

- [2] GitHub, 2024 - Agentic AI frameworks (AutoGen, LangGraph, CrewAI) saw 10x growth

- [3] Sequoia Capital, 2024 - Agentic AI systems in production at 8% of enterprises; 4

- [4] Diffblue, 2023 - AI-powered test generation reduces manual test writing effort b

- [5] Gartner, 2023 - AI in DevOps (AIOps) reduces MTTR by 50% and automates 40% of in

- [6] Stack Overflow, 2024 - 72% of developers report using AI tools for coding, up fr

- [7] Deloitte, 2023 - 56% of enterprises lack a formal AI governance framework.

- [8] European Commission, 2024 - EU AI Act requires high-risk AI systems to meet stri

- [9] Stanford University, 2023 - AI-generated code requires 40% more review time due

- [10] Google Research, 2023 - AI-assisted code review reduces review time by 30-50% an
