AI-Assisted Code Review: The 60% Time Savings Nobody Expected

Table of Contents

- The Cost of Slow Code Reviews

- What AI Code Review Actually Catches

- What AI Code Review Misses

- The GitHub Copilot Effect on Review Workload

- Integrating AI Review Into Existing PR Workflows

- The Economics of AI-Assisted Review at Scale

- Common Pitfalls and How to Avoid Them

- Connecting AI Review to the Full Development Lifecycle

- References

TL;DR


Key Takeaways

- AI-assisted code review reduces review time by 30-50% and catches 15-20% more bugs than human-only reviews - the biggest quality gain comes from consistency, not intelligence.

- Code review catches 60-65% of defects before production, making it more effective than testing alone. AI amplifies this by handling pattern-matching work that human reviewers miss under time pressure.

- AI-generated code requires 40% more review time due to subtle logic errors - teams using AI code generation without AI code review are creating a quality gap that compounds every sprint.

- The integration path is incremental: start with AI pre-review on pull requests, measure defect escape rates for 2-3 sprints, then expand to architectural rule enforcement and security scanning.

- AI does not replace senior reviewers. It eliminates the 60% of review time spent on style, formatting, and common anti-patterns so humans can focus on logic, architecture, and business correctness.


Code review is the single most effective quality gate in software development. It catches 60-65% of defects before they reach production - more than any testing strategy alone [1]. Yet most engineering teams treat it as a bottleneck. Reviews stack up. Pull requests sit for hours or days.

Senior engineers spend their afternoons reading code instead of designing systems.

AI-assisted code review changes this equation, not by replacing human reviewers but by handling the pattern-matching work that consumes most of the review time: style inconsistencies, common security vulnerabilities, performance anti-patterns, and coding standard violations.

The human reviewer then focuses on what AI cannot do well: evaluating business logic, questioning architectural decisions, and catching the subtle design flaws that create long-term technical debt.

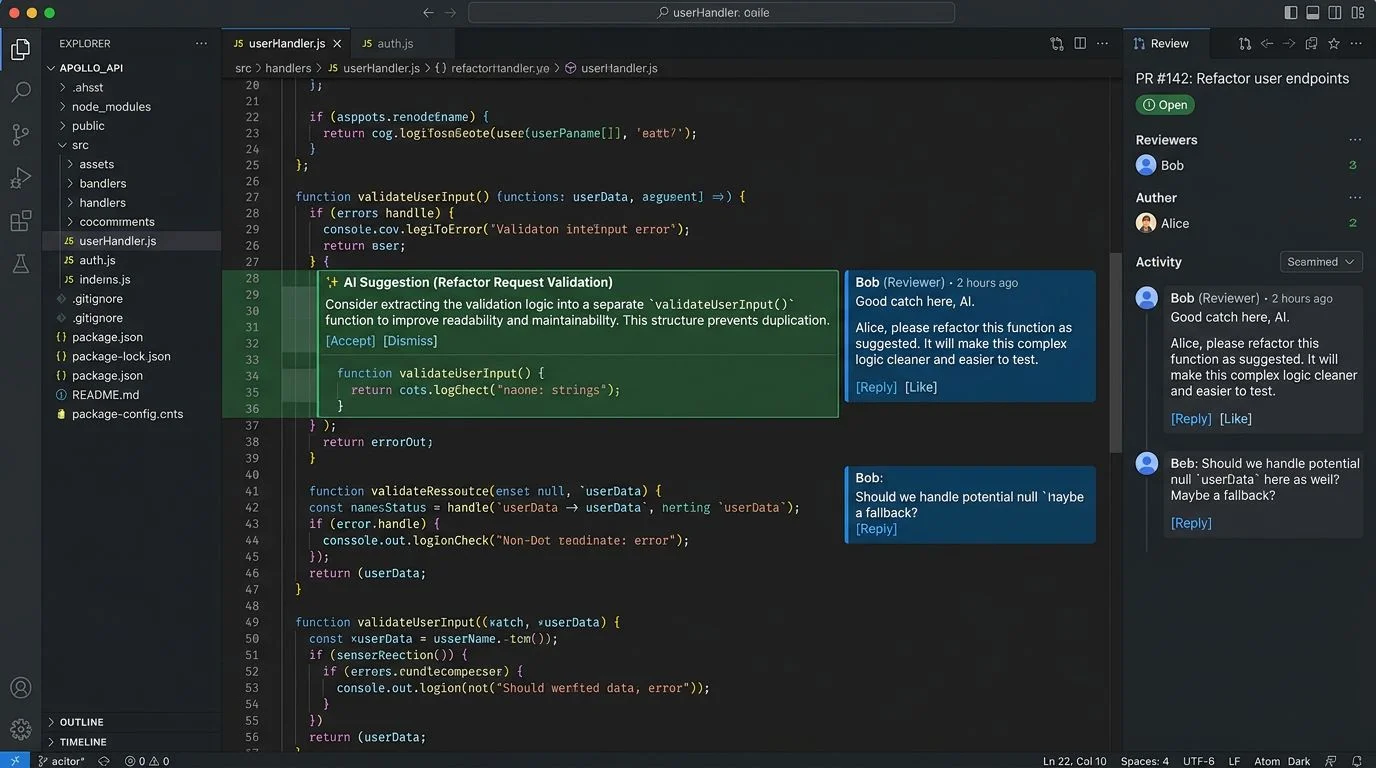

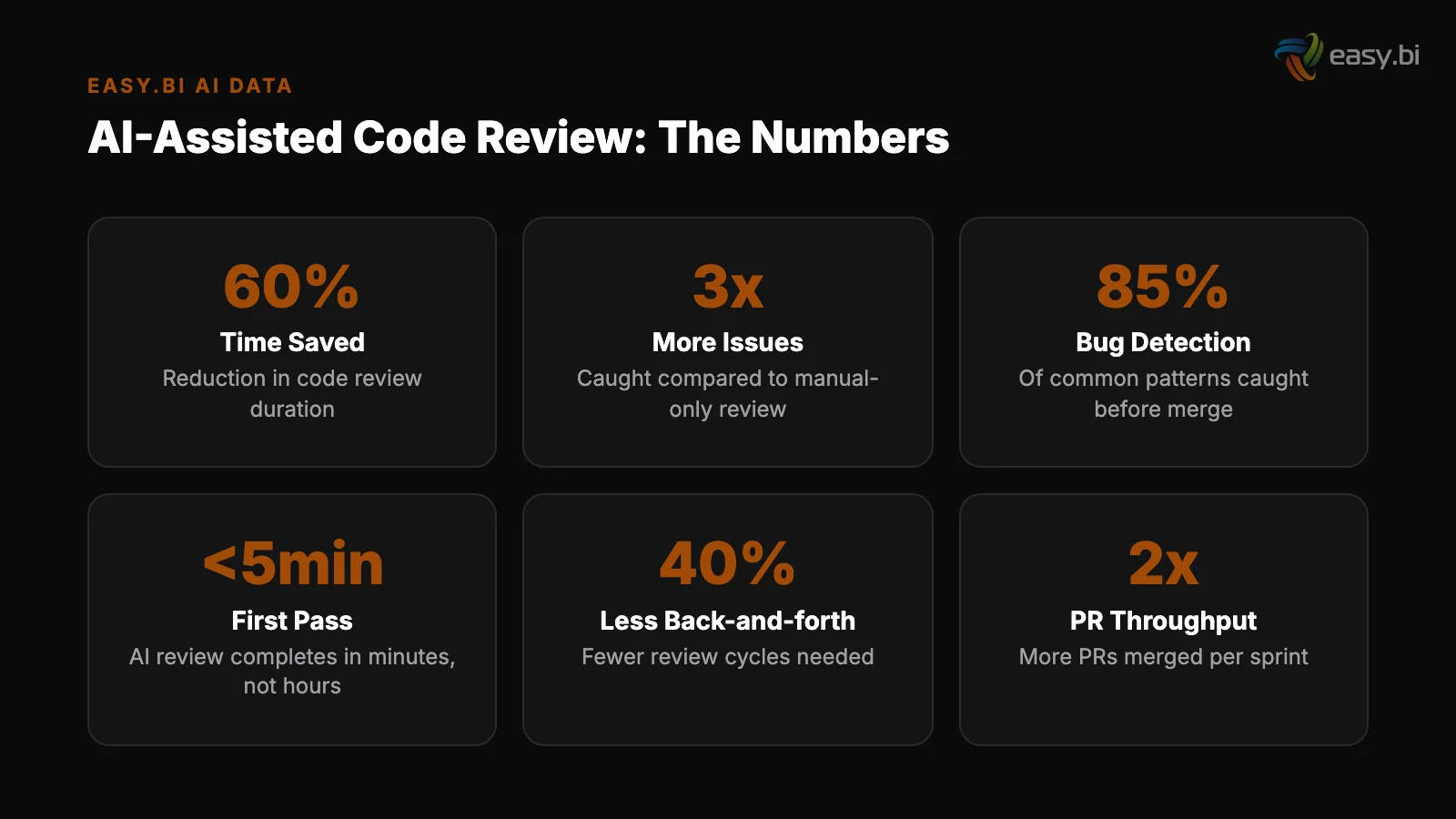

Published research puts concrete numbers on the gain: AI-assisted code review reduces review time by 30-50% and catches 15-20% more bugs than human-only reviews [2].

This article covers what AI code review actually does in practice, what it misses, how to integrate it into existing PR workflows, and how the economics of review shift when you combine AI tooling with experienced engineers.

The Cost of Slow Code Reviews

Before discussing how AI changes code review, it is worth quantifying the problem it solves. Slow code reviews are not just an inconvenience - they are a measurable drag on delivery velocity, quality, and team morale.

The average code review turnaround in enterprise teams is 4-8 hours. For distributed teams across time zones, it can stretch to 24+ hours. Every hour a pull request waits is an hour the developer context-switches to another task, only to context-switch back when review feedback arrives.

Commonly cited estimates put the cost of each context switch at 20-30 minutes of productive time.

The quality impact is worse. When reviews pile up - 5, 10, 15 open PRs - reviewers start skimming. A reviewer examining their 8th pull request of the day at 4 PM catches fewer issues than the same reviewer examining their first PR at 9 AM.

The defects that slip through become production bugs, and the cost of fixing a bug in production is 6x higher than fixing it during implementation [3].

The compounding effect hits team dynamics. When senior engineers spend 3-4 hours daily reviewing code, they have less time for architecture work, mentoring, and the high-impact engineering that attracted them to the role in the first place.

Over time, review burden becomes a driver of senior engineer attrition - the people who are most valuable as reviewers are the same people most likely to leave if their work becomes repetitive.

"Code review is the most effective defect-prevention tool in software engineering. It is also the most expensive one - unless you separate the work AI can do from the work only humans should do."


What AI Code Review Actually Catches

AI-assisted code review tools operate at a specific layer of the review process. Understanding that layer - and its boundaries - is essential for integrating AI review without creating false confidence.

AI excels at pattern-based detection. This includes:

- Security vulnerabilities: SQL injection patterns, XSS vectors, insecure deserialization, hardcoded credentials, and exposed API keys. AI-assisted vulnerability detection identifies 95% of known vulnerability patterns and reduces false positives by 60% compared to traditional SAST tools [4].

- Performance anti-patterns: N+1 query patterns, unbounded loops, missing pagination, unoptimized database queries, and memory leaks in common frameworks.

- Style and consistency: Coding standard violations, naming convention mismatches, formatting inconsistencies, and import ordering. This is the work that consumes the most reviewer time and delivers the least intellectual value.

- Common bugs: Null pointer risks, off-by-one errors, unclosed resources, race conditions in common patterns, and type mismatches that compile but fail at runtime.

- Dependency risks: Outdated dependencies with known CVEs, license compliance issues, and unnecessary dependency additions that inflate the attack surface.
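To make "pattern-based detection" concrete, here is a minimal rule-based sketch. It is not how AI review tools work internally (they go far beyond regular expressions); it only illustrates the property that matters here: the same check runs on every added line of every PR. The rule names, the `RULES` table and `scan_diff` are hypothetical and deliberately simple.

```python
import re

# Hypothetical rules: each maps a rule ID to a regex applied to one added line.
RULES = {
    # A credential-like variable assigned a string literal, e.g. API_KEY = "..."
    "hardcoded-credential": re.compile(
        r"""(?i)\b(password|passwd|secret|api_key|token)\s*=\s*['"][^'"]+['"]"""
    ),
    # SQL built by concatenation/%-formatting or an f-string inside execute(...)
    "sql-string-concat": re.compile(
        r"""(?i)execute\(\s*(f['"]|['"][^'"]*\b(select|insert|update|delete)\b[^'"]*['"]\s*(\+|%))"""
    ),
}


def scan_diff(added_lines):
    """Return (line_number, rule_id) pairs for every added line that matches a rule.

    added_lines: iterable of (line_number, text) for lines added in a PR.
    """
    findings = []
    for lineno, text in added_lines:
        for rule_id, pattern in RULES.items():
            if pattern.search(text):
                findings.append((lineno, rule_id))
    return findings
```

A parameterized query such as `cursor.execute("SELECT ... WHERE id = %s", (user_id,))` passes, while `cursor.execute("SELECT ... WHERE id = " + user_id)` is flagged. Pure pattern rules like these also produce false positives, which is why the calibration advice later in this article matters.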

These categories account for roughly 60% of the issues found in a typical code review.

They are also the categories where human reviewers are most inconsistent - catching the same pattern in one PR and missing it in the next, depending on fatigue, time pressure, and familiarity with the specific codebase area.

What AI Code Review Misses

The 40% of review work that AI handles poorly is also the 40% that matters most for long-term codebase health.

Business logic correctness. AI can check that a function does what its code, name, and signature suggest. It cannot verify that the function does what the business requirement intended. A perfectly implemented feature that solves the wrong problem passes every AI check and fails the only check that matters.

Architectural coherence. Does this new service belong in the domain where the developer placed it? Does this data flow respect the bounded contexts the team defined? Does this abstraction make the codebase easier or harder to understand in 6 months?

These questions require understanding the project's architectural vision - something that requires human judgment anchored in project history.

Design trade-offs. Should this be a synchronous or asynchronous call? Should this data be denormalized for read performance or normalized for consistency? These trade-offs depend on business context, traffic patterns, and future requirements that no AI tool can fully model.


Team-specific patterns. Every mature codebase develops conventions that are not documented in any style guide: naming patterns for specific domain concepts, preferred testing strategies for certain module types, and architectural decisions that only make sense in the context of the project's history.

AI tools get better at these patterns over time, especially agentic frameworks like ebiCore that maintain project context, but they start from zero on a new codebase.

The implication is clear: AI code review replaces the mechanical work, not the intellectual work. The senior engineer who reviews 8 PRs per day does not become unnecessary. They become 3x more effective because they spend their review time on architecture and business logic instead of chasing missing null checks.

The GitHub Copilot Effect on Review Workload

There is an irony in AI-assisted development that most teams are not talking about. AI code generation tools like GitHub Copilot increase the volume of code entering the review pipeline. Copilot-assisted developers write 46% more code and complete pull requests 55% faster [5].

That means more code arriving at the review stage, faster.

At the same time, AI-generated code requires 40% more review time than human-written code due to subtle logic errors and security vulnerabilities [6]. Code generation tools produce secure code only 67% of the time [7].

The math is uncomfortable. More code generated faster, each line requiring more careful review. Without AI-assisted review to match AI-assisted generation, teams create a growing quality gap. They ship faster, but they ship more defects. The defects compound as technical debt that future sprints must address.
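A back-of-the-envelope version of that math, as a sketch: it assumes the 46% volume increase [5] and the 40% extra review time for AI-generated code [6] apply to the same codebase and combine multiplicatively. The cited studies do not state this; `review_load_multiplier` and its parameters are illustrative.

```python
def review_load_multiplier(volume_increase=0.46, ai_share=1.0, ai_review_penalty=0.40):
    """Estimate total review effort relative to a no-AI baseline of 1.0.

    volume_increase:   extra code entering review (0.46 = +46%)
    ai_share:          fraction of that code that is AI-generated (assumption)
    ai_review_penalty: extra review time per unit of AI-generated code (0.40 = +40%)
    """
    volume = 1.0 + volume_increase
    # Blend review effort per unit of code: AI-generated code costs more to review.
    per_unit_effort = ai_share * (1.0 + ai_review_penalty) + (1.0 - ai_share) * 1.0
    return volume * per_unit_effort
```

With the defaults the result is about 2.04, so review load roughly doubles if all code were AI-generated; with `ai_share=0.5` it is about 1.75. Either way, the reviewer pool stays the same size unless part of the review work is automated.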

| Metric | Without AI Code Gen | With AI Code Gen, No AI Review | With AI Code Gen + AI Review |
|---|---|---|---|
| Code volume per sprint | Baseline | +46% | +46% |
| PR turnaround time | 4-8 hours | 6-12 hours (more code to review) | 1-3 hours (AI pre-review) |
| Defect escape rate | Baseline | +25% to +35% (subtle AI-generated bugs) | -15% to -20% (AI catches more patterns) |
| Senior reviewer time per day | 3-4 hours | 5-6 hours | 1.5-2.5 hours |
| Review focus | Everything | Everything (more of it) | Architecture and business logic |

This table illustrates why AI code review is not optional for teams already using AI code generation. It is the quality counterweight that prevents generation speed from becoming a liability.

Integrating AI Review Into Existing PR Workflows

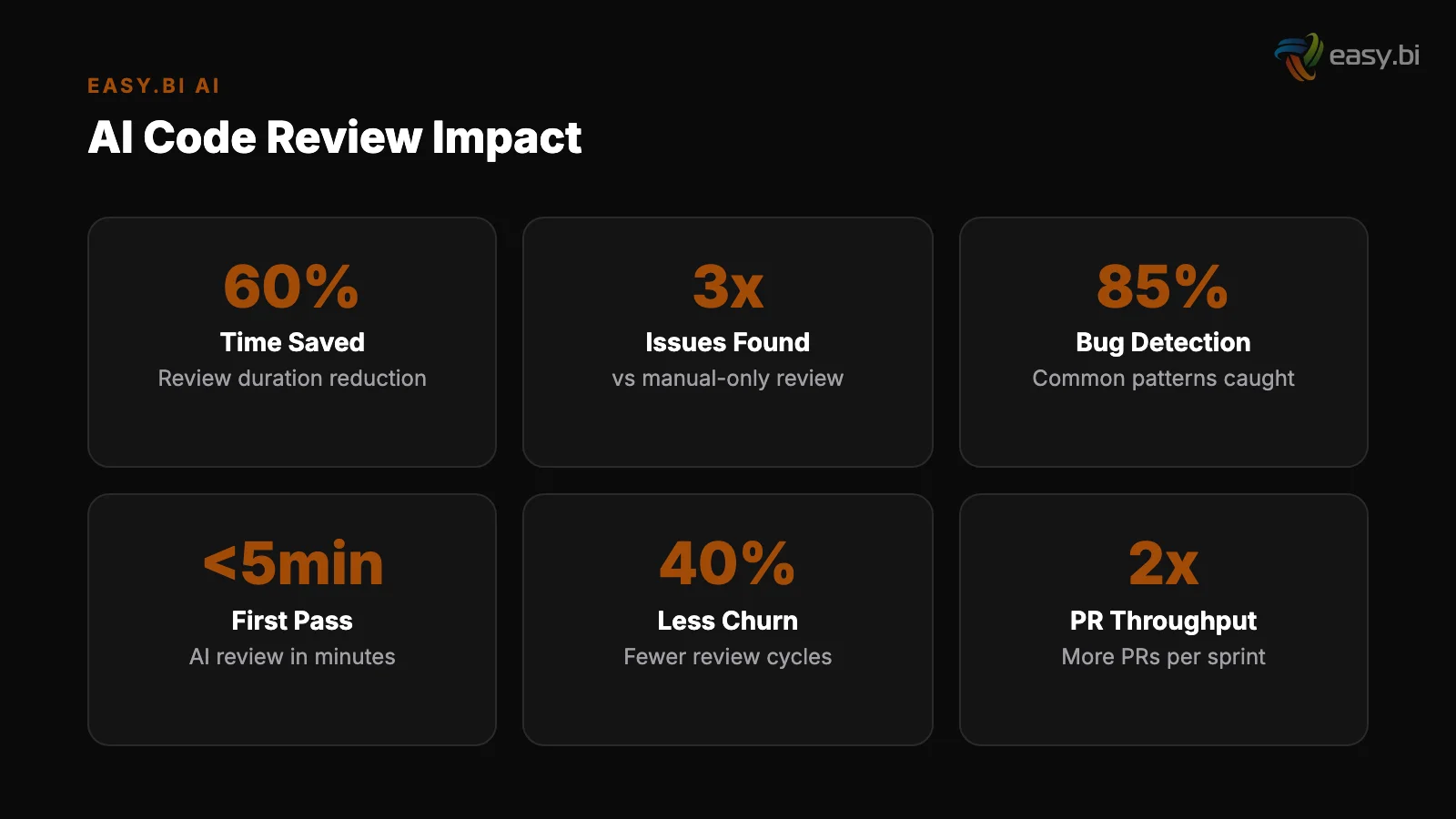

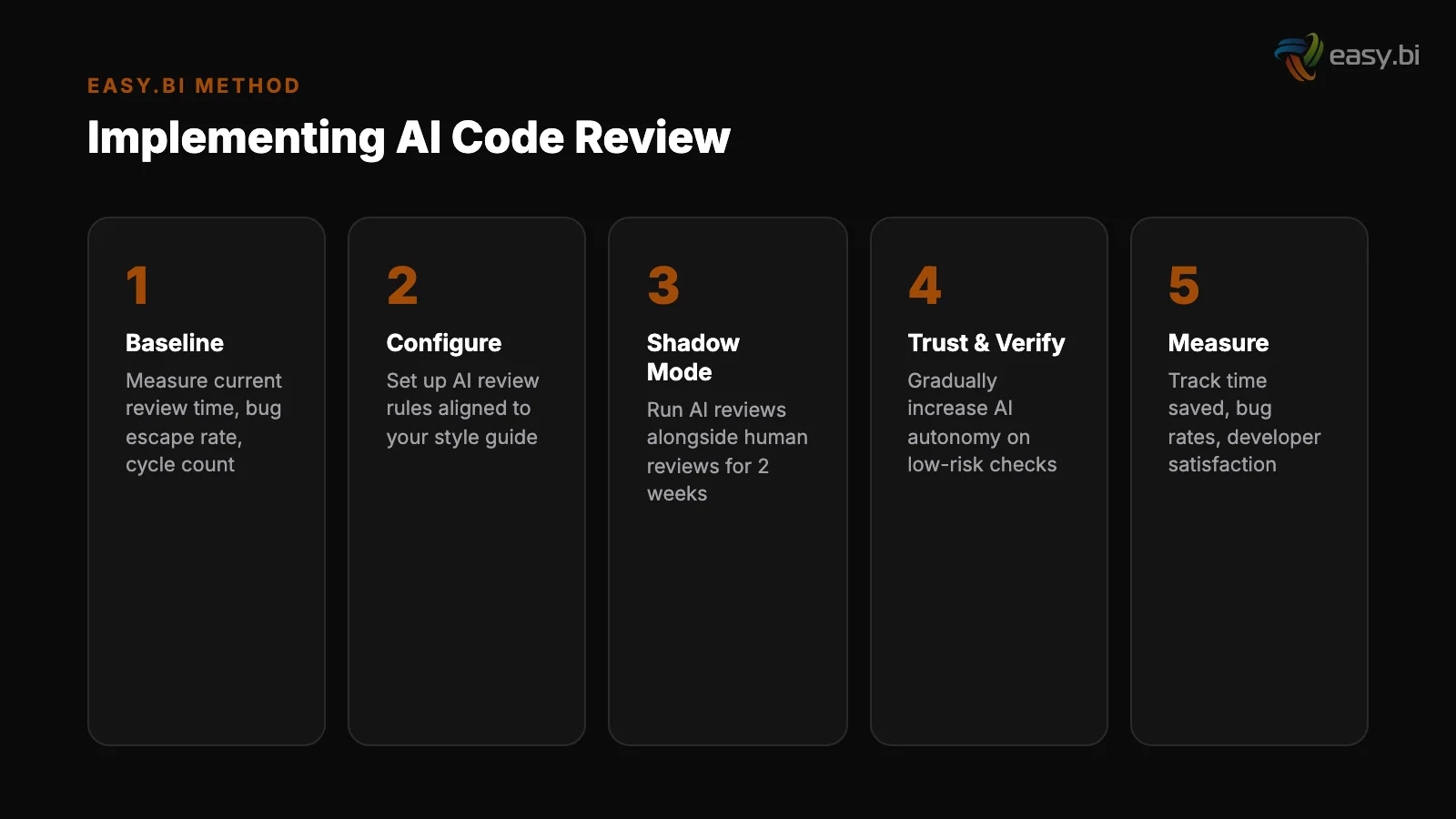

The integration path for AI code review is incremental. You do not need to change your team's workflow on day one. You layer AI review into the existing process and let the results speak for themselves.

Step 1: AI pre-review on every PR (Week 1). Configure your CI/CD pipeline to run an AI review step before a human reviewer is assigned. The AI review generates inline comments on the PR - same format as human comments.

The developer addresses AI-flagged issues before the human reviewer sees the code. This immediately reduces the back-and-forth cycle that extends review timelines.
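One way to make AI findings appear "in the same format as human comments" is to post them as a regular pull request review. The sketch below only builds the request body; it assumes GitHub's REST endpoint for creating a pull request review (`POST /repos/{owner}/{repo}/pulls/{pull_number}/reviews`, with `commit_id`, `body`, `event` and inline `comments`). Check the current GitHub documentation before relying on the field names. The `Finding` type is hypothetical, and authentication and the HTTP call itself are left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One issue reported by a (hypothetical) AI pre-review step."""
    path: str     # file path relative to the repository root
    line: int     # line number in the new version of the file
    message: str  # explanation shown to the developer
    rule: str     # identifier of the rule that fired


def build_review_payload(commit_sha: str, findings: list[Finding]) -> dict:
    """Turn AI findings into a request body for a GitHub pull request review."""
    comments = [
        {
            "path": f.path,
            "line": f.line,
            "side": "RIGHT",  # comment on the new (added/changed) version of the line
            "body": f"[AI pre-review: {f.rule}] {f.message}",
        }
        for f in findings
    ]
    summary = (
        f"AI pre-review found {len(findings)} issue(s). "
        "Please address them before a human reviewer is assigned."
        if findings
        else "AI pre-review found no issues."
    )
    return {
        "commit_id": commit_sha,
        "event": "COMMENT",  # never approve or block: that decision stays with humans
        "body": summary,
        "comments": comments,
    }
```

Using the `COMMENT` event rather than `REQUEST_CHANGES` keeps the AI step advisory, which matches the goal of cleaning up mechanical issues without taking merge decisions away from human reviewers.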


Step 2: Measure baseline and impact (Weeks 2-6). Track four metrics: PR turnaround time, number of review cycles per PR, defect escape rate (bugs found in staging or production that should have been caught in review), and human reviewer time per PR. Compare against your pre-AI baseline.

Teams running AI pre-review typically see a 30-50% reduction in turnaround time within the first 3 sprint cycles.
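The four Step 2 metrics can be tracked with very little code once per-PR data is exported from your Git host and issue tracker. This is a sketch under that assumption: the `PRRecord` fields are hypothetical, and how you attribute an escaped defect to a PR is a team decision the code does not make for you.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class PRRecord:
    turnaround_hours: float  # PR opened -> approved
    review_cycles: int       # rounds of review feedback before approval
    reviewer_minutes: float  # human reviewer time spent on the PR
    escaped_defects: int     # bugs found in staging/production traced back to this PR


def summarize(prs: list[PRRecord]) -> dict:
    """Average the four review metrics over a set of PRs."""
    return {
        "turnaround_hours": mean(p.turnaround_hours for p in prs),
        "review_cycles": mean(p.review_cycles for p in prs),
        "reviewer_minutes": mean(p.reviewer_minutes for p in prs),
        "escapes_per_pr": sum(p.escaped_defects for p in prs) / len(prs),
    }


def percent_change(baseline: dict, current: dict) -> dict:
    """Percent change per metric; None where the baseline is zero."""
    return {
        key: None if baseline[key] == 0 else (current[key] - baseline[key]) / baseline[key] * 100
        for key in baseline
    }
```

Compute the baseline from the sprints before AI pre-review was enabled and compare each subsequent sprint against it; a negative value means the metric went down.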

Step 3: Add architectural rule enforcement (Weeks 7-10). Define your project's architectural constraints as rules the AI review tool enforces. Typical candidates include dependency direction rules (service A must not import from service B), API contract validation, naming conventions for domain objects, and required test patterns for specific module types.

This converts tribal knowledge into automated enforcement.
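A dependency direction rule is one of the easiest constraints to automate. The sketch below uses Python's standard `ast` module to read a file's imports and report any that cross a forbidden boundary. The `FORBIDDEN` table, the `service_a`/`service_b` names and the function names are hypothetical, and relative imports are skipped for simplicity.

```python
import ast

# Hypothetical rule set: importing package -> packages it must not import from.
FORBIDDEN = {
    "service_a": ("service_b",),
}


def imported_modules(source: str) -> list[str]:
    """Return absolute module names imported by a Python source file."""
    names = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level > 0 means a relative import; skipped in this sketch
            names.append(node.module)
    return names


def _within(name: str, package: str) -> bool:
    """True if `name` is `package` itself or one of its submodules."""
    return name == package or name.startswith(package + ".")


def check_dependency_direction(module: str, source: str, rules=FORBIDDEN) -> list[str]:
    """List violations of the dependency direction rules for one module."""
    violations = []
    for owner, banned in rules.items():
        if not _within(module, owner):
            continue
        for name in imported_modules(source):
            if any(_within(name, b) for b in banned):
                violations.append(f"{module} must not import {name}")
    return violations
```

Run as a CI step over the files changed in a PR, a check like this turns an architectural convention that used to live in senior reviewers' heads into a comment the developer sees before human review.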


Step 4: Security and compliance scanning (Weeks 11-14). Integrate AI-powered security analysis into the review pipeline. For DACH enterprises operating under GDPR, this includes data handling pattern validation - ensuring personal data is not logged, persisted in unencrypted stores, or transmitted to unauthorized services.

AI-driven anomaly detection in CI/CD pipelines catches 35% more issues pre-production than traditional rule-based approaches [8].
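As a small illustration of "personal data is not logged", the sketch below flags logging calls whose arguments reference fields that look like personal data. It is a crude, name-based heuristic: the `PII_NAMES` list is a hypothetical example that each project would need to define for its own data model, and real GDPR validation needs data-flow analysis rather than name matching.

```python
import ast

PII_NAMES = {"email", "phone", "iban", "date_of_birth", "full_name"}  # hypothetical
LOG_METHODS = {"debug", "info", "warning", "error", "exception", "critical"}


def _names_in(node):
    """Yield every variable name and attribute name used inside an expression."""
    for sub in ast.walk(node):
        if isinstance(sub, ast.Name):
            yield sub.id
        elif isinstance(sub, ast.Attribute):
            yield sub.attr


def find_pii_logging(source: str) -> list[tuple[int, str]]:
    """Return (line, field) pairs where a logging call references a PII-like name."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr in LOG_METHODS
        ):
            # Inspect positional and keyword arguments, including f-string contents.
            args = list(node.args) + [kw.value for kw in node.keywords]
            hits = {name for arg in args for name in _names_in(arg)} & PII_NAMES
            findings.extend((node.lineno, name) for name in hits)
    return sorted(findings)
```

For example, `log.info("registered %s", user.email)` is reported, while `log.info("user id %s", user.id)` is not.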

At easy.bi, ebiCore integrates AI-assisted code review into every sprint across 100+ delivered projects. The framework runs pre-review against project-specific architectural rules, security baselines, and performance patterns. Human reviewers receive PRs that have already been cleaned of mechanical issues - they focus exclusively on the decisions that require human judgment.

The Economics of AI-Assisted Review at Scale

For a team of 10 engineers, code review consumes 30-40 hours per week, or 0.75-1.0 of one engineer's 40-hour week. At senior developer rates in the DACH market (EUR 72,000-85,000 annually), that is roughly EUR 54,000-85,000 per year spent on review alone. AI-assisted review does not eliminate this cost; it redirects it.

With AI handling the roughly 60% of review work that is pattern matching, human review time drops to 12-16 hours per week. The remaining 18-24 hours go back to architecture, feature development, and mentoring. That is the equivalent of recovering 0.45-0.6 FTEs on a 10-person team, without hiring anyone.
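The arithmetic behind these figures can be checked with a short sketch. It assumes a 40-hour week, that review hours are priced at the stated annual salaries, and that AI takes over 60% of review hours; plug in your own team's numbers. `review_economics` is an illustrative helper, not part of any tool.

```python
def review_economics(review_hours_per_week, annual_salary_eur, ai_share=0.6, fte_hours_per_week=40):
    """Rough annual cost of review and the capacity AI pre-review could free up."""
    fte_on_review = review_hours_per_week / fte_hours_per_week
    remaining_hours = review_hours_per_week * (1 - ai_share)  # human review time left
    recovered_hours = review_hours_per_week - remaining_hours
    return {
        "annual_cost_eur": fte_on_review * annual_salary_eur,
        "remaining_hours": remaining_hours,
        "recovered_hours": recovered_hours,
        "recovered_fte": recovered_hours / fte_hours_per_week,
    }
```

For 30 hours at EUR 72,000 this gives an annual cost of EUR 54,000, 12 remaining hours and 0.45 FTE recovered; for 40 hours at EUR 85,000 it gives EUR 85,000, 16 hours and 0.6 FTE.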

The quality return is equally significant. AI review catches issues that humans miss under time pressure, and it applies its checks consistently. The reviewer who catches N+1 queries at 9 AM but misses them at 4 PM is no longer a quality variable.

The AI pre-review runs the same checks on every PR, at any hour of the day.

Scale this across an organization with 50+ engineers, the size of easy.bi's engineering team, and the numbers grow accordingly. At the same ratios, that is roughly 90-120 hours per week recovered from mechanical review work, plus a measurable reduction in post-deployment defects.

And senior engineers who spend their time on the work that retains them: solving hard problems, not policing coding standards.

Common Pitfalls and How to Avoid Them

Teams that fail with AI code review typically make one of three mistakes.

Treating AI review as a replacement for human review. AI catches patterns. Humans catch intent mismatches, architectural violations, and business logic errors. Removing human reviewers because "the AI handles it" leads to a codebase that is syntactically clean and architecturally incoherent. Always maintain human review for logic and design.

Ignoring AI review feedback. If developers routinely dismiss AI-generated comments because they are noisy or irrelevant, the tool becomes invisible. Calibrate your AI review rules to your project's actual standards. Start with high-confidence rules (security vulnerabilities, known bug patterns) and add project-specific rules incrementally.

A tool that generates 5 relevant comments is more valuable than one that generates 50 comments, 45 of which get dismissed.

Not adapting rules over time. Your project's patterns evolve. New frameworks, new services, new architectural decisions - these all change what "correct" looks like. Schedule quarterly reviews of your AI review rules. Remove rules that generate false positives. Add rules for new patterns the team has adopted.

This maintenance overhead is minimal compared to the cost of letting review rules drift from reality.
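The quarterly rule review is easier when it starts from data. Assuming your review tool can export whether each AI comment was accepted or dismissed (the event format here is hypothetical), a sketch like this lists the rules whose comments are mostly dismissed. The thresholds are illustrative defaults, not recommendations from any study.

```python
from collections import Counter


def rules_to_review(events, max_dismissal_rate=0.5, min_samples=20):
    """Return {rule_id: dismissal_rate} for noisy rules, noisiest first.

    events: iterable of (rule_id, outcome) pairs, outcome "accepted" or "dismissed".
    Rules with fewer than min_samples comments are ignored as too new to judge.
    """
    totals, dismissed = Counter(), Counter()
    for rule_id, outcome in events:
        totals[rule_id] += 1
        if outcome == "dismissed":
            dismissed[rule_id] += 1
    flagged = {}
    for rule_id, count in totals.items():
        if count >= min_samples:
            rate = dismissed[rule_id] / count
            if rate > max_dismissal_rate:
                flagged[rule_id] = rate
    return dict(sorted(flagged.items(), key=lambda kv: kv[1], reverse=True))
```

A rule behind the "50 comments, 45 dismissed" scenario has a dismissal rate of 0.9 and would top this list, making it a candidate to tighten or remove.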

Connecting AI Review to the Full Development Lifecycle

AI-assisted code review delivers the most value when it is part of a broader AI-assisted development pipeline, not an isolated tool.

When AI code generation, AI testing, and AI review operate as integrated capabilities - as they do in an agentic AI framework - the feedback loops between them create compounding quality improvements.

Code generated by an AI tool gets reviewed by an AI review tool that knows the project's patterns. Issues flagged in review feed back into the generation model's context, reducing the same type of issue in future code.
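A minimal sketch of that feedback loop, assuming review findings are stored as `(rule_id, guidance)` pairs: the most frequent recurring issues are turned into a short guidance block that could be prepended to the code generator's context. This is a hypothetical illustration of the idea, not a description of how ebiCore implements it.

```python
from collections import Counter


def build_generation_context(findings, top_n=3):
    """Summarize the most frequent past review findings as generator guidance.

    findings: list of (rule_id, guidance) pairs collected from earlier reviews.
    """
    counts = Counter(rule for rule, _ in findings)
    guidance = {}
    for rule, text in findings:
        guidance.setdefault(rule, text)  # keep the first guidance text seen per rule
    lines = ["Project review history - avoid these recurring issues:"]
    for rule, n in counts.most_common(top_n):
        lines.append(f"- {guidance[rule]} (flagged {n}x, rule {rule})")
    return "\n".join(lines)
```

Limiting the block to the top few rules keeps the context short; the right cut-off depends on the generator's context budget.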

Tests generated by AI catch integration issues that neither the generation tool nor the review tool anticipated. Each component makes the others more effective.

This is how ebiCore operates at easy.bi. Code scaffolding, review triage, test generation, and deployment configuration are not separate tools bolted together. They share project context, learn from each other's outputs, and operate as a single agentic framework that accelerates the full delivery pipeline.

For the broader perspective on how AI transforms enterprise development, see our pillar guide: AI in Enterprise Software: What's Real, What's Hype, and What to Build First.

For a practical look at how AI changes the testing side of the equation, read Automated QA: How AI Changes the Economics of Testing.

References

- [1] SmartBear / Cisco study, 2023 - Code review catches 60-65% of defects before the

- [2] Google Research, 2023 - AI-assisted code review reduces review time by 30-50% an

- [3] IBM Systems Sciences Institute, 2023 - The cost of fixing a bug in production is

- [4] Snyk, 2023 - AI-assisted vulnerability detection identifies 95% of known vulnera

- [5] Microsoft Research, 2023 - Copilot-assisted developers write 46% more code and c

- [6] Stanford University, 2023 - AI-generated code requires 40% more review time due

- [7] Stanford University, 2023 - Code generation tools produce secure code only 67% o

- [8] CircleCI, 2023 - AI-driven anomaly detection in CI/CD pipelines catches 35% more
