AI Data Readiness: The Assessment Your Team Skips (And Pays For Later)

Table of Contents+

- Why Is AI-Ready Data Different from BI-Ready Data?

- What Does a Practical Data Readiness Assessment Cover?

- What Is the Minimum Viable Data for an AI Pilot?

- How Do You Score Data Readiness and Prioritize Remediation?

- What Are the Most Common Data Readiness Gaps in Mid-Market Companies?

- Frequently Asked Questions

- References

TL;DR

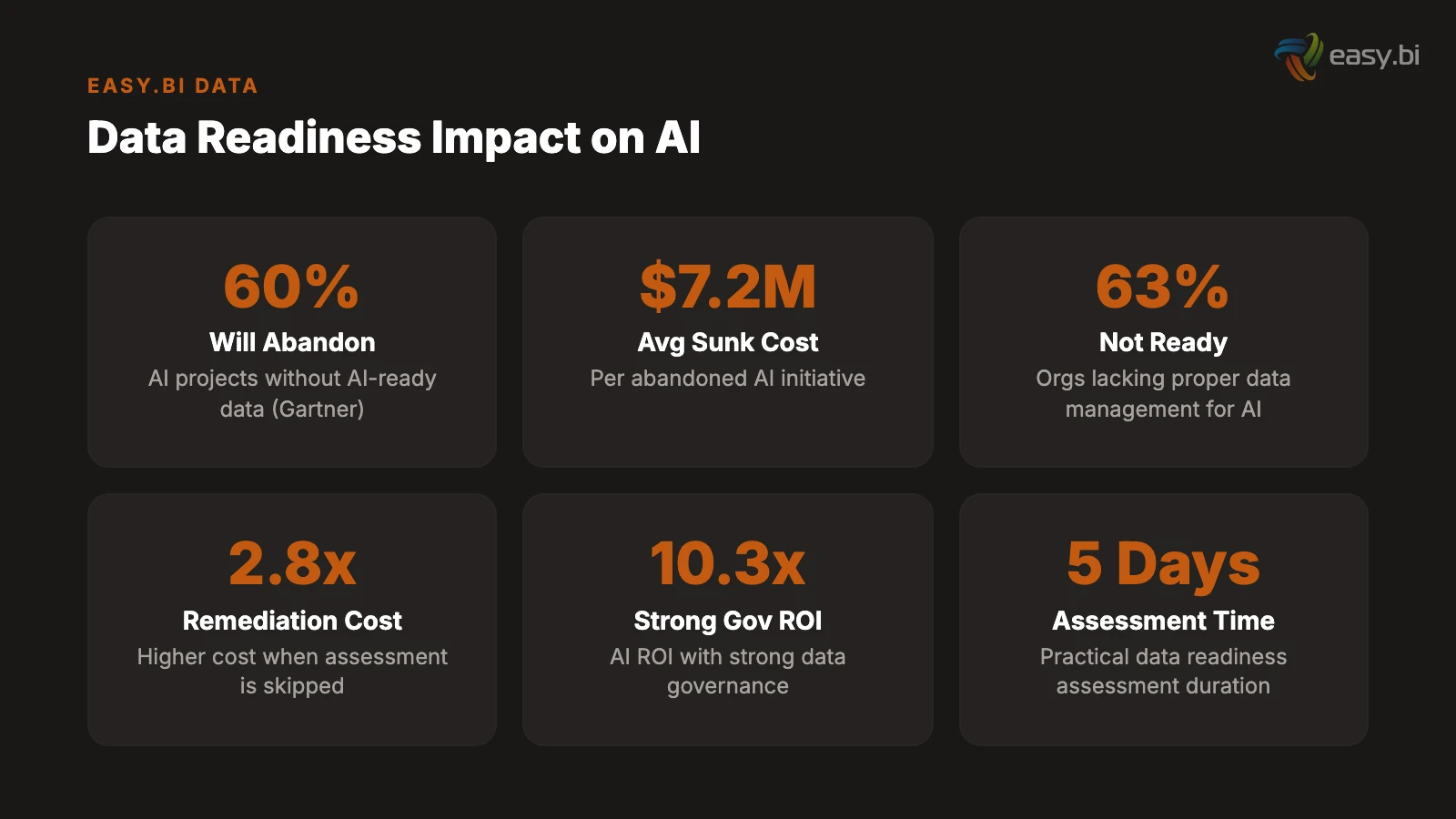

Gartner predicts 60% of AI projects lacking AI-ready data will be abandoned through 2026, at an average cost of USD 7.2 million per failed initiative. This post provides a 5-day data readiness assessment framework covering data quality, infrastructure, governance, and organizational readiness - plus the minimum viable data requirements that separate successful AI pilots from expensive failures.

Key Takeaways

- •Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data - and the average sunk cost per abandoned AI initiative is USD 7.2 million, making data readiness assessment the highest-leverage activity before any AI investment.

- •AI-ready data is fundamentally different from BI-ready data. Traditional data warehouses optimize for structured queries on clean, aggregated datasets. AI models need raw, representative data with every pattern, outlier, and edge case preserved - the opposite of what most data teams have been trained to produce.

- •The minimum viable data for an AI pilot is far less than vendors claim. Most use cases need 3-6 months of historical data, 500-5,000 labeled examples, and one clean integration point. Companies that wait for perfect data never ship - companies that start with adequate data and iterate succeed.

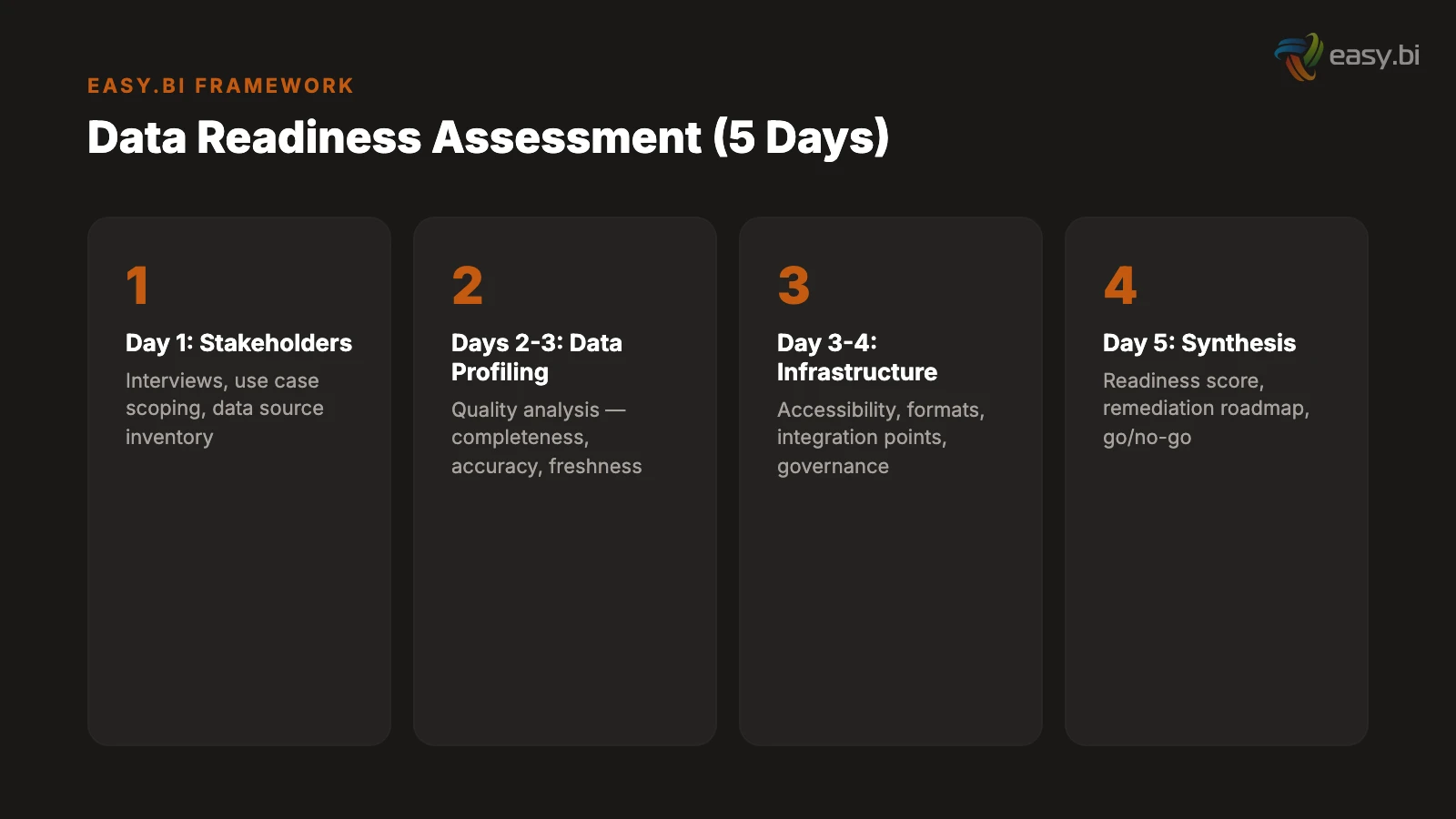

- •A practical data readiness assessment takes 5 days and covers 4 dimensions: data quality (completeness, accuracy, freshness), data infrastructure (accessibility, format, integration), data governance (ownership, privacy, consent), and organizational readiness (skills, processes, culture).

- •Organizations that skip data readiness assessments pay 2.8x more in remediation costs later, and those with strong data governance achieve 10.3x ROI from AI initiatives versus 3.7x for those with poor data foundations.

A practical AI data readiness assessment framework for mid-market companies. Covers data quality checklists, infrastructure requirements, governance gaps, and the minimum viable data you actually need versus what vendors tell you.

The pattern repeats across every mid-market AI initiative I have been involved with. The executive team approves an AI project. The engineering team selects a use case. A vendor is engaged. Two months and EUR 80,000 later, the project stalls - not because the AI does not work, but because the data does not.

The model needs customer transaction history, but 30% of records are missing key fields. The training data comes from three systems with incompatible formats. The "clean" dataset turns out to contain 18 months of test transactions nobody removed. The GDPR team discovers that customer consent does not cover AI training.

Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data.[1] That is not a warning about future risk. It is a description of what is happening right now. 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024.[2] The average sunk cost per abandoned initiative: USD 7.2 million.[2]

The fix is not more data or better data infrastructure. It is a structured assessment before you write the first line of AI code.

Why Is AI-Ready Data Different from BI-Ready Data?

Most mid-market companies believe they have good data because their dashboards work. Monthly revenue reports render correctly. Inventory counts reconcile. Customer segments populate in the CRM. The data warehouse passes its nightly ETL jobs without errors.

None of this means the data is ready for AI.

According to a Q3 2024 Gartner survey of 248 data management leaders, 63% of organizations either do not have or are unsure if they have the right data management practices for AI.[1] This gap exists because AI-ready data has fundamentally different requirements from BI-ready data.

| Dimension | BI-Ready Data | AI-Ready Data |

|---|---|---|

| Granularity | Aggregated (monthly totals, averages) | Raw, event-level (every transaction, every click) |

| Completeness | Missing values handled by defaults or exclusions | Missing patterns must be understood and labeled |

| Outliers | Removed or smoothed for clean reporting | Preserved - outliers are the patterns AI needs to learn |

| History | Typically 12-24 months of summary data | 3-6 months minimum of granular event data |

| Format | Structured, normalized, schema-consistent | Can include unstructured (text, images, logs) |

| Freshness | Nightly or weekly refresh is acceptable | Real-time or near-real-time for operational AI |

| Labeling | Not required - humans interpret reports | Labeled examples required for supervised learning |

The most common failure: companies feed their BI data - aggregated, cleaned, outlier-free - into an AI model and wonder why it does not work. The model needs the messy, granular, complete data that the BI team spent years cleaning up. As Gartner states, "the data must be representative of the use case, of every pattern, errors, outliers and unexpected emergence that is needed to train or run the AI model."[1]

This is not a technology problem. It is a mindset shift. The data team has been rewarded for making data clean and structured. AI needs data that is complete and representative. These are often opposing goals.

See how ebiCore accelerates development.

What Does a Practical Data Readiness Assessment Cover?

A data readiness assessment is a 5-day structured evaluation that answers one question: can this organization's data support the planned AI use case, and if not, what needs to change?

The assessment covers 4 dimensions, each scored on a 1-5 maturity scale:

Dimension 1: Data Quality (Day 1-2)

Data quality is the dimension that 64% of organizations cite as their top AI challenge.[3] The assessment examines 5 quality metrics for each data source relevant to the planned AI use case:

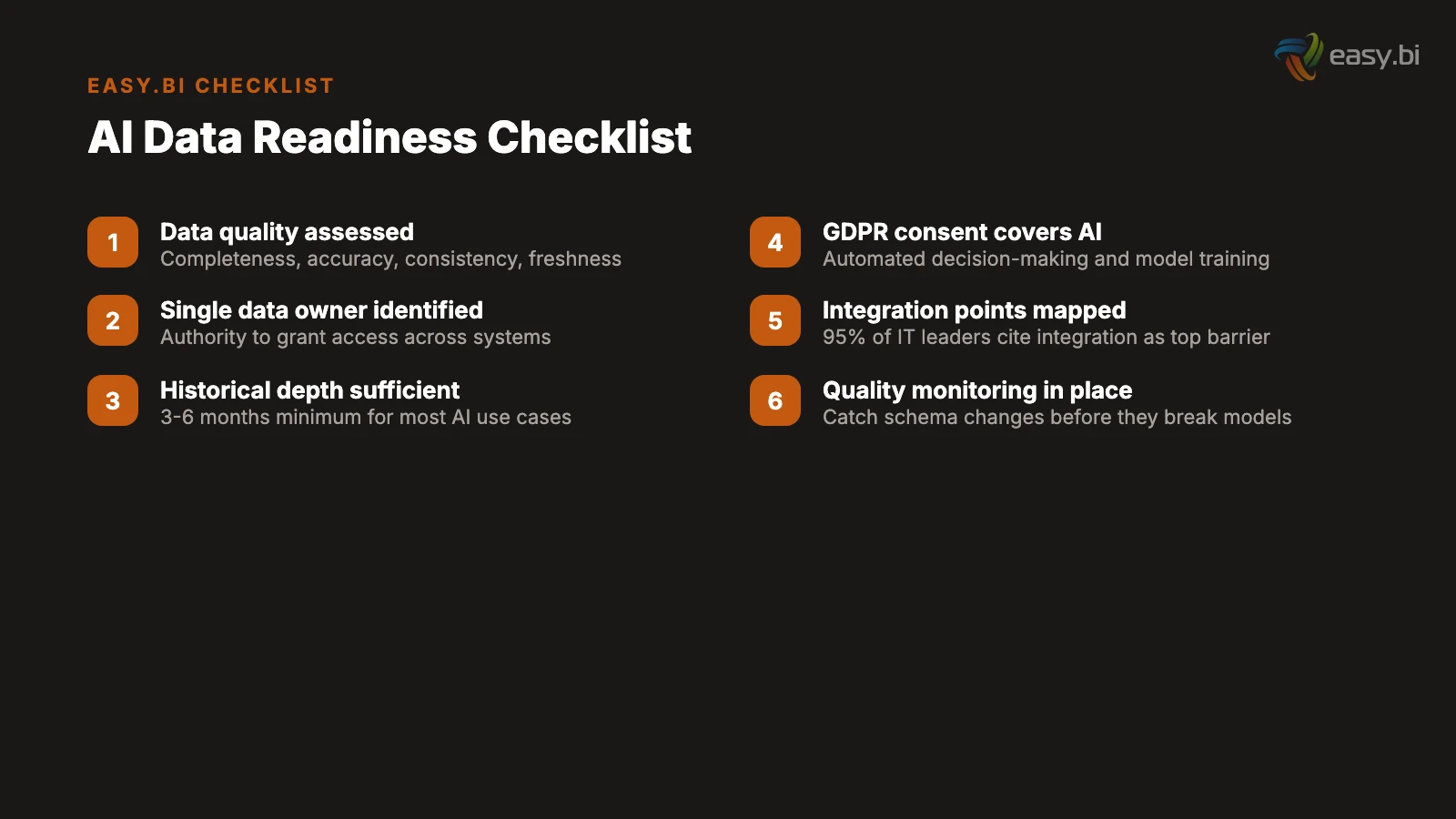

- Completeness: What percentage of records have all required fields populated? For AI training data, anything below 85% completeness requires remediation or imputation strategies.

- Accuracy: Do the values reflect reality? Cross-reference samples against source systems. A CRM that shows 10,000 active customers while the billing system shows 7,500 has an accuracy problem that will poison any AI model trained on it.

- Consistency: Do the same entities have the same representation across systems? Customer "Muller GmbH" in the CRM, "Mueller GmbH" in the ERP, and "Müller GmbH" in the billing system is one customer with three identities.

- Freshness: How current is the data? For operational AI (real-time recommendations, dynamic pricing), data older than 24 hours may be useless. For analytical AI (trend prediction, segmentation), monthly updates may be sufficient.

- Volume: Is there enough data to train a model? Most supervised learning tasks need 500-5,000 labeled examples as a minimum. Below that threshold, consider few-shot approaches or pre-trained models with fine-tuning.

Over a quarter of organizations estimate they lose more than USD 5 million annually due to poor data quality, with 7% reporting losses of USD 25 million or more.[4] These costs compound when poor data is used to train AI models - the model does not just fail, it fails confidently, making decisions based on flawed patterns.

Dimension 2: Data Infrastructure (Day 2-3)

Infrastructure readiness determines how quickly you can access, transform, and serve data to AI models. The assessment evaluates:

- Accessibility: Can the AI team query the data they need without filing IT tickets? Self-service data access is a prerequisite for iterative AI development. If every data request takes 2 weeks to fulfill, the AI project timeline doubles.

- Integration: How many systems hold pieces of the data puzzle? Each integration point adds complexity, latency, and potential failure modes. 95% of IT leaders cite integration issues as a top barrier to scaling AI.[3]

- Format and structure: Is the data in formats that AI tools can consume? CSV exports, PDF reports, and proprietary database formats require transformation pipelines. Modern AI platforms work best with parquet files, structured JSON, or direct database connections.

- Compute and storage: Does the infrastructure support model training workloads? Cloud-based training (AWS SageMaker, Google Vertex AI, Azure ML) eliminates the need for on-premise GPU infrastructure, but requires network bandwidth and data transfer policies that support cloud workflows.

Traditional data management operations are too slow, too structured, and too rigid for AI teams. Organizations that fail to realize the vast differences between AI-ready data requirements and traditional data management will endanger the success of their AI efforts.

Dimension 3: Data Governance (Day 3-4)

Governance readiness is the dimension most teams skip - and the one that kills projects at the compliance review stage. The assessment examines:

- Data ownership: Who owns each dataset? Who approves its use for AI training? In many mid-market companies, data ownership is implicit ("marketing owns the CRM data") but not documented. AI projects need explicit ownership and approval processes.

- Privacy and consent: Does the consent collected from customers cover AI training and automated decision-making? GDPR requires explicit consent for automated profiling, and many consent forms collected before 2024 do not include this. Remediation ranges from updating consent forms to re-collecting consent from existing customers.

- Data lineage: Can you trace each data point from source to AI model? Gartner predicts that by 2026, 60% of large enterprises will have deployed data lineage tools, up from 20% in 2023.[5] For mid-market companies, lineage does not require enterprise tools - a documented data flow diagram covering the AI use case is sufficient.

- Retention and deletion: Can you remove a customer's data from the AI training set if they exercise their right to erasure? If the model was trained on their data, can you retrain without it? These are GDPR requirements that many AI implementations overlook.

Dimension 4: Organizational Readiness (Day 4-5)

The final dimension assesses whether the organization has the skills, processes, and culture to support AI development. It covers:

- Technical skills: Does the team have experience with data pipelines, API integrations, and model deployment? 55% of organizations report a talent gap as the strongest barrier to AI adoption.[6] Mid-market companies do not need data scientists - they need software engineers who understand AI integration patterns.

- Data literacy: Do business stakeholders understand what data they have, where it lives, and what it represents? Low data literacy leads to misaligned expectations about what AI can and cannot do with available data.

- Process maturity: Are there established processes for data change management, quality monitoring, and incident response? AI models degrade when upstream data changes without notice. A single schema change in a source system can break a production AI pipeline.

- Executive sponsorship: Is there a named executive who owns the AI initiative and can unblock data access, budget, and cross-department coordination? AI projects that lack executive sponsorship have a 3x higher failure rate.

What Is the Minimum Viable Data for an AI Pilot?

Vendors will tell you that you need a data lake, a data governance platform, a feature store, and 3 years of clean historical data before you can start an AI project. This is vendor-driven scope expansion, and it is the single biggest reason mid-market companies delay AI adoption by 12-18 months.

The actual minimum viable data for the 5 most common mid-market AI use cases:

Document processing (IDP): 200-500 sample documents of the type you want to process. No historical data needed. No data warehouse needed. Just representative examples of the documents your team handles daily.

Customer service automation: 3-6 months of support ticket history and an up-to-date knowledge base. The ticket history provides training examples for intent classification. The knowledge base provides the content for retrieval-augmented generation. Both exist in your helpdesk system today.

Demand forecasting: 12-24 months of transaction-level sales data by SKU, plus external signals (seasonality calendars, promotion schedules). Most ERP systems store this data by default. The challenge is not data availability but data extraction and formatting.

Predictive maintenance: 6-8 weeks of sensor data from the equipment you want to monitor, plus maintenance logs covering the same period. The model learns normal operating patterns from the sensor data and correlates anomalies with recorded maintenance events.

LLM-powered internal tools: A structured knowledge base (product documentation, process guides, policy documents) in a searchable format. No historical data needed. The RAG pipeline retrieves relevant documents in real time. The quality of the AI output is directly proportional to the quality of the knowledge base.

In every case, the minimum viable data is less than what vendors recommend and more than what most companies think they have. The assessment bridges this gap by identifying exactly what exists, what is missing, and how long the remediation will take.

How Do You Score Data Readiness and Prioritize Remediation?

The output of a data readiness assessment is a maturity score across all 4 dimensions, with specific remediation actions prioritized by impact and effort.

The scoring framework uses a 1-5 scale for each dimension:

- Level 1 (Not Ready): Fundamental gaps. Data does not exist, is inaccessible, or has critical quality issues. Remediation: 3-6 months before AI is feasible.

- Level 2 (Partially Ready): Data exists but has significant quality or accessibility issues. Remediation: 4-8 weeks of focused data engineering.

- Level 3 (Adequate): Data is usable for a pilot with known limitations. AI development can begin with parallel data quality improvement.

- Level 4 (Ready): Data meets quality, accessibility, and governance requirements for the planned use case. AI development can proceed without data remediation.

- Level 5 (Optimized): Data infrastructure supports continuous AI development with automated quality monitoring, real-time access, and full governance. Rare among mid-market companies.

Most mid-market companies score Level 2-3 across dimensions. This is not a failure - it is a starting point. The critical insight: you do not need Level 5 to start an AI project. You need Level 3 for a pilot and Level 4 for production. Companies that wait for Level 5 before starting never ship.

Organizations that skip data readiness assessments pay 2.8x more in remediation costs later.[2] Those with strong data governance achieve 10.3x ROI from AI initiatives versus 3.7x for those with poor data connectivity.[2] The 5-day assessment is the highest-leverage activity in any AI roadmap.

Our AI framework cuts development time in half

ebiCore is our proprietary agentic AI framework that accelerates innovation and reduces cost.

Start with a Strategy CallWhat Are the Most Common Data Readiness Gaps in Mid-Market Companies?

After conducting data readiness assessments across multiple mid-market enterprises, I see the same 5 gaps repeatedly:

Gap 1: Siloed data with no integration layer. Customer data lives in the CRM, transaction data in the ERP, support data in the helpdesk, and product data in the PIM. Each system has its own customer identifier, its own data format, and its own update cadence. Building an AI model that spans these systems requires a data integration layer that most mid-market companies do not have.

Gap 2: Historical data was cleaned for BI, not AI. The data warehouse contains aggregated, de-duplicated, outlier-free data that produces clean reports but terrible AI models. The raw, granular data that AI needs was discarded or archived years ago. Recovery requires re-extracting from source systems.

Gap 3: No labeled data for supervised learning. The company has millions of transactions but zero labeled examples. Nobody tagged which support tickets were successfully resolved, which maintenance events were preventable, or which customer interactions led to churn. Creating labeled datasets is the most labor-intensive remediation step, typically requiring 2-4 weeks of manual labeling by domain experts.

Gap 4: GDPR consent does not cover AI use. Customer consent was collected under terms that cover "service delivery" and "marketing communications" but not "automated decision-making" or "model training." Using this data for AI training creates compliance risk. Remediation requires updating consent language and frequently re-collecting consent.

Gap 5: No data quality monitoring. Data quality is checked when reports break, not proactively. Schema changes in source systems propagate silently through pipelines. A field that was 99% populated last month drops to 60% after a system update, and nobody notices until the AI model's accuracy degrades. Automated data quality monitoring catches these issues within hours instead of weeks.

77% of organizations rate their data quality as average or worse.[3] This is not a condemnation - it is the reality that data readiness assessments are designed to work with. You do not need perfect data. You need assessed data with a clear remediation plan.

For the engineering perspective on building AI systems that work with real-world data quality, read our guide on AI in enterprise software. For practical patterns on taking AI from prototype to production (including data pipeline architecture), see from GPT wrapper to production AI. And for the governance framework that addresses data privacy in AI deployments, explore our AI governance guide for mid-market.

If you are planning an AI initiative and want to know if your data is ready, explore our AI Impact Assessment. We run a structured data readiness evaluation as part of every engagement - so you invest in AI with full visibility into what your data can and cannot support.

Frequently Asked Questions

How long does a data readiness assessment take for a mid-market company?

A focused data readiness assessment takes 5 working days: 1 day for stakeholder interviews and use case scoping, 2 days for data profiling and quality analysis, 1 day for infrastructure and governance review, and 1 day for findings synthesis and roadmap creation. This produces an actionable readiness score across 4 dimensions and a prioritized remediation plan.

What is the minimum amount of data needed for an AI pilot?

Most AI pilots need 3-6 months of historical data and 500-5,000 labeled examples for supervised learning use cases. For LLM-based applications using retrieval-augmented generation, you need a structured knowledge base with current, accurate content rather than historical training data. The threshold is consistently lower than what enterprise vendors recommend.

What is the most common data quality issue that kills AI projects?

Inconsistent data formats and entity resolution across systems. When the same customer appears as 3 different records in 3 different systems with different naming conventions and identifiers, any AI model trained on this data inherits the inconsistency. Data normalization and entity resolution are the first remediation steps in nearly every assessment.

References

- [1] Gartner (2025). "Lack of AI-Ready Data Puts AI Projects at Risk. gartner.com

- [2] Pertama Partners (2026). "AI Project Failure Rate 2026. pertamapartners.com

- [3] CIT Solutions (2026). "Why Data Quality and Integration Are the Biggest Barriers citsolutions.net

- [4] IBM (2025). "The True Cost of Poor Data Quality. ibm.com

- [5] Integrate.io (2026). "Data Transformation Challenge Statistics. integrate.io

- [6] McKinsey (2025). "The state of AI in 2025. mckinsey.com

- [7] Info-Tech Research Group (2026). "Data Priorities 2026. morningstar.com

Explore Other Topics

Ready to accelerate with AI?

30-minute call with an engineering lead. No sales pitch - just honest answers about your project.

98% engineer retention · 14-day delivery sprints · No lock-in contracts