From GPT Wrapper to Production AI: The Engineering Gap Nobody Talks About

Table of Contents

- What Are the 5 Engineering Layers Between a Wrapper and Production AI?

- How Do You Solve the Latency Problem in Production LLM Systems?

- Why Does Error Handling in LLM Systems Require a Different Approach?

- How Do You Manage Hallucinations in Production Systems?

- What Does LLM Cost Optimization Look Like at Production Scale?

- Why Is Observability the Layer That Teams Skip and Regret?

- How Do You Handle Security in Production AI Systems?

- What Is the Path from Wrapper to Production?

- Frequently Asked Questions

- References

TL;DR

Five engineering layers separate a GPT wrapper from production AI: latency optimization (prompt caching cuts costs 60-90%), error handling (5% of LLM calls fail), hallucination management (no model exceeds 70% factuality), cost control (70-85% savings through routing and caching), and observability. This post maps each layer with implementation patterns for mid-market teams.

Key Takeaways

- A ChatGPT demo takes a weekend to build. A production AI system that handles 10,000 daily requests with 99.9% uptime, sub-2-second latency, and auditable outputs takes 3-6 months of engineering - the gap between these two is where 80% of enterprise AI projects die.

- Five engineering layers separate a GPT wrapper from production AI: latency optimization (prompt caching cuts costs by 60-90%), error handling (5% of all LLM API calls return errors), hallucination management (no model breaks 70% on factuality benchmarks), cost control (output tokens cost 3-8x more than input tokens), and observability (you cannot fix what you cannot measure).

- LLM cost optimization through model routing, context compaction, and prompt caching reduces API spend by 70-85% without changing output quality - the difference between a prototype that costs EUR 50,000/month in API fees and a production system that costs EUR 7,500.

- Over 90% of production AI teams now run 5+ LLMs simultaneously, making gateway infrastructure and model routing non-negotiable for any system beyond a single-use-case prototype.

- Security in production AI is not an add-on - it is architecture. Prompt injection, data exfiltration through model outputs, and PII leakage in API calls are production risks that do not exist in demos.

Every week, someone shows me a demo. "Look, we built an AI assistant in a weekend. It answers questions about our product docs. It even handles follow-up questions." The demo works. The stakeholders are impressed. The budget is approved.

Then reality arrives.

The assistant hallucinates a refund policy that does not exist. The API bill for month one is EUR 12,000 - four times the estimate. Response times spike to 8 seconds during peak hours. A customer pastes their credit card number into the chat, and it gets logged in plain text to a third-party API.

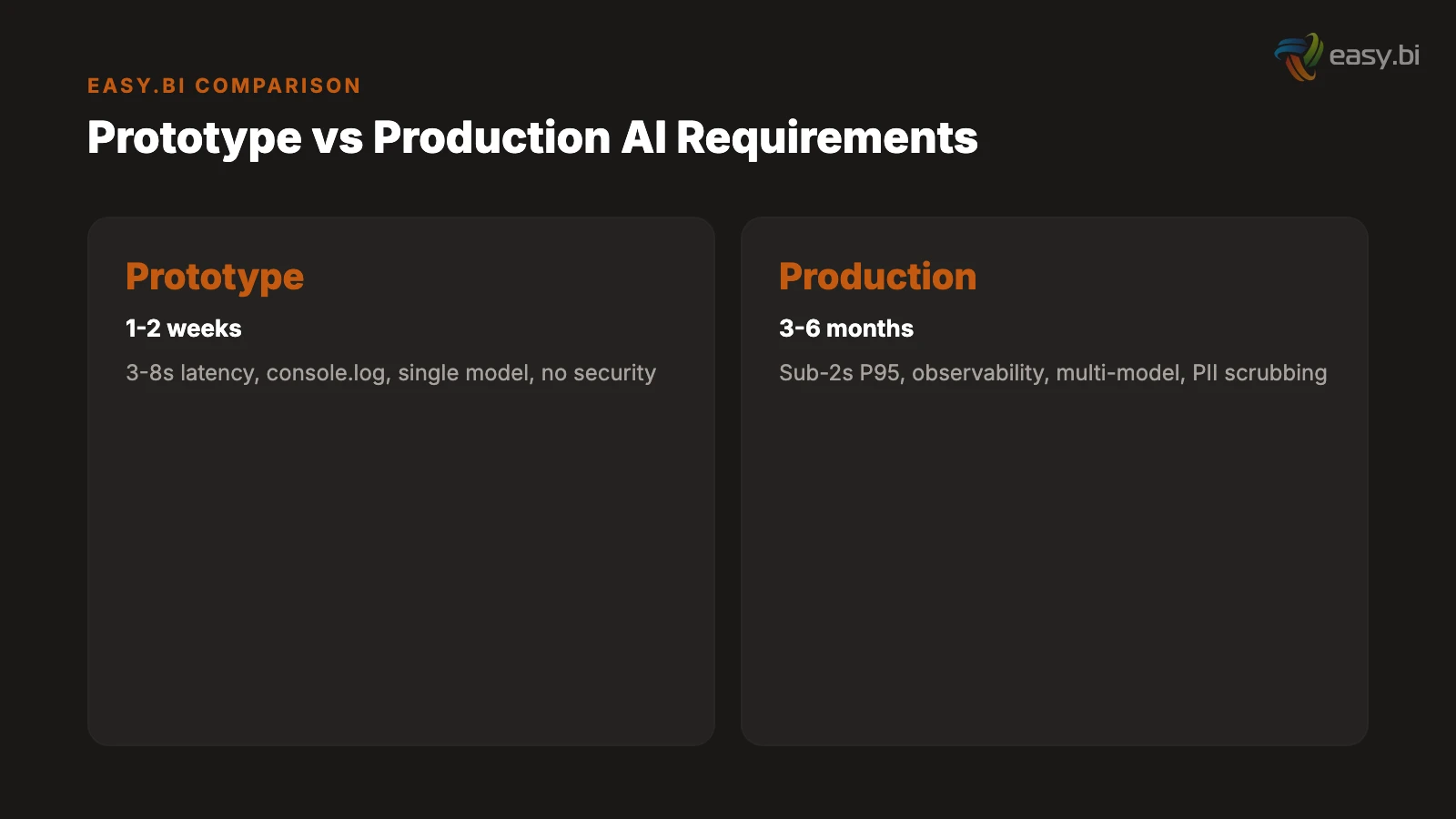

This is the engineering gap nobody talks about. A ChatGPT demo takes a weekend. A production AI system that handles 10,000 daily requests with 99.9% uptime, sub-2-second latency, and auditable outputs takes 3-6 months of focused engineering. The prototype is 5-10% of the total effort. The other 90-95% is the boring, critical work that separates a demo from a system.

80% of AI projects fail to reach meaningful production.[1] Most of them had working demos. The demo was never the problem.

What Are the 5 Engineering Layers Between a Wrapper and Production AI?

After building production AI systems across multiple enterprise deployments, I have identified 5 engineering layers that every GPT wrapper must cross to become a production system. Skip any one of them, and the system will fail - not in the demo, but in month 3 when real users hit real edge cases at real scale.

| Layer | Prototype State | Production Requirement | Engineering Effort |
|---|---|---|---|
| Latency | 3-8 second responses, no caching | Sub-2 seconds P95, prompt caching, streaming | 2-4 weeks |
| Error Handling | Try/catch around API call | Retry logic, fallback models, circuit breakers, graceful degradation | 2-3 weeks |
| Hallucination Management | "It seems mostly accurate" | RAG pipeline, output validation, confidence scoring, citation tracking | 4-8 weeks |
| Cost Optimization | Single model, full context every call | Model routing, context compaction, caching, batching | 2-4 weeks |
| Observability | Console.log | Token tracking, latency monitoring, quality scoring, cost dashboards | 2-3 weeks |

Total engineering effort: 12-22 weeks. That is 3-5 months of a senior engineer's time, or roughly 6-11 weeks with a dedicated 2-person team if the work splits cleanly. This is on top of the prototype work, not a replacement for it.

How Do You Solve the Latency Problem in Production LLM Systems?

In a demo, a 5-second response time is impressive. In production, it is a user experience failure. Users expect sub-2-second responses for interactive applications. For real-time applications like customer support or search, the target drops to under 1 second.

The latency problem in LLM applications has three components: time-to-first-token (TTFT), token generation speed, and total response time. GPT-5 improved TTFT by 15-20% compared to its predecessors,[2] but model improvements alone do not solve the problem. You need engineering at every layer.

Prompt caching is the single highest-impact optimization. Anthropic's prompt caching delivers up to 90% cost reduction on cached tokens - cache reads cost USD 0.30 per million tokens versus USD 3.00 per million for fresh computation.[3] For applications with system prompts, knowledge base context, or repeated conversation patterns, caching cuts both latency and cost dramatically.
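To make the caching arithmetic concrete, here is a minimal sketch that uses only the two prices quoted above. The function names are illustrative, and the model deliberately ignores any cache-write surcharge or cache expiry, so treat the result as an upper bound on input-side savings rather than a billing formula.

```python
# Prices quoted in the paragraph above (USD per million input tokens).
FRESH_PRICE_PER_M = 3.00
CACHED_READ_PRICE_PER_M = 0.30


def input_cost_usd(total_tokens: int, cached_fraction: float) -> float:
    """Blended input cost when a fraction of prompt tokens is read from cache."""
    if not 0.0 <= cached_fraction <= 1.0:
        raise ValueError("cached_fraction must be between 0 and 1")
    cached = total_tokens * cached_fraction
    fresh = total_tokens - cached
    return (cached * CACHED_READ_PRICE_PER_M + fresh * FRESH_PRICE_PER_M) / 1_000_000


def savings_fraction(cached_fraction: float) -> float:
    """Input-cost savings relative to sending every token uncached."""
    baseline = input_cost_usd(1_000_000, 0.0)
    return 1.0 - input_cost_usd(1_000_000, cached_fraction) / baseline
```

Under these assumptions, a prompt where 80% of the tokens are a stable system prompt or knowledge-base prefix saves about 72% on input cost; the 90% figure is only reached when every input token is a cache hit.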

Response streaming transforms perceived latency. Instead of waiting for the complete response, stream tokens to the user as they are generated. The user sees the first word within 200-500 milliseconds, even if the complete response takes 3 seconds. This is an application-level change (a streaming API call plus incremental rendering in the client), not a model change.

Context window management directly affects latency. Every additional token in the prompt increases processing time. Production systems need aggressive context compaction - summarizing conversation history, trimming irrelevant document chunks from RAG results, and structuring prompts for minimum token usage without losing essential context. Context compaction alone delivers 50-70% token reduction.[3]
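One simple form of compaction can be sketched as follows. This version drops the oldest turns instead of summarizing them, which is a cruder technique than the summarization described above, and `estimate_tokens` is a hypothetical stand-in that counts words rather than calling a real tokenizer.

```python
from typing import Dict, List

Message = Dict[str, str]


def estimate_tokens(text: str) -> int:
    """Crude stand-in for a real tokenizer: one token per whitespace-separated word."""
    return len(text.split())


def compact_history(messages: List[Message], budget: int) -> List[Message]:
    """Keep system messages plus the most recent turns that fit in `budget` tokens."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]
    remaining = budget - sum(estimate_tokens(m["content"]) for m in system)

    kept: List[Message] = []
    for message in reversed(turns):  # walk from newest to oldest
        cost = estimate_tokens(message["content"])
        if cost > remaining:
            break  # stop at the first turn that no longer fits
        kept.append(message)
        remaining -= cost
    return system + list(reversed(kept))  # restore chronological order
```

A production version would likely replace the dropped turns with a short summary message and use the provider's own tokenizer for the budget check.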

One pattern I have deployed across multiple production systems: a tiered latency architecture. Simple queries (classification, routing, yes/no answers) go to fast, small models with sub-500ms response times. Complex queries (analysis, generation, multi-step reasoning) go to larger models with 2-3 second budgets. The router itself adds only 11 microseconds of overhead.[2]
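A rule-based version of that router might look like the sketch below. The model names, the task labels, and the word-count threshold are all placeholders chosen for illustration; a real router could just as well use a small classifier model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    model: str              # placeholder name, not a real model identifier
    latency_budget_ms: int


FAST = Tier(model="small-fast-model", latency_budget_ms=500)
DEEP = Tier(model="large-reasoning-model", latency_budget_ms=3000)

# Task labels are assumed to come from the calling application.
SIMPLE_TASKS = {"classification", "routing", "yes_no"}


def pick_tier(task_type: str, prompt: str, max_simple_words: int = 200) -> Tier:
    """Send short, simple tasks to the fast tier and everything else to the deep tier."""
    if task_type in SIMPLE_TASKS and len(prompt.split()) <= max_simple_words:
        return FAST
    return DEEP
```

Because the decision is a set lookup and a word count, the routing step itself stays negligible next to any model call.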

Why Does Error Handling in LLM Systems Require a Different Approach?

Traditional API error handling follows a simple pattern: the API returns an error code, you handle it, done. LLM APIs break this pattern in three ways that prototype builders rarely anticipate.

Rate limiting is the dominant failure mode. In February 2026, analysis of production LLM deployments showed that 5% of all LLM call spans reported an error, and 60% of those errors were caused by exceeded rate limits.[4] Rate limits are not bugs - they are a feature of every major LLM API. Production systems need request queuing, adaptive rate limiting, and multi-provider fallback.

Partial failures are invisible. An LLM can return a 200 OK response with hallucinated, incomplete, or incoherent content. There is no error code for "the model made something up." Production error handling must include output validation - checking that responses meet structural requirements, contain required elements, and do not include prohibited content.

Latency variance is extreme. The same prompt can return in 800 milliseconds or 12 seconds depending on API load, model congestion, and prompt complexity. Production systems need timeout management with graceful degradation - if the primary model does not respond within the latency budget, fall back to a faster model or a cached response.
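For the rate-limit case, a common starting point is retrying with exponential backoff and jitter. The sketch below assumes a hypothetical `RateLimitError` raised by your own client wrapper on HTTP 429; real SDKs expose their own exception types, and the delay values here are arbitrary defaults.

```python
import random
import time
from typing import Callable


class RateLimitError(Exception):
    """Hypothetical error raised by a client wrapper when the provider returns HTTP 429."""


def call_with_backoff(
    call: Callable[[], str],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    max_delay: float = 8.0,
    sleep: Callable[[float], None] = time.sleep,
) -> str:
    """Retry `call` on rate limits, doubling the delay ceiling after each failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts:
                raise  # out of retries: let the caller fall back to another provider
            ceiling = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0.0, ceiling))  # jitter spreads out retry bursts
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the retry schedule can be tested without waiting, and the final re-raise is the hook where provider failover would take over.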

The error handling architecture for production LLM systems looks like this:

Layer 1 - Request management: Rate limiting, request queuing, priority classification. High-priority requests (customer-facing) get guaranteed capacity. Low-priority requests (batch processing, analytics) queue during peak load.

Layer 2 - Provider failover: Over 90% of production AI teams now run 5+ LLMs simultaneously.[4] If the primary model is unavailable or slow, route to the secondary. If both fail, return a graceful degradation response ("I cannot process this request right now") rather than an error page.

Layer 3 - Output validation: Check every response against structural requirements before returning it to the user. Does it contain required fields? Does it exceed length limits? Does it include content that should have been filtered? This layer catches the failures that HTTP status codes miss.
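Layers 2 and 3 can be sketched together in a few lines. Providers are modelled here as plain callables, which is an assumption for illustration, and the validation rules (non-empty, length cap, banned terms) are deliberately minimal examples of structural checks.

```python
from typing import Callable, Sequence

FALLBACK_MESSAGE = "I cannot process this request right now."


def is_valid(text: str, max_chars: int = 2000, banned: Sequence[str] = ()) -> bool:
    """Structural checks that an HTTP 200 status alone does not guarantee."""
    if not text.strip() or len(text) > max_chars:
        return False
    lowered = text.lower()
    return not any(term.lower() in lowered for term in banned)


def answer(
    prompt: str,
    providers: Sequence[Callable[[str], str]],
    banned: Sequence[str] = (),
) -> str:
    """Try providers in priority order; degrade gracefully if none gives a valid reply."""
    for provider in providers:
        try:
            candidate = provider(prompt)
        except Exception:
            # Sketch only: production code would catch specific errors and log them.
            continue
        if is_valid(candidate, banned=banned):
            return candidate
    return FALLBACK_MESSAGE
```

Treating an invalid response the same way as a failed call is the key design choice: the secondary model gets a chance before the user sees the degradation message.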

How Do You Manage Hallucinations in Production Systems?

Hallucination management is the engineering challenge that separates production AI from demos. In a demo, occasional hallucinations are "interesting edge cases." In production, a single hallucinated refund policy or fabricated product specification creates legal liability and erodes customer trust.

The uncomfortable truth: no current model breaks 70% accuracy on the FACTS benchmark - meaning every model is wrong more than 30% of the time on factual queries without search augmentation.[2] With search-enabled factuality, the best models reach 77-84%, but that still means roughly 1 in 6 to 1 in 4 factual statements may be incorrect.

Production hallucination management is a three-layer system:

Retrieval-Augmented Generation (RAG): The foundation. Instead of relying on the model's training data, retrieve relevant documents from your knowledge base and include them in the prompt. This constrains the model's responses to information you control. A well-implemented RAG pipeline reduces hallucination rates by 60-80% compared to bare model queries.

Output validation and citation tracking: Every factual claim in the model's response should reference a specific document from the RAG pipeline. If the model makes a claim that cannot be traced to a source document, flag it for review or strip it from the response. This is not foolproof - models can misrepresent source content - but it catches the most egregious fabrications.

Confidence scoring and human escalation: Assign confidence scores to model responses based on source coverage, prompt-response alignment, and response consistency across multiple generations. Low-confidence responses get routed to human review rather than delivered directly to users.
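The citation-tracking and escalation steps can be illustrated with a small sketch. It assumes the prompt instructs the model to tag each claim with a `[doc:ID]` marker placed before the sentence's final punctuation; that marker format, the sentence splitter, and the 0.8 threshold are all illustrative choices, and source coverage is only one of the confidence signals mentioned above.

```python
import re
from typing import Iterable, List

# Assumed citation convention: "[doc:policy-7]" inside the sentence it supports.
CITATION = re.compile(r"\[doc:([A-Za-z0-9_-]+)\]")


def split_sentences(text: str) -> List[str]:
    """Naive splitter: break after ., ! or ? when followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]


def source_coverage(response: str, retrieved_ids: Iterable[str]) -> float:
    """Share of sentences whose citations all point at retrieved documents."""
    allowed = set(retrieved_ids)
    sentences = split_sentences(response)
    if not sentences:
        return 0.0
    supported = 0
    for sentence in sentences:
        cited = set(CITATION.findall(sentence))
        if cited and cited <= allowed:  # at least one citation, none made up
            supported += 1
    return supported / len(sentences)


def route(response: str, retrieved_ids: Iterable[str], threshold: float = 0.8) -> str:
    """Deliver well-sourced answers; send the rest to human review."""
    if source_coverage(response, retrieved_ids) >= threshold:
        return "deliver"
    return "human_review"
```

This only verifies that a citation exists and points at a retrieved document. It does not verify that the document actually supports the claim, which is the misrepresentation risk noted above.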

A dangerous paradox exists in production AI: when reasoning models are placed in closed enterprise systems without web access, they prioritize prompt constraint satisfaction over factual accuracy - systematically distorting facts to follow formatting rules perfectly. Your production system must account for this behavior.

The engineering work here is substantial. A production-grade RAG pipeline with output validation and confidence scoring takes 4-8 weeks to build and tune. But the alternative - deploying an unguarded LLM into a customer-facing application - is a liability that no responsible engineering team should accept.

What Does LLM Cost Optimization Look Like at Production Scale?

The cost shock hits around month 2. The prototype used a single model for every request, sent full conversation history as context, and ran on the most capable (most expensive) model available. At 100 requests per day, the API bill was manageable. At 10,000 requests per day, it is EUR 15,000-50,000 monthly.

Five optimization levers, applied together, cut LLM API spend by 70-85% without changing output quality.[3]

Model routing (40-70% savings): Route simple queries to fast, cheap models. Simple queries represent 80% of enterprise traffic.[2] A classification request does not need GPT-5 - a smaller model handles it at 1/10th the cost. Reserve expensive reasoning models for the 20% of queries that actually require them.

Context compaction (50-70% token reduction): Summarize conversation history instead of sending full transcripts. Trim RAG results to the most relevant chunks. Remove redundant system prompt content. Every token you remove from the context window reduces both cost and latency.

Prompt caching (up to 90% on cache hits): For applications where the system prompt and base context remain consistent across requests, prompt caching eliminates reprocessing of static content. Applications with knowledge bases or lengthy instructions see 60-80% cost reduction from caching alone.[3]

Batching (50% discount): For non-real-time use cases (report generation, batch analysis, content processing), batch API calls reduce per-token costs by up to 50%. The trade-off is latency - batch responses take minutes to hours instead of seconds.

Output structure control: Output tokens cost 3-8x more than input tokens across every major provider.[3] Structuring outputs (JSON schemas, constrained generation) reduces output verbosity without losing information. A structured response with 200 tokens carries the same information as an unstructured response with 600 tokens - at one-third the output cost.

The combined effect: a system costing EUR 50,000 monthly in API fees at prototype scale drops to EUR 7,500-15,000 after applying all five levers. That is the difference between a project that gets killed for cost reasons and one that scales to the next phase.
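The arithmetic behind that range is worth spelling out, because the per-lever percentages do not simply add up. The sketch below assumes each lever removes a fraction of whatever spend is left after the previous one, which is a simplifying assumption of mine, not a claim from the cited source.

```python
def optimized_cost(baseline: float, savings: float) -> float:
    """Monthly cost after removing a fraction `savings` of the baseline spend."""
    return baseline * (1.0 - savings)


def stacked_savings(*lever_savings: float) -> float:
    """Combined savings when each lever cuts a fraction of the remaining spend.

    Assumes the levers act independently, which real systems only approximate.
    """
    remaining = 1.0
    for fraction in lever_savings:
        remaining *= 1.0 - fraction
    return 1.0 - remaining
```

With a EUR 50,000 baseline, 85% savings leaves about EUR 7,500 and 70% leaves about EUR 15,000, matching the range above. Under the independence assumption, a 40% routing saving stacked with a 50% compaction saving yields 70% combined, not 90%.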

Why Is Observability the Layer That Teams Skip and Regret?

Enterprise LLM spending has surged past USD 8.4 billion.[4] The LLM observability platform market grew to USD 1.97 billion in 2025, projected to reach USD 2.69 billion in 2026 at a 36.3% CAGR.[5] Those numbers reflect a hard-learned lesson: you cannot optimize what you cannot measure.

Prototype observability is console.log. Production observability covers four dimensions:

Token usage tracking: Which endpoints consume the most tokens? Which users generate the longest conversations? Where are tokens being wasted on redundant context? Without token-level tracking, cost optimization is guesswork.

Latency monitoring: P50, P95, and P99 latency by endpoint, model, and request type. Latency regressions often indicate upstream issues (RAG pipeline slowdowns, database query performance, model provider degradation) that token-level metrics miss.

Quality scoring: Automated evaluation of response quality using rubrics, reference comparisons, or user feedback signals. A quality score that drops 10% over a week indicates model drift, RAG pipeline degradation, or changes in query patterns that need investigation.

Cost dashboards: Real-time cost tracking by model, endpoint, user segment, and time period. The ability to forecast next month's API costs based on current usage patterns prevents budget surprises and enables proactive optimization.
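A minimal sketch of the data behind the latency and cost dimensions, using only the Python standard library. The `CallRecord` fields are an assumed schema for one logged LLM call, not a standard format; in practice these records would flow into whatever metrics backend you already run.

```python
import statistics
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CallRecord:
    """One logged LLM call (assumed schema)."""
    endpoint: str
    model: str
    latency_ms: float
    input_tokens: int
    output_tokens: int
    cost_eur: float


def latency_percentiles(records: List[CallRecord]) -> Dict[str, float]:
    """P50, P95 and P99 latency; needs at least two records."""
    latencies = [r.latency_ms for r in records]
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def cost_by_dimension(records: List[CallRecord], dimension: str) -> Dict[str, float]:
    """Total cost grouped by a record attribute such as 'model' or 'endpoint'."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[getattr(record, dimension)] += record.cost_eur
    return dict(totals)
```

Grouping the same records by `endpoint`, `model`, or a user-segment field gives the per-dimension views described above without changing what is logged.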

89% of organizations have implemented observability for their AI agents, with quality issues emerging as the primary production barrier at 32%.[4] The teams that invested in observability from day one catch quality regressions within hours. The teams that added observability after a production incident spend weeks reconstructing what happened.

How Do You Handle Security in Production AI Systems?

Security in production AI is not an add-on feature. It is an architectural requirement that affects every layer of the system. Three threat categories require explicit engineering:

Prompt injection: Users (or attackers) craft inputs designed to override the system prompt and make the model behave in unintended ways. Production systems need input sanitization, prompt structure isolation (separating user input from system instructions at the API level), and output filtering to catch injection-driven responses.

Data exfiltration through model outputs: An LLM with access to your knowledge base can be tricked into revealing information that should be access-controlled. If your RAG pipeline includes HR documents, financial data, and product specifications, the model can potentially surface any of them to any user. Production systems need document-level access controls in the RAG pipeline, not just user authentication at the application level.

PII leakage in API calls: Every piece of data sent to an LLM API is processed by a third party. Customer names, email addresses, phone numbers, and account details in user messages become third-party data processing. Production systems need PII detection and scrubbing before data hits the API, data processing agreements with every LLM provider, and audit logs for compliance.
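Two of these controls, PII scrubbing before the API call and document-level access control in retrieval, can be sketched briefly. The regular expressions are illustrative and will both miss real PII and flag some harmless digit runs; the `Chunk` type and its `allowed_groups` field are assumptions about how your RAG index stores permissions.

```python
import re
from dataclasses import dataclass
from typing import FrozenSet, List

# Illustrative patterns only; real PII detection needs far broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD = re.compile(r"\b\d(?:[ -]?\d){12,18}\b")  # 13-19 digits, optional separators


def scrub_pii(text: str) -> str:
    """Mask card-like numbers and email addresses before text leaves your system."""
    text = CARD.sub("[CARD]", text)
    return EMAIL.sub("[EMAIL]", text)


@dataclass(frozen=True)
class Chunk:
    """A retrieved document chunk with the groups allowed to see it (assumed schema)."""
    doc_id: str
    text: str
    allowed_groups: FrozenSet[str]


def authorized_chunks(chunks: List[Chunk], user_groups: FrozenSet[str]) -> List[Chunk]:
    """Drop chunks the requesting user may not read before they reach the prompt."""
    return [c for c in chunks if c.allowed_groups & user_groups]
```

Filtering happens before prompt assembly, so a document the user cannot read never enters the model's context and cannot be surfaced by a crafted question.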

For DACH companies, GDPR adds another dimension. Personal data sent to LLM APIs outside the EU requires explicit legal basis. Enterprise-tier API deployments from Azure OpenAI Service and AWS Bedrock offer EU data residency - but the default API endpoints do not. This is a configuration detail that prototypes ignore and production systems cannot.

What Is the Path from Wrapper to Production?

The path is sequential, not parallel. Each layer builds on the previous one:

Week 1-2: Harden the prototype. Add structured error handling, request timeouts, and basic logging. This is not production-grade yet, but it stops the system from failing silently.

Week 3-6: Build the RAG pipeline and hallucination management layer. This is the highest-risk engineering work. Get it right before scaling.

Week 7-10: Implement cost optimization. Add model routing, prompt caching, and context compaction. Run cost projections against production traffic estimates.

Week 11-14: Deploy observability and security. Add token tracking, quality scoring, PII scrubbing, and access controls. This is the layer that enables safe scaling.

Week 15-18: Load test, security audit, and gradual rollout. Start with 5% of production traffic, monitor all observability metrics, and scale incrementally.
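One way to implement the 5% starting slice is deterministic bucketing by user ID, sketched below. Bucketing by user rather than by request is my assumption here, as are the salt value and the function name; it keeps each user on a consistent code path while the percentage grows.

```python
import hashlib


def in_rollout(user_id: str, percent: float, salt: str = "llm-rollout-v1") -> bool:
    """Return True if this user falls inside the first `percent` of hash buckets."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # stable bucket in 0..9999
    return bucket < percent * 100
```

Because the bucket depends only on the salt and the user ID, raising `percent` from 5 to 20 keeps every user who was already in the rollout and adds new ones, which makes before-and-after comparisons in the observability metrics easier to interpret.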

The total timeline is 4-5 months from prototype to production. Companies that try to compress this into 4-5 weeks end up with a prototype running in production - and the incidents that follow.

For the architectural patterns that make production AI work at enterprise scale, read our guide on LLM integration patterns. For the build-versus-buy decision on AI infrastructure, see AI build vs. buy models. And for the governance framework that keeps production AI compliant, explore our AI governance guide for mid-market.

If you have a working AI prototype and need to take it to production, explore our AI engineering services. We bridge the gap between demo and production-grade system - with the error handling, cost optimization, and security that prototypes skip.

Frequently Asked Questions

How long does it take to move from a GPT wrapper prototype to production AI?

A well-scoped production deployment takes 3-6 months of engineering work after the prototype phase. The timeline depends on integration complexity, security requirements, and scale. The prototype represents 5-10% of the total engineering effort. The remaining 90-95% covers error handling, observability, cost optimization, and security hardening that demos do not require.

What is the biggest technical risk when deploying LLMs in production?

Hallucination management is the highest-risk engineering challenge. No current model breaks 70% accuracy on factuality benchmarks without search augmentation. Production systems need retrieval-augmented generation, output validation layers, and confidence scoring to keep hallucination rates below business-acceptable thresholds for customer-facing applications.

How much does LLM API cost optimization actually save in production?

Applying five optimization levers - model routing, context compaction, prompt caching, batching, and output structure control - reduces LLM API spend by 70-85%. A system costing EUR 50,000 monthly in API fees with an unoptimized prototype architecture typically drops to EUR 7,500-15,000 after optimization, without degrading output quality or response accuracy.

References

- [1] Pertama Partners (2026). "AI Project Failure Rate 2026: 80% Fail." pertamapartners.com

- [2] Suprmind (2026). "AI Hallucination Rates and Benchmarks in 2026." suprmind.ai

- [3] Morph (2026). "LLM Cost Optimization: 5 Levers to Cut API Spend 70-85%." morphllm.com

- [4] Datadog (2026). "State of AI Engineering." datadoghq.com

- [5] Research and Markets (2026). researchandmarkets.com

- [6] ProjectDiscovery (2025). "How We Cut LLM Costs by 59% With Prompt Caching." projectdiscovery.io

- [7] Lakera (2026). "LLM Hallucinations in 2026: How to Understand and Tackle AI's Mo..." lakera.ai
