Why 85% of AI Projects Fail Before Production

Table of Contents

- How Bad Is the AI Project Failure Rate, Really?

- What Are the 5 Categories of AI Project Failure?

- Why Is Data Readiness the Top Killer?

- Why Do Business Cases Collapse Mid-Project?

- Why Does Executive Sponsorship Erode?

- Why Does Technical Infeasibility Only Account for 12%?

- How Does Organizational Resistance Compound Every Other Failure?

- The Diagnostic Checklist: Score Your Organization Before You Start

- What Separates the 15% That Succeed?

- Frequently Asked Questions

- References

TL;DR

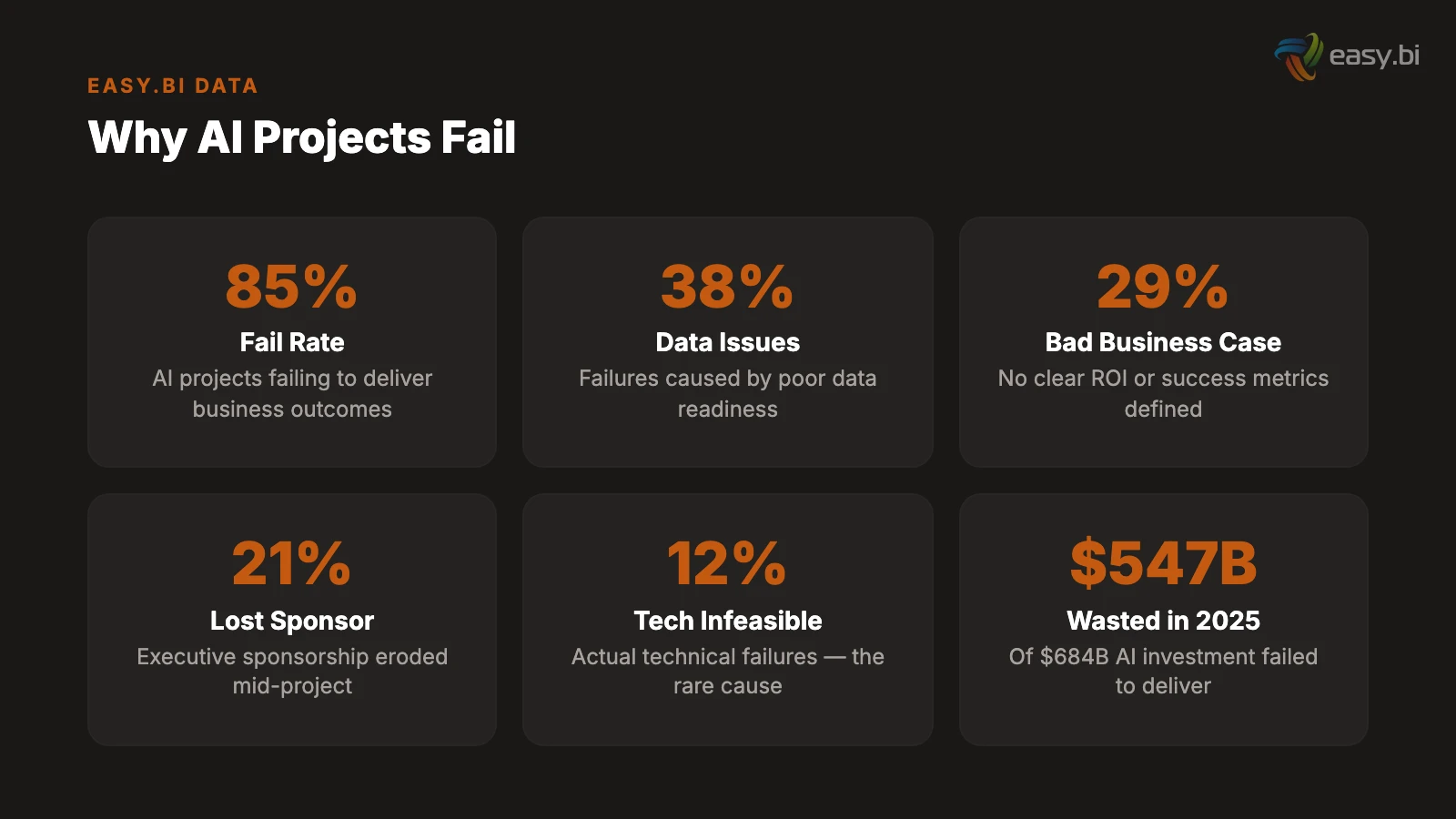

85% of AI projects fail not because of bad models but because of organizational causes: poor data readiness (38%), unviable business cases (29%), and lost executive sponsorship (21%). Only 12% fail for technical reasons. Use the diagnostic checklist in this article to identify your risk signals before committing budget.

Key Takeaways

- The failure is almost never the model. Of AI projects that fail, only 12% cite technical infeasibility as the cause. The remaining 88% fail due to poor data readiness (38%), unviable business cases (29%), and loss of executive sponsorship (21%).

- 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024. The average sunk cost per abandoned project was EUR 4.2 million. This acceleration signals that organizations are hitting scaling walls, not technology walls.

- Projects with clear pre-approval success metrics achieve 54% success rates versus 12% for those without. The single highest-leverage action before starting any AI project is defining what measurable outcome constitutes success.

- Data readiness is the root cause that masquerades as every other problem. When executives say the AI does not work, the model is inaccurate, or the results are not useful, the underlying issue is often the data: 63% of organizations either do not have or are unsure whether they have AI-ready data management practices.

- The diagnostic checklist in this article covers 5 failure categories with 23 specific risk signals. Score your organization before committing budget to an AI initiative - not after the pilot stalls.

Data-driven analysis of why 85% of AI projects fail before production. Covers organizational, data, process, and partner-selection causes with a diagnostic checklist for mid-market CTOs in the DACH region.

The statistic is everywhere: 85% of AI projects fail to deliver their intended business outcomes.[1] What is not everywhere is a useful analysis of why. Most failure postmortems blame "bad data" or "lack of strategy" and move on, as if naming the symptom were the same as diagnosing the disease.

It is not. After leading AI implementations that succeeded and inheriting ones that failed, I have mapped the actual failure patterns. They are not random. They cluster into 5 categories, and most organizations trigger 3 or more of them simultaneously.

This article breaks down each category with the data behind it, the specific signals that predict failure, and a diagnostic checklist you can score before committing budget to your next AI initiative.

How Bad Is the AI Project Failure Rate, Really?

The numbers are worse than most executive summaries admit. Here is what the research actually says:

- RAND Corporation (2025): 80.3% of AI projects fail to deliver intended business value. That total breaks down into 33.8% abandoned before reaching production, 28.4% that reach completion but miss expected value, and 18.1% that deliver some value but cannot justify the investment.[2]

- Gartner (2025): At least 50% of generative AI projects are abandoned after proof of concept due to poor data quality, inadequate risk controls, escalating costs, or unclear business value.[3]

- MIT Sloan (2025): 95% of GenAI pilots fail to scale to production deployment. Infrastructure limitations account for 64% of scaling failures.[2]

- S&P Global (2025): 42% of companies abandoned most of their AI initiatives in 2025, up from 17% in 2024.[2]

The trend is accelerating. Not because AI technology is getting worse - it is getting dramatically better. The failure rate is climbing because more organizations are attempting AI projects without the organizational prerequisites to succeed. The technology works. The organizations do not.

Over USD 547 billion of the USD 684 billion invested in AI initiatives in 2025 failed to deliver intended business value.[2] That is not a technology problem. That is a management problem.


What Are the 5 Categories of AI Project Failure?

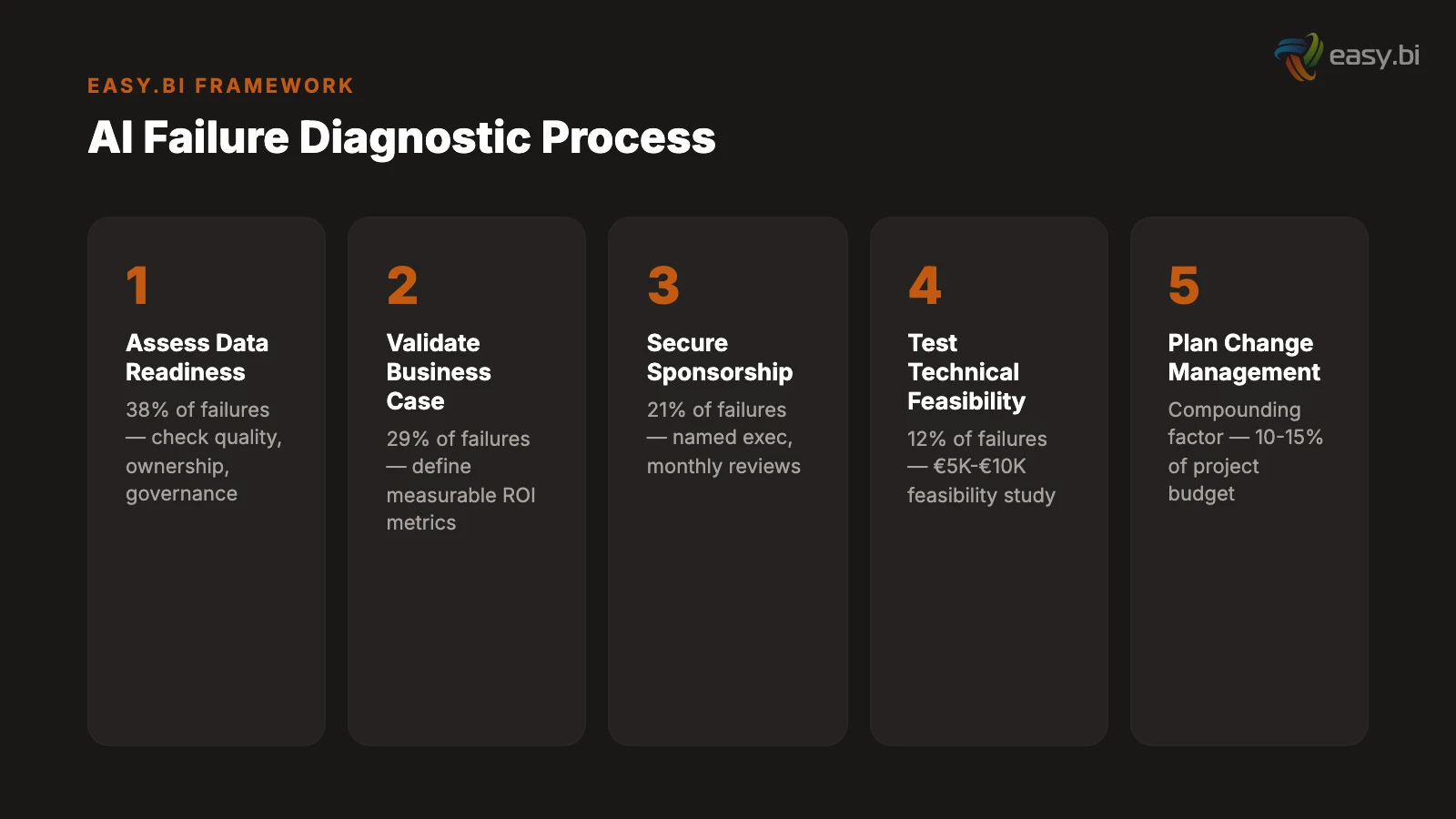

When you group failure causes across research studies and real-world postmortems, they cluster into 5 categories. Understanding which category your organization is most vulnerable to is more valuable than any technical architecture decision.

| Failure Category | % of Failures | Root Cause | When It Becomes Visible |

|---|---|---|---|

| Data readiness | 38% | Data quality, availability, or governance is insufficient | Weeks 2-4 of pilot |

| Business case viability | 29% | No clear ROI or success metrics defined upfront | Months 3-6 (during validation) |

| Executive sponsorship | 21% | Decision-maker support erodes during the project | Months 4-8 (during scaling) |

| Technical infeasibility | 12% | The technology cannot solve the problem as scoped | Weeks 3-6 of pilot |

| Organizational resistance | Compounding factor | End users reject or bypass the AI system | Months 2-6 (during rollout) |

Notice: only 12% of failures are technical.[2] The model worked. The algorithm performed. The system was built to spec. And the project still failed because the organization was not ready for what it built.

Why Is Data Readiness the Top Killer?

38% of AI project failures trace directly to data quality or data availability.[3] But "bad data" is too vague to be actionable. Here is what data readiness failure actually looks like in practice.

Scattered data ownership. The data the AI system needs exists in 4 different systems owned by 4 different teams. No single person has the authority to grant access to all of it. The data engineering team spends 6 weeks negotiating access and building extraction pipelines before the first model training run.

Inconsistent data quality. The CRM has 3 different formats for phone numbers. The ERP has product codes that changed naming conventions twice in the last 5 years. The support system has free-text fields where agents entered data however they wanted. Cleaning this data is not a sprint task - it is a quarter-long project.

Insufficient historical depth. Predictive models need historical data to learn patterns. A churn prediction model needs 2-3 years of customer behavior data. A demand forecasting model needs seasonal data spanning multiple years. If the company migrated systems 18 months ago and the old data was not migrated, the historical record is too shallow for reliable predictions.

63% of organizations either do not have or are unsure whether they have the right data management practices for AI.[3] Gartner predicts that through 2026, organizations will abandon 60% of AI projects that are unsupported by AI-ready data.
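The inconsistent-format problem described above can be measured before any cleaning starts. The following is a minimal sketch, assuming the phone numbers are available as plain strings: it reduces each value to a format shape (digits become `9`, letters become `A`) and counts how many distinct shapes occur. The function names and the sample values are hypothetical.

```python
from collections import Counter


def shape(value: str) -> str:
    """Map a raw value to its format shape: digits -> '9', letters -> 'A'."""
    out = []
    for ch in value.strip():
        if ch.isdigit():
            out.append("9")
        elif ch.isalpha():
            out.append("A")
        else:
            out.append(ch)  # keep punctuation and spaces as-is
    return "".join(out)


def profile_formats(values: list[str]) -> Counter:
    """Count how often each format shape occurs in a column."""
    return Counter(shape(v) for v in values)


# Hypothetical sample of CRM phone numbers written in three formats.
phones = ["+49 30 1234567", "030/1234567", "(030) 1234567", "+49 30 7654321"]
formats = profile_formats(phones)
for pattern, count in formats.most_common():
    print(f"{count:>3}  {pattern}")
```

A column that yields more than one shape is a candidate for a normalization rule. The number of distinct shapes across key fields gives a rough, comparable indicator of how much cleaning effort to budget.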

I have never seen an AI project fail because the model architecture was wrong. I have seen dozens fail because the training data was a mess. The most underrated AI investment is not GPU infrastructure or ML talent - it is a data engineer who spends 3 months fixing your data before any model training begins.

The diagnostic signals for data readiness failure:

- No single owner for the data the AI system needs

- Data quality has never been formally assessed

- Key data lives in spreadsheets, email attachments, or unstructured documents

- The company migrated core systems in the last 2 years without full data migration

- No documented data dictionary or schema governance

If 3 or more of these signals are present, address data readiness before starting the AI pilot. A EUR 5,000-10,000 data audit saves the EUR 50,000-200,000 you would spend discovering the problem during the build.

Why Do Business Cases Collapse Mid-Project?

29% of abandoned AI projects fail because the business case was never viable - or was never properly defined in the first place.[2]

The pattern is consistent: an executive sees a demo, gets excited about AI capabilities, and sponsors a pilot without defining what success looks like in business terms. The team builds something technically impressive. The demo works. Then someone asks: "What is the ROI?" And nobody has a clear answer.

Projects with clear pre-approval success metrics achieve 54% success rates. Projects without defined metrics achieve 12%.[2] That is not a marginal difference - it is the difference between a coin flip and a near-certain failure.

The business case failure modes:

Undefined success metrics. "Improve customer experience" is not a metric. "Reduce average first-response time from 4 hours to 15 minutes for the top 20 question categories, measured over 60 days" is a metric. The specificity forces the team to scope the pilot correctly and gives the CFO a clear ROI calculation.

Misaligned expectations. The board expects "AI transformation." The team delivers a chatbot that handles 30% of support queries. Technically, this is a success - it reduces support workload and improves response times. Politically, it is a failure because the expectations were set at "transformation" rather than "incremental improvement." 57% of organizations that reported AI failure said they expected too much, too fast.[4]

Cost overruns that destroy the business case. The pilot was budgeted at EUR 50,000. Data preparation added EUR 20,000. Integration complexity added EUR 15,000. The total hit EUR 85,000 - and the business case that justified a EUR 50,000 investment does not justify a EUR 85,000 one. 68% of AI projects exceed initial estimates by an average of 42%.[5]
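The arithmetic in this example is worth making explicit, because it shows why a fixed contingency can still fall short. The sketch below uses the figures from the paragraph above; the helper name is hypothetical, and the 25% buffer is the one suggested in the checklist later in this article.

```python
def overrun_pct(budget: float, actual: float) -> float:
    """Overrun as a percentage of the original budget."""
    return (actual - budget) / budget * 100


budget = 50_000  # approved pilot budget (EUR)
extras = {"data preparation": 20_000, "integration": 15_000}
actual = budget + sum(extras.values())

buffered = budget * 1.25  # budget plus a 25% contingency buffer
typical = budget * 1.42   # budget after the 42% average overrun

print(f"actual cost:  EUR {actual:,.0f}")
print(f"overrun:      {overrun_pct(budget, actual):.0f}%")
print(f"with buffer:  EUR {buffered:,.0f}")
print(f"avg overrun:  EUR {typical:,.0f}")
```

In this scenario the overrun is 70% of the original budget, above both the 42% average and a 25% buffer, so the business case has to be re-approved rather than absorbed.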

The diagnostic signals for business case failure:

- No written success metrics before pilot approval

- The executive sponsor cannot articulate the expected ROI in specific numbers

- The pilot scope keeps expanding ("while we are at it, can it also...")

- No kill criteria defined - the team does not know what results would cause them to stop

- The budget does not include a contingency buffer
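A success metric and its kill criterion can be written down as data rather than prose, which makes the pilot review mechanical. The sketch below uses the first-response-time example from earlier in this section. The class, the field names, and the 60-minute kill threshold are illustrative assumptions, not figures from the research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessMetric:
    name: str
    baseline: float        # value before the pilot
    target: float          # value that counts as success
    kill_threshold: float  # values worse than this stop the project (assumed)
    window_days: int       # measurement period

    def evaluate(self, measured: float) -> str:
        """Classify a measured value; lower is better for this metric."""
        if measured <= self.target:
            return "success"
        if measured > self.kill_threshold:
            return "kill"
        return "continue"


# First-response time in minutes: from 4 hours to 15 minutes over 60 days.
metric = SuccessMetric(
    name="avg first-response time (top 20 question categories)",
    baseline=240,
    target=15,
    kill_threshold=60,
    window_days=60,
)
print(metric.evaluate(12))   # meets the target
print(metric.evaluate(45))   # improved, but not at target yet
print(metric.evaluate(180))  # worse than the kill threshold
```

Writing the kill threshold next to the target forces the conversation about when to stop to happen before the pilot starts, not after the budget is spent.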

Why Does Executive Sponsorship Erode?

21% of AI project failures trace to loss of executive sponsorship.[2] The executive who championed the initiative moves on, loses interest, or faces competing priorities. Without sustained sponsorship, the project loses budget protection, organizational priority, and the authority to make cross-departmental decisions.

AI pilots with sustained executive sponsorship achieve 68% success rates. Those that lose sponsorship mid-project achieve 11%.[2] That 57-percentage-point gap makes sponsorship the single most predictive factor in AI project outcomes.

Sponsorship erodes for predictable reasons:

Long timelines with invisible progress. The first 4-6 weeks of an AI pilot produce no visible output. Data preparation, pipeline engineering, and model training look like nothing is happening to a non-technical sponsor. If the sponsor does not understand that this is normal, they lose confidence and redirect attention to projects with more visible progress.

Organizational restructuring. In DACH mid-market companies, the average tenure of a CTO or CDO is 3-4 years. If the sponsor leaves mid-project, the successor inherits a commitment they did not make and may not prioritize. AI pilots that span more than 6 months are particularly vulnerable to this risk.

Competing priorities. A cybersecurity incident, a major client escalation, or a regulatory deadline pulls the sponsor's attention. AI pilot reviews get rescheduled, then skipped, then forgotten. The team continues building without strategic guidance, and the deliverable drifts from what the business needs.

The fix is structural: assign a named executive sponsor, schedule fixed monthly reviews that cannot be delegated, and define a succession plan if the sponsor changes roles. These are project management basics that AI projects routinely skip because they feel "too corporate" for an innovation initiative.

Why Does Technical Infeasibility Only Account for 12%?

This number surprises most technical leaders. Only 12% of AI project failures are caused by the technology itself not being able to solve the problem.[2]

The reason is straightforward: the technology has outpaced the organizations using it. In 2020, many AI use cases were genuinely infeasible - the models were not capable enough, the infrastructure was too expensive, the tooling was too immature. In 2026, frontier models handle the vast majority of enterprise AI use cases at production quality. RAG, fine-tuning, and prompt engineering cover most of the spectrum, from simple chatbots to complex decision-support systems.

When technical infeasibility does occur, it clusters in specific patterns:

- Accuracy thresholds that no current model meets. Medical diagnosis support that requires 99.9% accuracy. Safety-critical systems where even a 1% error rate is unacceptable. These use cases push beyond what current models reliably deliver.

- Latency requirements that conflict with model complexity. Real-time systems requiring sub-50-millisecond inference generally cannot put a large language model in the request path, regardless of the use case. Model size and hardware set practical latency floors.

- Data that does not exist. A predictive model requires data that the organization does not collect and cannot retroactively generate. No amount of model sophistication compensates for absent input data.

Technical feasibility assessments catch these issues in weeks, not months. A EUR 5,000-10,000 feasibility study at the start of a project surfaces most of this 12% technical failure risk before significant budget is committed.


How Does Organizational Resistance Compound Every Other Failure?

Organizational resistance is not a standalone failure category - it is a multiplier that makes every other category worse. When end users do not trust, do not understand, or actively resist the AI system, even technically perfect implementations fail in practice.

The resistance patterns:

"The AI is wrong" feedback loop. Users find one incorrect output, lose trust in the entire system, and revert to the manual process. A chatbot that answers 95% of questions correctly gets rejected because 5 out of 100 answers were wrong. The human process also has errors, but humans trust human errors more than machine errors.

Workflow interference. The AI system requires users to change how they work. New tools, new processes, new decision points. 77% of successful AI deployments attribute their success to integrating AI into existing workflows rather than requiring new ones.[4] Systems that demand behavior change without a clear personal benefit to the user face adoption rates below 20%.

Fear of replacement. Support agents, data analysts, and process specialists worry that the AI system will eliminate their roles. Whether this fear is justified is irrelevant - it drives resistance behaviors: delayed training participation, selective use of the system, and negative feedback that influences management decisions.

The change management investment that prevents organizational resistance is 10-15% of the total project budget. Most AI pilots allocate 0%. The pilot succeeds technically and fails organizationally - a pattern so common it has a name: the "last mile problem."

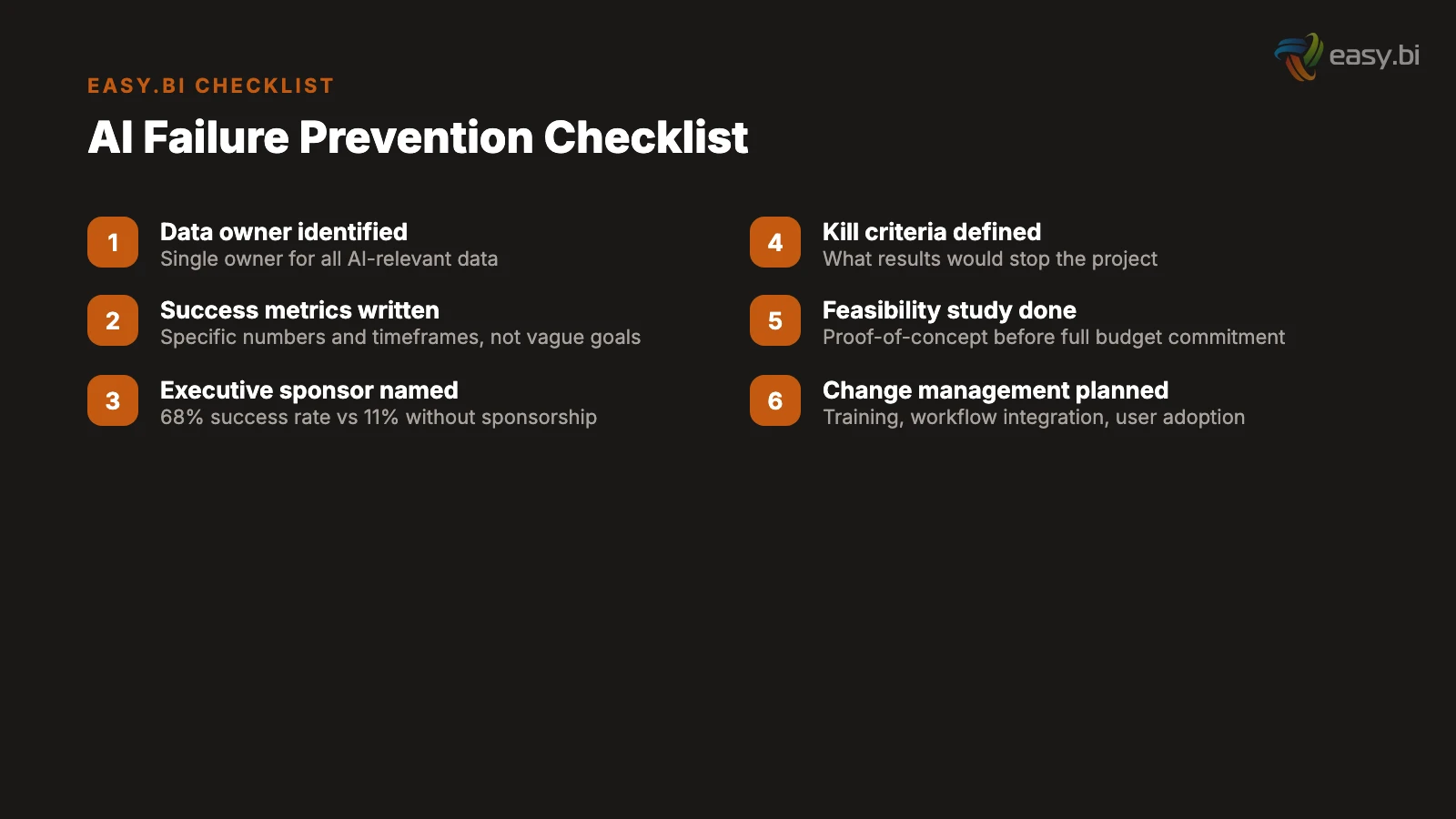

The Diagnostic Checklist: Score Your Organization Before You Start

Score each item from 0 (not in place) to 2 (fully in place). A total score below 25 signals high failure risk. Address the gaps before committing to a full pilot budget.

Data Readiness (10 points possible):

- Single identified owner for all data the AI system needs (0-2)

- Data quality has been formally assessed in the last 12 months (0-2)

- Core data is in structured, accessible systems with APIs (0-2)

- Historical data spans the required time period for the use case (0-2)

- Data dictionary or schema governance exists and is current (0-2)

Business Case (10 points possible):

- Written success metrics with specific numbers and timeframes (0-2)

- ROI calculation using total cost of ownership, not just build cost (0-2)

- Kill criteria defined - what results would stop the project (0-2)

- Budget includes 25% contingency buffer (0-2)

- Pilot scope is fixed and documented - no scope creep clause (0-2)

Executive Sponsorship (10 points possible):

- Named executive sponsor with decision authority (0-2)

- Monthly review meetings scheduled for full project duration (0-2)

- Sponsor can articulate the business case without assistance (0-2)

- Succession plan if sponsor changes roles (0-2)

- Cross-departmental authority documented for data access and integration (0-2)

Technical Feasibility (8 points possible):

- Feasibility assessment completed with proof-of-concept results (0-2)

- Integration points with existing systems identified and documented (0-2)

- Accuracy, latency, and availability requirements defined (0-2)

- Infrastructure capacity assessed for pilot and production scale (0-2)

Organizational Readiness (8 points possible):

- Change management plan with training schedule (0-2)

- End users involved in requirements and testing (0-2)

- AI system designed to integrate with existing workflows (0-2)

- Communication plan addressing job security concerns (0-2)

Scoring: 37-46 points: proceed with confidence. 25-36 points: address specific gaps before starting. Below 25: high failure risk - invest in readiness before committing to a pilot.
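The scoring rules above are simple enough to automate, for example in a shared assessment script. The following is a minimal sketch: the category keys and the function name are hypothetical, and it assumes a score of 1 means "partially in place", which the checklist implies but does not state.

```python
# Maximum points per category, taken from the checklist above.
MAX_POINTS = {
    "data_readiness": 10,
    "business_case": 10,
    "executive_sponsorship": 10,
    "technical_feasibility": 8,
    "organizational_readiness": 8,
}


def score_checklist(scores: dict[str, list[int]]) -> tuple[int, str]:
    """Sum item scores (0-2 each) and map the total to a risk band."""
    total = 0
    for category, max_points in MAX_POINTS.items():
        items = scores[category]
        if len(items) * 2 != max_points:
            raise ValueError(f"{category}: expected {max_points // 2} items")
        if any(s not in (0, 1, 2) for s in items):
            raise ValueError(f"{category}: each item must be 0, 1, or 2")
        total += sum(items)
    if total >= 37:
        band = "proceed with confidence"
    elif total >= 25:
        band = "address specific gaps before starting"
    else:
        band = "high failure risk"
    return total, band


# Hypothetical self-assessment.
example = {
    "data_readiness": [1, 0, 1, 2, 0],
    "business_case": [2, 1, 1, 0, 1],
    "executive_sponsorship": [2, 2, 1, 0, 1],
    "technical_feasibility": [1, 2, 1, 1],
    "organizational_readiness": [0, 1, 2, 0],
}
total, band = score_checklist(example)
print(total, band)
```

Keeping the per-category lists, rather than only the total, makes it easy to see which category drags the score down and should be addressed first.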

What Separates the 15% That Succeed?

The organizations that beat the failure rate share three characteristics that are organizational, not technical.

They validate before they build. Successful organizations spend 10-15% of the total project budget on feasibility assessment before committing to the full build. This includes a data audit, a technical proof of concept, a business case validation, and a stakeholder alignment workshop. The EUR 5,000-15,000 invested in validation prevents the EUR 50,000-200,000 wasted on a pilot that was never going to work.

They define success in numbers. Projects that run a formal data readiness assessment achieve 47% success versus 14% for those that do not. Projects with clear pre-approval metrics achieve 54% success versus 12% without.[2] The successful 15% are not better at building AI - they are better at deciding what to build and how to measure whether it worked.

They treat AI as a team sport. Among the 77% of organizations that deliver at least one successful AI use case, success is attributed primarily to integrating AI into existing workflows and securing full support from business executives.[4] The CTO does not build AI in isolation. The CFO owns the business case. The COO owns the workflow integration. The CEO owns the sponsorship. AI succeeds when it is an organizational initiative, not a technology project.

For the cost framework behind successful AI pilots, read what an AI pilot actually costs. For the technical decision framework on implementation approaches, see RAG vs. fine-tuning vs. prompt engineering. And for the governance framework that supports production AI, read AI governance for mid-market.

If you want to stack the odds in your favor before starting an AI initiative, explore our AI services. We help DACH mid-market companies assess readiness, define success metrics, and build AI systems that reach production - because the 85% failure rate is not inevitable. It is preventable.

Frequently Asked Questions

What percentage of AI projects actually fail?

Research puts the failure rate between 80% and 85% depending on the definition. Gartner found that at least 50% of generative AI projects are abandoned after proof of concept. RAND Corporation reports 80.3% fail to deliver intended business value. The number rises to 95% for GenAI pilots that attempt to scale to production.

What is the most common reason AI projects fail?

Poor data readiness, cited by 38% of organizations that experienced AI project failure. This includes insufficient data quality, incomplete historical records, data scattered across incompatible systems, and the absence of data governance practices. 63% of organizations either do not have or are unsure whether they have appropriate data management practices for AI.

How can I prevent my AI project from failing?

Three actions prevent most failures: define measurable success metrics before writing code (54% success rate versus 12% without), run a data feasibility audit in the first two weeks (costs EUR 5,000 to 10,000 and eliminates the top failure cause), and secure sustained executive sponsorship for the full project duration (68% success rate versus 11% when sponsorship is lost).

How much money do failed AI projects waste?

The average sunk cost per abandoned AI initiative is EUR 4.2 million for enterprises. In 2025, over USD 547 billion of the USD 684 billion invested in AI initiatives failed to deliver intended business value. Mid-market projects typically waste EUR 50,000 to 200,000 per failed pilot when including internal team time and opportunity costs.

References

- [1] Gartner (2024). "85% of AI projects fail to deliver on intended business outcome." gartner.com

- [2] Pertama Partners (2026). "AI Project Failure Rate 2026: 80% Fail." pertamapartners.com

- [3] Gartner (2025). "Lack of AI-Ready Data Puts AI Projects at Risk." gartner.com

- [4] Gartner (2026). "AI Projects in I&O Stall Ahead of Meaningful ROI Returns." gartner.com

- [5] Pertama Partners (2026). "Hidden Costs of AI Implementation." pertamapartners.com

- [6] Deloitte (2025). "The State of AI in the Enterprise." deloitte.com

- [7] McKinsey (2025). "The State of AI: Agents, Innovation, and Transformation." mckinsey.com
