How to Evaluate a Software Development Partner (Without Getting Burned)

Table of Contents

- Why Traditional RFPs Fail for Development Partner Selection

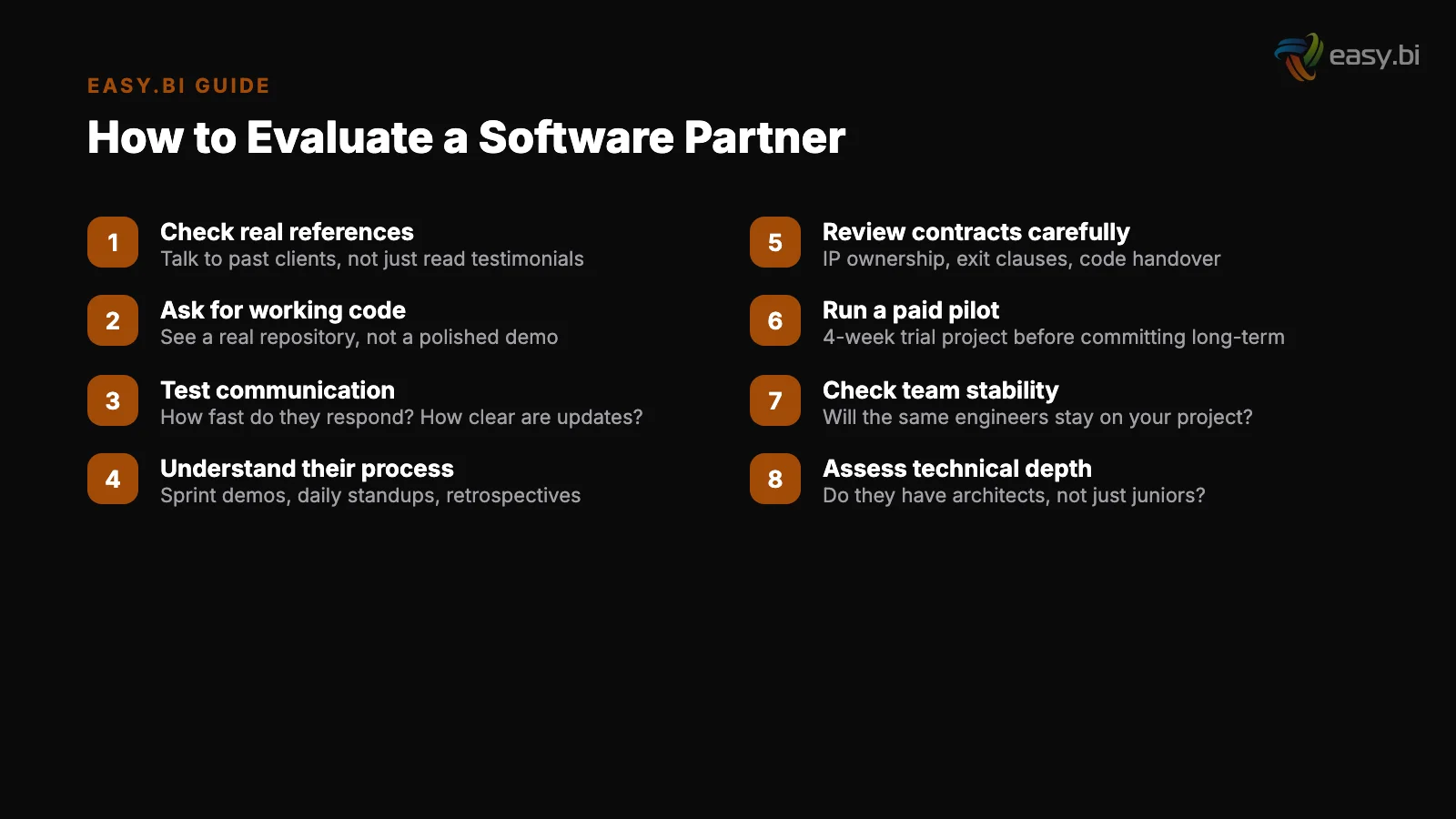

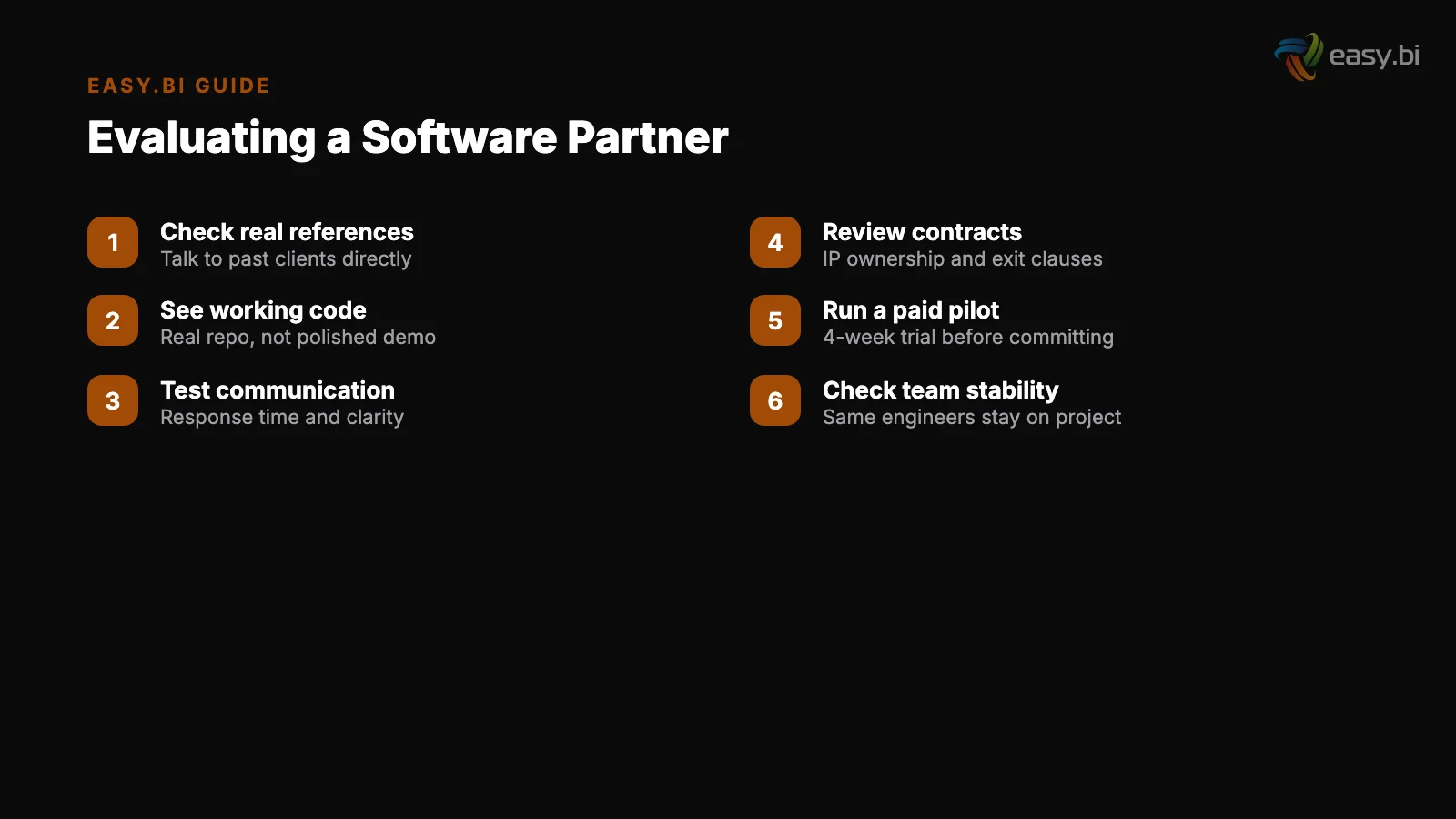

- The 8 Questions That Actually Predict Partner Quality

- Red Flags That Predict Failure

- How to Assess Technical Depth Without Being Technical

- The Paid Pilot: Your Best Evaluation Tool

- Reference Checks That Actually Reveal Something

- What Good Looks Like: A Partner Evaluation Scorecard

- How to Start the Conversation Right

- References

TL;DR

Key Takeaways

- RFPs with 200 questions and polished vendor presentations produce confidence, not insight. The signals that predict partner quality are retention rates, deployment frequency, and whether the people who pitch are the people who build.

- The industry average for IT outsourcing client retention is 72-78%. Any partner significantly above that range is doing something the market cannot easily replicate - ask for the number and verify it.

- Run a paid 4-week pilot before committing to a 12-month engagement. You will learn more about communication patterns, code quality, and problem-solving under pressure than 6 months of evaluation would ever reveal.

- 57% of project failures trace to communication breakdowns between business and technology stakeholders. The partner who asks 'What business outcome does this feature serve?' before writing code is the one who delivers value.

- Red flags that predict failure: the sales team disappears after signing, the 'senior architects' in the pitch are replaced by junior developers on the project, and the partner cannot show a production deployment from the last 2 weeks.

A practical checklist for evaluating software development partners: what to ask, red flags to watch for, how to assess technical depth, and why paid pilots beat RFPs. Based on patterns from 100+ DACH enterprise projects.

"We tried outsourcing before and got burned." We hear this in almost every first conversation with a DACH mid-market CTO. The story follows a familiar pattern: a vendor promised senior engineers, delivered juniors. The sales team was impressive, the delivery team was not. Communication broke down. Timelines doubled.

The budget tripled. And the company went back to struggling with in-house hiring - not because it worked, but because the alternative felt worse.

The problem was never outsourcing itself. The problem was the evaluation process. Most companies select development partners using the same criteria they use to buy enterprise software: feature checklists, reference calls with hand-picked clients, and vendor presentations optimized for impressive slides.

None of this predicts whether the partner will actually deliver.

This guide gives you the evaluation framework we wish every client had used before their first failed engagement. It is based on patterns we have observed across 100+ projects in the DACH mid-market - both our own and the failed projects we were brought in to rescue.

Why Traditional RFPs Fail for Development Partner Selection

The Request for Proposal process was designed for commodity procurement. It works when you are buying 500 identical laptops. It fails spectacularly when you are selecting a team of humans to solve complex, evolving problems.

RFPs for software development have three structural flaws. First, they optimize for proposal quality, not delivery quality. The vendors who win RFPs are the ones with the best proposal writers and the most polished slide decks - not necessarily the ones with the best engineers.

Second, RFPs assume you can fully specify the problem upfront. Enterprise software projects evolve. Requirements change. The partner who rigidly follows a 200-page specification from 6 months ago will deliver exactly what you asked for - and nothing you actually need.

Third, RFPs create adversarial dynamics. The vendor optimizes for winning the contract, not for honest assessment of fit.

The alternative: structured evaluation based on observable behaviors, not promises. What has this partner actually shipped? How do their teams actually communicate? What happens when something goes wrong? These questions have verifiable answers. Slide decks do not.

The 8 Questions That Actually Predict Partner Quality

After seeing dozens of partner evaluations succeed and fail, we have identified the questions that correlate with project outcomes. Ask all of them. Accept only specific, verifiable answers.

1. What is your client retention rate? The industry average for IT outsourcing client retention is 72-78% [1]. This means 22-28% of clients choose not to return. A partner with 90%+ retention is doing something materially different from the market.

A partner who cannot answer this question - or deflects to "we have many long-term clients" without a number - is telling you everything you need to know.

2. Can I meet the actual team who will work on my project? This is the single most important question you can ask. Big consultancies are notorious for presenting senior architects during the sales process and staffing junior bench warmers on the project. Get names. Get LinkedIn profiles.

Get a written commitment that these specific people will be on your project for the first 6 months minimum.

3. Show me your last 5 production deployments. Every agency claims to be "agile." Few can show continuous delivery evidence. Ask for deployment logs or a screenshot of their CI/CD pipeline's recent history. Teams using 2-week sprint cycles deliver 40% more features per quarter than those using 4-week cycles [2].

If the last deployment was 6 weeks ago, their agile claims are marketing.

4. What is your internal team turnover rate? The software development industry averages 13.2% annual turnover [3]. Every time a developer leaves your project, you lose 4-8 weeks of ramp-up productivity. With a partner at 20%+ turnover, your project becomes a revolving door.

Ask for the number and ask what they do to keep it low.

5. Walk me through a project that failed or was significantly challenged. Every honest partner has failure stories. How they discuss failures reveals more about their culture than how they discuss successes.

Partners who claim zero failures are either lying or have not done enough complex work to encounter real challenges.

6. How do you handle scope changes mid-sprint? Scope creep affects 52% of all projects, increasing delivery time by 27% [4].

The answer you want: a structured change management process that evaluates impact before committing, not "we are flexible and accommodate all client requests." Unlimited flexibility is a red flag - it means no process.

7. Who owns the code and IP? The correct answer is: you do, from day one. Code lives in your repository. You have full access at all times.

If the partner builds on proprietary frameworks or insists on hosting code in their infrastructure, you are buying vendor lock-in along with your software.

8. What happens if we want to bring development in-house after 12 months? A confident partner has a transition plan. They use open-source frameworks, write documentation for the next team, and structure knowledge transfer as part of their standard process.

A partner who becomes evasive at this question is counting on lock-in for retention - not on the quality of their work.
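The turnover figures in question 4 can be turned into a rough planning number. The sketch below is a back-of-the-envelope illustration, not a formula from the cited sources: it assumes departures are spread evenly across the team, that each departure costs the 4-8 weeks of ramp-up mentioned above, and it uses a hypothetical team size of 6. The function name and parameters are illustrative.

```python
def rampup_weeks_lost(team_size, annual_turnover, rampup_weeks=(4.0, 8.0)):
    """Estimate productivity weeks lost per year to developer departures.

    Assumption: expected departures = team_size * annual_turnover, and each
    departure costs between rampup_weeks[0] and rampup_weeks[1] weeks.
    """
    if team_size < 0 or not 0.0 <= annual_turnover <= 1.0:
        raise ValueError("team_size must be >= 0 and annual_turnover in [0, 1]")
    expected_departures = team_size * annual_turnover
    low, high = rampup_weeks
    return expected_departures * low, expected_departures * high


# Hypothetical 6-person team at the industry average vs. a 20% turnover partner.
for label, rate in [("industry average (13.2%)", 0.132), ("high turnover (20%)", 0.20)]:
    low, high = rampup_weeks_lost(team_size=6, annual_turnover=rate)
    print(f"{label}: {low:.1f}-{high:.1f} weeks lost per year")
```

The absolute numbers matter less than the comparison: the same team loses roughly half again as much productive time at 20% turnover as at the industry average.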

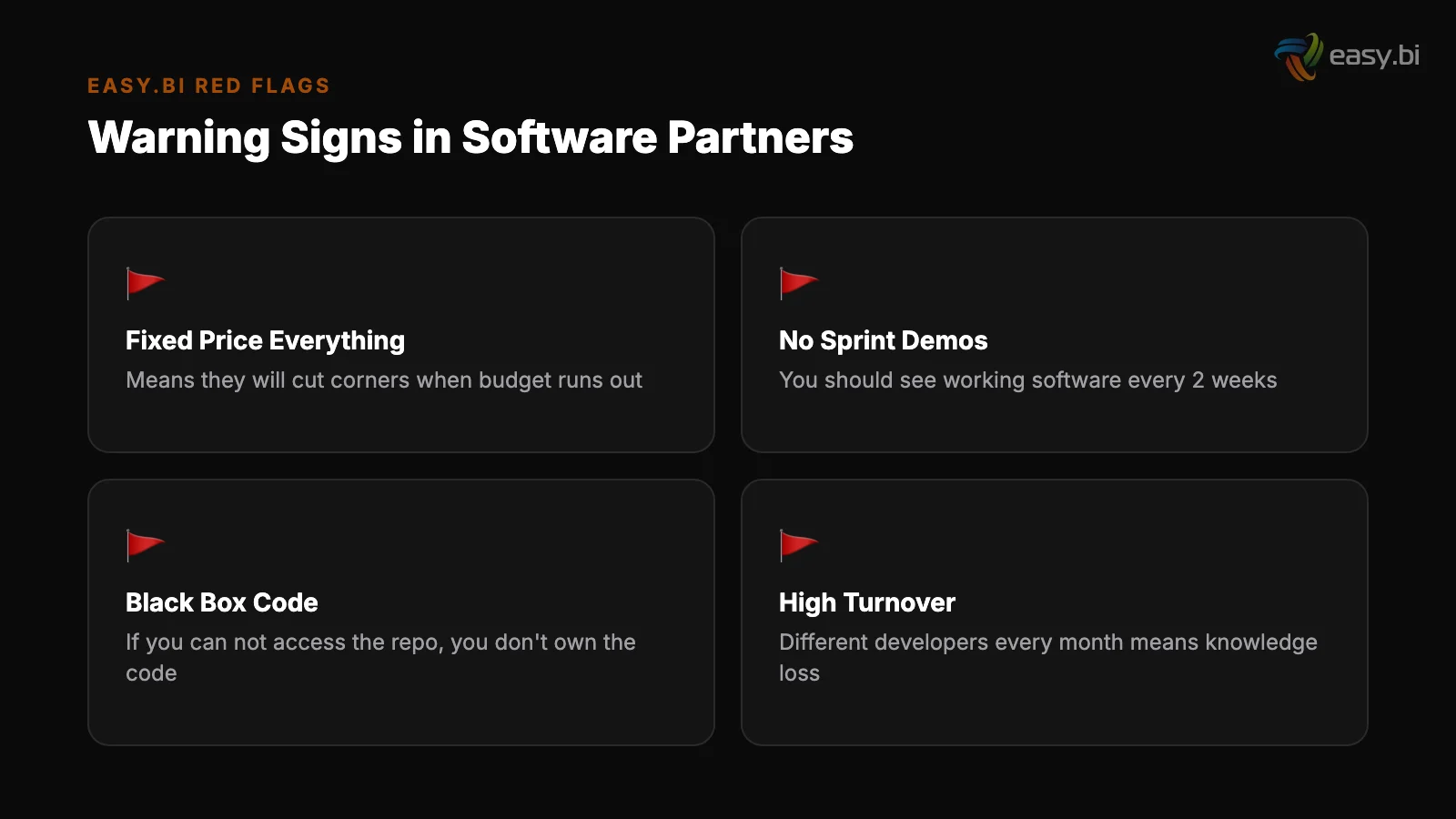

Red Flags That Predict Failure

Some warning signs are visible before a single line of code is written. Here is what to watch for during the evaluation process.

| Red Flag | What It Signals | What to Do |
|---|---|---|
| Sales team and delivery team are completely different people | Bait-and-switch culture; sales promises delivery cannot keep | Demand the delivery lead join all pre-contract meetings |
| Cannot provide client retention rate | Either do not track it (lack of rigor) or rate is embarrassing | Treat as disqualifying; retention is a basic business metric |
| No production deployments in the last 2 weeks | Not actually practicing continuous delivery | Ask for CI/CD pipeline evidence; verify with a pilot |
| Agrees to everything without pushback | Will say yes in sales, fail in delivery | Deliberately propose a bad technical approach; see if they challenge it |
| References are all from 3+ years ago | Recent clients did not have good experiences | Ask for references from the last 6 months specifically |
| Hourly rates are 40%+ below market average | Junior developers positioned as seniors, or unsustainable pricing that leads to quality shortcuts | Ask for average years of experience on the proposed team |
| Proprietary framework or custom tooling | Vendor lock-in by design; your code only works with their team | Require standard open-source stack (React, Angular, Symfony, etc.) |

70% of digital transformations fail to reach their stated goals. The partner you choose does not guarantee success - but the wrong partner guarantees failure. Evaluate based on delivery evidence, not sales presentations. [5]
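The deployment red flag in the table above is one of the few you can check mechanically once a partner shares their deployment history. The sketch below assumes you have copied the deployment dates out of their CI/CD history by hand; the dates, the function name, and the 14-day threshold parameter are illustrative, and no particular CI/CD tool's export format is implied.

```python
from datetime import date


def deployment_cadence(deploy_dates, today, max_gap_days=14):
    """Summarize how recently and how regularly a team has deployed.

    Flags the "no production deployments in the last 2 weeks" red flag
    when the most recent deployment is older than max_gap_days.
    """
    if not deploy_dates:
        raise ValueError("need at least one deployment date")
    ordered = sorted(deploy_dates)
    days_since_last = (today - ordered[-1]).days
    # Days between each pair of consecutive deployments.
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    return {
        "days_since_last": days_since_last,
        "longest_gap_days": max(gaps) if gaps else None,
        "red_flag": days_since_last > max_gap_days,
    }


# Hypothetical history: the partner's "last 5 production deployments".
history = [date(2024, 5, 2), date(2024, 5, 9), date(2024, 5, 16),
           date(2024, 5, 23), date(2024, 6, 11)]
print(deployment_cadence(history, today=date(2024, 6, 14)))
```

The longest gap is worth reading alongside the recency flag: a team that deployed three days ago but went almost three weeks without a release before that deserves a follow-up question.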

How to Assess Technical Depth Without Being Technical

Not every CTO has a deep engineering background. Many DACH mid-market IT leaders come from business or operations roles. Here is how to assess technical competence without needing to read code yourself.

Ask about architecture decisions, not technology names. A strong partner explains why they chose a specific architecture for a specific business problem.

A weak partner lists technologies like a menu. "We used Kubernetes because the client needed zero-downtime deployments across 3 regions" is a signal. "We use Kubernetes, Docker, React, and GraphQL" is a word cloud.

Request a code review of your existing system. Offer to give the prospective partner read access to your current codebase for a structured assessment. A strong partner will produce a written evaluation within a week: what is working, what is risky, what they would prioritize.

This reveals their analytical depth and communication clarity. It also gives you a free (or low-cost) audit of your current system.

Check their approach to testing and quality. Ask what percentage of their code is covered by automated tests. Ask how they handle regression testing. Code review catches 60-65% of defects before they reach production [6].

Partners who invest in QA infrastructure deliver fewer defects - and the ones they do deliver get caught earlier, when they are cheap to fix.

Evaluate their documentation. Ask to see a sample architecture decision record, a sprint review document, or a technical specification from a past project (anonymized). Partners who write well communicate well. Partners who skip documentation create knowledge silos that become your problem when team members change.

The Paid Pilot: Your Best Evaluation Tool

A 4-week paid pilot with a defined scope tells you more about a partner than 6 months of evaluation. Here is how to structure one that produces actionable signal.

Define a real problem, not a toy exercise. The pilot should involve actual work on your actual codebase or system. A proof of concept built on a clean greenfield project tells you nothing about how the team handles legacy code, unclear requirements, and real-world constraints.

Pick a feature or module that is valuable but not critical - something you want built, but that will not derail your roadmap if the pilot partner does not work out.

Set clear success criteria upfront. Define what "good" looks like before the pilot starts. Examples: code passes your internal review standards, feature is deployed to staging by week 3, documentation meets a specific template, at least 80% test coverage on new code. Vague success criteria lead to vague evaluations.

Observe communication patterns, not just output. How does the team handle ambiguity? Do they ask clarifying questions or make assumptions? How quickly do they escalate blockers? Do they propose alternatives when your initial approach has problems? These behavioral patterns predict long-term collaboration quality far better than code quality alone.

Include a deliberate scope change. Midway through the pilot, change a requirement. Not a massive pivot - a realistic adjustment that mirrors what happens on every real project. Watch how the team responds. Do they assess the impact before committing? Do they propose alternatives?

Or do they silently absorb the change and let the timeline slip?

The cost of a 4-week pilot is typically EUR 20,000-40,000. The cost of a failed 12-month engagement is EUR 200,000-500,000+. The pilot is the cheapest insurance you can buy.
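One way to read the insurance comparison is as a break-even probability: how likely does it have to be that the pilot saves you from a failed engagement before it pays for itself? The sketch below is a deliberate simplification. It treats the pilot fee as fully sunk (ignoring the value of whatever the pilot delivers) and assumes the pilot reliably exposes a bad partner; the function name is illustrative.

```python
def break_even_probability(pilot_cost_eur, failure_cost_eur):
    """Probability of avoiding a failed engagement at which the pilot pays off.

    Simplifying assumptions: the pilot fee is a pure cost, and a pilot
    always reveals a partner that would have failed.
    """
    if pilot_cost_eur < 0 or failure_cost_eur <= 0:
        raise ValueError("costs must be positive")
    return pilot_cost_eur / failure_cost_eur


# Least favorable case: most expensive pilot, cheapest failure.
worst = break_even_probability(40_000, 200_000)
# Most favorable case: cheapest pilot, most expensive failure.
best = break_even_probability(20_000, 500_000)
print(f"Pilot pays off if it prevents a failure in {best:.0%} to {worst:.0%} of cases")
```

Under these assumptions, even the least favorable combination of the ranges above only requires that about one partner in five would otherwise have failed.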

Reference Checks That Actually Reveal Something

Standard reference calls are theater. The partner provides 3 hand-picked clients who agreed to say nice things. To get real signal, you need to go beyond the script.

Ask for references you choose, not references they provide. If the partner lists 20 clients on their website, pick 3 yourself and ask for introductions. If they resist, the clients they do not want you to talk to are the ones with the most informative stories.

Ask the reference specific questions. Not "Were you satisfied?" but: "What was the biggest challenge in the first 3 months?" "If you could change one thing about working with them, what would it be?" "Would you hire them again for a project twice as complex?" "What surprised you - positively or negatively?"

Talk to a reference whose project ended. Ongoing clients have an incentive to speak positively - they are still working together. A client whose engagement ended (by choice or naturally) has no such incentive. Their perspective is more honest.

Outsourcing partnerships lasting 3+ years deliver 25-30% more value than short-term engagements [7]. If most of the partner's references are from short engagements, ask why clients did not stay longer.

What Good Looks Like: A Partner Evaluation Scorecard

Use this scorecard to compare partners on the dimensions that actually predict outcomes. Score each dimension 1-5. Weight the dimensions based on your project's specific needs.

| Dimension | Weight | What 5/5 Looks Like |
|---|---|---|
| Client retention rate | High | 90%+ retention, verifiable with references |
| Team stability | High | Below 10% annual turnover, named team committed to your project |
| Delivery evidence | High | CI/CD pipeline visible, weekly deployments, sprint demo recordings |
| Cultural alignment | Medium-High | Same timezone, shared business language, direct communication style |
| Technical depth | Medium | Architecture decisions explained in business terms, code review capability demonstrated |
| Transparency | Medium | Open about failures, willing to share internal metrics, no proprietary lock-in |
| Pilot willingness | Medium | Eager to do a paid pilot, provides clear success criteria |
| IP and code ownership | High | Client-owned repository from day one, standard open-source stack |

A partner who scores 4+ on retention, team stability, and delivery evidence is worth 20% more per hour than a partner who scores 2 on those dimensions but offers a lower rate.

The total cost of a project with the wrong partner - including rework, delays, and eventual re-engagement - is 2-3x the cost of doing it right the first time.
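If you want a single comparable number per partner, the scorecard can be reduced to a weighted average. The sketch below is one possible way to do that, not a prescribed method: the numeric values assigned to High, Medium-High, and Medium are assumptions you should adjust to your project, and both partners' scores are made up for illustration.

```python
# Assumed numeric weights for the scorecard's weight labels; tune to your needs.
WEIGHT_VALUES = {"High": 3.0, "Medium-High": 2.5, "Medium": 2.0}

# Dimensions and weight labels as listed in the scorecard table.
DIMENSIONS = {
    "Client retention rate": "High",
    "Team stability": "High",
    "Delivery evidence": "High",
    "Cultural alignment": "Medium-High",
    "Technical depth": "Medium",
    "Transparency": "Medium",
    "Pilot willingness": "Medium",
    "IP and code ownership": "High",
}


def weighted_score(scores):
    """Return the weighted average (1-5) of a partner's dimension scores."""
    total, weight_sum = 0.0, 0.0
    for dimension, label in DIMENSIONS.items():
        score = scores[dimension]  # KeyError if a dimension was not scored
        if not 1 <= score <= 5:
            raise ValueError(f"{dimension}: score must be between 1 and 5")
        weight = WEIGHT_VALUES[label]
        total += weight * score
        weight_sum += weight
    return total / weight_sum


# Hypothetical partners: A is strong on delivery signals, B on technical polish.
partner_a = dict(zip(DIMENSIONS, [5, 4, 5, 4, 3, 4, 5, 5]))
partner_b = dict(zip(DIMENSIONS, [2, 2, 2, 4, 5, 3, 3, 4]))
for name, scores in [("Partner A", partner_a), ("Partner B", partner_b)]:
    print(f"{name}: {weighted_score(scores):.2f} / 5")
```

A weighted average can hide a single disqualifying weakness, so it is worth checking the retention, team stability, and delivery evidence scores individually before trusting the total.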

How to Start the Conversation Right

The way you engage a potential partner in the first conversation sets the tone for the entire relationship. Here is what we recommend.

Skip the RFP. Instead, have a 60-minute working session where you describe your business challenge (not your technical requirements) and ask the partner how they would approach it. Strong partners will push back on assumptions, ask clarifying questions, and propose approaches you had not considered.

Weak partners will agree with everything and promise to put it in a proposal.

Be transparent about your budget range. Not the exact number - but the order of magnitude. A partner who knows you are thinking EUR 50,000 vs. EUR 500,000 can give you realistic scope expectations instead of promising the world and negotiating later.

Ask about failure modes. "What is the most likely reason this project would fail?" A partner who can articulate risks honestly is a partner who will manage those risks proactively. A partner who says "It won't fail" has either not thought about it or is not being honest.

The build vs. buy decision is the strategic framework that sits above partner selection. Get that decision right first, then use this evaluation guide to find the right execution partner.

For the team retention dynamics that make or break long-term partnerships, read our deep dive on what drives 98% retention in software teams. And for the talent market context that makes all of this urgent, see our analysis of why senior engineers leave.

If you want to see how our evaluation process works from the other side - including our pilot engagement structure - explore our custom solutions approach or book an expert call. No slide deck. No sales pitch. A working conversation about your specific challenge.

References

- [1] Deloitte (2024). "Outsourcing client retention rates average 72-78%." deloitte.com

- [2] Scrum.org (2023). "2-week sprint cycles deliver 40% more features per quarter th..." scrum.org

- [3] LinkedIn / BLS (2024). linkedin.com

- [4] PMI (2024). "Scope creep affects 52% of all projects, increasing delivery time b..." pmi.org

- [5] McKinsey (2023). "70% of digital transformations fail to reach their stated goal..." mckinsey.com

- [6] SmartBear / Cisco (2023). smartbear.com

- [7] Deloitte (2024). "Outsourcing partnerships lasting 3+ years deliver 25-30% more..." deloitte.com
